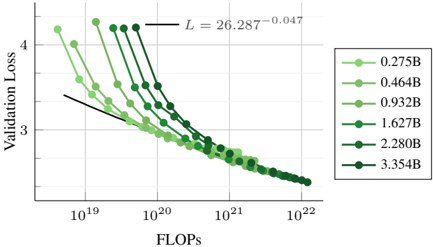

## Chart: Validation Loss vs. FLOPS for Different Model Sizes

### Overview

The image is a line chart showing how Validation Loss changes with FLOPS (floating-point operations) for six model sizes. It appears to evaluate how model performance improves as computational cost increases. A black regression line with a fitted equation is also plotted.

### Components/Axes

* **X-axis:** FLOPS, labeled "FLOPS". The scale is logarithmic, ranging from approximately 10<sup>19</sup> to 10<sup>22</sup>.

* **Y-axis:** Validation Loss, labeled "Validation Loss". The scale is linear, ranging from approximately 2.5 to 4.5.

* **Legend:** Located in the top-right corner, the legend identifies six different model sizes, each represented by a different shade of green and a corresponding marker:

    * 0.275B (lightest green)

    * 0.464B (slightly darker green)

    * 0.932B (medium green)

    * 1.627B (darker green)

    * 2.280B (very dark green)

    * 3.534B (darkest green)

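* **Linear Regression Line:** A black line is plotted across the chart, with the equation "L = 26.287<sup>-0.047</sup>" displayed near the top-left. As transcribed, the exponent has no base variable. The intended form is most likely the power law L = 26.287 · FLOPS<sup>-0.047</sup>, which gives roughly 3.4 at 10<sup>19</sup> FLOPS and 2.4 at 10<sup>22</sup> FLOPS, close to the line's plotted endpoints.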
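
The equation attached to the black line can be checked numerically. The following is a minimal sketch, assuming the equation is a power law of the form L = A · C^B with C the FLOPS count. That reading is an assumption, since the chart text shows no base variable, and the name `fitted_loss` is hypothetical.

```python
# Assumed reading of the chart's equation: L = A * C**B, with C in FLOPS.
A = 26.287   # coefficient as transcribed from the chart
B = -0.047   # exponent as transcribed from the chart


def fitted_loss(flops: float) -> float:
    """Validation loss predicted by the assumed power-law fit."""
    return A * flops ** B


# Evaluate the fit once per decade across the plotted FLOPS range.
for flops in (1e19, 1e20, 1e21, 1e22):
    print(f"{flops:.0e} FLOPS -> predicted loss {fitted_loss(flops):.2f}")
```

Under this reading the predictions fall from about 3.4 to about 2.4 across the x-axis range, which is in line with where the black line is drawn.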

### Detailed Analysis

Each model size is represented by a line showing how Validation Loss decreases as FLOPS increase.

* **0.275B (lightest green):** The line starts at approximately (10<sup>19</sup>, 4.2) and decreases to approximately (5 x 10<sup>21</sup>, 2.7). The slope is initially steep, then flattens out.

* **0.464B (slightly darker green):** The line starts at approximately (10<sup>19</sup>, 4.1) and decreases to approximately (5 x 10<sup>21</sup>, 2.6). The slope is initially steep, then flattens out.

* **0.932B (medium green):** The line starts at approximately (10<sup>19</sup>, 3.9) and decreases to approximately (5 x 10<sup>21</sup>, 2.5). The slope is initially steep, then flattens out.

* **1.627B (darker green):** The line starts at approximately (10<sup>19</sup>, 3.7) and decreases to approximately (5 x 10<sup>21</sup>, 2.4). The slope is initially steep, then flattens out.

* **2.280B (very dark green):** The line starts at approximately (10<sup>19</sup>, 3.5) and decreases to approximately (5 x 10<sup>21</sup>, 2.3). The slope is initially steep, then flattens out.

* **3.534B (darkest green):** The line starts at approximately (10<sup>19</sup>, 3.3) and decreases to approximately (5 x 10<sup>21</sup>, 2.2). The slope is initially steep, then flattens out.

All lines exhibit a similar trend: a rapid decrease in Validation Loss at lower FLOPS values, followed by a gradual flattening as FLOPS increase. Larger models consistently achieve lower Validation Loss at a given FLOPS value, with the largest model (3.534B) lowest throughout.

The linear regression line starts at approximately (10<sup>19</sup>, 3.5) and decreases to approximately (10<sup>22</sup>, 2.5).
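
The two endpoints of the black line are enough for a rough cross-check of the equation shown on the chart. The sketch below assumes the line represents a power law L = a · C^b, which is straight in log-log space, and recovers `a` and `b` from the two approximate endpoints. It is a two-point estimate for illustration, not a regression over the underlying data.

```python
import math

# Approximate endpoints of the black line, as read from the chart.
x0, y0 = 1e19, 3.5
x1, y1 = 1e22, 2.5

# A power law L = a * C**b is a straight line in log-log space,
# so b is the slope between the two points and a follows from either one.
b = math.log(y1 / y0) / math.log(x1 / x0)
a = y0 / x0 ** b

print(f"exponent b ~ {b:.3f}, coefficient a ~ {a:.1f}")
```

The recovered exponent is close to the -0.047 printed on the chart, and the coefficient is of the same order as 26.287. Differences of this size are expected given that the endpoints are only read to one decimal place.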

### Key Observations

* **Negative Correlation:** There is a clear negative correlation between FLOPS and Validation Loss. As computational cost (FLOPS) increases, the model's performance (Validation Loss) generally improves.

* **Diminishing Returns:** The rate of improvement in Validation Loss decreases as FLOPS increase, suggesting diminishing returns from increasing computational cost beyond a certain point.

* **Model Size Impact:** Larger models consistently outperform smaller models, achieving lower Validation Loss for the same FLOPS.

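* **Convergence:** The lines draw closer together at higher FLOPS values. By the approximate endpoints listed above, the gap between the smallest and largest models narrows from about 0.9 at 10<sup>19</sup> FLOPS to about 0.5 at 5 x 10<sup>21</sup> FLOPS. The performance difference between models shrinks as compute grows, although it does not vanish within the plotted range.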
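
The observations above can be quantified from the approximate endpoints given in the Detailed Analysis section. This is a small sketch using only those read-off values; the dictionary name `endpoints` is illustrative.

```python
# Approximate (start, end) losses per model, taken from the endpoints
# listed in the Detailed Analysis section: start at 1e19 FLOPS, end at 5e21.
endpoints = {
    "0.275B": (4.2, 2.7),
    "0.464B": (4.1, 2.6),
    "0.932B": (3.9, 2.5),
    "1.627B": (3.7, 2.4),
    "2.280B": (3.5, 2.3),
    "3.534B": (3.3, 2.2),
}

starts = [start for start, _ in endpoints.values()]
ends = [end for _, end in endpoints.values()]

# Spread between the worst and best model at each end of the FLOPS range.
spread_start = max(starts) - min(starts)
spread_end = max(ends) - min(ends)
print(f"spread at 1e19 FLOPS: {spread_start:.1f}")
print(f"spread at 5e21 FLOPS: {spread_end:.1f}")

# Total loss reduction per model over the plotted range.
for name, (start, end) in endpoints.items():
    print(f"{name}: loss drops by {start - end:.1f}")
```

The spread shrinks across the range but stays above zero, and the smaller models show the larger total drops, which is what produces the narrowing.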

### Interpretation

This chart demonstrates the trade-off between computational cost and model performance. Spending more FLOPS lowers Validation Loss for every model size, and at a given FLOPS value the larger models reach lower loss. However, the gains diminish as FLOPS increase, suggesting a point beyond which additional compute provides little further benefit.

The regression line provides a baseline for expected performance. Compared against the approximate endpoints listed above, the smaller models lie above the line and the largest models at or below it, so a model's position relative to the line indicates whether it does worse or better than the fitted trend at that FLOPS value.

The convergence of the lines at higher FLOPS values suggests that all models are approaching a similar level of performance, and that further increases in computational cost may not be worthwhile. This information is crucial for resource allocation and model selection in machine learning projects. The equation provided for the linear regression line could be used to estimate the expected Validation Loss for a given FLOPS value, and to compare the performance of different models.
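
As an illustration of using the equation for estimates and comparisons, the sketch below inverts it to find the FLOPS associated with a target loss and computes the residual of one observed point. It assumes the equation reads L = A · C^B with C in FLOPS, which the chart text does not confirm, and the function names are hypothetical.

```python
# Assumed reading of the chart's equation: L = A * C**B, with C in FLOPS.
A = 26.287   # coefficient as transcribed from the chart
B = -0.047   # exponent as transcribed from the chart


def predicted_loss(flops: float) -> float:
    """Loss predicted by the assumed power-law fit at a given FLOPS value."""
    return A * flops ** B


def flops_for_loss(target_loss: float) -> float:
    """Invert L = A * C**B: the FLOPS at which the fit reaches target_loss."""
    return (target_loss / A) ** (1.0 / B)


# Compute budget the fit associates with a few target losses.
for target in (3.0, 2.5):
    print(f"loss {target:.1f} -> about {flops_for_loss(target):.1e} FLOPS")

# Residual of one observed point against the fit (negative = better than fit).
# The observed value is the approximate 3.534B endpoint read from the chart.
observed_flops, observed_loss = 5e21, 2.2
residual = observed_loss - predicted_loss(observed_flops)
print(f"3.534B at 5e21 FLOPS: residual {residual:+.2f}")
```

Because the exponent is small, modest changes in the target loss correspond to large changes in FLOPS, so estimates from the inverse are sensitive to the precision of the transcribed coefficients.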