## Diagram: Knowledge Graph-Augmented LLM Question Answering System

### Overview

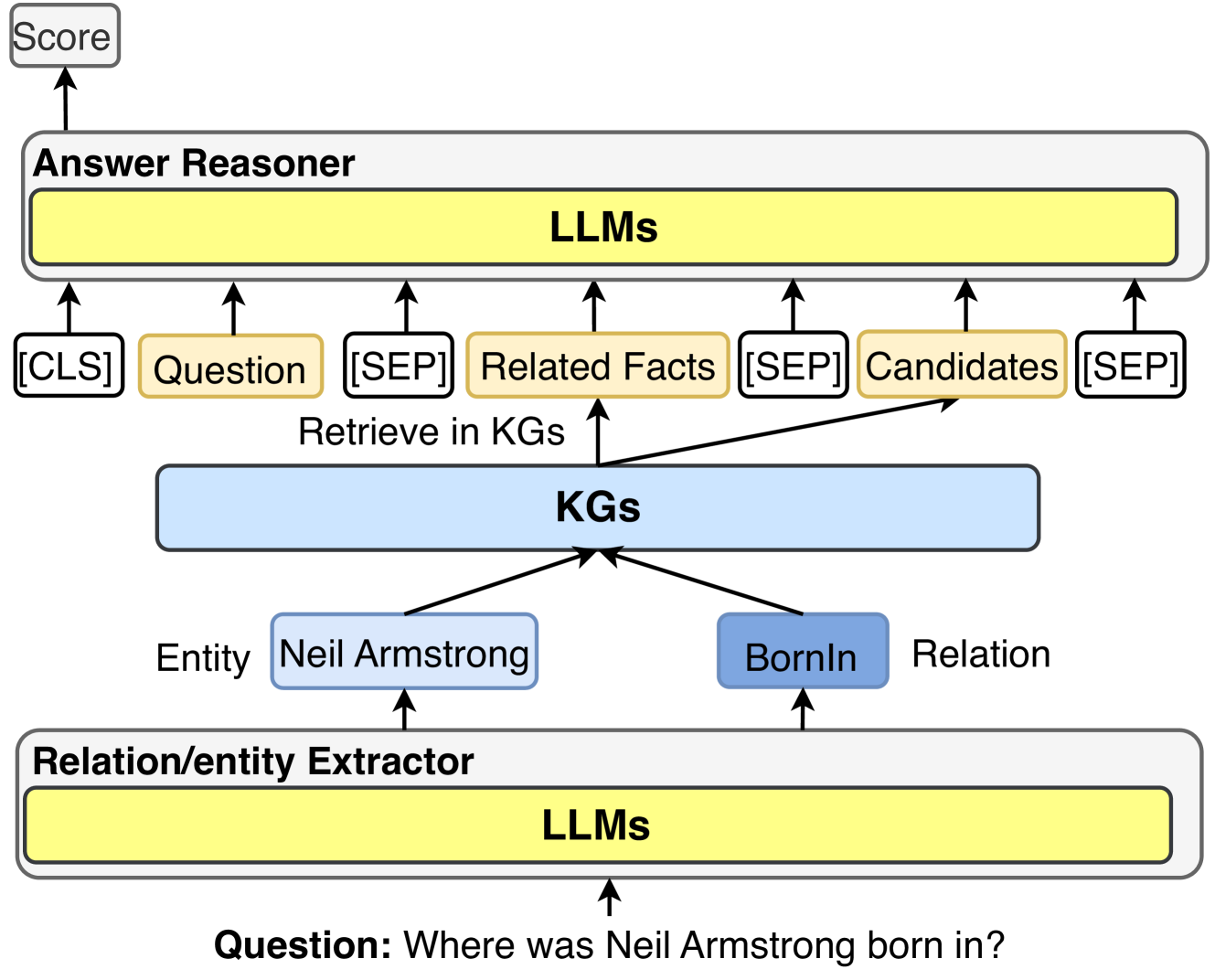

This image is a technical system architecture diagram illustrating a pipeline for answering factual questions using Large Language Models (LLMs) augmented with Knowledge Graphs (KGs). The flow starts with a natural language question at the bottom and progresses upward through extraction, retrieval, and reasoning stages to produce a final score. The diagram uses color-coded boxes and directional arrows to show data flow and component relationships.

### Components/Axes

The diagram is structured into three main horizontal layers, listed below from bottom to top, followed by the output box above them and the input text below them:

1. **Bottom Layer: Relation/entity Extractor**

* A large, rounded rectangular box labeled **"Relation/entity Extractor"**.

* Inside this box is a yellow rectangular block labeled **"LLMs"**.

* An upward-pointing arrow enters this box from below, originating from the input question.

2. **Middle Layer: Knowledge Graphs (KGs)**

* A central, light-blue rectangular box labeled **"KGs"**.

* Two smaller boxes feed into the "KGs" box from below:

    * A light-blue box labeled **"Neil Armstrong"**, with the label **"Entity"** to its left.

    * A darker blue box labeled **"BornIn"**, with the label **"Relation"** to its right.

* Two arrows point upward from the "KGs" box, labeled **"Retrieve in KGs"**, leading to the top layer.

3. **Top Layer: Answer Reasoner**

* A large, rounded rectangular box labeled **"Answer Reasoner"**.

* Inside this box is a yellow rectangular block labeled **"LLMs"**.

* Multiple inputs feed into this "LLMs" block from below, structured as a sequence:

    * A white box labeled **"[CLS]"**.

    * A yellow box labeled **"Question"**.

    * A white box labeled **"[SEP]"**.

    * A yellow box labeled **"Related Facts"** (this receives an arrow from the "Retrieve in KGs" process).

    * A white box labeled **"[SEP]"**.

    * A yellow box labeled **"Candidates"** (this also receives an arrow from the "Retrieve in KGs" process).

    * A white box labeled **"[SEP]"**.

* An upward-pointing arrow exits the "Answer Reasoner" box, leading to a final output box.

4. **Output**

* A small, gray box at the very top labeled **"Score"**.

5. **Input**

* Text at the very bottom of the diagram: **"Question: Where was Neil Armstrong born in?"**. An arrow points from this text into the "Relation/entity Extractor".

### Detailed Analysis

The diagram explicitly models the processing of the example question: **"Where was Neil Armstrong born in?"**.

* **Step 1 - Extraction:** The input question is processed by the **Relation/entity Extractor** (powered by LLMs). This component identifies and extracts the key components from the question:

    * **Entity:** "Neil Armstrong"

    * **Relation:** "BornIn"

* **Step 2 - Retrieval:** The extracted entity and relation are used to query the **KGs** (Knowledge Graphs). The system retrieves relevant information, which is categorized as:

    * **Related Facts:** General facts from the KG connected to the entity and relation.

    * **Candidates:** Specific potential answer entities (e.g., cities or locations) from the KG.

* **Step 3 - Reasoning:** The retrieved information, along with the original question, is formatted into a specific input sequence for the **Answer Reasoner** (also powered by LLMs). The sequence follows a pattern reminiscent of BERT-style models: `[CLS] Question [SEP] Related Facts [SEP] Candidates [SEP]`. The LLM within the Answer Reasoner processes this combined context.

* **Step 4 - Output:** The final output of the system is a **"Score"**, which likely represents a confidence score or ranking for the candidate answers.
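
Steps 1 and 2 above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system's actual implementation: the `Triple` type, the `TOY_KG` list, and the `extract` and `retrieve` functions are hypothetical names, the extractor is a keyword rule standing in for the LLM, and the split between related facts and candidates is one plausible reading of the diagram.

```python
from typing import List, NamedTuple, Optional, Tuple


class Triple(NamedTuple):
    head: str
    relation: str
    tail: str


# Toy in-memory KG (illustrative only); a real system would query a KG store.
TOY_KG: List[Triple] = [
    Triple("Neil Armstrong", "BornIn", "Wapakoneta"),
    Triple("Neil Armstrong", "Occupation", "Astronaut"),
    Triple("Buzz Aldrin", "BornIn", "Glen Ridge"),
]


def extract(question: str) -> Tuple[Optional[str], Optional[str]]:
    """Keyword-based stand-in for the LLM-powered Relation/entity Extractor."""
    text = question.lower()
    entity = next((t.head for t in TOY_KG if t.head.lower() in text), None)
    relation = "BornIn" if "born" in text else None
    return entity, relation


def retrieve(entity: str, relation: str) -> Tuple[List[Triple], List[str]]:
    """Return (related facts, candidates) for an entity/relation pair.

    Assumption: related facts are all triples about the entity, and candidates
    are the tails of the triples that also match the relation.
    """
    related_facts = [t for t in TOY_KG if t.head == entity]
    candidates = [t.tail for t in related_facts if t.relation == relation]
    return related_facts, candidates


entity, relation = extract("Where was Neil Armstrong born in?")
related_facts, candidates = retrieve(entity, relation)
print(entity, relation, candidates)
```

Keeping extraction and retrieval as separate functions mirrors the diagram's layering: the extractor could be swapped for an LLM call without touching the KG lookup.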

### Key Observations

* **Modular Design:** The system is clearly divided into specialized modules for extraction, retrieval, and reasoning.

* **LLM Core:** LLMs are the core computational engine in both the extraction and reasoning stages.

* **Structured Input:** The Answer Reasoner uses a structured, separator-based input format (`[CLS]`, `[SEP]`) to combine different information sources, suggesting a BERT-style encoder input rather than a free-form prompt.

* **Example-Driven:** The entire diagram is annotated with a concrete example ("Neil Armstrong", "BornIn") to illustrate the abstract process.

* **Color Coding:** Yellow is consistently used for LLM components and primary data inputs (Question, Related Facts, Candidates). Blue shades are used for Knowledge Graph elements.
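
The structured input noted above can be illustrated with a small formatting helper. This is a sketch only: `build_reasoner_input` is a hypothetical name, the `" ; "` delimiter between multiple facts or candidates is an assumption (the diagram does not show how they are joined), and a real BERT-style tokenizer would normally insert the special tokens itself rather than receive them as literal text.

```python
from typing import List


def build_reasoner_input(
    question: str, related_facts: List[str], candidates: List[str]
) -> str:
    """Assemble `[CLS] Question [SEP] Related Facts [SEP] Candidates [SEP]`.

    Facts and candidates are joined with " ; " (an assumed delimiter).
    """
    facts_text = " ; ".join(related_facts)
    candidates_text = " ; ".join(candidates)
    return f"[CLS] {question} [SEP] {facts_text} [SEP] {candidates_text} [SEP]"


sequence = build_reasoner_input(
    "Where was Neil Armstrong born in?",
    ["Neil Armstrong BornIn Wapakoneta"],
    ["Wapakoneta"],
)
print(sequence)
```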

### Interpretation

This diagram illustrates a **Knowledge Graph-Enhanced**, retrieval-augmented approach to question answering. The core idea is to overcome the static knowledge limitations of a base LLM by dynamically retrieving factual information from an external, structured knowledge source (the KG) before scoring candidate answers. Because the final output is a score rather than generated text, the pipeline is closer to retrieve-then-rank than to **Retrieval-Augmented Generation (RAG)** in the strict sense.

* **What it demonstrates:** The pipeline shows how a factual question is decomposed into an entity and a relation, how relevant knowledge is fetched from the KG, and how the question and the retrieved knowledge are combined by an LLM to produce a score. It emphasizes a **"retrieve-then-read"** paradigm.

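* **Relationships:** The flow is bottom-up: Question → Extraction → Retrieval → Reasoning → Score. The "Question" box in the Answer Reasoner's input sequence indicates that the original question is reused at the reasoning stage alongside the retrieved facts and candidates, so the flow is not purely linear. The "KGs" component acts as a bridge between the raw question and the final reasoning, providing grounded facts.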

* **Notable Design Choices:** The separation of "Related Facts" and "Candidates" suggests the system distinguishes between contextual knowledge and direct answer options. The use of `[CLS]` and `[SEP]` tokens indicates the Answer Reasoner is likely a fine-tuned BERT-like model for a multiple-choice or ranking task, where it scores the plausibility of each "Candidate" given the "Question" and "Related Facts".

* **Underlying Purpose:** This architecture aims to produce more accurate, factual, and verifiable answers compared to an LLM relying solely on its parametric memory. It explicitly incorporates a symbolic knowledge source (KG) to ground the neural model's reasoning.
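
The ranking interpretation above can be made concrete with a toy example. Everything here is an assumption for illustration: `toy_score` is a hypothetical lexical-overlap scorer standing in for the LLM, and the softmax normalization in `rank_candidates` is one common way to turn raw scores into comparable confidences, not something the diagram specifies.

```python
import math
from typing import Callable, List, Tuple


def toy_score(question: str, related_facts: List[str], candidate: str) -> float:
    """Hypothetical stand-in for the LLM: count facts that mention the candidate."""
    return float(sum(candidate.lower() in fact.lower() for fact in related_facts))


def rank_candidates(
    question: str,
    related_facts: List[str],
    candidates: List[str],
    score_fn: Callable[[str, List[str], str], float] = toy_score,
) -> List[Tuple[str, float]]:
    """Score each candidate, normalize with softmax, and sort best-first."""
    if not candidates:
        return []
    raw = [score_fn(question, related_facts, c) for c in candidates]
    peak = max(raw)  # subtract the max for numerical stability
    exps = [math.exp(r - peak) for r in raw]
    total = sum(exps)
    scored = [(c, e / total) for c, e in zip(candidates, exps)]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranked = rank_candidates(
    "Where was Neil Armstrong born in?",
    ["Neil Armstrong BornIn Wapakoneta"],
    ["Glen Ridge", "Wapakoneta"],
)
print(ranked[0][0])
```

Passing the scorer as `score_fn` keeps the ranking logic independent of the model, so a fine-tuned encoder could replace `toy_score` without changing the rest.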