## Flowchart: Knowledge Graph Question Answering System

### Overview

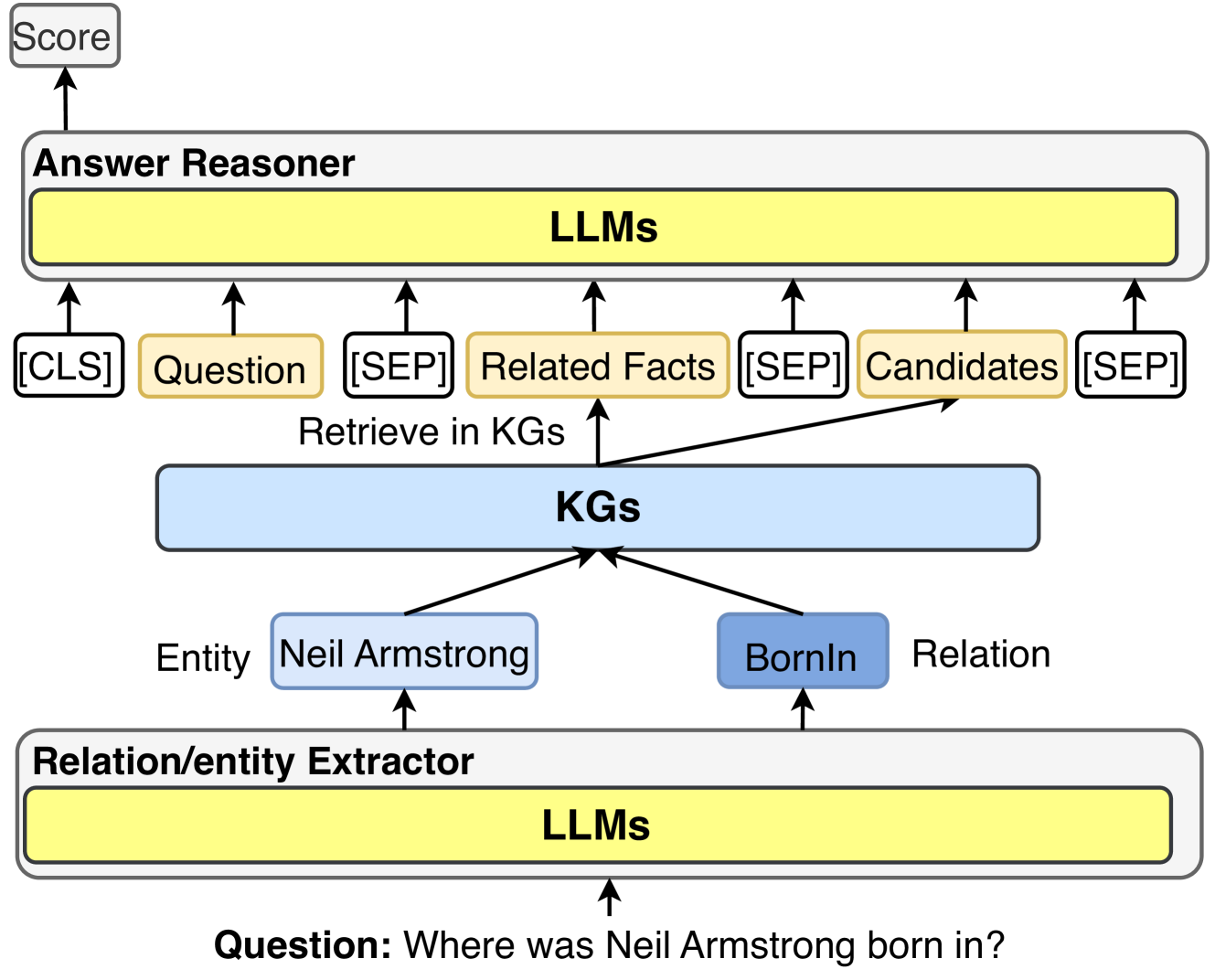

The diagram illustrates a multi-stage pipeline for answering questions using a knowledge graph (KG) system. It shows the flow of information from a user question through entity/relation extraction, KG retrieval, and answer reasoning using large language models (LLMs). The example question "Where was Neil Armstrong born in?" is used to demonstrate the process.

### Components

1. **Top Section (Answer Reasoner)**:

   - Contains a yellow box labeled "LLMs" (Large Language Models)

   - Inputs:

     - `[CLS]` token (classification start)

     - Question text

     - `[SEP]` separator

     - Related Facts

     - `[SEP]` separator

     - Candidates

     - `[SEP]` separator

   - Output: Score (top arrow)

2. **Middle Section (KGs)**:

   - Blue box labeled "KGs" (Knowledge Graphs)

   - Contains:

     - Entity: Neil Armstrong (light blue box)

     - Relation: BornIn (light blue box)

   - Arrows show bidirectional relationships between entity and relation

3. **Bottom Section (Relation/entity Extractor)**:

   - Yellow box labeled "LLMs"

   - Input: Question text

   - Output: Arrows to KGs section

4. **Connecting Elements**:

   - Arrows show flow between components:

     - Relation/entity Extractor → KGs

     - KGs → Answer Reasoner

   - Text labels indicate data flow:

     - "Retrieve in KGs" (from KGs to Answer Reasoner)

     - "Entity Neil Armstrong" and "Relation BornIn" (within KGs)
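The Answer Reasoner's input layout can be sketched in a few lines of Python. This is only an illustration of the `[CLS] question [SEP] related facts [SEP] candidates [SEP]` ordering listed above: the function name `build_reasoner_input`, the `" ; "` delimiter inside a segment, and the example fact and candidate are assumptions, since the figure does not show how facts or candidates are serialized.

```python
def build_reasoner_input(question, related_facts, candidates):
    """Concatenate the three segments in the order drawn in the figure.

    Assumption: items inside a segment are joined with " ; ".
    The figure does not specify an intra-segment delimiter.
    """
    facts_text = " ; ".join(related_facts)
    candidates_text = " ; ".join(candidates)
    return f"[CLS] {question} [SEP] {facts_text} [SEP] {candidates_text} [SEP]"


# Illustrative values only; the fact and the candidate are not taken from the figure.
example = build_reasoner_input(
    "Where was Neil Armstrong born in?",
    ["Neil Armstrong BornIn Wapakoneta"],
    ["Wapakoneta"],
)
print(example)
```

In a real system the tokenizer would normally add the special tokens itself, so a string like this mainly serves to make the segment order explicit.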

### Detailed Analysis

- **Textual Elements**:

  - All labels are in English

  - Special tokens: `[CLS]`, `[SEP]` (common in NLP pipelines)

  - Example question: "Where was Neil Armstrong born in?"

  - Entity/relation pairs: Neil Armstrong - BornIn

- **Color Coding**:

  - Yellow: Answer Reasoner and Relation/entity Extractor components

  - Blue: KGs section

  - Light blue: Entity and Relation boxes

  - White: `[SEP]` tokens

- **Spatial Relationships**:

  - Vertical layout: Answer Reasoner (top), KGs (middle), Relation/entity Extractor (bottom); data flows upward, from the extractor through the KGs to the reasoner

  - Horizontal flow: Left-to-right within each section

  - Diagonal arrows connect KGs to Answer Reasoner

### Key Observations

1. The system uses LLMs at both the input (extraction) and output (reasoning) stages

2. Knowledge Graphs serve as the central data store for entities and relations

3. Special tokens (`[CLS]`, `[SEP]`) structure the input format for LLMs

4. The example question directly maps to the BornIn relation in the KG

5. The final output is a score, suggesting a ranking or confidence measure
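Observations 2 and 4 describe the KG as a store that is queried with an entity and a relation. A minimal sketch of that lookup, assuming facts are held as (head, relation, tail) triples, might look as follows. The `TripleStore` class, the `Occupation` relation, and the stored values are hypothetical; the figure names only the entity Neil Armstrong and the relation BornIn.

```python
from collections import defaultdict


class TripleStore:
    """Toy in-memory KG indexed by (head entity, relation)."""

    def __init__(self):
        self._index = defaultdict(list)

    def add(self, head, relation, tail):
        self._index[(head, relation)].append(tail)

    def retrieve(self, head, relation):
        # .get avoids creating empty entries for unknown keys
        tails = self._index.get((head, relation), [])
        return [(head, relation, tail) for tail in tails]


kg = TripleStore()
kg.add("Neil Armstrong", "BornIn", "Wapakoneta")    # illustrative fact
kg.add("Neil Armstrong", "Occupation", "Astronaut")  # hypothetical relation

facts = kg.retrieve("Neil Armstrong", "BornIn")
print(facts)
```

A production KG would more likely sit behind a graph database or a SPARQL endpoint; the dictionary index here only illustrates the (entity, relation) lookup.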

### Interpretation

This diagram represents a hybrid AI system combining:

1. **Natural Language Processing** (LLMs for question understanding and answer scoring)

2. **Knowledge Graph Technology** (structured storage of factual relationships)

3. **Information Retrieval** (connecting questions to relevant KG facts)

The process demonstrates how modern QA systems:

- Parse questions to identify key entities/relations

- Query structured knowledge bases

- Use language models to reason over the retrieved facts and the candidate answers

- Assign a score to the candidates, which can serve as a ranking or confidence measure

The Neil Armstrong example shows how the system would:

1. Extract the entity "Neil Armstrong" and the relation BornIn from the question

2. Query the KG for the BornIn relation of Neil Armstrong

3. Pass the question, the retrieved facts, and the candidates to the Answer Reasoner LLM

4. Score the candidates
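The steps above can be sketched end to end with stand-ins for the two LLM stages. Everything here is hypothetical: `extract` uses keyword matching in place of the extractor LLM, `score_candidate` uses a simple membership check in place of the reasoner LLM, and the candidate list is supplied by the caller because the figure does not show where candidates come from.

```python
# Toy KG: (entity, relation) -> list of tail values. Illustrative data only.
KG = {("Neil Armstrong", "BornIn"): ["Wapakoneta"]}


def extract(question):
    """Stand-in for the Relation/entity Extractor (keyword matching, not an LLM)."""
    entity = "Neil Armstrong" if "Neil Armstrong" in question else None
    relation = "BornIn" if "born" in question.lower() else None
    return entity, relation


def retrieve(entity, relation):
    """Look up the tails stored for this entity and relation."""
    return KG.get((entity, relation), [])


def score_candidate(question, facts, candidate):
    """Stand-in for the Answer Reasoner: 1.0 if a retrieved fact supports the candidate."""
    return 1.0 if candidate in facts else 0.0


def rank_candidates(question, candidates):
    entity, relation = extract(question)
    facts = retrieve(entity, relation)
    scored = [(c, score_candidate(question, facts, c)) for c in candidates]
    # sorted() is stable, so candidates with equal scores keep their input order
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranked = rank_candidates(
    "Where was Neil Armstrong born in?",
    ["Houston", "Wapakoneta", "Cincinnati"],
)
print(ranked[0])
```

Swapping the two stand-ins for model calls would leave the control flow unchanged, which is the point of the sketch: extraction, retrieval, and scoring are separable stages.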

This architecture integrates symbolic AI (KGs) with statistical AI (LLMs): the KG supplies explicit facts, and the LLMs handle question understanding and candidate scoring.