## Line Chart: Cost per Sequence vs. Number of Items per Sequence

### Overview

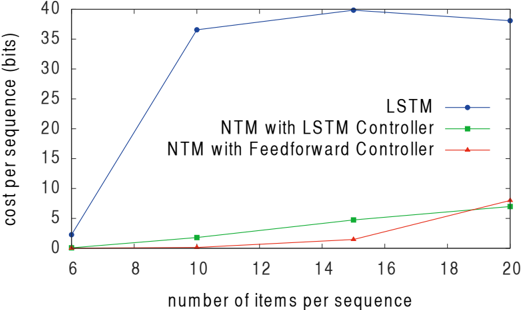

This line chart shows how the cost per sequence (in bits) varies with the number of items per sequence for three neural network architectures: LSTM, NTM with LSTM Controller, and NTM with Feedforward Controller. It compares how each architecture's cost changes as sequences contain more items.

### Components/Axes

* **X-axis:** "number of items per sequence". Scale ranges from 6 to 20, with markers at 6, 8, 10, 12, 14, 16, 18, and 20.

* **Y-axis:** "cost per sequence (bits)". Scale ranges from 0 to 40, with markers at 0, 5, 10, 15, 20, 25, 30, 35, and 40.

* **Legend:** Located in the top-right corner.

  * LSTM (Blue line with circle markers)

  * NTM with LSTM Controller (Green line with triangle markers)

  * NTM with Feedforward Controller (Red line with diamond markers)

### Detailed Analysis

* **LSTM (Blue Line):** The line rises sharply from x=6 to x=10, climbs slowly to a peak of about 39 bits at x=14 and x=16, then dips slightly to about 38 bits at x=18 and x=20.

  * At x=6, y ≈ 1.5 bits.

  * At x=8, y ≈ 11 bits.

  * At x=10, y ≈ 36 bits.

  * At x=12, y ≈ 37 bits.

  * At x=14, y ≈ 39 bits.

  * At x=16, y ≈ 39 bits.

  * At x=18, y ≈ 38 bits.

  * At x=20, y ≈ 38 bits.

* **NTM with LSTM Controller (Green Line):** The line exhibits a relatively flat trend with a slight upward slope.

  * At x=6, y ≈ 2 bits.

  * At x=8, y ≈ 2 bits.

  * At x=10, y ≈ 3 bits.

  * At x=12, y ≈ 4 bits.

  * At x=14, y ≈ 5 bits.

  * At x=16, y ≈ 6 bits.

  * At x=18, y ≈ 7 bits.

  * At x=20, y ≈ 8 bits.

* **NTM with Feedforward Controller (Red Line):** The line shows a gradual upward slope throughout the entire range.

  * At x=6, y ≈ 1 bit.

  * At x=8, y ≈ 2 bits.

  * At x=10, y ≈ 2 bits.

  * At x=12, y ≈ 3 bits.

  * At x=14, y ≈ 4 bits.

  * At x=16, y ≈ 5 bits.

  * At x=18, y ≈ 6 bits.

  * At x=20, y ≈ 7 bits.
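
To make the trends above easier to compare, the approximate readings can be turned into per-segment slopes (change in cost per additional item). The snippet below is a minimal sketch: the numbers are the visual estimates listed in this section, not exact data, and the names `ITEMS`, `COST_BITS`, and `segment_slopes` are made up for illustration.

```python
# Approximate values read off the chart (visual estimates, not exact data).
ITEMS = [6, 8, 10, 12, 14, 16, 18, 20]
COST_BITS = {
    "LSTM": [1.5, 11, 36, 37, 39, 39, 38, 38],
    "NTM with LSTM Controller": [2, 2, 3, 4, 5, 6, 7, 8],
    "NTM with Feedforward Controller": [1, 2, 2, 3, 4, 5, 6, 7],
}


def segment_slopes(xs, ys):
    """Return the change in cost per additional item between consecutive readings."""
    return [
        (y1 - y0) / (x1 - x0)
        for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:])
    ]


for name, costs in COST_BITS.items():
    slopes = segment_slopes(ITEMS, costs)
    print(f"{name}: steepest segment {max(slopes):.2f} bits/item, "
          f"last segment {slopes[-1]:.2f} bits/item")
```

With these estimates, the LSTM's steepest segment is the one between 8 and 10 items, while neither NTM variant changes by more than about half a bit per item on any segment.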

### Key Observations

* From x=8 onward, the LSTM architecture has a much higher cost per sequence than both NTM architectures, and the gap widens to more than 30 bits by x=10. At x=6 all three architectures are within about 1 bit of each other.

* The NTM with LSTM Controller and NTM with Feedforward Controller exhibit similar cost per sequence values, with the Feedforward Controller at or slightly below (by about 1 bit) the LSTM Controller at every point; the two are roughly equal at x=8.

* The LSTM cost per sequence appears to saturate around 38-39 bits after x=14, while the NTM costs keep rising at a roughly steady rate of about 1 bit for every 2 additional items.
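
These observations can be checked against the approximate readings from the Detailed Analysis section. The snippet below is a rough sketch based on those visual estimates; the variable names are illustrative, and differences of 1 bit or less are within the precision of reading values off the chart.

```python
# Approximate values read off the chart (visual estimates, not exact data).
ITEMS = [6, 8, 10, 12, 14, 16, 18, 20]
LSTM = [1.5, 11, 36, 37, 39, 39, 38, 38]
NTM_LSTM = [2, 2, 3, 4, 5, 6, 7, 8]   # NTM with LSTM Controller
NTM_FF = [1, 2, 2, 3, 4, 5, 6, 7]     # NTM with Feedforward Controller

# Gap between the LSTM and the more costly of the two NTM variants at each x.
gap = [l - max(a, b) for l, a, b in zip(LSTM, NTM_LSTM, NTM_FF)]

# Points where the two NTM variants have the same estimated cost.
ties = [x for x, f, n in zip(ITEMS, NTM_FF, NTM_LSTM) if f == n]

# True if the Feedforward Controller is never above the LSTM Controller.
ff_never_higher = all(f <= n for f, n in zip(NTM_FF, NTM_LSTM))

for x, g in zip(ITEMS, gap):
    print(f"x={x:2d}: LSTM minus worst NTM = {g:+.1f} bits")
print("NTM variants tie at x =", ties)
print("Feedforward never higher:", ff_never_higher)
```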

### Interpretation

The data suggests that the LSTM's cost grows steeply once sequences exceed about 8 items and then levels off near 38-39 bits. Because the cost is reported in bits, it reads more naturally as a prediction loss than as a measure of compute, so the plateau could indicate a limit to the LSTM's ability to effectively process longer sequences. Possible causes, such as vanishing gradients or the limited capacity of the LSTM's internal state, cannot be confirmed from the chart alone.

The NTM architectures, which incorporate external memory, scale more gracefully: their cost stays below 10 bits across the whole range, although it still increases with the number of items. The NTM with Feedforward Controller appears slightly better than the NTM with LSTM Controller, potentially due to its simpler controller structure, but the difference of about 1 bit is close to the precision of the readings. Overall, the chart implies that NTMs are better suited than a plain LSTM to this kind of task as sequences grow longer, which may reflect the difference between the LSTM's internal state updates and the NTM's external memory access.