
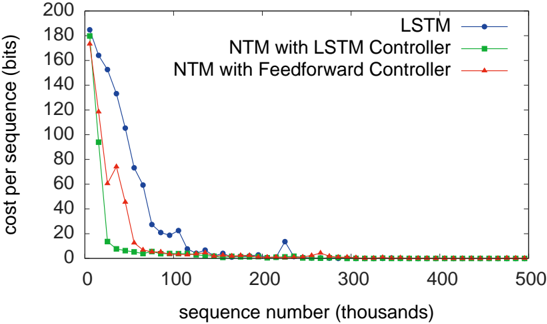

## Line Chart: Cost per Sequence vs. Sequence Number for Three Neural Network Architectures

### Overview

The image is a line plot comparing training cost (in bits per sequence) against the number of training sequences seen (in thousands) for three neural network models: a standard LSTM, a Neural Turing Machine (NTM) with an LSTM controller, and an NTM with a Feedforward controller. The chart shows how quickly each architecture learns and how soon it converges.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **Y-Axis:**
  * **Label:** `cost per sequence (bits)`
  * **Scale:** Linear, ranging from 0 to 200, with major tick marks every 20 units.

* **X-Axis:**
  * **Label:** `sequence number (thousands)`
  * **Scale:** Linear, ranging from 0 to 500, with major tick marks every 100 units.

* **Legend:** Located in the top-right corner of the plot area.
  * **Blue line with circle markers:** `LSTM`
  * **Green line with square markers:** `NTM with LSTM Controller`
  * **Red line with triangle markers:** `NTM with Feedforward Controller`

### Detailed Analysis

**1. LSTM (Blue line, circle markers):**

* **Trend:** Declines gradually over the first ~50k sequences, drops steeply between ~50k and ~100k, and then levels off near zero.

* **Key Data Points (Approximate):**
  * Sequence 0k: ~185 bits
  * Sequence 25k: ~160 bits
  * Sequence 50k: ~135 bits
  * Sequence 75k: ~75 bits
  * Sequence 100k: ~20 bits
  * Sequence 125k: ~5 bits
  * Sequence 150k onward: at or very close to 0 bits, apart from the spike below
  * Sequence 225k: a small, isolated spike to ~15 bits, after which the cost returns to near zero

**2. NTM with LSTM Controller (Green line, square markers):**

* **Trend:** Exhibits an extremely rapid, steep decline in cost, converging to near-zero much faster than the standard LSTM.

* **Key Data Points (Approximate):**
  * Sequence 0k: ~175 bits
  * Sequence 10k: ~95 bits
  * Sequence 20k: ~15 bits
  * Sequence 30k: ~5 bits
  * From ~40k sequences onward, the cost is consistently at or very near 0 bits.

**3. NTM with Feedforward Controller (Red line, triangle markers):**

* **Trend:** Falls rapidly overall, but a significant, temporary increase in cost (a spike) interrupts the descent before a final steep drop to convergence.

* **Key Data Points (Approximate):**
  * Sequence 0k: ~180 bits
  * Sequence 10k: ~120 bits
  * Sequence 20k: ~75 bits (identified as the peak of the spike, although the sampled values on either side, ~120 bits at 10k and ~45 bits at 30k, do not by themselves show a rise)
  * Sequence 30k: ~45 bits
  * Sequence 40k: ~10 bits
  * Sequence 50k: ~5 bits
  * From ~60k sequences onward, the cost remains at or very near 0 bits.
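The convergence points quoted for each model can be checked mechanically against the approximate values. The following is a minimal sketch that relies on a few assumptions. Each "near zero from X onward" observation is encoded as 0 bits at X. "Converged" means the first sampled cost strictly below a hypothetical 5-bit threshold. The names `CURVES` and `convergence_point` are illustrative only and do not come from any published code.

```python
# Approximate (sequence in thousands, cost in bits) samples read off the chart.
# Assumption: "near zero from X onward" is encoded as a cost of 0 bits at X.
CURVES = {
    "LSTM": [(0, 185), (25, 160), (50, 135), (75, 75), (100, 20), (125, 5), (150, 0)],
    "NTM with LSTM Controller": [(0, 175), (10, 95), (20, 15), (30, 5), (40, 0)],
    "NTM with Feedforward Controller": [
        (0, 180), (10, 120), (20, 75), (30, 45), (40, 10), (50, 5), (60, 0),
    ],
}


def convergence_point(points, threshold=5.0):
    """Return the first sampled sequence number whose cost is strictly below threshold.

    Returns None if no sampled point falls below the threshold.
    """
    for seq, cost in points:
        if cost < threshold:
            return seq
    return None


for name, points in CURVES.items():
    print(f"{name}: converged by ~{convergence_point(points)}k sequences")
```

Under these assumptions, the sketch reports ~150k, ~40k and ~60k sequences for the LSTM, the NTM with LSTM Controller and the NTM with Feedforward Controller. These match the values read off the chart. Raising `threshold` moves the reported points earlier. For example, with a 25-bit threshold the LSTM is flagged at ~100k.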

### Key Observations

1. **Convergence Speed:** The two NTM models converge to near-zero cost significantly faster than the standard LSTM. The NTM with LSTM Controller is the fastest, reaching near-zero by ~40k sequences. The NTM with Feedforward Controller converges by ~60k sequences, while the LSTM takes until ~150k sequences.

2. **Learning Anomaly:** The NTM with Feedforward Controller (red line) exhibits a notable non-monotonic learning curve, with a cost spike peaking at ~75 bits around 20k sequences before resuming its descent. This suggests a period of instability or adjustment in its learning process.

3. **Final Performance:** All three models eventually achieve a cost per sequence at or very near 0 bits, indicating successful learning of the task given sufficient training sequences.

4. **LSTM Spike:** The standard LSTM shows a minor, isolated cost increase around 225k sequences, which is quickly corrected.
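Both anomalies noted above are rises in cost between consecutive points on a curve. One simple way to flag such rises in sampled data is to compare each point with its predecessor. The minimal sketch below does this with a hypothetical helper, `find_increases`, and its `tolerance` parameter. It uses the approximate values from the Detailed Analysis, and it assumes the LSTM's near-zero plateau from 150k is 0 bits.

```python
def find_increases(points, tolerance=0.0):
    """Return (start_seq, end_seq, rise) for each consecutive pair of samples
    where the cost rises by more than `tolerance` bits."""
    rises = []
    for (s0, c0), (s1, c1) in zip(points, points[1:]):
        if c1 - c0 > tolerance:
            rises.append((s0, s1, c1 - c0))
    return rises


# Approximate LSTM samples, including the isolated spike at ~225k.
# Assumption: the near-zero plateau starting at 150k is encoded as 0 bits.
lstm = [(0, 185), (25, 160), (50, 135), (75, 75), (100, 20), (125, 5), (150, 0), (225, 15)]

# Approximate Feedforward-controller samples as listed in the Detailed Analysis.
ntm_ff = [(0, 180), (10, 120), (20, 75), (30, 45), (40, 10), (50, 5), (60, 0)]

print(find_increases(lstm))    # [(150, 225, 15)]
print(find_increases(ntm_ff))  # []
```

On these coarse samples, the check flags the LSTM spike at ~225k but finds nothing in the Feedforward curve, because every listed Feedforward value is lower than the one before it. Detecting that spike from data would require reading the curve at a finer sequence spacing.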

### Interpretation

This chart provides empirical evidence that, on this sequential task, memory-augmented neural networks (like the NTM) are more data-efficient than a standard LSTM. The NTM with LSTM Controller reaches near-zero cost after roughly a quarter of the training sequences the LSTM needs (~40k versus ~150k). The likely explanation is that an external memory structure (the "Turing Machine" part) lets the model learn the underlying algorithm much more quickly, so it needs far fewer training examples.

* The **NTM with LSTM Controller** pairs the NTM's external memory with the internal recurrent state of its LSTM controller, and it shows the fastest and most stable learning curve of the three.

* The **NTM with Feedforward Controller** also learns quickly but experiences a transient phase of high error. This could indicate that without the internal memory of an LSTM, the feedforward controller initially struggles to manage the external memory operations, leading to a temporary performance drop before it masters the task.

* The **standard LSTM**, lacking explicit external memory, must rely solely on its internal state to remember long-term dependencies. This appears to be a less efficient inductive bias for algorithmic tasks, which is consistent with its much slower, more gradual learning curve.

The data suggests that for tasks requiring the learning of precise algorithms or the manipulation of structured data, architectures with explicit, addressable memory (like the NTM) can offer a significant advantage in sample efficiency. The spike in the Feedforward variant further suggests that the choice of controller within a memory-augmented network affects not just learning speed but also the stability of the learning dynamics.
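One way to put a single number on sample efficiency is the area under each learning curve, which measures the cost accumulated over training. A model that reaches low cost sooner has a smaller area. This is an illustrative metric, not one shown in the figure. The sketch below applies the trapezoidal rule to the approximate points from the Detailed Analysis. It treats "near zero" as 0 bits and leaves out the LSTM's isolated spike at ~225k. The helper name `area_under_curve` is hypothetical.

```python
# Approximate (sequence in thousands, cost in bits) samples read off the chart.
# Assumptions: "near zero from X onward" is encoded as 0 bits at X, and the
# LSTM's isolated ~225k spike is omitted.
CURVES = {
    "LSTM": [(0, 185), (25, 160), (50, 135), (75, 75), (100, 20), (125, 5), (150, 0)],
    "NTM with LSTM Controller": [(0, 175), (10, 95), (20, 15), (30, 5), (40, 0)],
    "NTM with Feedforward Controller": [
        (0, 180), (10, 120), (20, 75), (30, 45), (40, 10), (50, 5), (60, 0),
    ],
}


def area_under_curve(points):
    """Trapezoidal area under a piecewise-linear cost curve.

    Units are bits x thousands of sequences. Everything after the last sample
    is assumed to stay at 0 bits, so it adds no area.
    """
    return sum(
        (s1 - s0) * (c0 + c1) / 2
        for (s0, c0), (s1, c1) in zip(points, points[1:])
    )


areas = {name: area_under_curve(points) for name, points in CURVES.items()}
for name, area in areas.items():
    print(f"{name}: area ~{area:.0f}, LSTM area / this area = {areas['LSTM'] / area:.1f}")
```

With these inputs, the areas come out at roughly 12,200 bits × thousand sequences for the LSTM, 2,000 for the NTM with LSTM Controller and 3,500 for the NTM with Feedforward Controller. This makes the LSTM's accumulated cost about 6 and 3.5 times larger, respectively. Because the Feedforward spike is not visible in the sampled points, this estimate may understate that model's area.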