## Stacked Bar Chart: Time Breakdown for Computational Scenarios

### Overview

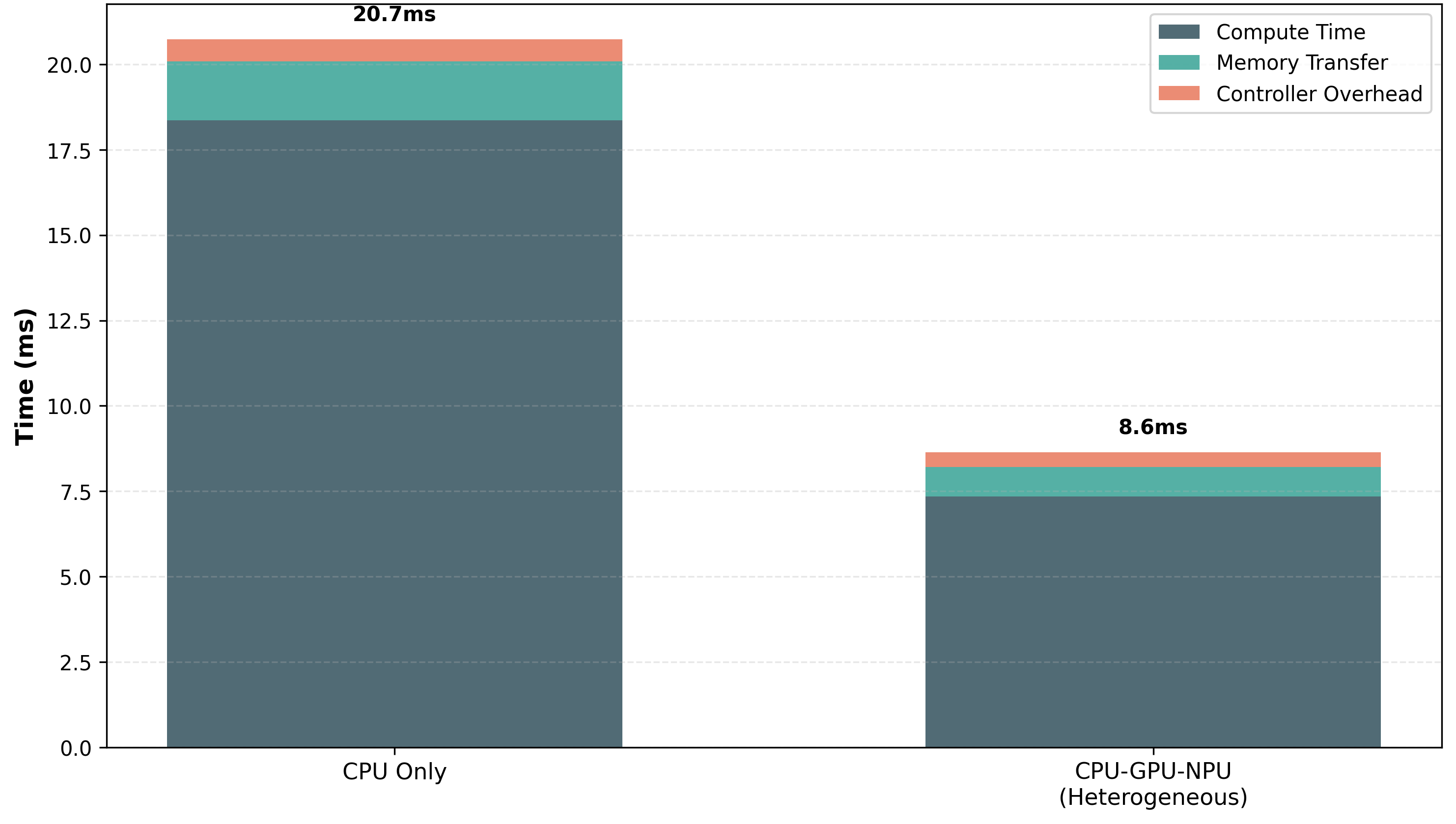

This image displays a stacked bar chart comparing the total time taken for two computational scenarios: "CPU Only" and "CPU-GPU-NPU (Heterogeneous)". The time is broken down into three components: "Compute Time", "Memory Transfer", and "Controller Overhead". The chart shows total time falling from 20.7 ms to 8.6 ms when a heterogeneous computing approach is used.

### Components/Axes

* **Chart Type**: Stacked Bar Chart

* **Title**: No explicit title; the chart compares performance between two computational configurations.

* **Y-axis Title**: "Time (ms)"

* **Scale**: Linear, with major tick marks at 2.5 ms intervals from 0.0 to 20.0 ms (0.0, 2.5, 5.0, 7.5, 10.0, 12.5, 15.0, 17.5, 20.0). The "CPU Only" bar (20.7 ms) extends slightly above the top tick.

* **X-axis Labels**:

    * "CPU Only"

    * "CPU-GPU-NPU (Heterogeneous)"

* **Legend**: Located in the top-right quadrant of the chart.

    * **Compute Time**: Represented by a dark grey color.

    * **Memory Transfer**: Represented by a teal/cyan color.

    * **Controller Overhead**: Represented by a coral/salmon color.

* **Data Labels**:

    * "20.7ms" is displayed above the "CPU Only" bar.

    * "8.6ms" is displayed above the "CPU-GPU-NPU (Heterogeneous)" bar.

### Detailed Analysis

The chart contains two stacked bars, each representing a computational scenario.

**Bar 1: CPU Only**

* **Total Time**: Approximately 20.7 ms (as indicated by the data label).

* **Components (from bottom to top)**:

    * **Compute Time (Dark Grey)**: This component extends from 0.0 ms to approximately 17.7 ms.

        * *Estimated Value*: ~17.7 ms.

    * **Memory Transfer (Teal/Cyan)**: This component is stacked on top of Compute Time, extending from approximately 17.7 ms to approximately 20.0 ms.

        * *Estimated Value*: ~2.3 ms.

    * **Controller Overhead (Coral/Salmon)**: This component is stacked on top of Memory Transfer, extending from approximately 20.0 ms to approximately 20.7 ms.

        * *Estimated Value*: ~0.7 ms.

**Bar 2: CPU-GPU-NPU (Heterogeneous)**

* **Total Time**: Approximately 8.6 ms (as indicated by the data label).

* **Components (from bottom to top)**:

    * **Compute Time (Dark Grey)**: This component extends from 0.0 ms to approximately 7.2 ms.

        * *Estimated Value*: ~7.2 ms.

    * **Memory Transfer (Teal/Cyan)**: This component is stacked on top of Compute Time, extending from approximately 7.2 ms to approximately 8.3 ms.

        * *Estimated Value*: ~1.1 ms.

    * **Controller Overhead (Coral/Salmon)**: This component is stacked on top of Memory Transfer, extending from approximately 8.3 ms to approximately 8.6 ms.

        * *Estimated Value*: ~0.3 ms.
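Because the segment values above are visual estimates, it can be useful to confirm that each stack adds up to the total printed above its bar. The following is a minimal sketch of such a check; the dictionary layout, the function names, and the 0.05 ms tolerance are illustrative assumptions, not part of the chart.

```python
import math

# Segment heights in ms, as estimated from the chart (visual readings, not measurements).
BREAKDOWN_MS = {
    "CPU Only": {
        "Compute Time": 17.7,
        "Memory Transfer": 2.3,
        "Controller Overhead": 0.7,
    },
    "CPU-GPU-NPU (Heterogeneous)": {
        "Compute Time": 7.2,
        "Memory Transfer": 1.1,
        "Controller Overhead": 0.3,
    },
}

# Totals taken from the data labels printed above each bar.
LABELED_TOTAL_MS = {
    "CPU Only": 20.7,
    "CPU-GPU-NPU (Heterogeneous)": 8.6,
}


def stacked_total(segments):
    """Sum the segment heights of one stacked bar."""
    return sum(segments.values())


def check_totals(breakdown, labels, tol=0.05):
    """Return, per bar, whether the summed segments match the labeled total.

    `tol` is an assumed absolute tolerance in ms to absorb reading error.
    """
    return {
        name: math.isclose(stacked_total(segments), labels[name], abs_tol=tol)
        for name, segments in breakdown.items()
    }


for bar_name, matches in check_totals(BREAKDOWN_MS, LABELED_TOTAL_MS).items():
    print(f"{bar_name}: segments match label = {matches}")
```

With the estimates listed above, both stacks agree with their labels (17.7 + 2.3 + 0.7 = 20.7 and 7.2 + 1.1 + 0.3 = 8.6).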

### Key Observations

* **Significant Time Reduction**: The heterogeneous "CPU-GPU-NPU" configuration results in a total time of approximately 8.6 ms, which is less than half of the time taken by the "CPU Only" configuration (approximately 20.7 ms). This is a reduction of approximately 12.1 ms, or about 58.5%, equivalent to a speedup of roughly 2.4x.

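* **Dominance of Compute Time**: In both scenarios, "Compute Time" is the largest contributor to the total time (roughly 85% of the "CPU Only" bar and 84% of the heterogeneous bar). Its absolute value, however, falls from approximately 17.7 ms to approximately 7.2 ms in the heterogeneous setup.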

* **Reduced Overhead**: Both "Memory Transfer" and "Controller Overhead" are also lower in the heterogeneous configuration: roughly 2.3 ms to 1.1 ms and 0.7 ms to 0.3 ms, respectively.
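The arithmetic behind these observations can be reproduced in a few lines. This is a small sketch using the labeled totals and the estimated compute segments; the function names are illustrative assumptions.

```python
def reduction_stats(baseline_ms, improved_ms):
    """Absolute saving, percentage reduction, and speedup between two totals."""
    saved_ms = baseline_ms - improved_ms
    return {
        "saved_ms": saved_ms,
        "reduction_pct": 100.0 * saved_ms / baseline_ms,
        "speedup": baseline_ms / improved_ms,
    }


def component_share_pct(component_ms, total_ms):
    """Share of one component in its bar's total, as a percentage."""
    return 100.0 * component_ms / total_ms


# Totals from the data labels; compute segments are visual estimates.
stats = reduction_stats(baseline_ms=20.7, improved_ms=8.6)
cpu_only_compute_share = component_share_pct(17.7, 20.7)
heterogeneous_compute_share = component_share_pct(7.2, 8.6)

print(f"Saved: {stats['saved_ms']:.1f} ms")
print(f"Reduction: {stats['reduction_pct']:.1f} %")
print(f"Speedup: {stats['speedup']:.2f}x")
print(f"Compute share, CPU Only: {cpu_only_compute_share:.1f} %")
print(f"Compute share, heterogeneous: {heterogeneous_compute_share:.1f} %")
```

Note that the percentage reduction (time saved relative to the baseline) and the speedup (baseline divided by improved time) describe the same change in different ways, so a reduction of about 58.5% corresponds to a speedup of about 2.4x, not 1.585x.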

### Interpretation

This stacked bar chart shows the performance benefit of a heterogeneous computing architecture (CPU-GPU-NPU) over a CPU-only approach for the task represented. The heterogeneous setup completes the same task in well under half the time, cutting overall execution time from about 20.7 ms to about 8.6 ms.

The breakdown into "Compute Time", "Memory Transfer", and "Controller Overhead" provides insight into where these improvements are realized. The decrease in "Compute Time" (about 10.5 ms of the 12.1 ms saved) indicates that the GPU and NPU are effectively offloading computational tasks from the CPU, leading to faster processing. The smaller reductions in "Memory Transfer" and "Controller Overhead" suggest either that the heterogeneous system handles data movement and inter-component communication more efficiently, or that these costs shrink along with the shorter computation. The chart alone does not distinguish between the two explanations.
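To make "where these improvements are realized" concrete, the saving can be attributed to each component. The sketch below uses the estimated segment values from the chart; the function name and the returned field names are illustrative assumptions.

```python
# Estimated segment values in ms (visual readings from the chart).
CPU_ONLY_MS = {
    "Compute Time": 17.7,
    "Memory Transfer": 2.3,
    "Controller Overhead": 0.7,
}
HETEROGENEOUS_MS = {
    "Compute Time": 7.2,
    "Memory Transfer": 1.1,
    "Controller Overhead": 0.3,
}


def savings_by_component(baseline, improved):
    """Per component: time saved (ms), share of the total saving (%),
    and reduction factor (baseline / improved)."""
    saved = {name: baseline[name] - improved[name] for name in baseline}
    total_saved = sum(saved.values())
    return {
        name: {
            "saved_ms": saved[name],
            "share_of_savings_pct": 100.0 * saved[name] / total_saved,
            "reduction_factor": baseline[name] / improved[name],
        }
        for name in baseline
    }


result = savings_by_component(CPU_ONLY_MS, HETEROGENEOUS_MS)
for name, row in result.items():
    print(
        f"{name}: saved {row['saved_ms']:.1f} ms "
        f"({row['share_of_savings_pct']:.1f} % of total saving, "
        f"{row['reduction_factor']:.2f}x lower)"
    )
```

On these estimates, "Compute Time" accounts for roughly 87% of the total saving, while all three components shrink by a broadly similar factor of about 2.1x to 2.5x. Because the smaller segments are read to one decimal place, their reduction factors are more sensitive to reading error than the compute figure.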

The data implies that for workloads that can be parallelized or accelerated by specialized hardware like GPUs and NPUs, adopting a heterogeneous architecture can be an effective strategy for improving performance and reducing latency. The chart supports this for the single workload shown; it does not by itself indicate how the result generalizes to other tasks.