## Diagram: LLM-Based Entity Prediction Process

### Overview

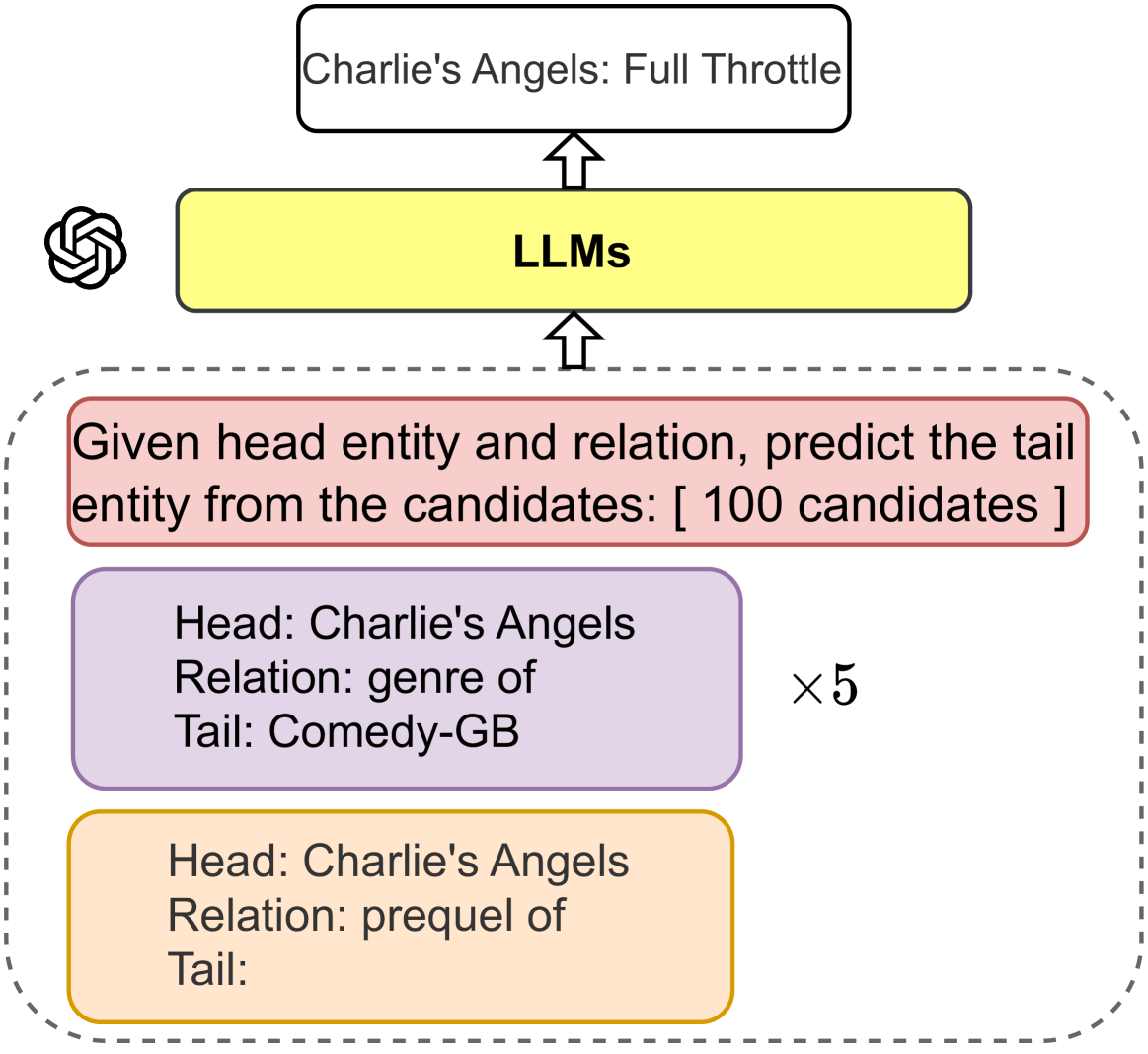

This image is a flowchart or process diagram illustrating how Large Language Models (LLMs) are used to perform a knowledge graph completion task. The diagram shows a pipeline where a specific query about a movie franchise is processed by an LLM to generate a predicted answer.

### Components/Axes

The diagram is structured vertically with a clear upward flow indicated by arrows. The components are color-coded and positioned as follows:

1. **Top Output Box (White, Top-Center):** A rounded rectangle containing the text "Charlie's Angels: Full Throttle". This represents the final output or prediction.

2. **LLMs Processing Box (Yellow, Center):** A rounded rectangle labeled "LLMs". An arrow points from this box to the top output box. To its left is the OpenAI logo (a black and white geometric flower-like symbol).

3. **Input Task Container (Dashed Gray Border, Bottom):** A large, dashed-border container holds the input data and examples. An arrow points from this container up to the "LLMs" box.

* **Task Description (Red Box, Top of Container):** "Given head entity and relation, predict the tail entity from the candidates: [ 100 candidates ]".

* **Example 1 (Purple Box, Middle of Container):**

* Head: Charlie's Angels

* Relation: genre of

* Tail: Comedy-GB

* **Example 2 (Orange Box, Bottom of Container):**

* Head: Charlie's Angels

* Relation: prequel of

* Tail: [This field is blank, indicating it is the target for prediction.]

* **Multiplier (Text, Right of Examples):** "×5" is placed to the right of the two example boxes, suggesting that five such examples (or a few-shot prompt with 5 demonstrations) are provided as context.

### Detailed Analysis

The diagram explicitly details a **few-shot learning** setup for an LLM.

* **Task:** The core task is defined in the red box: predicting a tail entity from a set of 100 candidates, given a head entity and a relation. This is a standard knowledge graph link prediction or completion task.

* **Input Structure:** The input consists of:

1. A natural language instruction (the red box).

2. A set of demonstration examples (the purple and orange boxes). The "×5" indicates there are five such example triples provided in the prompt.

3. The final query to be solved is embedded as the last example (the orange box), where the "Tail:" field is empty, prompting the model to fill it.

* **Example Content:** The examples use the head entity "Charlie's Angels". The first example provides a completed triple ("genre of" -> "Comedy-GB"). The second example sets up the actual prediction problem: given "Charlie's Angels" and the relation "prequel of", what is the tail entity?

* **Process Flow:** The entire input package (instruction + examples) is fed into the "LLMs" (represented by the yellow box and the OpenAI logo). The LLM processes this prompt and generates the text in the top white box as its output: "Charlie's Angels: Full Throttle".

### Key Observations

1. **Spatial Grounding:** The legend (color-coding) is consistent: White for final output, Yellow for the model, Red for the task rule, Purple for a solved example, and Orange for the target query. The "×5" is positioned to the right of the example stack, clearly modifying them.

2. **Trend/Flow Verification:** The arrows establish a clear, unidirectional data flow: Input Examples -> LLMs -> Output Prediction. There is no branching or feedback loop shown.

3. **Component Isolation:** The diagram is cleanly segmented into three logical regions: the **Input Region** (dashed box), the **Processing Region** (LLMs box), and the **Output Region** (top box).

4. **Precision in Transcription:** All text is transcribed exactly. Notably, the tail in the orange box is intentionally left blank, which is a critical part of the diagram's meaning.

### Interpretation

This diagram demonstrates a **prompt engineering** technique for leveraging LLMs as knowledge base completion engines. It shows how a complex relational reasoning task can be framed as a text completion problem.

* **What it suggests:** The LLM is being used not just for its language understanding, but as a repository of world knowledge (e.g., knowing that "Charlie's Angels: Full Throttle" is the sequel to "Charlie's Angels"). The "×5" few-shot examples are crucial; they teach the model the desired input-output format and provide in-context learning cues about the type of knowledge required.

* **Relationships:** The diagram highlights the relationship between structured knowledge (head, relation, tail triples) and unstructured language models. It positions the LLM as an intermediary that can translate between these formats.

* **Notable Anomaly/Insight:** The blank "Tail:" in the orange box is the most significant element. It transforms the diagram from a simple flowchart into a **problem statement**. The entire setup exists to fill that blank. The output "Charlie's Angels: Full Throttle" is the model's solution, confirming that the LLM successfully retrieved the correct sequel from its parametric knowledge based on the provided context and examples. This illustrates the potential of LLMs to perform symbolic reasoning tasks when prompted appropriately.