## Heatmap: Layer Activation by Token Type

### Overview

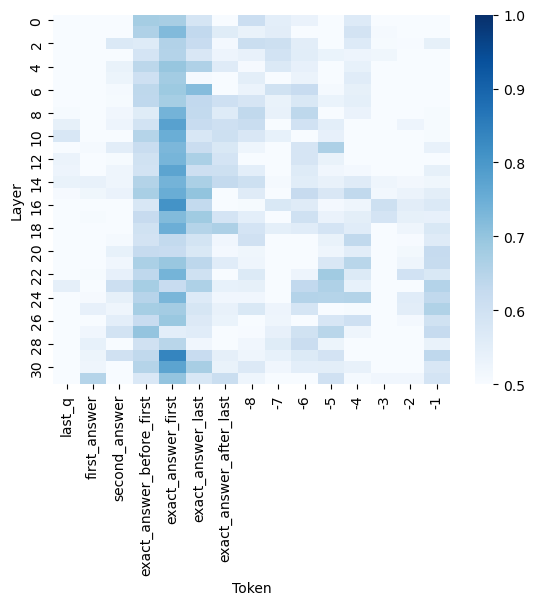

The image is a heatmap of activation levels across the layers of a neural network, broken down by token type. The x-axis lists the token types and the y-axis gives the layer number. Color intensity encodes the activation level: darker blue means higher activation, lighter blue means lower activation.

### Components/Axes

* **X-axis (Token):** Represents different token types. The labels are: `last_q`, `first_answer`, `second_answer`, `exact_answer_before_first`, `exact_answer_first`, `exact_answer_last`, `exact_answer_after_last`, `-8`, `-7`, `-6`, `-5`, `-4`, `-3`, `-2`, `-1`, `0`.

* **Y-axis (Layer):** Represents the layer number, ranging from 0 to 30 in increments of 2. The labels are: `0`, `2`, `4`, `6`, `8`, `10`, `12`, `14`, `16`, `18`, `20`, `22`, `24`, `26`, `28`, `30`.

* **Color Scale:** A vertical color bar on the right side of the heatmap represents the activation level. The scale ranges from 0.5 (lightest blue) to 1.0 (darkest blue) in increments of 0.1.
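To make the layout concrete, here is a minimal sketch of how a figure with these axes and this color scale could be drawn. It is not the code that produced the original image. The grid values are random placeholders, and several choices are assumptions: one row per layer from 0 to 30 (the figure only labels every second layer), the `Blues` colormap, layer 0 at the top, and the helper names `placeholder_grid` and `plot_heatmap`.

```python
import random

# Axis labels copied from the figure description.
TOKENS = [
    "last_q", "first_answer", "second_answer",
    "exact_answer_before_first", "exact_answer_first",
    "exact_answer_last", "exact_answer_after_last",
    "-8", "-7", "-6", "-5", "-4", "-3", "-2", "-1", "0",
]
N_LAYERS = 31          # ASSUMPTION: one row per layer 0..30
VMIN, VMAX = 0.5, 1.0  # color bar range stated in the description


def placeholder_grid(seed=0):
    """Return a layers x tokens grid of random PLACEHOLDER values in [VMIN, VMAX]."""
    rng = random.Random(seed)
    return [[rng.uniform(VMIN, VMAX) for _ in TOKENS] for _ in range(N_LAYERS)]


def plot_heatmap(grid, path="heatmap_sketch.png"):
    """Draw the grid with the axes and color scale described above."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file, no display needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(8, 6))
    # ASSUMPTION: "Blues" colormap (light = low, dark = high), layer 0 at the top.
    im = ax.imshow(grid, cmap="Blues", vmin=VMIN, vmax=VMAX, aspect="auto")
    ax.set_xticks(list(range(len(TOKENS))))
    ax.set_xticklabels(TOKENS, rotation=90)
    ax.set_yticks(list(range(0, N_LAYERS, 2)))  # tick labels 0, 2, ..., 30
    ax.set_xlabel("Token")
    ax.set_ylabel("Layer")
    fig.colorbar(im, ax=ax)
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)


grid = placeholder_grid()
```

Calling `plot_heatmap(grid)` writes the sketch to `heatmap_sketch.png`. Replacing the placeholder grid with real per-layer measurements would be the only change needed to reproduce a figure of this shape.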

### Detailed Analysis

The values below are approximate readings from the color scale, listed per token column.

* **last\_q:** Activation is generally low (around 0.5-0.6) across all layers, with a slight increase in activation around layer 28-30 (approximately 0.7-0.8).

* **first\_answer:** Activation is low (around 0.5-0.6) across all layers.

* **second\_answer:** Activation is low (around 0.5-0.6) across all layers.

* **exact\_answer\_before\_first:** Shows a distinct vertical band of high activation (0.8-0.9) between layers 6 and 16, with lower activation (0.5-0.7) in the other layers.

* **exact\_answer\_first:** Same pattern as `exact_answer_before_first`: high activation (0.8-0.9) between layers 6 and 16, lower activation (0.5-0.7) in the other layers.

* **exact\_answer\_last:** Same pattern again: high activation (0.8-0.9) between layers 6 and 16, lower activation (0.5-0.7) in the other layers.

* **exact\_answer\_after\_last:** Activation is generally low (around 0.5-0.6) across all layers.

* **-8 to 0:** Activation is generally low (around 0.5-0.7) across all layers, with some layers showing slightly higher activation (0.7-0.8).

### Key Observations

* The tokens `exact_answer_before_first`, `exact_answer_first`, and `exact_answer_last` show a clear pattern of high activation in the middle layers (approximately layers 6 to 16).

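* The remaining named tokens (`last_q`, `first_answer`, `second_answer`, `exact_answer_after_last`) show lower and more uniform activation, around 0.5-0.6. The one exception is `last_q`, which rises to approximately 0.7-0.8 around layers 28-30.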

* The activation levels for tokens `-8` to `0` are generally low (around 0.5-0.7), with some layers reaching 0.7-0.8.
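The "band" in the first observation can be located programmatically once the per-layer values for a token column are available. The sketch below is one simple way to do it, assuming a threshold of 0.8 (the lower edge of the "high activation" range quoted above) and 31 layers. The function name `high_band` and the example columns are hypothetical placeholders, not data read from the figure.

```python
def high_band(column, threshold=0.8):
    """Return (first_layer, last_layer) of the longest contiguous run of values
    >= threshold in one token's per-layer column, or None if no value qualifies."""
    best = None   # longest run found so far, as (start, end)
    start = None  # start of the run currently being scanned
    for layer, value in enumerate(column):
        if value >= threshold:
            if start is None:
                start = layer
            if best is None or layer - start > best[1] - best[0]:
                best = (start, layer)
        else:
            start = None
    return best


# PLACEHOLDER columns shaped like the description, not measured values.
example = [0.85 if 6 <= layer <= 16 else 0.55 for layer in range(31)]
flat = [0.55] * 31

print(high_band(example))  # (6, 16)
print(high_band(flat))     # None
```

Applied to every column of the real grid, a helper like this would return a band for the three `exact_answer_*` columns described above and `None` for columns that never reach the threshold.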

### Interpretation

The heatmap suggests that the middle layers (6-16) of the neural network are particularly sensitive to the tokens related to the exact answer (`exact_answer_before_first`, `exact_answer_first`, and `exact_answer_last`). This could indicate that these layers are responsible for processing and extracting information related to the answer. The lower activation for the other tokens suggests that these layers are less relevant for processing those token types.

The figure does not define the labels `-8` to `0`. They might represent positional embeddings or other contextual information, and their lower activation could indicate that these features are less important for the specific task being performed by the network.