
## Bar Chart: Prediction Flip Rate Comparison for Llama-3 Models

### Overview

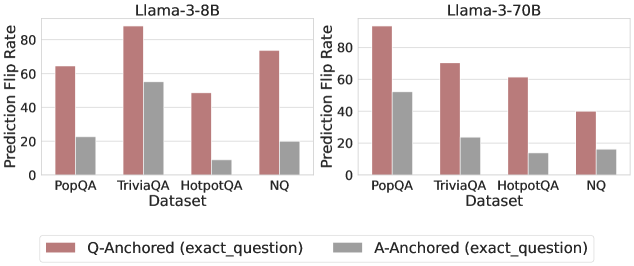

The image displays two side-by-side grouped bar charts comparing the "Prediction Flip Rate" for two different sizes of the Llama-3 model (8B and 70B parameters) across four question-answering datasets. The charts evaluate the stability of model predictions when using two different anchoring methods: "Q-Anchored (exact_question)" and "A-Anchored (exact_question)".

### Components/Axes

* **Chart Titles (Top):**
    * Left Chart: `Llama-3-8B`
    * Right Chart: `Llama-3-70B`

* **Y-Axis (Vertical):**
    * Label: `Prediction Flip Rate`
    * Scale: major tick marks at 0, 20, 40, 60, 80. The axis appears to extend beyond the 80 tick, since the tallest bars are estimated at ~88 and ~90.

* **X-Axis (Horizontal):**
    * Label: `Dataset`
    * Categories (for both charts): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.

* **Legend (Bottom Center):**
    * A red/brown bar: `Q-Anchored (exact_question)`
    * A grey bar: `A-Anchored (exact_question)`

* **Data Series:** Each dataset category contains two bars, one for each anchoring method, placed side-by-side.

### Detailed Analysis

**Llama-3-8B Chart (Left Panel):**

* **PopQA:**
    * Q-Anchored (red/brown): ~65
    * A-Anchored (grey): ~22
* **TriviaQA:**
    * Q-Anchored (red/brown): ~88 (highest in this panel)
    * A-Anchored (grey): ~55
* **HotpotQA:**
    * Q-Anchored (red/brown): ~48
    * A-Anchored (grey): ~8 (lowest in this panel)
* **NQ:**
    * Q-Anchored (red/brown): ~74
    * A-Anchored (grey): ~20

**Llama-3-70B Chart (Right Panel):**

* **PopQA:**
    * Q-Anchored (red/brown): ~90 (highest in the entire image)
    * A-Anchored (grey): ~52
* **TriviaQA:**
    * Q-Anchored (red/brown): ~70
    * A-Anchored (grey): ~24
* **HotpotQA:**
    * Q-Anchored (red/brown): ~61
    * A-Anchored (grey): ~14
* **NQ:**
    * Q-Anchored (red/brown): ~40
    * A-Anchored (grey): ~16
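
The readings above can be collected into a small data structure to make the Q-Anchored vs. A-Anchored gap explicit. This is a minimal sketch: the numbers are the approximate bar heights estimated from the chart, not exact data, and the names `FLIP_RATES` and `anchoring_gaps` are hypothetical helpers introduced only for illustration.

```python
# Approximate bar heights read off the chart (estimates, not exact data).
# "Q" = Q-Anchored (exact_question), "A" = A-Anchored (exact_question).
FLIP_RATES = {
    "Llama-3-8B": {
        "PopQA": {"Q": 65, "A": 22},
        "TriviaQA": {"Q": 88, "A": 55},
        "HotpotQA": {"Q": 48, "A": 8},
        "NQ": {"Q": 74, "A": 20},
    },
    "Llama-3-70B": {
        "PopQA": {"Q": 90, "A": 52},
        "TriviaQA": {"Q": 70, "A": 24},
        "HotpotQA": {"Q": 61, "A": 14},
        "NQ": {"Q": 40, "A": 16},
    },
}


def anchoring_gaps(rates):
    """Return {model: {dataset: Q-Anchored minus A-Anchored flip rate}}."""
    return {
        model: {ds: v["Q"] - v["A"] for ds, v in datasets.items()}
        for model, datasets in rates.items()
    }


GAPS = anchoring_gaps(FLIP_RATES)
for model, gaps in GAPS.items():
    print(model, gaps)
```

Because the inputs are visual estimates, the resulting gaps should be treated as accurate to within a few points at best.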

### Key Observations

1. **Consistent Anchoring Effect:** Across all four datasets and both model sizes, the **Q-Anchored** method (red/brown bars) yields a higher Prediction Flip Rate than the **A-Anchored** method (grey bars). The gap ranges from roughly 24 points (70B, NQ) to roughly 54 points (8B, NQ).

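2. **Model Size Impact:** Scaling from 8B to 70B does not shift flip rates uniformly. The 70B model's peak (PopQA, Q-Anchored, ~90) is only marginally above the 8B model's peak (TriviaQA, Q-Anchored, ~88), and its lowest value (HotpotQA, A-Anchored, ~14) is higher than the 8B model's lowest (HotpotQA, A-Anchored, ~8). The dataset with the highest flip rate also changes, from TriviaQA for the 8B model to PopQA for the 70B model.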

3. **Dataset Variability:** The flip rate varies substantially by dataset. For the 8B model, TriviaQA shows the highest instability under both anchoring methods (~88 and ~55). For the 70B model, PopQA shows the highest instability under both methods (~90 and ~52), while NQ has the lowest Q-Anchored flip rate (~40) and HotpotQA has the lowest A-Anchored flip rate (~14, just below NQ at ~16).

4. **Trend Verification:**

    * For the **Q-Anchored** series in the 8B model, the trend is: PopQA (medium) -> TriviaQA (peak) -> HotpotQA (dip) -> NQ (high).
    * For the **A-Anchored** series in the 8B model, the trend is: PopQA (medium) -> TriviaQA (peak) -> HotpotQA (deep valley) -> NQ (medium-low).
    * For the **Q-Anchored** series in the 70B model, the trend is: PopQA (peak) -> TriviaQA (medium) -> HotpotQA (medium) -> NQ (low).
    * For the **A-Anchored** series in the 70B model, the trend is: PopQA (peak) -> TriviaQA (medium) -> HotpotQA (low) -> NQ (low).
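
The qualitative trends above can be cross-checked by ranking the datasets within each series. The sketch below assumes the approximate bar heights from the Detailed Analysis section; `SERIES` and `rank_desc` are hypothetical names used only for this illustration.

```python
# Approximate bar heights read off the chart (estimates, not exact data).
SERIES = {
    ("Llama-3-8B", "Q-Anchored"): {"PopQA": 65, "TriviaQA": 88, "HotpotQA": 48, "NQ": 74},
    ("Llama-3-8B", "A-Anchored"): {"PopQA": 22, "TriviaQA": 55, "HotpotQA": 8, "NQ": 20},
    ("Llama-3-70B", "Q-Anchored"): {"PopQA": 90, "TriviaQA": 70, "HotpotQA": 61, "NQ": 40},
    ("Llama-3-70B", "A-Anchored"): {"PopQA": 52, "TriviaQA": 24, "HotpotQA": 14, "NQ": 16},
}


def rank_desc(series):
    """Return dataset names ordered from highest to lowest flip rate."""
    return sorted(series, key=series.get, reverse=True)


RANKINGS = {key: rank_desc(values) for key, values in SERIES.items()}
for (model, method), order in RANKINGS.items():
    print(f"{model:12s} {method:10s} {' > '.join(order)}")
```

Several neighbouring values differ by only a few points (for example ~14 vs. ~16), so the ordering near the bottom of each ranking is sensitive to reading error.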

### Interpretation

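The data suggests that the **method used to anchor or frame a question (Q-Anchored vs. A-Anchored) has a large and consistent impact on the stability of a large language model's predictions**: Q-Anchored exceeds A-Anchored in all eight model-dataset pairs. The direction of this effect is more consistent than that of model size or dataset, both of which move flip rates up in some cases and down in others.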

* **Q-Anchoring** (likely using the exact question text as a prompt anchor) leads to much higher prediction flip rates, indicating **lower consistency**. This could mean the model's answers are more sensitive to minor variations or perturbations when the question itself is the primary anchor.

* **A-Anchoring** (likely using the exact answer text as an anchor) results in dramatically lower flip rates, suggesting **higher robustness and consistency**. This implies that anchoring on the answer space stabilizes the model's output.

* The **increase in model scale (8B to 70B) does not uniformly amplify or reduce this effect**. Flip rates rise on PopQA and HotpotQA under both anchoring methods (for example, PopQA Q-Anchored goes from ~65 to ~90) and fall on TriviaQA and NQ (for example, NQ Q-Anchored goes from ~74 to ~40). The Q-Anchored vs. A-Anchored gap persists at both scales, which suggests that larger models are not inherently more robust in this setting; their stability appears to depend on the anchoring strategy and the dataset.

* The **variation across datasets** (PopQA, TriviaQA, HotpotQA, NQ) indicates that the nature of the questions and knowledge domain also interacts with the anchoring method. Datasets requiring multi-hop reasoning (HotpotQA) or built around entity-centric questions of varying popularity (PopQA) may elicit different stability profiles.
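
A quick way to test how scale interacts with anchoring is to compute the per-dataset change from 8B to 70B and the mean Q-minus-A gap for each model. This is a rough sketch using the approximate bar heights estimated from the chart; `RATES`, `scale_deltas`, and `mean_gap` are hypothetical names introduced for illustration, and the results inherit the reading error of the inputs.

```python
# Approximate bar heights read off the chart (estimates, not exact data).
RATES = {
    "Llama-3-8B": {
        "Q": {"PopQA": 65, "TriviaQA": 88, "HotpotQA": 48, "NQ": 74},
        "A": {"PopQA": 22, "TriviaQA": 55, "HotpotQA": 8, "NQ": 20},
    },
    "Llama-3-70B": {
        "Q": {"PopQA": 90, "TriviaQA": 70, "HotpotQA": 61, "NQ": 40},
        "A": {"PopQA": 52, "TriviaQA": 24, "HotpotQA": 14, "NQ": 16},
    },
}


def scale_deltas(rates, small="Llama-3-8B", large="Llama-3-70B"):
    """Return {method: {dataset: flip rate of large model minus small model}}."""
    return {
        method: {
            ds: rates[large][method][ds] - rates[small][method][ds]
            for ds in rates[small][method]
        }
        for method in rates[small]
    }


def mean_gap(rates, model):
    """Mean of (Q-Anchored minus A-Anchored) across datasets for one model."""
    q, a = rates[model]["Q"], rates[model]["A"]
    gaps = [q[ds] - a[ds] for ds in q]
    return sum(gaps) / len(gaps)


DELTAS = scale_deltas(RATES)
print("70B minus 8B:", DELTAS)
print("mean gap 8B :", mean_gap(RATES, "Llama-3-8B"))
print("mean gap 70B:", mean_gap(RATES, "Llama-3-70B"))
```

Positive deltas mean the 70B model flips more often than the 8B model on that dataset; mixed signs across datasets indicate that scale alone does not determine stability.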

In essence, the charts provide strong empirical evidence that **how you "ground" or anchor a query to an LLM critically determines the reliability of its responses**, and this design choice may be as important as model size for practical applications requiring consistent outputs.