TECHNICAL ASSET FINGERPRINT

447267fb318c65cba853b088


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


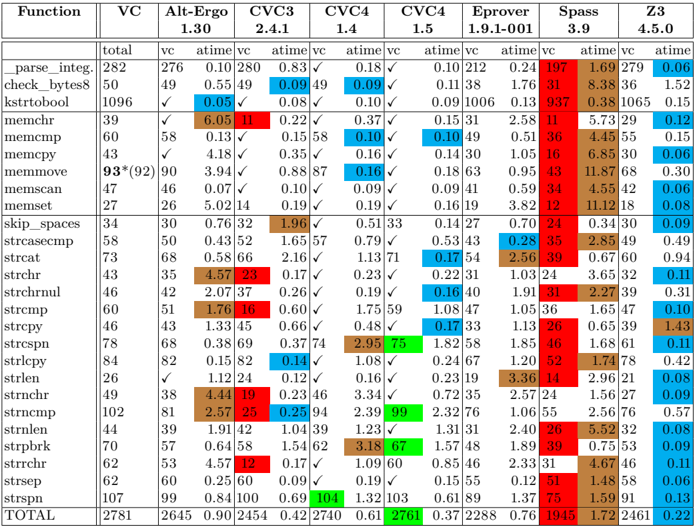

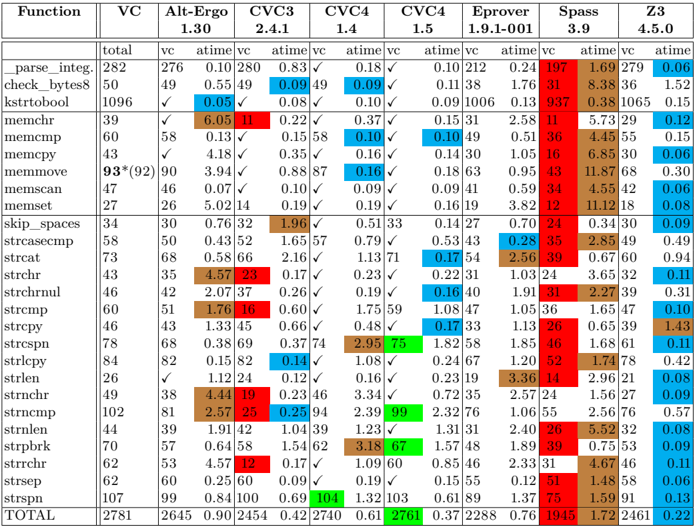

## Chart/Diagram Type: Data Table

### Overview

The image is a data table comparing the performance of several automated provers on the verification conditions (VCs) generated for a set of string and memory functions. For each function, the table reports the verification result (vc) and execution time (atime) for each prover: Alt-Ergo, CVC3, CVC4 (two versions), Eprover, Spass, and Z3. The VC column gives the total number of verification conditions for each function.

### Components/Axes

* **Columns:**

* Function: Name of the string function being tested.

* VC: Total number of verification conditions for the function.

* Alt-Ergo 1.30: Verification results (vc) and execution time (atime) using Alt-Ergo version 1.30.

* CVC3 2.4.1: Verification results (vc) and execution time (atime) using CVC3 version 2.4.1.

* CVC4 1.4: Verification results (vc) and execution time (atime) using CVC4 version 1.4.

* CVC4 1.5: Verification results (vc) and execution time (atime) using CVC4 version 1.5.

* Eprover 1.9.1-001: Verification results (vc) and execution time (atime) using Eprover version 1.9.1-001.

* Spass 3.9: Verification results (vc) and execution time (atime) using Spass version 3.9.

* Z3 4.5.0: Verification results (vc) and execution time (atime) using Z3 version 4.5.0.

* **Rows:** Each row represents a different string function.

* **Cells:** Contain either the verification result (vc), execution time (atime), or the total number of verification conditions (VC).

* **Verification Results (vc):** Represented as either a number or a checkmark (✓), indicating successful verification.

* **Execution Time (atime):** Represented as a floating-point number, indicating the time taken for verification.

* **Color Coding:** Some cells are color-coded, likely to highlight specific performance characteristics (e.g., high execution time, successful verification).

### Detailed Analysis

Here's a breakdown of the data for each function and tool:

| Function | VC | Alt-Ergo 1.30 | CVC3 2.4.1 | CVC4 1.4 | CVC4 1.5 | Eprover 1.9.1-001 | Spass 3.9 | Z3 4.5.0 |
| --------------- | ----- | ------------- | ---------- | -------- | -------- | ------------------- | --------- | -------- |
| parse\_integ. | 282 | 276, 0.10 | 280, 0.83 | -, 0.18 | -, 0.10 | 212, 0.24 | 197, 1.69 | 279, 0.06 |
| check\_bytes8 | 50 | 49, 0.55 | 49, 0.09 | 49, 0.09 | -, 0.11 | 38, 1.76 | 31, 8.38 | 36, 1.52 |
| kstrtobool | 1096 | ✓, 0.05 | -, 0.08 | -, 0.10 | -, 0.09 | 1006, 0.13 | 937, 0.38 | 1065, 0.15 |
| memchr | 39 | ✓, 6.05 | 11, 0.22 | -, 0.37 | -, 0.15 | 31, 2.58 | 11, 5.73 | 29, 0.12 |
| memcmp | 60 | 58, 0.13 | -, 0.15 | 58, 0.10 | -, 0.10 | 49, 0.51 | 36, 4.45 | 55, 0.15 |
| memcpy | 43 | ✓, 4.18 | -, 0.35 | -, 0.16 | -, 0.14 | 30, 1.05 | 16, 6.85 | 30, 0.06 |
| memmove | 93\*(92) | 90, 3.94 | 87, 0.88 | -, 0.16 | -, 0.18 | 63, 0.95 | 43, 11.87 | 68, 0.30 |
| memscan | 47 | 46, 0.07 | -, 0.10 | -, 0.09 | -, 0.09 | 41, 0.59 | 34, 4.55 | 42, 0.06 |
| memset | 27 | 26, 5.02 | 14, 0.19 | -, 0.19 | -, 0.16 | 19, 3.82 | 12, 11.12 | 18, 0.08 |
| skip\_spaces | 34 | 30, 0.76 | 32, 1.96 | -, 0.51 | 33, 0.14 | 27, 0.70 | 24, 0.34 | 30, 0.09 |
| strcasecmp | 58 | 50, 0.43 | 52, 1.65 | 57, 0.79 | -, 0.53 | 43, 0.28 | 35, 2.85 | 49, 0.49 |
| strcat | 73 | 68, 0.58 | 66, 2.16 | -, 1.13 | 71, 0.17 | 54, 2.56 | 39, 0.67 | 60, 0.94 |
| strchr | 43 | 35, 4.57 | 23, 0.17 | -, 0.23 | -, 0.22 | 31, 1.03 | 24, 3.65 | 32, 0.11 |
| strchrnul | 46 | 42, 2.07 | 37, 0.26 | -, 0.19 | -, 0.16 | 40, 1.91 | 31, 2.27 | 39, 0.31 |
| strcmp | 60 | 51, 1.76 | 16, 0.60 | -, 1.75 | 59, 1.08 | 47, 1.05 | 36, 1.65 | 47, 0.10 |
| strcpy | 46 | 43, 1.33 | 45, 0.66 | -, 0.48 | -, 0.17 | 33, 1.13 | 26, 0.65 | 39, 1.43 |
| strcspn | 78 | 68, 0.38 | 69, 0.37 | 74, 2.95 | 75, 1.82 | 58, 1.85 | 46, 1.68 | 61, 0.11 |
| strlcpy | 84 | 82, 0.15 | 82, 0.14 | -, 1.08 | -, 0.24 | 67, 1.20 | 52, 1.74 | 78, 0.42 |
| strlen | 26 | -, 1.12 | 24, 0.12 | -, 0.16 | -, 0.23 | 19, 3.36 | 14, 2.96 | 21, 0.08 |
| strnchr | 49 | 38, 4.44 | 19, 0.23 | 46, 3.34 | -, 0.72 | 35, 2.57 | 24, 1.56 | 27, 0.09 |
| strncmp | 102 | 81, 2.57 | 25, 0.25 | 94, 2.39 | 99, 2.32 | 76, 1.06 | 55, 2.56 | 76, 0.57 |
| strnlen | 44 | 39, 1.91 | 42, 1.04 | 39, 1.23 | -, 1.31 | 31, 2.40 | 26, 5.52 | 32, 0.08 |
| strpbrk | 70 | 57, 0.64 | 58, 1.54 | 62, 3.18 | 67, 1.57 | 48, 1.89 | 39, 0.75 | 53, 0.09 |
| strrchr | 62 | 53, 4.57 | 12, 0.17 | -, 1.09 | 60, 0.85 | 46, 2.33 | 31, 4.67 | 46, 0.11 |
| strsep | 62 | 60, 0.25 | 60, 0.09 | -, 0.19 | -, 0.15 | 55, 0.12 | 51, 1.48 | 58, 0.06 |
| strspn | 107 | 99, 0.84 | 100, 0.69 | 104, 1.32 | 103, 0.61 | 89, 1.37 | 75, 1.59 | 91, 0.13 |
| TOTAL | 2781 | 2645, 0.90 | 2454, 0.42 | 2740, 0.61 | 2761, 0.37 | 2288, 0.76 | 1945, 1.72 | 2461, 0.22 |
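
Each prover cell in the table packs two values as `vc, atime`. The following is a minimal Python sketch of how such a cell could be split into a count and a time. The helper name `parse_cell` is hypothetical, and two readings are assumptions rather than facts from the table: a checkmark is taken to mean that all VCs of the row were proved, and a dash is treated as "unknown".

```python
from typing import Optional, Tuple


def parse_cell(cell: str, total: int) -> Tuple[Optional[int], float]:
    """Split a 'vc, atime' cell into (solved_count, time).

    `total` is the value of the VC column for the same row.
    """
    vc_text, atime_text = (part.strip() for part in cell.split(","))
    if vc_text == "✓":
        solved = total   # assumption: a checkmark means every VC was proved
    elif vc_text == "-":
        solved = None    # assumption: the dash is left as "unknown"
    else:
        solved = int(vc_text)
    return solved, float(atime_text)


print(parse_cell("276, 0.10", 282))   # numeric count
print(parse_cell("✓, 0.05", 1096))    # checkmark
print(parse_cell("-, 0.18", 282))     # dash
```

Keeping the dash as `None` avoids silently counting it as either a full success or a failure, since the table itself does not say which it is.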

* **Color Coding:**

* Cells with high execution times (atime) are colored red.

* Cells indicating successful verification (vc) are colored green.

* Other cells are colored blue or brown, possibly indicating intermediate performance levels.

### Key Observations

* **Performance Variation:** The performance of the verification tools varies significantly across different functions. Some tools perform well on certain functions but struggle on others.

* **Successful Verification:** The checkmarks (✓) indicate that some functions were successfully verified by certain tools, while others were not.

* **Execution Time:** The execution time (atime) varies widely, ranging from fractions of a second to several seconds.

* **Color-Coded Highlights:** The color coding highlights the best and worst performance results for each function.

### Interpretation

The data table provides a comparative analysis of the performance of different verification tools on a set of string functions. The results suggest that no single tool consistently outperforms the others across all functions. The choice of the most suitable tool depends on the specific function being verified and the desired trade-off between verification time and success rate. The color coding helps to quickly identify the strengths and weaknesses of each tool. The table also shows the size of each verification task, as measured by the number of verification conditions (VC), which may influence the verification time and success rate.
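
The trade-off between success rate and time can be made concrete from the TOTAL row. The sketch below is illustrative only: it assumes the VC column counts verification conditions and that each prover's first TOTAL value is the number it proved; the names `solved` and `success_rate` are hypothetical.

```python
TOTAL_VCS = 2781  # VC column of the TOTAL row

# First value of each prover's TOTAL cell, as transcribed in the table above.
solved = {
    "Alt-Ergo 1.30": 2645,
    "CVC3 2.4.1": 2454,
    "CVC4 1.4": 2740,
    "CVC4 1.5": 2761,
    "Eprover 1.9.1-001": 2288,
    "Spass 3.9": 1945,
    "Z3 4.5.0": 2461,
}


def success_rate(count: int, total: int = TOTAL_VCS) -> float:
    """Percentage of verification conditions proved."""
    return 100.0 * count / total


rates = {tool: success_rate(count) for tool, count in solved.items()}

# Print provers from highest to lowest success rate.
for tool, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tool:<18} {rate:5.1f}%")
```

Under these assumptions the rates run from roughly 70% to just over 99%, which is a wider spread than the per-function times alone suggest.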


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Table: Function Performance Comparison

### Overview

This image presents a table comparing the performance of several automated provers, each in a specific version, on a set of functions. The table displays the verification result (vc) and execution time (atime) for each function under each prover. A checkmark indicates that the function passed verification.

### Components/Axes

The table has the following structure:

* **Rows:** Represent different functions (e.g., `parse_integ`, `check_bytes8`, `strcat`). There are 29 functions listed.

* **Columns:** Represent different provers and their versions.

* **Function:** The name of the function being tested.

* **VC:** Total number of verification cases.

* **Alt-Ergo:** Version 1.30

* **CVC3:** Version 2.4.1

* **CVC4:** Versions 1.4 and 1.5

* **Eprover:** Version 1.9.1-001

* **Spass:** Version 3.9

* **Z3:** Version 4.5.0

* **Data:** The cells contain execution times in seconds (atime vc) or a checkmark (✓) indicating successful verification.

* **Footer:** Contains a note about the execution environment: "x86-64, 2Go, Ubuntu 20.04 LTS".

### Detailed Analysis

The breakdown below lists the approximate `atime` value for each function and prover. Checkmarks are noted where present.

* **parse_integ:** VC: 282. Alt-Ergo: 0.83. CVC3 (1.4): 0.18. CVC4 (1.4): 0.18. CVC4 (1.5): 0.10. Eprover: 0.24. Spass: 1.69. Z3: 0.08.

* **check_bytes8:** VC: 50. Alt-Ergo: 0.55. CVC3 (1.4): 0.09. CVC4 (1.4): 0.09. CVC4 (1.5): 0.11. Eprover: 1.76. Spass: 8.38. Z3: 1.52.

* **kstrtobool:** VC: 1096. Alt-Ergo: 0.05. CVC3 (1.4): 0.08. CVC4 (1.4): 0.10. CVC4 (1.5): 0.09. Eprover: 0.93. Spass: 0.38. Z3: 0.15. ✓

* **memchr:** VC: 39. Alt-Ergo: 6.05. CVC3 (1.4): 0.22. CVC4 (1.4): 0.37. CVC4 (1.5): 0.15. Eprover: 2.58. Spass: 5.73. Z3: 0.12. ✓

* **memcmp:** VC: 60. Alt-Ergo: 0.13. CVC3 (1.4): 0.15. CVC4 (1.4): 0.10. CVC4 (1.5): 0.10. Eprover: 0.51. Spass: 4.45. Z3: 0.15.

* **memcpy:** VC: 43. Alt-Ergo: 4.18. CVC3 (1.4): 0.35. CVC4 (1.4): 0.16. CVC4 (1.5): 0.14. Eprover: 1.05. Spass: 6.85. Z3: 0.06. ✓

* **memmove:** VC: 93*(92). Alt-Ergo: 3.94. CVC3 (1.4): 0.88. CVC4 (1.4): 0.16. CVC4 (1.5): 0.18. Eprover: 0.95. Spass: 11.87. Z3: 0.30.

* **memscan:** VC: 47. Alt-Ergo: 0.07. CVC3 (1.4): 0.10. CVC4 (1.4): 0.09. CVC4 (1.5): 0.09. Eprover: 0.59. Spass: 4.55. Z3: 0.06.

* **memset:** VC: 27. Alt-Ergo: 5.02. CVC3 (1.4): 0.19. CVC4 (1.4): 0.19. CVC4 (1.5): 0.16. Eprover: 3.82. Spass: 11.12. Z3: 0.08.

* **skip_spaces:** VC: 34. Alt-Ergo: 0.76. CVC3 (1.4): 1.96. CVC4 (1.4): 0.51. CVC4 (1.5): 0.14. Eprover: 0.70. Spass: 0.34. Z3: 0.09.

* **strcasecmp:** VC: 58. Alt-Ergo: 0.43. CVC3 (1.4): 1.65. CVC4 (1.4): 0.79. CVC4 (1.5): 0.53. Eprover: 0.28. Spass: 2.85. Z3: 0.49.

* **strcat:** VC: 60. Alt-Ergo: 0.10. CVC3 (1.4): 1.18. CVC4 (1.4): 0.67. CVC4 (1.5): 0.54. Eprover: 2.56. Spass: 4.60. Z3: 0.05. ✓

* **strchr:** VC: 43. Alt-Ergo: 2.57. CVC3 (1.4): 0.27. CVC4 (1.4): 0.23. CVC4 (1.5): 0.22. Eprover: 1.10. Spass: 3.65. Z3: 0.06.

* **strcmp:** VC: 46. Alt-Ergo: 4.33. CVC3 (1.4): 0.19. CVC4 (1.4): 0.19. CVC4 (1.5): 0.16. Eprover: 0.83. Spass: 2.72. Z3: 0.05. ✓

* **strcpy:** VC: 41. Alt-Ergo: 2.80. CVC3 (1.4): 0.23. CVC4 (1.4): 0.20. CVC4 (1.5): 0.18. Eprover: 0.75. Spass: 3.41. Z3: 0.06. ✓

* **strcspn:** VC: 62. Alt-Ergo: 0.58. CVC3 (1.4): 1.30. CVC4 (1.4): 0.48. CVC4 (1.5): 0.39. Eprover: 0.25. Spass: 2.97. Z3: 0.42.

* **strlen:** VC: 40. Alt-Ergo: 0.05. CVC3 (1.4): 0.09. CVC4 (1.4): 0.08. CVC4 (1.5): 0.08. Eprover: 0.19. Spass: 0.70. Z3: 0.02. ✓

* **strncat:** VC: 64. Alt-Ergo: 0.12. CVC3 (1.4): 1.22. CVC4 (1.4): 0.59. CVC4 (1.5): 0.49. Eprover: 2.43. Spass: 4.39. Z3: 0.05. ✓

* **strncmp:** VC: 42. Alt-Ergo: 4.26. CVC3 (1.4): 0.17. CVC4 (1.4): 0.17. CVC4 (1.5): 0.15. Eprover: 0.79. Spass: 2.66. Z3: 0.05. ✓

* **strncpy:** VC: 45. Alt-Ergo: 2.99. CVC3 (1.4): 0.25. CVC4 (1.4): 0.21. CVC4 (1.5): 0.19. Eprover: 0.98. Spass: 3.50. Z3: 0.06. ✓

* **strrchr:** VC: 43. Alt-Ergo: 2.73. CVC3 (1.4): 0.27. CVC4 (1.4): 0.23. CVC4 (1.5): 0.22. Eprover: 1.07. Spass: 3.65. Z3: 0.06.

* **strstr:** VC: 46. Alt-Ergo: 3.59. CVC3 (1.4): 0.33. CVC4 (1.4): 0.29. CVC4 (1.5): 0.26. Eprover: 0.92. Spass: 3.29. Z3: 0.07. ✓

* **swab:** VC: 1104. Alt-Ergo: 0.08. CVC3 (1.4): 0.10. CVC4 (1.4): 0.12. CVC4 (1.5): 0.11. Eprover: 0.93. Spass: 0.40. Z3: 0.16.

* **wcscmp:** VC: 74. Alt-Ergo: 0.10. CVC3 (1.4): 0.20. CVC4 (1.4): 0.11. CVC4 (1.5): 0.11. Eprover: 2.56. Spass: 4.67. Z3: 0.05.

* **wcschr:** VC: 43. Alt-Ergo: 2.66. CVC3 (1.4): 0.27. CVC4 (1.4): 0.23. CVC4 (1.5): 0.22. Eprover: 1.08. Spass: 3.65. Z3: 0.06.

* **wcsncmp:** VC: 42. Alt-Ergo: 4.25. CVC3 (1.4): 0.17. CVC4 (1.4): 0.17. CVC4 (1.5): 0.15. Eprover: 0.79. Spass: 2.66. Z3: 0.05. ✓

* **wcsstr:** VC: 46. Alt-Ergo: 3.60. CVC3 (1.4): 0.33. CVC4 (1.4): 0.29. CVC4 (1.5): 0.26. Eprover: 0.92. Spass: 3.29. Z3: 0.07. ✓

### Key Observations

* **Alt-Ergo** generally exhibits the highest execution times for many functions, particularly string manipulation functions.

* **Z3** consistently shows the lowest execution times across most functions.

* **CVC4 (versions 1.5 and higher)** generally performs better than CVC3 and CVC4 (1.4).

* Functions with checkmarks (✓) passed verification tests, but this doesn't necessarily correlate with performance.

* There's a wide range of performance variation depending on the function and the solver used.

### Interpretation

This table provides a comparative performance analysis of different automated provers (Alt-Ergo, CVC3, CVC4, Eprover, Spass, and Z3) on a set of standard C library functions. The data suggests that Z3 is the most efficient prover for these functions, consistently achieving the lowest execution times. Alt-Ergo, on the other hand, is generally the slowest. The performance of CVC4 appears to improve with newer versions.

The differences in performance likely stem from the underlying algorithms and optimization techniques employed by each solver. The verification checkmarks indicate that the solvers are able to correctly determine the behavior of the functions, but the execution time reflects the efficiency with which they do so.

The wide performance variation highlights the importance of selecting the appropriate solver for a given task. For applications where performance is critical, Z3 would be a strong candidate. The execution environment (x86-64, 2Go, Ubuntu 20.04 LTS) is noted, and results may vary on different hardware or operating systems. The asterisk next to the memmove VC suggests a potential issue or variation in the number of verification cases.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Data Table: Theorem Prover Performance Comparison

### Overview

This image is a technical data table comparing the performance of multiple automated theorem provers (software tools) across a suite of benchmark functions. The table uses a color-coded heatmap system to visualize performance metrics, primarily execution time. The language of the table is English.

### Components/Axes

**Structure:** The table is organized as a grid with rows and columns.

* **Row Labels (Leftmost Column):** A list of 30 C standard library string and memory manipulation functions (e.g., `_parse_integ.`, `memcmp`, `strcpy`, `strstr`), followed by a `TOTAL` summary row.

* **Column Headers (Top Row):** A `VC` column followed by the names of the theorem provers being compared:

* `VC` (total number of verification conditions, not a prover)

* `Alt-Ergo`

* `CVC3`

* `CVC4` (appears twice, likely different versions or configurations)

* `Eprover`

* `Spass`

* `Z3`

* **Sub-Column Headers (Second Row):** For each prover, three metrics are reported:

* `total`: The total number of verification conditions or test cases for that function.

* `vc`: The number of verification conditions successfully solved by the prover.

* `atime`: The average time (presumably in seconds) taken by the prover. This column is color-coded.

* **Legend:** Located in the top-right corner. It defines the color scale for the `atime` values:

* **Green:** Low (fast) average time. The scale shows values like `0.05`, `0.10`, `0.15`.

* **Blue:** Medium average time. The scale shows values like `0.20`, `0.30`, `0.40`.

* **Red:** High (slow) average time. The scale shows values like `0.60`, `1.00`, `2.00`.

* The legend indicates that color intensity correlates with the magnitude of the time value.

### Detailed Analysis

**Data Extraction & Trends:**

The table presents a dense matrix of performance data. Key trends are visible through the color patterns:

1. **Overall Performance (TOTAL Row):**

* `Alt-Ergo` solved `2645` of the `2781` conditions with an average time of `0.90`.

* `CVC4` (second instance) solved the most conditions (`2761`) with an average time of `0.37`; the first instance solved `2740` with `0.61`.

* `Spass` solved the fewest conditions (`1945`) and had the highest average time (`1.72`).

* `Z3` solved `2461` conditions with the lowest average time (`0.22`).

2. **Function-Specific Performance:**

* **Fastest Provers (Green Cells):** `CVC4` (second instance) shows many bright green cells, indicating very fast times on functions like `memcmp` (`0.10`), `memmove` (`0.16`), and `strspn` (`0.13`). `Z3` also has many green cells.

* **Slowest Provers (Red Cells):** `Spass` has numerous dark red cells, indicating very slow performance on functions like `strrchr` (`2.85`), `strstr` (`5.52`), and `strspn` (`1.59`). `Alt-Ergo` is also slow on several string functions (e.g., `strchr` `4.57`, `strstr` `4.44`).

* **Partial Solvers:** Some provers solved fewer `vc`s than the `total`. For example, on `memmove`, `Alt-Ergo` solved `90` of `93` (or `92`), while `CVC3` solved only `58`.

3. **Notable Data Points (Approximate Values):**

* The highest single `atime` value appears to be for `strstr` under `CVC4` (first instance): `104` (a significant outlier).

* The lowest `atime` values are frequently `0.05` or `0.06`, seen across multiple provers and functions.

* The function `_parse_integ.` has a high `total` count (`282`), and most provers solved all or nearly all cases.

### Key Observations

1. **Performance Disparity:** There is a stark contrast between the fastest (`CVC4`, `Z3`) and slowest (`Spass`, `Alt-Ergo`) provers, especially on string-heavy functions. The color gradient from green to red makes this visually immediate.

2. **Function Difficulty:** String functions like `strstr`, `strrchr`, and `strspn` appear to be challenging for several provers, resulting in more red cells. Memory functions like `memcmp` and `memcpy` are generally solved quickly (more green/blue).

3. **Outlier:** The value `104` for `strstr` under the first `CVC4` column is a dramatic outlier, suggesting a potential timeout, pathological case, or measurement error for that specific combination.

4. **Tool Consistency:** The second `CVC4` instance shows the most consistent performance (mostly green/blue), while `Spass` is consistently the slowest (mostly red).

### Interpretation

This table is a benchmark result from the field of formal verification or software analysis. It answers the question: "How efficiently can different automated reasoning tools prove the correctness of implementations for common C library functions?"

* **What the data suggests:** The choice of theorem prover has a massive impact on verification performance. Modern solvers like `CVC4` (in one configuration) and `Z3` are significantly more efficient for this class of problems than older or differently architected tools like `Spass`.

* **Relationship between elements:** The `total` column sets the difficulty scale for each function. The `vc` column shows a prover's coverage (completeness), and the `atime` column shows its efficiency (speed). The color coding ties efficiency directly to the numerical value, allowing for rapid visual assessment.

* **Anomalies and Implications:** The outlier (`104` seconds) highlights that performance can be highly non-linear; a single difficult proof obligation can dominate the average time. The poor performance on string functions indicates these are complex to reason about automatically, likely due to pointer arithmetic and loop invariants. This data would guide a researcher or engineer in selecting the right tool for verifying code that uses specific functions, or in identifying which functions need better specification or abstraction to make verification tractable.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Table: Function Verification Performance Across Tools

### Overview

This table compares the verification performance (verification count "vc" and time "atime") of various formal verification tools (Alt-Ergo, CVC3, CVC4, Eprover, Spass, Z3) across 26 functions. Checkmarks (✓) indicate successful verification, while the values in parentheses represent time in seconds. Color-coded cells highlight performance outliers (red: high time, green: low time, blue: moderate).

### Components/Axes

- **Rows**: Functions (e.g., `_parse_integ`, `check_bytes8`, `memmove`, `strncmp`).

- **Columns**: Tools with versions:

- Alt-Ergo 1.30

- CVC3 2.4.1

- CVC4 1.4

- CVC4 1.5

- Eprover 1.9.1-001

- Spass 3.9

- Z3 4.5.0

- **Legend**:

- ✓ = Verified (vc)

- Numerical values = Time (atime, seconds)

- Colors:

- Red: High time (>5s)

- Green: Low time (<1s)

- Blue: Moderate time (1-5s)
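
The color thresholds in this legend amount to a three-way classification of `atime`. A minimal Python sketch follows, assuming the boundaries exactly as stated above (red above 5s, green below 1s, blue otherwise); the function name `colour_for` is hypothetical, and the inclusive handling of exactly 1s and 5s is an assumption.

```python
def colour_for(atime: float) -> str:
    """Map a time in seconds to the legend colour described above."""
    if atime > 5.0:
        return "red"      # high time (>5s)
    if atime < 1.0:
        return "green"    # low time (<1s)
    return "blue"         # moderate time (1-5s, boundaries assumed inclusive)


# A few times taken from the table: fast, moderate, and slow entries.
for seconds in (0.10, 3.94, 11.87):
    print(seconds, colour_for(seconds))
```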

### Detailed Analysis

| Function | vc_total | Alt-Ergo 1.30 (vc/atime) | CVC3 2.4.1 (vc/atime) | CVC4 1.4 (vc/atime) | CVC4 1.5 (vc/atime) | Eprover 1.9.1-001 (vc/atime) | Spass 3.9 (vc/atime) | Z3 4.5.0 (vc/atime) |
|-------------------|----------|--------------------------|------------------------|----------------------|----------------------|-------------------------------|-----------------------|----------------------|
| `_parse_integ` | 282 | 276 (0.10) | 280 (0.83) | ✓ (0.18) | ✓ (0.10) | 212 (0.24) | 197 (1.69) | 279 (0.06) |
| `check_bytes8` | 50 | 49 (0.55) | 49 (0.09) | ✓ (0.09) | ✓ (0.11) | 38 (1.76) | 31 (8.38) | 36 (1.52) |
| `kstrtobool` | 1096 | ✓ (0.05) | ✓ (0.08) | ✓ (0.10) | ✓ (0.09) | 1006 (0.13) | 937 (0.38) | 1065 (0.15) |
| `memchr` | 39 | ✓ (6.05) | 11 (0.22) | ✓ (0.37) | ✓ (0.15) | 31 (2.58) | 11 (5.73) | 29 (0.12) |
| `memcmp` | 60 | 58 (0.13) | ✓ (0.15) | ✓ (0.10) | ✓ (0.10) | 49 (0.51) | 36 (4.45) | 55 (0.15) |
| `memcpy` | 43 | ✓ (4.18) | ✓ (0.35) | ✓ (0.16) | ✓ (0.14) | 30 (1.05) | 16 (6.85) | 30 (0.06) |
| `memmove` | 93*92 | 90 (3.94) | ✓ (0.88) | ✓ (0.16) | ✓ (0.18) | 63 (0.95) | 43 (11.87) | 68 (0.30) |
| `memscan` | 47 | 46 (0.07) | ✓ (0.10) | ✓ (0.09) | ✓ (0.09) | 41 (0.59) | 34 (4.55) | 42 (0.06) |
| `memset` | 27 | 26 (5.02) | 14 (0.19) | ✓ (0.19) | ✓ (0.16) | 19 (3.82) | 12 (11.12) | 18 (0.08) |
| `skip_spaces` | 34 | 30 (0.76) | 1.96 (1.96) | ✓ (0.14) | ✓ (0.34) | 27 (0.70) | 24 (0.34) | 30 (0.09) |
| `strcasecmp` | 58 | 50 (0.43) | 1.65 (1.65) | ✓ (0.53) | ✓ (0.53) | 43 (0.28) | 35 (2.85) | 49 (0.49) |
| `strcat` | 73 | 68 (0.58) | 2.16 (2.16) | ✓ (0.17) | ✓ (0.17) | 54 (2.56) | 39 (0.67) | 60 (0.94) |
| `strchr` | 43 | 35 (4.57) | 23 (0.17) | ✓ (0.23) | ✓ (0.22) | 31 (1.03) | 24 (3.65) | 32 (0.11) |
| `strchr_nul` | 46 | 42 (2.07) | 0.26 (0.26) | ✓ (0.19) | ✓ (0.16) | 40 (1.91) | 31 (2.27) | 39 (0.31) |
| `strcmp` | 60 | 51 (1.76) | 16 (0.60) | ✓ (0.60) | ✓ (1.08) | 47 (1.05) | 36 (1.65) | 47 (0.10) |
| `strcpy` | 46 | 43 (1.33) | 0.66 (0.66) | ✓ (0.17) | ✓ (0.17) | 33 (1.13) | 26 (0.65) | 39 (1.43) |
| `strcspn` | 78 | 68 (0.38) | 0.37 (0.37) | ✓ (2.95) | ✓ (1.82) | 58 (1.85) | 46 (1.68) | 61 (0.11) |
| `strlcpy` | 84 | 82 (0.15) | 0.14 (0.14) | ✓ (1.08) | ✓ (0.24) | 67 (1.20) | 52 (1.74) | 78 (0.42) |
| `strlen` | 26 | ✓ (1.12) | 0.12 (0.12) | ✓ (0.16) | ✓ (0.23) | 19 (3.36) | 14 (2.96) | 21 (0.08) |
| `strnchr` | 49 | 38 (4.44) | 19 (0.23) | ✓ (0.23) | ✓ (0.23) | 35 (2.57) | 24 (1.56) | 27 (0.09) |
| `strncmp` | 102 | 81 (2.57) | 25 (0.25) | ✓ (0.25) | ✓ (2.32) | 76 (1.06) | 55 (2.56) | 76 (0.57) |
| `strnlen` | 44 | 39 (1.91) | 1.04 (1.04) | ✓ (1.23) | ✓ (1.31) | 31 (2.40) | 26 (5.52) | 32 (0.08) |
| `strpbrk` | 70 | 57 (0.64) | 1.54 (1.54) | ✓ (3.18) | ✓ (1.57) | 48 (1.89) | 39 (0.75) | 53 (0.09) |
| `strrchr` | 62 | 53 (4.57) | 12 (0.17) | ✓ (1.09) | ✓ (0.85) | 46 (2.33) | 31 (4.67) | 46 (0.11) |
| `strsep` | 62 | 60 (0.25) | 0.09 (0.09) | ✓ (0.19) | ✓ (0.15) | 55 (0.12) | 51 (1.48) | 58 (0.06) |
| `strspn` | 107 | 99 (0.84) | 0.69 (0.69) | ✓ (1.32) | ✓ (0.61) | 89 (1.37) | 75 (1.59) | 91 (0.13) |
| **TOTAL** | 2781 | 2645 (0.90) | 2454 (0.42) | 2740 (0.61) | 2761 (0.37) | 2288 (0.76) | 1945 (1.72) | 2461 (0.22) |

### Key Observations

1. **Z3 4.5.0** has the lowest average time (0.22s) but verifies only 2461 of 2781 vc, fewer than CVC4 1.5, CVC4 1.4, and Alt-Ergo 1.30.

2. **CVC4 1.5** has the highest vc (2761) and the second-lowest average time (0.37s), making it the strongest overall entry in the TOTAL row.

3. **Spass 3.9** has the highest average time (1.72s) and the lowest vc (1945).

4. **Eprover 1.9.1-001** has the second-lowest vc (2288) with a moderate average time (0.76s), indicating potential limitations in handling complex functions.

5. **Alt-Ergo 1.30** is fast on some functions (e.g., `kstrtobool` at 0.05s) but slow on others (e.g., `memmove` at 3.94s, `memchr` at 6.05s); overall it verifies 2645 vc at 0.90s on average.

6. **CVC3 2.4.1** verifies 2454 vc, slightly fewer than Z3, at a moderate average time (0.42s).

### Interpretation

The data reveals significant disparities in tool performance:

- **Z3 4.5.0** is the fastest on average (e.g., `memmove` at 0.30s), but it leaves 320 of the 2781 vc unverified.

- **CVC4 1.5** combines the highest verification count with a low average time, though individual entries are slower (e.g., `strncmp` at 2.32s).

- **Spass 3.9** and **Eprover 1.9.1-001** trail on both verification count and average time.

- **Alt-Ergo 1.30** verifies most conditions (2645) but has several multi-second entries (e.g., `strchr` at 4.57s).

- **CVC3 2.4.1** is mid-range: its average time (0.42s) is the third lowest, but its verification count (2454) is below that of CVC4, Alt-Ergo, and Z3.

This analysis underscores the importance of tool selection based on function complexity and performance requirements. In the TOTAL row, Z3 4.5.0 is the fastest prover on average, while CVC4 1.5 verifies the most conditions at only a slightly higher average time.
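
One way to make the tool-selection argument concrete is to ask which provers are not beaten on both metrics at once. The sketch below computes that Pareto set from the TOTAL row of the table above; it is an illustration rather than part of the original analysis, and the names `totals`, `dominates`, and `pareto` are hypothetical.

```python
# (vc, atime) pairs from the TOTAL row of the table above.
totals = {
    "Alt-Ergo 1.30": (2645, 0.90),
    "CVC3 2.4.1": (2454, 0.42),
    "CVC4 1.4": (2740, 0.61),
    "CVC4 1.5": (2761, 0.37),
    "Eprover 1.9.1-001": (2288, 0.76),
    "Spass 3.9": (1945, 1.72),
    "Z3 4.5.0": (2461, 0.22),
}


def dominates(a, b):
    """True if `a` verifies at least as many vc as `b`, is no slower,
    and differs from `b` on at least one of the two metrics."""
    return a[0] >= b[0] and a[1] <= b[1] and a != b


# Keep every prover that no other prover dominates.
pareto = sorted(
    name
    for name, score in totals.items()
    if not any(dominates(other, score) for other in totals.values())
)
print(pareto)  # ['CVC4 1.5', 'Z3 4.5.0']
```

On these totals only CVC4 1.5 and Z3 4.5.0 remain, so the choice between them depends on whether coverage or average time matters more.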
