## Scatter Plot with Trend Lines: AI Model Scaling Laws (Parameters vs. FLOPs)

### Overview

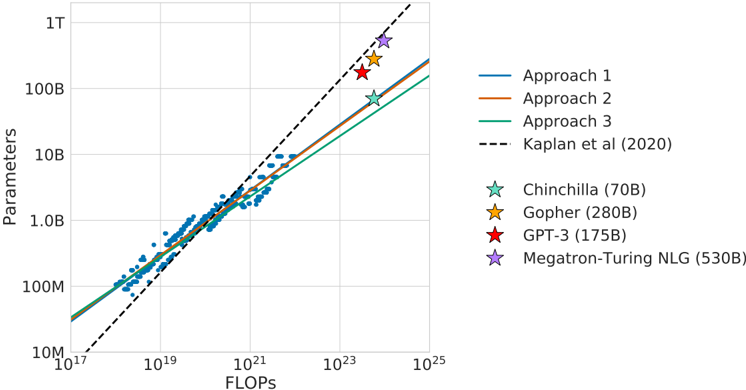

This image is a log-log scatter plot showing the relationship between the parameter count of large language models (LLMs) and their training compute, measured in floating-point operations (FLOPs). It compares three empirical scaling approaches ("Approach 1, 2, 3") against the earlier scaling law from Kaplan et al. (2020). Notable models are highlighted as star-shaped data points.

### Components/Axes

* **Chart Type:** Log-log scatter plot with overlaid trend lines.

* **X-Axis:** Labeled **"FLOPs"**. It uses a logarithmic scale with major tick marks at `10^17`, `10^19`, `10^21`, `10^23`, and `10^25`.

* **Y-Axis:** Labeled **"Parameters"**. It uses a logarithmic scale with major tick marks at `10M` (10 million), `100M`, `1.0B` (1 billion), `10B`, `100B`, and `1T` (1 trillion).

* **Legend (Right Side):**

    * **Lines:**

        * `Approach 1`: Solid blue line.

        * `Approach 2`: Solid orange line.

        * `Approach 3`: Solid green line.

        * `Kaplan et al (2020)`: Dashed black line.

    * **Star-Marked Data Points:**

        * `Chinchilla (70B)`: Teal star.

        * `Gopher (280B)`: Yellow star.

        * `GPT-3 (175B)`: Red star.

        * `Megatron-Turing NLG (530B)`: Purple star.

* **Data Points:** A dense cluster of small blue dots, primarily between `10^18` and `10^22` FLOPs and `100M` to `10B` parameters, representing a broader dataset of models.
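To work with these axes numerically, the tick labels first have to become plain numbers. The sketch below is one way to do that, assuming the suffixes `M`, `B`, and `T` mean 10^6, 10^9, and 10^12 as glossed above. `parse_count` is a hypothetical helper name introduced here, not something taken from the chart.

```python
import math

# Suffix multipliers, assuming the usual short-scale meaning of M, B, and T.
SUFFIXES = {"M": 1e6, "B": 1e9, "T": 1e12}

def parse_count(label: str) -> float:
    """Convert a tick label such as '10M' or '1.0B' to a number (hypothetical helper)."""
    return float(label[:-1]) * SUFFIXES[label[-1]]

y_ticks = ["10M", "100M", "1.0B", "10B", "100B", "1T"]
x_tick_exponents = [17, 19, 21, 23, 25]

# On a log axis, evenly spaced ticks differ by a constant factor, so their
# base-10 logarithms are evenly spaced.
y_positions = [math.log10(parse_count(t)) for t in y_ticks]
x_positions = [float(e) for e in x_tick_exponents]

print(y_positions)
print(x_positions)
```

Under these assumptions the y-axis ticks sit one decade apart and the x-axis ticks two decades apart, which is worth keeping in mind when judging slopes by eye.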

### Detailed Analysis

* **Trend Lines:** All four trend lines slope upward from left to right: the plotted parameter count increases with the FLOPs budget.

    * The **Kaplan et al. (2020)** dashed line has the steepest slope, meaning parameters grow faster with compute than under the other approaches.

    * **Approaches 1, 2, and 3** are solid lines with very similar slopes, slightly shallower than the Kaplan line. They are tightly grouped, with Approach 1 (blue) highest, followed by Approach 2 (orange) and then Approach 3 (green).

* **Star-Marked Models (Approximate Positions):**

    * **Chinchilla (70B):** Positioned near the `Approach 3` (green) line. Approximate coordinates: `~10^23 FLOPs`, `~70B Parameters`.

    * **GPT-3 (175B):** Positioned above all three solid approach lines and slightly below the Kaplan line. Approximate coordinates: `~3 x 10^23 FLOPs`, `~175B Parameters`.

    * **Gopher (280B):** Positioned above the solid lines and very close to the Kaplan line. Approximate coordinates: `~10^24 FLOPs`, `~280B Parameters`.

    * **Megatron-Turing NLG (530B):** Positioned highest on the chart, slightly above the Kaplan line. Approximate coordinates: `~2 x 10^24 FLOPs`, `~530B Parameters`.

* **Blue Data Points:** The cluster of smaller blue dots generally follows the trajectory of the solid trend lines (Approaches 1-3), with significant scatter, especially in the `10^20` to `10^22` FLOPs range.
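On log-log axes the slope of a line through two points is the exponent of the power law joining them. The sketch below shows that arithmetic, first on a synthetic power law and then on two of the approximate star positions listed above. The coordinates are rough read-offs, so the second result is only as good as those estimates, and a slope between two individual models is geometry, not a fitted scaling law. `loglog_slope` is a hypothetical helper name.

```python
import math

def loglog_slope(p1, p2):
    """Slope of the straight line through two (flops, params) points on log-log axes."""
    (c1, n1), (c2, n2) = p1, p2
    return (math.log10(n2) - math.log10(n1)) / (math.log10(c2) - math.log10(c1))

# Sanity check on a synthetic power law N = 2 * C**0.5: the slope is the exponent.
synthetic = [(c, 2.0 * c ** 0.5) for c in (1e18, 1e22)]
print(round(loglog_slope(*synthetic), 3))

# Approximate star positions as read off the chart (rough values, not exact data).
stars = {
    "Chinchilla": (1e23, 70e9),
    "GPT-3": (3e23, 175e9),
    "Gopher": (1e24, 280e9),
    "Megatron-Turing NLG": (2e24, 530e9),
}
print(round(loglog_slope(stars["Chinchilla"], stars["Megatron-Turing NLG"]), 2))
```

The same function could be applied to two points read off any one trend line to estimate that line's exponent.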

### Key Observations

1. **Divergence from Kaplan Law:** The three solid "Approach" lines consistently predict a **lower parameter count for a given FLOPs budget** compared to the Kaplan et al. (2020) dashed line, especially at higher compute scales (beyond `10^22` FLOPs).

2. **Model Placement:** The highlighted models (stars) do not uniformly follow one trend line.

    * Chinchilla aligns closely with Approach 3.

    * GPT-3 and Gopher fall between the solid lines and the Kaplan line.

    * Megatron-Turing NLG sits slightly above the Kaplan line.

3. **Scaling Efficiency:** The chart visually suggests that more recent models (like Chinchilla) may be following a different, potentially more compute-efficient scaling path (Approach 3) than the one proposed in the earlier Kaplan et al. work.
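The widening gap in observation 1 is what two power laws with different exponents produce. The sketch below illustrates this under loud assumptions: the exponents 0.73 and 0.50 are figures often quoted for Kaplan et al. (2020) and for Chinchilla-style analyses, not values read from this chart, and the anchor point where both lines agree is hypothetical.

```python
# Assumed exponents (not read from the chart): illustrative values only.
KAPLAN_EXPONENT = 0.73
APPROACH_EXPONENT = 0.50

# Hypothetical anchor where both lines are forced to agree.
ANCHOR_FLOPS = 1e19
ANCHOR_PARAMS = 100e6

def predicted_params(flops: float, exponent: float) -> float:
    """Power law through the anchor point: N = N0 * (C / C0) ** exponent."""
    return ANCHOR_PARAMS * (flops / ANCHOR_FLOPS) ** exponent

def kaplan_to_approach_ratio(flops: float) -> float:
    """How many times larger the Kaplan-style prediction is at a given budget."""
    return predicted_params(flops, KAPLAN_EXPONENT) / predicted_params(flops, APPROACH_EXPONENT)

for budget in (1e19, 1e21, 1e23, 1e25):
    print(f"{budget:.0e} FLOPs -> ratio {kaplan_to_approach_ratio(budget):.1f}")
```

The ratio is 1 at the anchor and grows with every decade of compute, which matches the visual impression that the lines separate most at the right-hand side of the chart.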

### Interpretation

This chart is a technical comparison of **scaling laws** for large language models. Scaling laws are formulas that predict how a model's performance (or in this case, its size) should change as you increase the computational resources (FLOPs) used to train it.
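As a hedged illustration, each straight line in a log-log plot of this kind can be written as a power law. The symbols $N_{\text{opt}}$ (parameter count), $C$ (training FLOPs), $k$, and $a$ are notation introduced here, not labels from the chart:

```latex
N_{\text{opt}}(C) = k \, C^{a}
\quad\Longleftrightarrow\quad
\log_{10} N_{\text{opt}} = \log_{10} k + a \, \log_{10} C
```

The exponent $a$ is the slope of the line on the plot, and $k$ sets its vertical offset.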

* **What the data suggests:** The plot shows that no single law describes the relationship between model size (parameters) and training compute (FLOPs). The "Kaplan et al. (2020)" line represents an earlier, influential hypothesis. The three solid "Approach" lines likely represent newer, empirically derived scaling laws that suggest a different trade-off: for the same compute budget, a smaller model than the Kaplan law predicts can reach a given capability level. This has major implications for cost, inference speed, and deployment.

* **How elements relate:** The blue scatter points provide the empirical foundation from which the trend lines are derived. The star-marked models serve as real-world benchmarks to test these theoretical lines. The fact that models like GPT-3 and Gopher lie between the lines indicates they were likely trained under paradigms that didn't strictly adhere to any single published scaling law, or that the laws are approximations.

* **Notable insight:** The position of **Chinchilla** is particularly significant. It sits almost exactly on the "Approach 3" line, which is the lowest of the three solid lines. This visually reinforces the key finding from the Chinchilla paper: that many large models were **over-parameterized** and could achieve the same performance with fewer parameters if trained on more data, following a more compute-optimal scaling path. This chart is essentially a visual argument for that more efficient scaling paradigm.
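The "fewer parameters, more data" trade-off can be sketched with the common rule of thumb that training costs about 6 FLOPs per parameter per token, so that compute is roughly 6 × parameters × tokens. That approximation, the budget, and the candidate model sizes below are all assumptions for illustration and do not come from the chart.

```python
FLOPS_PER_PARAM_PER_TOKEN = 6  # rule-of-thumb constant, an assumption here

def tokens_for_budget(flops: float, params: float) -> float:
    """Training tokens affordable at a fixed budget under C ~= 6 * N * D."""
    return flops / (FLOPS_PER_PARAM_PER_TOKEN * params)

budget = 1e24  # hypothetical compute budget in FLOPs
for params in (280e9, 140e9, 70e9):  # hypothetical model sizes
    tokens = tokens_for_budget(budget, params)
    print(f"{params / 1e9:.0f}B params -> {tokens / 1e9:.0f}B tokens")
```

At a fixed budget, halving the parameter count doubles the number of tokens that can be trained on, which is the lever a compute-optimal analysis tunes.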