
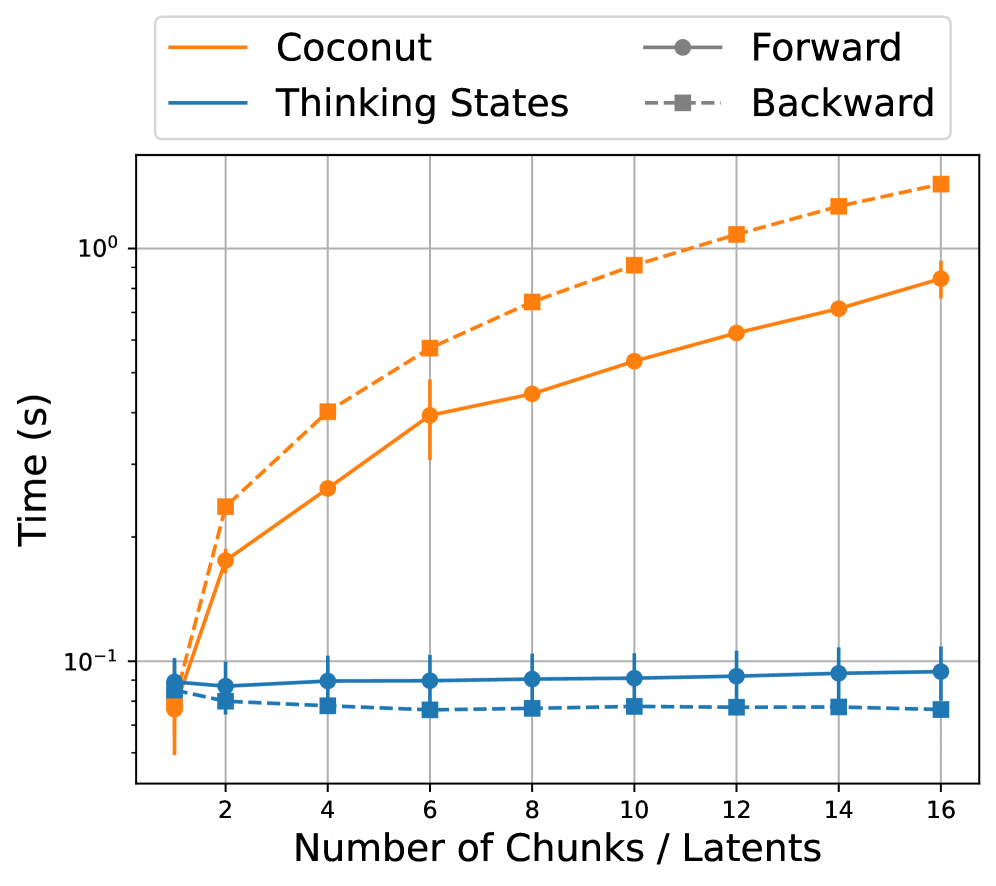

## Line Chart: Time vs. Number of Chunks / Latents

### Overview

This image presents a line chart comparing the time (in seconds) taken by four methods (Coconut, Thinking States, Forward, and Backward) as the number of chunks/latents increases from 1 to 16. The y-axis uses a logarithmic scale.

### Components/Axes

* **X-axis:** "Number of Chunks / Latents" ranging from 1 to 16. The axis is linearly scaled.

* **Y-axis:** "Time (s)" ranging from approximately 0.01 to 100 seconds. The axis is logarithmically scaled, with labeled ticks at 0.1 and 10.

* **Legend:** Located at the top-center of the chart.

  * Coconut (Orange solid line)

  * Thinking States (Blue solid line)

  * Forward (Gray solid line with circle markers)

  * Backward (Blue dashed line with square markers)
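
As a rough illustration of how the logarithmic y-axis places values, the sketch below maps a time onto a fraction of the axis height. It assumes the approximate 0.01 s to 100 s limits stated above. The function name `log_axis_fraction` is hypothetical and is not taken from any plotting library.

```python
import math

# Approximate y-axis limits read from the chart (assumption): 0.01 s to 100 s.
Y_MIN, Y_MAX = 0.01, 100.0


def log_axis_fraction(value: float, lo: float = Y_MIN, hi: float = Y_MAX) -> float:
    """Position of `value` on a log10 axis, from 0.0 (bottom) to 1.0 (top)."""
    return (math.log10(value) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))


# Number of powers of ten between the axis limits.
decades = math.log10(Y_MAX / Y_MIN)
print(f"decades spanned: {decades:.0f}")

# The two labeled ticks plus two sample readings taken from the chart.
for t in (0.1, 10.0, 0.05, 3.7):
    print(f"{t:>5g} s -> {log_axis_fraction(t):.2f} of the axis height")
```

Each factor of ten occupies the same vertical distance, a quarter of this axis. As a result, ~0.05 s and ~3.7 s sit less than half the axis height apart, even though they differ by a factor of about 74.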

### Detailed Analysis

The chart displays four lines, one per method, each plotting time against the number of chunks/latents.

* **Coconut (Orange):** The line slopes upward. Time rises from ~0.03 s at 1 chunk/latent to ~3.7 s at 16, an increase of roughly 0.25 s per additional chunk/latent (approximately linear growth).

  * At 1 chunk/latent: ~0.03 seconds

  * At 2 chunks/latents: ~0.2 seconds

  * At 4 chunks/latents: ~0.6 seconds

  * At 6 chunks/latents: ~1.2 seconds

  * At 8 chunks/latents: ~1.7 seconds

  * At 10 chunks/latents: ~2.2 seconds

  * At 12 chunks/latents: ~2.7 seconds

  * At 14 chunks/latents: ~3.2 seconds

  * At 16 chunks/latents: ~3.7 seconds

* **Thinking States (Blue):** The line is relatively flat, indicating minimal change in time with increasing chunks/latents.

  * At 1 chunk/latent: ~0.04 seconds

  * At 2 chunks/latents: ~0.05 seconds

  * At 4 chunks/latents: ~0.05 seconds

  * At 6 chunks/latents: ~0.05 seconds

  * At 8 chunks/latents: ~0.05 seconds

  * At 10 chunks/latents: ~0.05 seconds

  * At 12 chunks/latents: ~0.05 seconds

  * At 14 chunks/latents: ~0.05 seconds

  * At 16 chunks/latents: ~0.05 seconds

* **Forward (Gray):** The line is nearly horizontal, showing very little variation in time.

  * At 1 chunk/latent: ~0.06 seconds

  * At 2 chunks/latents: ~0.06 seconds

  * At 4 chunks/latents: ~0.06 seconds

  * At 6 chunks/latents: ~0.06 seconds

  * At 8 chunks/latents: ~0.06 seconds

  * At 10 chunks/latents: ~0.06 seconds

  * At 12 chunks/latents: ~0.06 seconds

  * At 14 chunks/latents: ~0.06 seconds

  * At 16 chunks/latents: ~0.06 seconds

* **Backward (Blue dashed):** The line is also relatively flat, but slightly below the "Thinking States" line.

  * At 1 chunk/latent: ~0.03 seconds

  * At 2 chunks/latents: ~0.04 seconds

  * At 4 chunks/latents: ~0.04 seconds

  * At 6 chunks/latents: ~0.04 seconds

  * At 8 chunks/latents: ~0.04 seconds

  * At 10 chunks/latents: ~0.04 seconds

  * At 12 chunks/latents: ~0.04 seconds

  * At 14 chunks/latents: ~0.04 seconds

  * At 16 chunks/latents: ~0.04 seconds
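
To put numbers on "slopes upward" versus "flat", the readings listed above can be tabulated and summarized. This is a minimal sketch. The values are the approximate chart readings from this section, not the underlying measurements, and the names `TIMES` and `ols_slope` are hypothetical.

```python
# Approximate readings transcribed from the chart (seconds); eyeballed, not source data.
CHUNKS = [1, 2, 4, 6, 8, 10, 12, 14, 16]
TIMES = {
    "Coconut": [0.03, 0.2, 0.6, 1.2, 1.7, 2.2, 2.7, 3.2, 3.7],
    "Thinking States": [0.04, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05],
    "Forward": [0.06] * 9,
    "Backward": [0.03, 0.04, 0.04, 0.04, 0.04, 0.04, 0.04, 0.04, 0.04],
}


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs (seconds per chunk/latent)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Spread = largest minus smallest reading over the whole 1-16 range.
for method, ys in TIMES.items():
    print(f"{method:<16} spread: {max(ys) - min(ys):.2f} s")

print(f"Coconut slope: {ols_slope(CHUNKS, TIMES['Coconut']):.2f} s per chunk/latent")
```

On these readings, Coconut's fitted slope comes out at about 0.25 s per chunk/latent. Each of the other three methods varies by at most about 0.01 s across the whole range.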

### Key Observations

* The "Coconut" method exhibits a clear positive correlation between the number of chunks/latents and the time taken.

* "Thinking States", "Forward", and "Backward" methods show minimal sensitivity to the number of chunks/latents, maintaining relatively constant execution times.

* The logarithmic y-axis compresses large differences. Even so, the gap between "Coconut" and the other methods widens visibly, spanning nearly two orders of magnitude by 16 chunks/latents.

* "Coconut" is comparable to or faster than the other methods at 1 chunk/latent (~0.03 s). From 2 chunks/latents onward it is the slowest, and at 16 it takes roughly 60 to 90 times as long as the other methods.
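
The ratio claims above can be checked against the approximate readings. The sketch below uses the eyeballed values from the Detailed Analysis list and a hypothetical helper named `slowdown`. It computes how many times longer Coconut takes than Thinking States at each point, and how many decades of the log axis that gap spans.

```python
import math

# Approximate readings (seconds) from the Detailed Analysis list; not source data.
CHUNKS = [1, 2, 4, 6, 8, 10, 12, 14, 16]
COCONUT = [0.03, 0.2, 0.6, 1.2, 1.7, 2.2, 2.7, 3.2, 3.7]
THINKING_STATES = [0.04, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05]


def slowdown(slow, fast):
    """Element-wise ratio slow/fast: how many times longer `slow` takes than `fast`."""
    return [s / f for s, f in zip(slow, fast)]


ratios = slowdown(COCONUT, THINKING_STATES)
for n, r in zip(CHUNKS, ratios):
    # log10 of the ratio is the vertical gap between the two lines, in decades of the log axis.
    print(f"{n:>2} chunks/latents: ratio {r:5.1f} ({math.log10(r):+.2f} decades)")
```

A ratio below 1 at 1 chunk/latent means Coconut is faster there. The ratio then climbs steadily to roughly 74 at 16, which is just under two decades on the log axis.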

### Interpretation

The data suggests that the computational cost of "Coconut" scales with the number of chunks/latents, while the other methods ("Thinking States", "Forward", and "Backward") are largely independent of this parameter. This could indicate that "Coconut" performs additional work for each chunk/latent, such as iterative refinement or sequential processing in which each chunk/latent depends on the previous one. The flat lines for the other methods suggest they may use a more efficient algorithm or have a roughly fixed computational cost regardless of the number of chunks/latents. The growth for "Coconut" is approximately linear rather than exponential: from about 6 chunks/latents onward, each additional chunk/latent adds roughly 0.25 s. Exponential growth would instead appear as a straight line on the logarithmic y-axis. Even linear growth makes "Coconut" less suitable for large numbers of chunks/latents. The "Backward" method is slightly faster than "Thinking States" and "Forward", but the difference is minimal (about 0.01 to 0.02 s). This data could inform the choice of method based on the expected number of chunks/latents and the performance requirements.
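
One way to see that Coconut's growth is closer to linear than exponential is to examine each pair of neighboring readings. For each pair, the sketch compares the time added per chunk/latent with the multiplicative factor per chunk/latent. Exponential growth keeps the factor constant. Linear growth keeps the added time roughly constant, so the factor drifts toward 1. This is a minimal sketch on the approximate readings, using a hypothetical helper named `per_chunk_rates`.

```python
# Approximate Coconut readings (seconds) from the chart; not source data.
CHUNKS = [1, 2, 4, 6, 8, 10, 12, 14, 16]
COCONUT = [0.03, 0.2, 0.6, 1.2, 1.7, 2.2, 2.7, 3.2, 3.7]


def per_chunk_rates(ns, ts):
    """For consecutive readings, return (seconds added per chunk, growth factor per chunk)."""
    rates = []
    for n0, t0, n1, t1 in zip(ns, ts, ns[1:], ts[1:]):
        step = n1 - n0
        added = (t1 - t0) / step          # constant under linear growth t = a + b*n
        factor = (t1 / t0) ** (1 / step)  # constant under exponential growth t = a * b**n
        rates.append((added, factor))
    return rates


for (n0, n1), (added, factor) in zip(zip(CHUNKS, CHUNKS[1:]), per_chunk_rates(CHUNKS, COCONUT)):
    print(f"{n0:>2} -> {n1:>2}: +{added:.2f} s per chunk, x{factor:.2f} per chunk")
```

On these readings, the factor falls steadily, from above 6 on the first step to under 1.1 on the last. Meanwhile, the added time settles near 0.25 s per chunk/latent from 6 onward.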