## Line Chart: Training Loss Comparison Across Sequential Tasks

### Overview

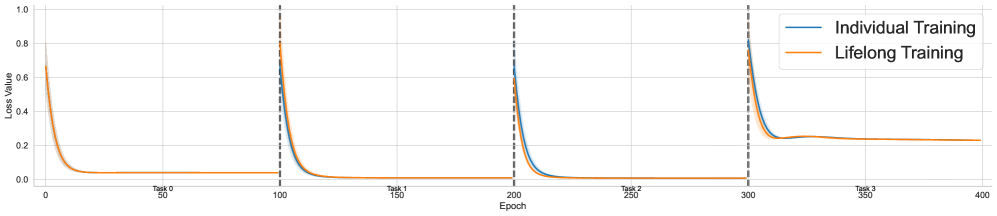

The image displays a line chart comparing the training loss over 400 epochs for two different machine learning training paradigms: "Individual Training" and "Lifelong Training." The chart is segmented into four distinct phases or tasks (Task 0, Task 1, Task 2, Task 3), separated by vertical dashed lines. The primary purpose is to visualize and compare how the loss evolves for each method as the model encounters new tasks sequentially.

### Components/Axes

* **Chart Type:** Line chart with two data series.

* **X-Axis:** Labeled "Epoch." It runs from 0 to 400 with major tick marks every 50 epochs (0, 50, 100, 150, 200, 250, 300, 350, 400).

* **Y-Axis:** Labeled "Loss Value." It runs from 0.0 to 1.0 with major tick marks every 0.2 units (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **Legend:** Positioned in the top-right corner of the chart area.

    * **Blue Line:** "Individual Training"

    * **Orange Line:** "Lifelong Training"

* **Task Segmentation:** Vertical, gray, dashed lines are placed at epochs 100, 200, and 300, dividing the chart into four equal segments. Text labels are placed at the bottom of each segment:

    * "Task 0" (Epochs 0-100)

    * "Task 1" (Epochs 100-200)

    * "Task 2" (Epochs 200-300)

    * "Task 3" (Epochs 300-400)
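The segmentation described above can be sketched as a simple epoch-to-task mapping. This is a minimal illustration, not code from the chart's source: the names `EPOCHS_PER_TASK`, `NUM_TASKS`, and `task_for_epoch` are hypothetical, and it assumes a boundary epoch (100, 200, 300) belongs to the task that starts there.

```python
# Assumed layout from the description: 400 epochs, four equal tasks.
EPOCHS_PER_TASK = 100
NUM_TASKS = 4

# Epochs at which the vertical dashed lines are drawn.
boundaries = [EPOCHS_PER_TASK * k for k in range(1, NUM_TASKS)]


def task_for_epoch(epoch: int) -> int:
    """Return the index (0-3) of the task active at `epoch`.

    Assumption: a boundary epoch belongs to the task that starts there.
    Epoch 400 is the right edge of the axis and is clamped to the last task.
    """
    if not 0 <= epoch <= EPOCHS_PER_TASK * NUM_TASKS:
        raise ValueError(f"epoch {epoch} is outside the plotted range")
    return min(epoch // EPOCHS_PER_TASK, NUM_TASKS - 1)


print(boundaries)
print([task_for_epoch(e) for e in (0, 99, 100, 250, 400)])
```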

### Detailed Analysis

The chart shows the loss trajectory for both training methods across four sequential tasks. The general pattern for each task segment is a sharp initial decrease in loss followed by a plateau.

**Task 0 (Epochs 0-100):**

* **Trend:** Both lines start at a high loss (approximately 0.65-0.70) and decrease rapidly, converging to a very low loss value near 0.0 by epoch 50. They remain nearly identical and flat for the remainder of the task.

* **Data Points (Approximate):**

    * Start (Epoch 0): Loss ~0.68 (Individual), ~0.65 (Lifelong).

    * End (Epoch 100): Loss ~0.02 for both.

**Task 1 (Epochs 100-200):**

* **Trend:** At the start of Task 1 (epoch 100), both lines spike upward sharply to a loss of approximately 0.75-0.80. They then decrease rapidly again, converging to a near-zero loss by epoch 150 and remaining flat until epoch 200. The lines are virtually indistinguishable.

* **Data Points (Approximate):**

    * Start (Epoch 100): Loss ~0.78 (Individual), ~0.75 (Lifelong).

    * End (Epoch 200): Loss ~0.01 for both.

**Task 2 (Epochs 200-300):**

* **Trend:** A similar pattern occurs. At epoch 200, both lines spike to a loss of approximately 0.70. They decrease rapidly, but a slight separation becomes visible. The "Individual Training" (blue) line appears to descend slightly faster and reaches a marginally lower plateau than the "Lifelong Training" (orange) line.

* **Data Points (Approximate):**

    * Start (Epoch 200): Loss ~0.70 (Individual), ~0.68 (Lifelong).

    * End (Epoch 300): Loss ~0.01 (Individual), ~0.02 (Lifelong).

**Task 3 (Epochs 300-400):**

* **Trend:** This task shows the largest divergence. At epoch 300, both lines spike to their highest point on the chart, approximately 0.85-0.90. Both then decrease but plateau well above zero: the "Individual Training" (blue) line settles around 0.22, and the "Lifelong Training" (orange) line settles slightly higher, around 0.25. Both lines show a very slight upward drift in the final 50 epochs.

* **Data Points (Approximate):**

    * Start (Epoch 300): Loss ~0.88 (Individual), ~0.85 (Lifelong).

    * End (Epoch 400): Loss ~0.22 (Individual), ~0.25 (Lifelong).
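To make the per-task comparison concrete, the approximate readings listed above can be collected and differenced. This is a small sketch: the values are the visual estimates from this section, not exact data, and the names `readings` and `final_loss_gaps` are hypothetical.

```python
# Approximate (start, end) loss per task, as estimated from the chart above.
readings = {
    "Individual": [(0.68, 0.02), (0.78, 0.01), (0.70, 0.01), (0.88, 0.22)],
    "Lifelong":   [(0.65, 0.02), (0.75, 0.01), (0.68, 0.02), (0.85, 0.25)],
}


def final_loss_gaps(data):
    """Return Lifelong minus Individual end-of-task loss for each task."""
    pairs = zip(data["Individual"], data["Lifelong"])
    # Round to two decimals, since the readings are only that precise.
    return [round(life_end - ind_end, 2) for (_, ind_end), (_, life_end) in pairs]


gaps = final_loss_gaps(readings)
for task, gap in enumerate(gaps):
    print(f"Task {task}: final-loss gap = {gap:+.2f}")
```

With these estimates, the gap is zero for Tasks 0 and 1, about 0.01 for Task 2, and about 0.03 for Task 3.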

### Key Observations

1. **Loss Spikes at Task Boundaries (Possible Interference):** Both methods show a sharp loss spike at the start of each new task (epochs 100, 200, 300). Because the chart plots training loss on the task currently being learned, these spikes show that the model starts each new task at a high loss. They do not by themselves show that performance on the previous task has degraded. Confirming catastrophic forgetting would require evaluating the earlier tasks after each switch.

2. **Convergence Speed:** In Tasks 0, 1, and 2, both methods converge to a near-zero loss very quickly (within ~50 epochs of starting the task).

3. **Divergence in Later Tasks:** The two methods are virtually indistinguishable in Tasks 0 and 1. A marginal gap (about 0.01 in final loss) appears in Task 2, and the gap widens to about 0.03 in Task 3, where "Individual Training" ends near 0.22 and "Lifelong Training" near 0.25.

4. **Final Task Difficulty:** Task 3 appears to be the most challenging, as evidenced by the highest initial loss spike and the highest final plateau loss for both methods. The slight upward drift in loss at the end of Task 3 suggests potential training instability or that the model has reached its capacity for this task.
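The convergence-speed observation can be checked numerically once per-epoch losses are available. The sketch below is a minimal example under stated assumptions: the function name `epochs_to_converge` and the 0.05 threshold are hypothetical choices, and the curve is a synthetic exponential decay standing in for real data, not the chart's values.

```python
import math


def epochs_to_converge(task_losses, threshold=0.05):
    """Return the first within-task epoch whose loss is below `threshold`.

    Returns None if the loss never drops below the threshold.
    """
    for epoch, loss in enumerate(task_losses):
        if loss < threshold:
            return epoch
    return None


# Synthetic stand-in curve (NOT the chart's data): exponential decay from 0.7.
synthetic_task = [0.7 * math.exp(-epoch / 10) for epoch in range(100)]

converged_at = epochs_to_converge(synthetic_task)
print(converged_at)
```

Applied to a Task 3 curve that plateaus above 0.2, the same function would return `None` at this threshold, which is one simple way to flag a task that never reaches the near-zero regime.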

### Interpretation

This chart illustrates a core challenge in continual or lifelong learning: balancing the acquisition of new knowledge with the retention of old knowledge.

* **What the data suggests:** The "Individual Training" method, which likely involves training a separate model or resetting the model for each task, ends every task at a final loss equal to or slightly below that of "Lifelong Training." The "Lifelong Training" method, which uses a single model to learn tasks sequentially, matches it on Tasks 0 and 1, trails marginally on Task 2, and ends the fourth task (Task 3) at a measurably higher final loss (about 0.25 versus 0.22).

* **Relationship between elements:** The vertical dashed lines mark the task switches, and each switch coincides with a loss spike as training moves to a new task. The legend allows us to attribute the slightly worse final performance in Task 3 specifically to the lifelong learning approach.

* **Notable anomaly/trend:** The key anomaly is the **divergence in Task 3**. This suggests that the lifelong model's capacity to mitigate forgetting or integrate new knowledge without interference may be reaching its limit by the fourth task. The accumulated knowledge from Tasks 0-2 might be interfering with the learning of Task 3, or the model's parameters may be becoming "saturated."

* **Implication:** The data suggest that while lifelong learning can be effective for a small number of sequential tasks, its performance may degrade as the sequence grows longer, although four tasks is a thin basis for that conclusion. This points to the need for specialized techniques (e.g., replay buffers, parameter isolation, meta-learning) in lifelong learning systems to maintain performance over extended task sequences. The "Individual Training" line serves as a reference baseline: the loss each task reaches when, as assumed above, it is learned in isolation.
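The chart tracks only training loss on the current task, so retention of earlier tasks would have to be measured separately. One common approach is to evaluate every earlier task after each training stage and compare the final loss with the best loss seen before. The sketch below assumes such an evaluation matrix exists. The function name `forgetting_per_task` and the numbers are hypothetical placeholders, not values from the chart.

```python
def forgetting_per_task(eval_loss):
    """Compute a loss-based forgetting score for every task except the last.

    eval_loss[i][j] is the loss on task j measured after training through
    task i (only j <= i is present). The score for task j is its final loss
    minus the lowest loss observed on it at any earlier stage. Positive
    values mean the task got worse over time.
    """
    last = len(eval_loss) - 1
    scores = []
    for j in range(last):
        best_earlier = min(eval_loss[i][j] for i in range(j, last))
        scores.append(eval_loss[last][j] - best_earlier)
    return scores


# Placeholder numbers for illustration only; NOT read from the chart.
example = [
    [0.02],              # after Task 0: loss on Task 0
    [0.10, 0.01],        # after Task 1: loss on Tasks 0 and 1
    [0.15, 0.05, 0.02],  # after Task 2: loss on Tasks 0, 1, and 2
]

scores = forgetting_per_task(example)
print([round(s, 2) for s in scores])
```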