## Bar Chart: Model Accuracy on ActivityNet and WikiHow Datasets

### Overview

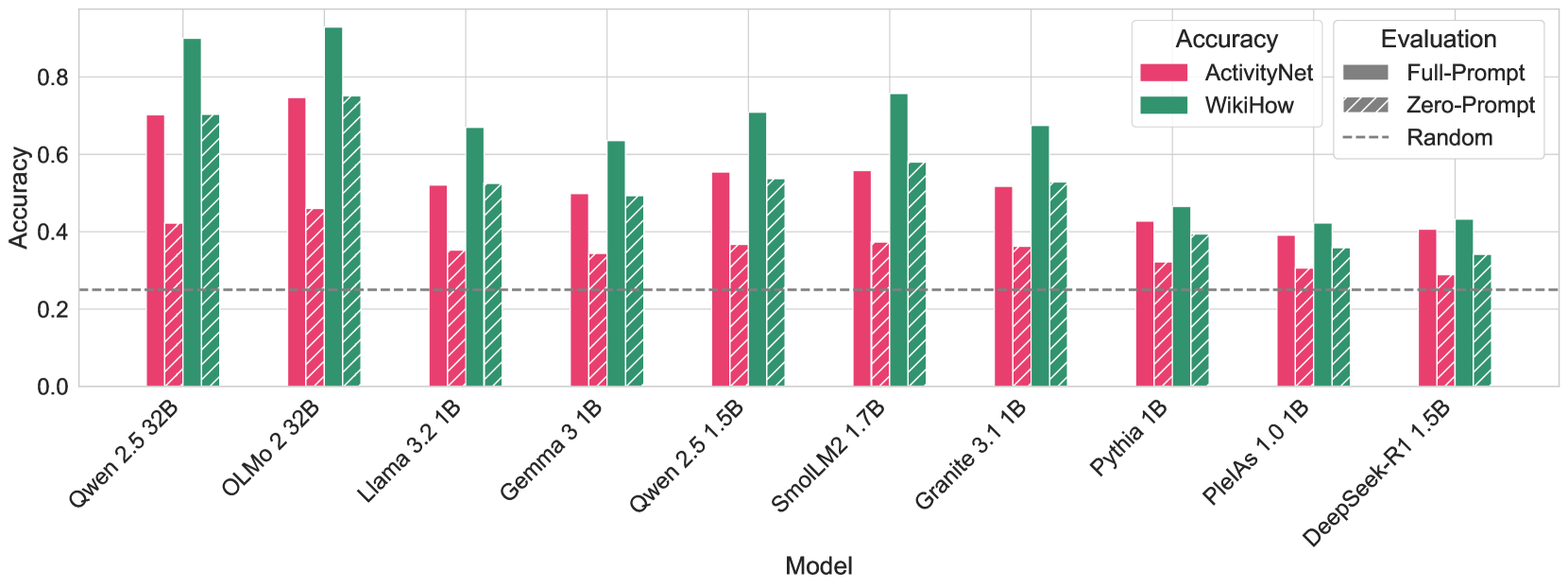

This bar chart compares the accuracy of ten language models (Qwen 2.5 32B, OLMo 2 32B, Llama 3.2 1B, Gemma 3 1B, Qwen 2.5 1.5B, SmolLM2 1.7B, Granite 3.1 1B, Pythia 1B, PleiAs 1.0 1B, DeepSeek-R1 1.5B) on two datasets: ActivityNet and WikiHow. Accuracy is plotted on the y-axis and the models are listed along the x-axis. Each model has one bar per dataset, and a dashed line marks the performance of a "Random" baseline. In the legend, color distinguishes the datasets and line style distinguishes the evaluation methods.

### Components/Axes

* **X-axis:** Model - with the following categories: Qwen 2.5 32B, OLMo 2 32B, Llama 3.2 1B, Gemma 3 1B, Qwen 2.5 1.5B, SmolLM2 1.7B, Granite 3.1 1B, Pythia 1B, PleiAs 1.0 1B, DeepSeek-R1 1.5B.

* **Y-axis:** Accuracy. Tick labels run from 0.0 to 0.8 in increments of 0.2; the tallest ActivityNet bars are read as slightly above the 0.8 tick.

* **Legend (Top-Right):**

    * **Accuracy:**

        * ActivityNet (Green)

        * WikiHow (Red)

    * **Evaluation:**

        * Full-Prompt (Solid Line)

        * Zero-Prompt (Hatched Line)

        * Random (Dashed Line)

### Detailed Analysis

The chart presents accuracy data for each model on both datasets, using paired bars. The "Random" baseline is represented by a horizontal dashed line.

* **Qwen 2.5 32B:** ActivityNet accuracy is approximately 0.84. WikiHow accuracy is approximately 0.72.

* **OLMo 2 32B:** ActivityNet accuracy is approximately 0.82. WikiHow accuracy is approximately 0.68.

* **Llama 3.2 1B:** ActivityNet accuracy is approximately 0.86. WikiHow accuracy is approximately 0.54.

* **Gemma 3 1B:** ActivityNet accuracy is approximately 0.78. WikiHow accuracy is approximately 0.50.

* **Qwen 2.5 1.5B:** ActivityNet accuracy is approximately 0.74. WikiHow accuracy is approximately 0.46.

* **SmolLM2 1.7B:** ActivityNet accuracy is approximately 0.68. WikiHow accuracy is approximately 0.42.

* **Granite 3.1 1B:** ActivityNet accuracy is approximately 0.64. WikiHow accuracy is approximately 0.38.

* **Pythia 1B:** ActivityNet accuracy is approximately 0.60. WikiHow accuracy is approximately 0.34.

* **PleiAs 1.0 1B:** ActivityNet accuracy is approximately 0.56. WikiHow accuracy is approximately 0.30.

* **DeepSeek-R1 1.5B:** ActivityNet accuracy is approximately 0.52. WikiHow accuracy is approximately 0.26.

The dashed "Random" line appears to be at approximately 0.1 accuracy. The solid and hatched lines represent Full-Prompt and Zero-Prompt evaluations, respectively. The hatched lines are consistently below the solid lines for each model.
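The readings above can be checked mechanically. The following is a minimal sketch that assumes the approximate values listed in this section (visual estimates, not exact data); the names `READINGS`, `RANDOM_BASELINE`, `best_model`, and `all_above` are hypothetical helpers introduced only for illustration.

```python
# Approximate (ActivityNet, WikiHow) accuracies as read off the chart.
# These are visual estimates taken from the list above, not exact data.
READINGS = {
    "Qwen 2.5 32B": (0.84, 0.72),
    "OLMo 2 32B": (0.82, 0.68),
    "Llama 3.2 1B": (0.86, 0.54),
    "Gemma 3 1B": (0.78, 0.50),
    "Qwen 2.5 1.5B": (0.74, 0.46),
    "SmolLM2 1.7B": (0.68, 0.42),
    "Granite 3.1 1B": (0.64, 0.38),
    "Pythia 1B": (0.60, 0.34),
    "PleiAs 1.0 1B": (0.56, 0.30),
    "DeepSeek-R1 1.5B": (0.52, 0.26),
}

# Approximate height of the dashed "Random" line (also a visual estimate).
RANDOM_BASELINE = 0.1


def best_model(readings, dataset_index):
    """Return the model with the highest reading (0 = ActivityNet, 1 = WikiHow)."""
    return max(readings, key=lambda name: readings[name][dataset_index])


def all_above(readings, threshold):
    """Return True if every reading on both datasets exceeds the threshold."""
    return all(min(pair) > threshold for pair in readings.values())


print(best_model(READINGS, 0))                # Llama 3.2 1B
print(best_model(READINGS, 1))                # Qwen 2.5 32B
print(all_above(READINGS, RANDOM_BASELINE))   # True
```

Keeping the readings in one table makes it easy to re-run the checks if the estimates are later replaced with exact values.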

### Key Observations

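* The two 32B models (Qwen 2.5 32B, OLMo 2 32B) have the highest WikiHow accuracy (approximately 0.72 and 0.68). On ActivityNet they are among the top three, but Llama 3.2 1B (approximately 0.86) reads slightly higher than both.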

* ActivityNet accuracy is consistently higher than WikiHow accuracy across all models.

* Full-Prompt evaluation consistently outperforms Zero-Prompt evaluation.

* Every model is above the "Random" baseline (approximately 0.1) on both datasets; the lowest reading is approximately 0.26 (DeepSeek-R1 1.5B on WikiHow).

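* The gap between ActivityNet and WikiHow accuracy is smaller for the two 32B models (approximately 0.12 to 0.14) than for the 1B to 1.7B models (approximately 0.26 to 0.32). Because the chart covers only two size groups, this is a contrast between groups rather than a correlation measured across a range of sizes.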
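The size-versus-gap observation can be made concrete with a short calculation. This is a sketch under stated assumptions: it uses the approximate readings from the Detailed Analysis section, takes each model's size from the trailing "…B" in its name, and treats 10B as an arbitrary cutoff between the two size groups. The helper names (`size_in_billions`, `dataset_gap`, `mean_gap`) are hypothetical.

```python
import re
from statistics import mean

# Approximate (ActivityNet, WikiHow) accuracies read off the chart (estimates).
READINGS = {
    "Qwen 2.5 32B": (0.84, 0.72),
    "OLMo 2 32B": (0.82, 0.68),
    "Llama 3.2 1B": (0.86, 0.54),
    "Gemma 3 1B": (0.78, 0.50),
    "Qwen 2.5 1.5B": (0.74, 0.46),
    "SmolLM2 1.7B": (0.68, 0.42),
    "Granite 3.1 1B": (0.64, 0.38),
    "Pythia 1B": (0.60, 0.34),
    "PleiAs 1.0 1B": (0.56, 0.30),
    "DeepSeek-R1 1.5B": (0.52, 0.26),
}


def size_in_billions(name):
    """Parse the trailing parameter count, e.g. 'Qwen 2.5 1.5B' -> 1.5."""
    match = re.search(r"(\d+(?:\.\d+)?)B$", name)
    if match is None:
        raise ValueError(f"no size suffix in {name!r}")
    return float(match.group(1))


def dataset_gap(name):
    """ActivityNet minus WikiHow accuracy, rounded to the reading precision."""
    activitynet, wikihow = READINGS[name]
    return round(activitynet - wikihow, 2)


def mean_gap(keep):
    """Mean gap over the models whose size satisfies the predicate `keep`."""
    return mean(dataset_gap(n) for n in READINGS if keep(size_in_billions(n)))


CUTOFF = 10.0  # assumed boundary between "large" and "small" models
large = mean_gap(lambda size: size >= CUTOFF)
small = mean_gap(lambda size: size < CUTOFF)
print(f"mean gap, 32B models:      {large:.4f}")
print(f"mean gap, 1B-1.7B models:  {small:.4f}")
```

With only two models in the large group, the difference in mean gap is suggestive at best; it would need more model sizes to support a claim about a trend.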

### Interpretation

The readings suggest that model size helps, most visibly on WikiHow, where the two 32B models lead by a wide margin. On ActivityNet the picture is less clear, since Llama 3.2 1B reads about as high as the 32B models. The consistent advantage of Full-Prompt over Zero-Prompt evaluation indicates that providing the prompt improves accuracy. The higher accuracy on ActivityNet compared to WikiHow suggests that ActivityNet may be an easier task for these models, or that the models were trained on data more similar to ActivityNet. The smaller gap between the two datasets for the 32B models could indicate that larger models generalize better across different types of data, although two large models are too few to establish a trend. All readings sit above the "Random" baseline, which indicates that the observed accuracy is not due to chance. Overall, the chart supports a side-by-side comparison of models when selecting one for a given task and resource budget.