## Bar Chart: Model Accuracy Comparison Across Evaluation Methods

### Overview

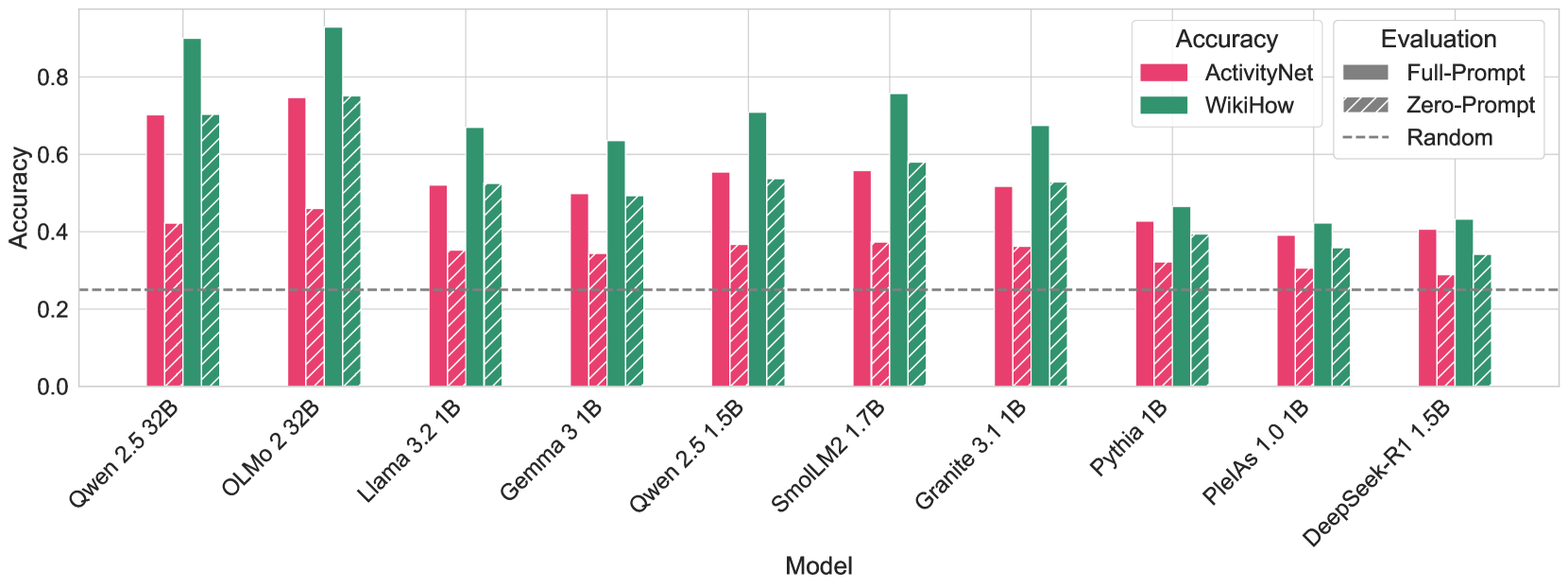

The chart compares the accuracy of various large language models (LLMs) under three evaluation methods: Full-Prompt, Zero-Prompt, and Random. Models are grouped by name on the x-axis, with accuracy (0–0.8) on the y-axis. Each model has three bars representing the evaluation methods, with distinct patterns/colors for clarity.

### Components/Axes

- **X-Axis (Model)**: Labeled "Model," listing 10 LLMs:

Qwen 2.5 32B, OLMo 2 32B, Llama 3.2 1B, Gemma 3 1B, Qwen 2.5 1.5B, SmolLM2 1.7B, Granite 3.1 1B, Pythia 1B, PleiAs 1.0 1B, DeepSeek-R1 1.5B.

- **Y-Axis (Accuracy)**: Labeled "Accuracy," scaled from 0.0 to 0.8 in increments of 0.2.

- **Legend**: Positioned top-right, with three entries:

- **Full-Prompt**: Solid gray bars.

- **Zero-Prompt**: Striped gray bars.

- **Random**: Dashed gray bars.

### Detailed Analysis

- **Qwen 2.5 32B**:

- Full-Prompt: ~0.7

- Zero-Prompt: ~0.4

- Random: ~0.3

- **OLMo 2 32B**:

- Full-Prompt: ~0.75

- Zero-Prompt: ~0.45

- Random: ~0.35

- **Llama 3.2 1B**:

- Full-Prompt: ~0.65

- Zero-Prompt: ~0.5

- Random: ~0.45

- **Gemma 3 1B**:

- Full-Prompt: ~0.6

- Zero-Prompt: ~0.5

- Random: ~0.4

- **Qwen 2.5 1.5B**:

- Full-Prompt: ~0.7

- Zero-Prompt: ~0.55

- Random: ~0.45

- **SmolLM2 1.7B**:

- Full-Prompt: ~0.75

- Zero-Prompt: ~0.55

- Random: ~0.45

- **Granite 3.1 1B**:

- Full-Prompt: ~0.6

- Zero-Prompt: ~0.5

- Random: ~0.4

- **Pythia 1B**:

- Full-Prompt: ~0.45

- Zero-Prompt: ~0.4

- Random: ~0.35

- **PleiAs 1.0 1B**:

- Full-Prompt: ~0.4

- Zero-Prompt: ~0.35

- Random: ~0.3

- **DeepSeek-R1 1.5B**:

- Full-Prompt: ~0.4

- Zero-Prompt: ~0.35

- Random: ~0.3

### Key Observations

1. **Full-Prompt Dominance**: Full-Prompt consistently outperforms Zero-Prompt and Random across all models, with accuracies ranging from ~0.4 (PleiAs) to ~0.75 (SmolLM2).

2. **Zero-Prompt Variability**: Zero-Prompt shows moderate performance, with accuracies between ~0.35 (PleiAs) and ~0.55 (Qwen 2.5 1.5B).

3. **Random Baseline**: Random evaluations are uniformly the lowest, clustering around ~0.3–0.45.

4. **Model-Specific Trends**: Larger models (e.g., Qwen 2.5 32B, OLMo 2 32B) generally achieve higher accuracies than smaller models (e.g., Pythia 1B, PleiAs 1.0 1B).

### Interpretation

The data demonstrates that **explicit prompting (Full-Prompt)** significantly improves model accuracy compared to **implicit prompting (Zero-Prompt)** and **random evaluations**. This suggests that structured instructions enhance model performance, likely by reducing ambiguity in task interpretation. Larger models (e.g., 32B parameters) outperform smaller ones, indicating scalability benefits. However, the gap between Full-Prompt and Zero-Prompt narrows for smaller models (e.g., Pythia 1B), suggesting they may be less sensitive to prompting style. The Random method’s consistent underperformance underscores the necessity of structured evaluations for reliable benchmarking.

### Spatial Grounding & Trend Verification

- **Legend Placement**: Top-right ensures clear association between colors/patterns and evaluation methods.

- **Bar Order**: Full-Prompt (solid) > Zero-Prompt (striped) > Random (dashed) aligns with legend order.

- **Trend Consistency**: Full-Prompt bars are taller than Zero-Prompt, which exceed Random across all models, confirming the expected hierarchy.

### Notable Outliers

- **SmolLM2 1.7B**: Highest Full-Prompt accuracy (~0.75), suggesting strong performance with explicit instructions.

- **PleiAs 1.0 1B**: Lowest Full-Prompt accuracy (~0.4), indicating potential architectural or training limitations.