
## Diagram: AI Training Pipeline with Verification and Feedback Loops

### Overview

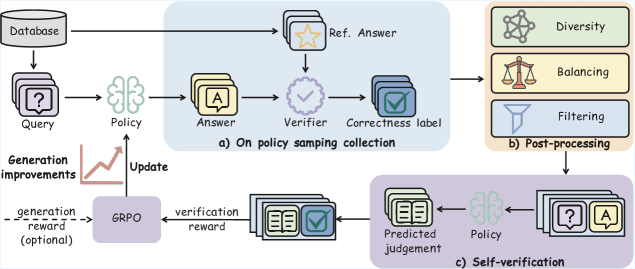

The figure is a flowchart of a multi-stage pipeline for training or refining an AI policy model. It combines answer generation, verification against reference answers, post-processing of the collected data, and a self-verification loop whose rewards feed back into the policy update. The diagram is divided into three interconnected stages, labeled a), b), and c).

### Components/Axes

The diagram is structured into three main shaded regions, each representing a distinct phase:

**a) On policy sampling collection (Top-left, light blue background):**

* **Components:** Database, Query, Policy, Answer, Ref. Answer, Verifier, Correctness label.

* **Flow:** A `Query` is drawn from a `Database` and fed into a `Policy` model. The `Policy` generates an `Answer`. This `Answer`, along with a `Ref. Answer` (Reference Answer) also sourced from the `Database`, is sent to a `Verifier`. The `Verifier` outputs a `Correctness label`.

* **Auxiliary Element:** A graph labeled `Generation improvements` shows an upward trend. It points to a `GRPO` module, indicating optional `generation reward` input. The `GRPO` module sends an `Update` signal back to the `Policy`.
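The stage a) flow can be sketched as a short Python loop. This is a minimal sketch under stated assumptions, not the authors' implementation: the `policy` is any callable from query to answer, the `Verifier` is an exact-match comparison after normalization, and the names `collect_on_policy`, `exact_match_verifier`, and `samples_per_query` are hypothetical. A real verifier would likely be more elaborate than string matching.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Sample:
    query: str
    answer: str
    correct: bool  # the "Correctness label" emitted by the verifier


def normalize(text: str) -> str:
    # Hypothetical normalization: trim whitespace and ignore case.
    return text.strip().lower()


def exact_match_verifier(answer: str, ref_answer: str) -> bool:
    # Stand-in Verifier: an answer is correct if it matches the reference after normalization.
    return normalize(answer) == normalize(ref_answer)


def collect_on_policy(
    database: List[Dict[str, str]],
    policy: Callable[[str], str],
    samples_per_query: int = 4,
) -> List[Sample]:
    # For every query in the database, sample several answers from the current policy
    # and label each one against the stored reference answer.
    collected: List[Sample] = []
    for record in database:
        for _ in range(samples_per_query):
            answer = policy(record["query"])
            label = exact_match_verifier(answer, record["ref_answer"])
            collected.append(Sample(record["query"], answer, label))
    return collected


# Toy usage: a fake "policy" that cycles through canned replies.
database = [{"query": "What is 2 + 2?", "ref_answer": "4"}]
replies = itertools.cycle(["4", " 4 ", "5", "four"])
samples = collect_on_policy(database, lambda query: next(replies))
print([s.correct for s in samples])  # [True, True, False, False]
```

Sampling several answers per query is a deliberate choice in this sketch: GRPO-style optimizers compare answers within a group drawn for the same query, so a single sample per query would leave nothing to compare.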

**b) Post-processing (Top-right, light yellow background):**

* **Components:** Diversity, Balancing, Filtering.

* **Flow:** The output from stage a) (presumably the collected data with correctness labels) flows into this stage. It is processed through three sequential modules: `Diversity`, `Balancing`, and `Filtering`.
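The three post-processing modules can be read as successive filters over the labeled samples. The sketch below is one hypothetical interpretation, not the pipeline's actual rules: `Diversity` is approximated by deduplicating identical (query, answer) pairs, `Balancing` by downsampling so correct and incorrect labels are equally frequent, and `Filtering` by dropping empty or overly long answers. The function names, the `max_chars` threshold, and the fixed random seed are all assumptions.

```python
import random
from collections import defaultdict
from typing import List, NamedTuple


class Sample(NamedTuple):
    query: str
    answer: str
    correct: bool


def diversity(samples: List[Sample]) -> List[Sample]:
    # Keep one copy of each (query, normalized answer) pair so repeats don't dominate.
    seen = set()
    kept = []
    for s in samples:
        key = (s.query, s.answer.strip().lower())
        if key not in seen:
            seen.add(key)
            kept.append(s)
    return kept


def balancing(samples: List[Sample], seed: int = 0) -> List[Sample]:
    # Downsample the majority label so correct and incorrect answers are equally frequent.
    # If one label is missing entirely, nothing can be balanced and the result is empty.
    by_label = defaultdict(list)
    for s in samples:
        by_label[s.correct].append(s)
    n = min(len(by_label[True]), len(by_label[False]))
    rng = random.Random(seed)
    balanced = rng.sample(by_label[True], n) + rng.sample(by_label[False], n)
    rng.shuffle(balanced)
    return balanced


def filtering(samples: List[Sample], max_chars: int = 2000) -> List[Sample]:
    # Stand-in quality filter: drop empty or overly long answers.
    return [s for s in samples if s.answer.strip() and len(s.answer) <= max_chars]


def post_process(samples: List[Sample]) -> List[Sample]:
    # Same order as the diagram: Diversity -> Balancing -> Filtering.
    return filtering(balancing(diversity(samples)))


raw = [
    Sample("q1", "4", True),
    Sample("q1", "4 ", True),  # duplicate of the first answer after normalization
    Sample("q1", "5", False),
    Sample("q2", "7", True),
    Sample("q2", "", False),   # empty answer, removed by filtering if it survives balancing
    Sample("q2", "8", False),
]
cleaned = post_process(raw)
print(len(diversity(raw)))  # 5
```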

**c) Self-verification (Bottom, light purple background):**

* **Components:** Predicted judgement, Policy, verification reward.

* **Flow:** The processed data from stage b) enters this loop. A `Predicted judgement` is made (likely by a model). This is fed into the `Policy` along with a `Query` and `Answer` pair. The output of this `Policy` generates a `verification reward`, which is sent to the `GRPO` module in stage a).
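One plausible reading of this loop is that the `Policy` is shown a (query, answer) pair, outputs a predicted judgement of whether the answer is correct, and earns a `verification reward` when that judgement agrees with the `Correctness label` from stage a). The sketch below assumes exactly that; the prompt wording, the `Verdict:` output format, and the function names are hypothetical.

```python
import re
from typing import Optional


def build_verification_prompt(query: str, answer: str) -> str:
    # Hypothetical prompt asking the policy to judge a (query, answer) pair.
    return (
        f"Question: {query}\n"
        f"Proposed answer: {answer}\n"
        "Is the proposed answer correct? Reply with 'Verdict: correct' or 'Verdict: incorrect'."
    )


def parse_judgement(text: str) -> Optional[bool]:
    # Extract the predicted judgement from the policy's output; None if malformed.
    match = re.search(r"verdict:\s*(correct|incorrect)", text, re.IGNORECASE)
    if match is None:
        return None
    return match.group(1).lower() == "correct"


def verification_reward(policy_output: str, correctness_label: bool) -> float:
    # 1.0 when the predicted judgement agrees with the verifier's label, else 0.0.
    predicted = parse_judgement(policy_output)
    if predicted is None:
        return 0.0  # unparseable judgements earn no reward
    return 1.0 if predicted == correctness_label else 0.0


print(verification_reward("Reasoning... Verdict: incorrect", correctness_label=False))  # 1.0
print(verification_reward("Verdict: correct", correctness_label=False))  # 0.0
print(verification_reward("I am not sure.", correctness_label=True))  # 0.0
```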

**Central Connecting Module:**

* **GRPO:** This module sits between stages a) and c). It receives a `generation reward (optional)` from the `Generation improvements` graph and a `verification reward` from stage c). It then sends an `Update` to the `Policy` in stage a), closing the main feedback loop.
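How the two reward inputs reach `GRPO` is not specified beyond the arrows. A minimal sketch, assuming each rollout is tagged as either a generation or a verification rollout and that the optional generation reward is simply a switch deciding whether generation rollouts contribute to the update. The `Rollout` type and `rewards_for_update` function are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Rollout:
    task: str      # "generation" or "verification" (hypothetical tagging)
    reward: float  # reward assigned to this rollout by its source


def rewards_for_update(rollouts: List[Rollout], use_generation_reward: bool) -> List[Rollout]:
    # Verification rollouts always contribute to the GRPO update; generation rollouts
    # contribute only when the optional generation reward is enabled.
    return [
        r
        for r in rollouts
        if r.task == "verification" or (use_generation_reward and r.task == "generation")
    ]


batch = [
    Rollout("generation", 1.0),
    Rollout("verification", 0.0),
    Rollout("verification", 1.0),
]
print(len(rewards_for_update(batch, use_generation_reward=False)))  # 2
print(len(rewards_for_update(batch, use_generation_reward=True)))   # 3
```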

### Detailed Analysis

The diagram describes an iterative training pipeline:

1. **Data Collection & Initial Verification (Stage a):** The core process begins with generating answers to queries and verifying them against ground-truth references. This creates a labeled dataset (`Correctness label`).

2. **Data Refinement (Stage b):** The collected data is post-processed for quality and balance. The `Diversity` module likely ensures varied examples, `Balancing` likely adjusts the class distribution (for example, the ratio of answers labeled correct to those labeled incorrect), and `Filtering` removes low-quality or noisy data.

3. **Self-Verification Loop (Stage c):** This is the distinctive part of the pipeline. The refined data is used to obtain a `Predicted judgement` of whether each answer is correct. This judgement, produced in conjunction with the `Policy`, yields a `verification reward`, which supplements the direct `generation reward` or, since that reward is optional, can stand in for it.

4. **Policy Update via GRPO:** The `GRPO` module (Group Relative Policy Optimization, a reinforcement-learning algorithm that scores each sampled answer relative to the other answers sampled for the same query instead of training a separate value model) aggregates rewards from two sources:

* **Generation Reward:** Optional; the diagram connects it to the `Generation improvements` graph.

* **Verification Reward:** Derived from the self-verification loop.

The aggregated reward signal is used to `Update` the main `Policy` model, aiming to improve its performance iteratively.
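The core of a GRPO update can be sketched in a few lines. In GRPO, a group of answers is sampled for each query, each answer's reward is normalized by the group's mean and standard deviation to form its advantage, and that advantage enters a PPO-style clipped objective. This simplified sketch uses a single per-answer probability ratio, whereas GRPO applies the ratio per token, and it omits GRPO's KL penalty toward a reference model; the function names, `eps`, and `clip_eps = 0.2` are illustrative choices.

```python
import math
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    # Normalize each reward against the mean and standard deviation of its own group
    # (all answers sampled for the same query).
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def clipped_surrogate(logp_new: float, logp_old: float, advantage: float, clip_eps: float = 0.2) -> float:
    # PPO-style clipped objective term for one sampled answer (to be maximized).
    ratio = math.exp(logp_new - logp_old)
    clipped = max(min(ratio, 1.0 + clip_eps), 1.0 - clip_eps)
    return min(ratio * advantage, clipped * advantage)


rewards = [1.0, 0.0, 0.0, 1.0]  # e.g. rewards of four answers sampled for one query
print([round(a, 3) for a in group_relative_advantages(rewards)])  # [1.0, -1.0, -1.0, 1.0]
```

A group in which every answer receives the same reward yields all-zero advantages and therefore contributes no learning signal.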

### Key Observations

* **Dual Reward Mechanism:** The system uses both a direct performance metric (`generation reward`) and an indirect, model-based metric (`verification reward`).

* **Closed-Loop System:** The pipeline is cyclical. The updated `Policy` generates new answers, which go through verification and post-processing, leading to new rewards and further updates.

* **Role of Reference Answers:** The `Ref. Answer` is crucial for the initial `Verifier` in stage a), providing a ground truth for creating the `Correctness label`.

* **Data-Centric Post-Processing:** The dedicated `Post-processing` stage emphasizes data quality (diversity, balance, cleanliness) before the data is used in the self-verification loop.

### Interpretation

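This diagram represents a reinforcement-learning training paradigm for generative AI models in which rewards come from automated checks (a `Verifier` with reference answers, plus the policy's own judgements) rather than from human preference labels. It goes beyond simple supervised learning on static correct answers.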

* **What it demonstrates:** The system aims to make the `Policy` more robust and reliable by learning not only from static correct answers but also from a dynamic verification process. The `Self-verification` loop (stage c) suggests that the model is also trained to judge the correctness of answers (its own or other models'), so the same `Policy` is rewarded both for generating answers and for verifying them.

* **How elements relate:** The `Database` supplies the queries and reference answers. The `Policy` is the model being improved. The `Verifier` and the `Predicted judgement` act as critics or reward sources. `GRPO` is the optimizer that turns these rewards into model updates, and post-processing is meant to ensure that the feedback is based on high-quality data.

* **Notable implications:** The inclusion of `Diversity` and `Balancing` suggests an effort to mitigate dataset bias, such as a skew toward mostly correct or mostly incorrect answers. The optional `generation reward` makes the training signal configurable. Because rewards come from an automated verifier and from self-verification rather than from human annotation, the architecture appears designed to scale with little human labeling. The likely goal is a policy that both produces correct answers and can reliably judge whether an answer is correct.