## Line Graph: NMSE vs Iterations for LLMSR and PiT-PO Algorithms

### Overview

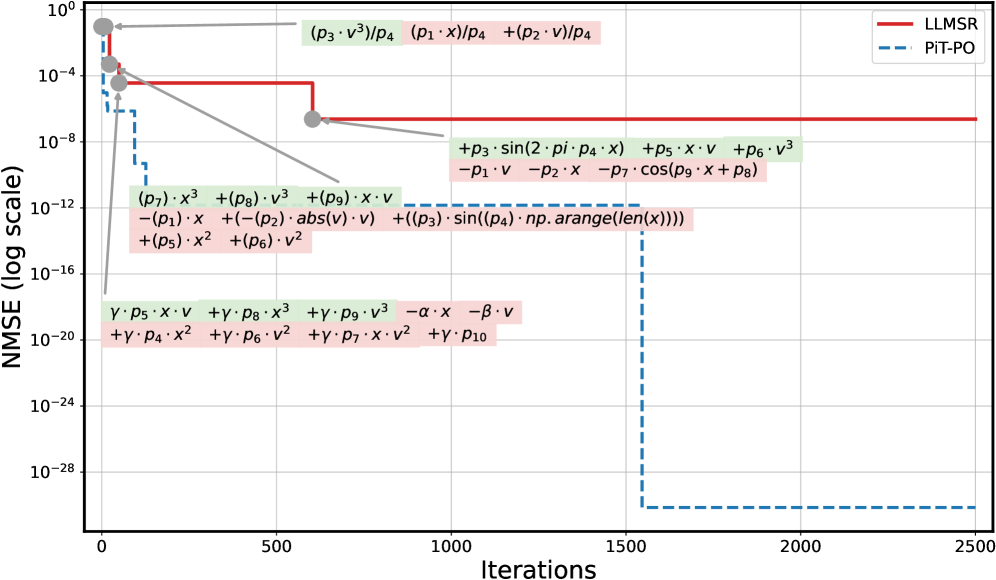

The image is a line graph with a logarithmic y-axis that compares the Normalized Mean Squared Error (NMSE) of two algorithms, LLMSR (red solid line) and PiT-PO (blue dashed line), over 2500 iterations. The y-axis spans 10⁰ to 10⁻²⁸, and the x-axis ranges from 0 to 2500 iterations. Text annotations containing mathematical expressions are overlaid on the graph and point to specific points on the two curves.
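
For reference, NMSE can be computed as in the minimal sketch below. The figure does not state which normalization it uses; this sketch assumes the mean squared error is divided by the variance of the target values, which is one common convention. The function name `nmse` is illustrative.

```python
def nmse(y_true, y_pred):
    """Mean squared error divided by the variance of the targets.

    Assumption: normalization by target variance. Other conventions
    (e.g. dividing by the mean of y_true squared) also exist.
    """
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(y_true)
    mean_true = sum(y_true) / n
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n
    variance = sum((t - mean_true) ** 2 for t in y_true) / n
    if variance == 0:
        raise ValueError("NMSE is undefined for constant targets")
    return mse / variance
```

Under this convention a perfect prediction gives 0, and always predicting the mean of the targets gives 1, so the 10⁰ tick at the top of the y-axis corresponds to a fit no better than a constant.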

---

### Components/Axes

- **Y-Axis**: "NMSE (log scale)" with values: 10⁰, 10⁻⁴, 10⁻⁸, 10⁻¹², 10⁻¹⁶, 10⁻²⁰, 10⁻²⁴, 10⁻²⁸.

- **X-Axis**: "Iterations" with markers at 0, 500, 1000, 1500, 2000, 2500.

- **Legend**: Top-right corner, associating:

  - Red solid line → LLMSR

  - Blue dashed line → PiT-PO

- **Annotations**: Colored text boxes with equations (green, pink, gray) pointing to specific points on the lines.
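
To make the axis layout concrete, the sketch below lists the y-axis tick values named above and computes where a value sits on a logarithmic axis. The function names and the 0-to-1 position convention (0 at the top tick, 1 at the bottom tick) are illustrative assumptions, not something taken from the figure.

```python
import math


def log_ticks(top_exp=0, bottom_exp=-28, step=4):
    """Tick values from 10**top_exp down to 10**bottom_exp, every `step` decades."""
    return [10.0 ** e for e in range(top_exp, bottom_exp - 1, -step)]


def axis_fraction(value, top_exp=0, bottom_exp=-28):
    """Position of `value` on the log axis: 0.0 at the top tick, 1.0 at the bottom."""
    return (top_exp - math.log10(value)) / (top_exp - bottom_exp)
```

Equal vertical distances on such an axis correspond to equal ratios, not equal differences: each tick interval here is a factor of 10⁴, and a value of 10⁻¹⁶ sits 16/28 of the way down the axis.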

---

### Detailed Analysis

1. **LLMSR (Red Line)**:

   - Starts at ~10⁻⁴ NMSE at iteration 0.

   - Drops sharply to ~10⁻⁸ by ~500 iterations.

   - Remains flat at ~10⁻⁸ for the remainder of the iterations.

   - Annotations:

     - Green: `(p₃·v³)/p₄`, `(p₁·x)/p₄`, `(p₂·v)/p₄` (near initial drop).

     - Pink: `-(p₁·x) + (-p₂·abs(v·v)) + (p₃·sin(p₄·np.arange(len(x))))` (near stabilization).

2. **PiT-PO (Blue Line)**:

   - Starts at ~10⁻⁴ NMSE at iteration 0.

   - Drops sharply to ~10⁻¹⁶ by ~500 iterations.

   - Remains flat at ~10⁻¹⁶ for the remainder of the iterations.

   - Annotations:

     - Green: `p₃·sin(2·π·p₄·x) + p₅·x·v + p₆·v³` (near stabilization).

     - Pink: `-p₁·v - p₂·x - p₇·cos(p₉·x + p₈)` (near stabilization).

     - Gray: `γ·p₅·x·v + γ·p₈·x³ + γ·p₉·v³ - α·x - β·v` (initial drop).
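
The annotated expressions can be read as ordinary functions of `x` and `v` with free parameters `pᵢ`. As a minimal sketch, the PiT-PO green annotation is transcribed below for scalar inputs. The function name, the dictionary keyed by subscript, and the parameter values are all hypothetical; the figure does not give parameter values or state what `x` and `v` represent.

```python
import math


def pitpo_green(x, v, p):
    """Evaluate p3*sin(2*pi*p4*x) + p5*x*v + p6*v**3 for scalar x and v.

    `p` maps each subscript used in the annotation (3, 4, 5, 6) to a value.
    """
    return (
        p[3] * math.sin(2 * math.pi * p[4] * x)
        + p[5] * x * v
        + p[6] * v ** 3
    )


# Hypothetical parameter values, chosen only to exercise the function.
params = {3: 0.5, 4: 1.0, 5: 2.0, 6: -1.0}
value = pitpo_green(0.25, 2.0, params)
```

With these made-up values the three terms contribute 0.5, 1.0, and -8.0, so `value` is -6.5. The same pattern applies to the other annotations by changing the expression body.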

---

### Key Observations

1. **Performance Gap**: PiT-PO achieves a significantly lower NMSE (~10⁻¹⁶) compared to LLMSR (~10⁻⁸) after ~500 iterations.

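2. **Convergence Speed**: Both curves reach their plateaus by ~500 iterations, so the graph shows no clear difference in convergence speed. The difference lies in the final error: PiT-PO’s plateau (~10⁻¹⁶) is about 10⁸ times lower than LLMSR’s (~10⁻⁸).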

3. **Anomalies**:

   - LLMSR shows a minor initial spike to ~10⁻² before dropping.

   - PiT-PO’s drop is abrupt and sustained, suggesting rapid error reduction.

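4. **Annotations**: The annotated expressions are written in terms of `x`, `v`, and parameters `pᵢ`. They likely represent candidate equations or model terms associated with each algorithm at the indicated points (e.g., `sin(2·π·p₄·x)` is a periodic term in PiT-PO’s green annotation).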
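
The size of the gap between the two plateaus can be checked with a few lines of arithmetic. The sketch below uses the approximate plateau values read from the graph (~10⁻⁸ and ~10⁻¹⁶); the variable names are illustrative. Because NMSE is a squared-error measure, the ratio of root-mean-square errors is the square root of the NMSE ratio.

```python
import math

nmse_llmsr = 1e-8    # approximate LLMSR plateau read from the graph
nmse_pitpo = 1e-16   # approximate PiT-PO plateau read from the graph

nmse_ratio = nmse_llmsr / nmse_pitpo          # about 1e8
orders_of_magnitude = math.log10(nmse_ratio)  # about 8
rms_ratio = math.sqrt(nmse_ratio)             # about 1e4
```

A 10⁸ gap in NMSE therefore corresponds to roughly a 10⁴ gap in root-mean-square error. It describes the final error levels and says nothing about how quickly either curve gets there.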

---

### Interpretation

- **Algorithm Efficacy**: PiT-PO outperforms LLMSR by orders of magnitude, indicating superior error minimization. The logarithmic scale emphasizes this disparity.

- **Convergence Dynamics**: Both curves drop sharply and flatten by ~500 iterations. PiT-PO’s drop is much deeper (to ~10⁻¹⁶), while LLMSR stalls at ~10⁻⁸ and shows no further improvement over the remaining ~2000 iterations.

- **Mathematical Insights**: The annotations for both algorithms include nonlinear terms. PiT-PO’s combine sine and cosine terms in `x`, a cross term (`x·v`), and cubic terms (`x³`, `v³`). LLMSR’s include linear and cubic terms divided by `p₄`, an absolute-value term (`abs(v·v)`), and a sinusoid indexed by sample position (`np.arange(len(x))`) rather than by `x` itself.

- **Practical Implications**: On this graph, PiT-PO is the better choice where very low final error matters, since it reaches ~10⁻¹⁶ in about the same number of iterations that LLMSR needs to reach ~10⁻⁸. LLMSR may still suffice where an NMSE of ~10⁻⁸ is acceptable.

---

### Spatial Grounding & Trend Verification

- **Legend**: Top-right, correctly aligned with line colors.

- **Trend Logic-Check**:

  - Red line (LLMSR) drops by ~500 iterations and then plateaus at ~10⁻⁸, consistent with the values listed under Detailed Analysis.

  - Blue line (PiT-PO) drops sharply by ~500 iterations and then plateaus at ~10⁻¹⁶, consistent with the values listed under Detailed Analysis.

- **Component Isolation**:

  - **Header**: Legend and axis labels.

  - **Main Chart**: Two lines with annotations.

  - **Footer**: No additional elements.

---

### Content Details

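- **Data Points**:

  - LLMSR: Initial ~10⁻⁴ → ~10⁻⁸ (500 iters) → flat.

  - PiT-PO: Initial ~10⁻⁴ → ~10⁻¹⁶ (500 iters) → flat.

- **Annotations**:

  - Green: Linear and cubic terms divided by `p₄` (LLMSR); a periodic (`sin`) term plus cross and cubic terms (PiT-PO).

  - Pink: Linear, absolute-value, and index-based `sin` terms (LLMSR); linear terms plus a cosine term with a phase shift (PiT-PO).

  - Gray: PiT-PO only; cross and cubic terms scaled by `γ`, minus linear terms in `α` and `β`.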
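
The data points above can be checked for where each curve flattens. The sketch below encodes only the coarse readings from this description (one value per x-axis tick), not the plotted data, and omits the brief initial LLMSR spike. The function name and the half-decade tolerance are illustrative assumptions.

```python
import math


def first_plateau_iteration(iterations, values, tol_decades=0.5):
    """Earliest iteration from which every value stays within
    `tol_decades` (in log10 units) of the final value."""
    logs = [math.log10(v) for v in values]
    start = len(logs) - 1
    # Walk backwards while the preceding point is still close to the final level.
    while start > 0 and abs(logs[start - 1] - logs[-1]) <= tol_decades:
        start -= 1
    return iterations[start]


# Coarse readings at the x-axis ticks, taken from the description above.
iterations = [0, 500, 1000, 1500, 2000, 2500]
llmsr = [1e-4, 1e-8, 1e-8, 1e-8, 1e-8, 1e-8]
pitpo = [1e-4, 1e-16, 1e-16, 1e-16, 1e-16, 1e-16]

llmsr_plateau = first_plateau_iteration(iterations, llmsr)
pitpo_plateau = first_plateau_iteration(iterations, pitpo)
```

With these readings both curves are flat from the 500-iteration tick onward, so at this resolution the two algorithms differ in how low they plateau and not in when.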

---

### Final Notes

The graph shows PiT-PO reaching a much lower final NMSE (~10⁻¹⁶) than LLMSR (~10⁻⁸), with both curves flattening by ~500 iterations. The graph alone does not establish why. The annotations suggest that the expressions associated with PiT-PO combine periodic terms (e.g., `sin(2·π·p₄·x)`) with cross and cubic terms, which may account for its lower residual error.