
## Grouped Bar Chart: Meta-Tuning Performance Improvement by Problem Level (GPT-4 vs. Gemini)

### Overview

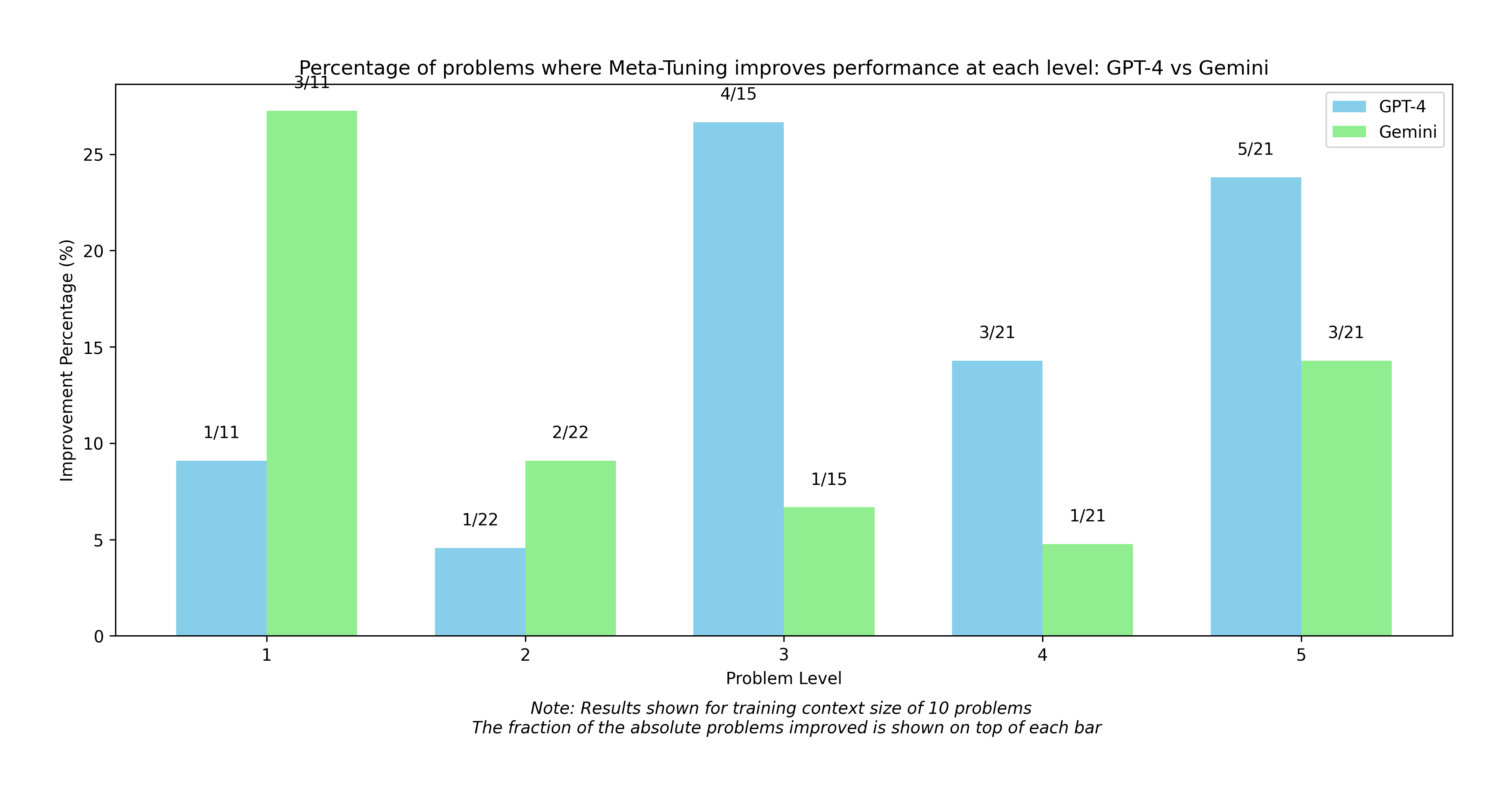

This is a grouped bar chart titled "Percentage of problems where Meta-Tuning improves performance at each level: GPT-4 vs Gemini". It compares the effectiveness of a technique called "Meta-Tuning" on two AI models (GPT-4 and Gemini) across five problem difficulty levels. For each model and level, the bar height gives the percentage of problems at that level whose performance Meta-Tuning improves, and the corresponding fraction of problems improved is annotated above each bar. A note at the bottom states that the results are for a training context size of 10 problems.

### Components/Axes

* **Chart Title:** "Percentage of problems where Meta-Tuning improves performance at each level: GPT-4 vs Gemini"

* **X-Axis:** Labeled "Problem Level". It has five categorical ticks: 1, 2, 3, 4, and 5.

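* **Y-Axis:** Labeled "Improvement Percentage (%)". Tick labels run from 0 to 25 in increments of 5; since the tallest bar (Gemini at Level 1, ~27.3%) exceeds 25, the plotted range must extend slightly above the top label.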

* **Legend:** Located in the top-right corner.

  * **GPT-4:** Represented by light blue bars.

  * **Gemini:** Represented by light green bars.

* **Data Annotations:** Each bar has a fraction (X/Y) written above it, where X is the number of problems improved and Y is the total number of problems at that level.

* **Footer Note:** Contains two lines of text:

  1. "Note: Results shown for training context size of 10 problems"

  2. "The fraction of the absolute problems improved is shown on top of each bar"

### Detailed Analysis

The chart presents the following data points for each Problem Level:

**Problem Level 1:**

* **GPT-4 (Light Blue):** Bar height is approximately 9.1%. Annotation: `1/11`.

* **Gemini (Light Green):** Bar height is approximately 27.3%. Annotation: `3/11`.

**Problem Level 2:**

* **GPT-4 (Light Blue):** Bar height is approximately 4.5%. Annotation: `1/22`.

* **Gemini (Light Green):** Bar height is approximately 9.1%. Annotation: `2/22`.

**Problem Level 3:**

* **GPT-4 (Light Blue):** Bar height is approximately 26.7%. Annotation: `4/15`.

* **Gemini (Light Green):** Bar height is approximately 6.7%. Annotation: `1/15`.

**Problem Level 4:**

* **GPT-4 (Light Blue):** Bar height is approximately 14.3%. Annotation: `3/21`.

* **Gemini (Light Green):** Bar height is approximately 4.8%. Annotation: `1/21`.

**Problem Level 5:**

* **GPT-4 (Light Blue):** Bar height is approximately 23.8%. Annotation: `5/21`.

* **Gemini (Light Green):** Bar height is approximately 14.3%. Annotation: `3/21`.
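Each bar height is simply its annotated fraction expressed as a percentage, 100 × X / Y. The short Python sketch below re-derives the heights listed above from a transcription of the annotations. The dictionary layout and the function names (`improvement_pct`, `bar_heights`) are illustrative choices, not part of the source.

```python
# Annotated (improved, total) fractions per problem level, transcribed from the chart.
ANNOTATIONS = {
    "GPT-4": {1: (1, 11), 2: (1, 22), 3: (4, 15), 4: (3, 21), 5: (5, 21)},
    "Gemini": {1: (3, 11), 2: (2, 22), 3: (1, 15), 4: (1, 21), 5: (3, 21)},
}


def improvement_pct(improved: int, total: int) -> float:
    """Percentage of problems improved, rounded to one decimal place as in the text."""
    return round(100 * improved / total, 1)


def bar_heights(annotations):
    """Map each model to {level: percentage} derived from its X/Y annotations."""
    return {
        model: {level: improvement_pct(x, y) for level, (x, y) in levels.items()}
        for model, levels in annotations.items()
    }


if __name__ == "__main__":
    for model, levels in bar_heights(ANNOTATIONS).items():
        for level, pct in levels.items():
            x, y = ANNOTATIONS[model][level]
            print(f"{model:6} level {level}: {x}/{y} = {pct}%")
```

Every derived value matches the approximate bar heights quoted for Levels 1 to 5.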

### Key Observations

1. **Model Performance Inversion:** The two models respond to Meta-Tuning in nearly opposite ways. Gemini benefits more than GPT-4 at Levels 1 and 2, while GPT-4 benefits more at Levels 3, 4, and 5. Their peaks also fall at different levels: Gemini peaks at the lowest level (Level 1: ~27.3%), while GPT-4 peaks at the middle level (Level 3: ~26.7%).

2. **Trend for GPT-4:** The improvement percentage for GPT-4 does not follow a simple linear trend. It starts low (~9.1% at L1), dips at L2 (~4.5%), peaks sharply at L3 (~26.7%), dips again at L4 (~14.3%), and rises to a second high at L5 (~23.8%).

3. **Trend for Gemini:** Gemini's improvement percentage falls steadily from Level 1 through Level 4 and then rebounds at Level 5: ~27.3% at L1, ~9.1% at L2, ~6.7% at L3, a low of ~4.8% at L4, and ~14.3% at L5.

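4. **Absolute Problem Counts:** The denominators in the annotations (11, 22, 15, 21, 21; 90 problems in total) give the number of problems evaluated at each level. The level sizes vary, but at each level both models are evaluated on the same number of problems. The numerators give the raw count of problems that Meta-Tuning improved.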

5. **Lowest Improvement:** The single lowest improvement percentage on the chart is for GPT-4 at Problem Level 2 (~4.5%, 1/22 problems).
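The observations above can be checked from the annotations alone. This sketch, using the same transcription of the fractions, finds each model's peak level, the model with the higher improvement rate at each level, and a pooled rate over all 90 problems. The helper names are hypothetical, and the pooled figures (14/90, about 15.6%, for GPT-4 and 10/90, about 11.1%, for Gemini) are derived here rather than shown on the chart; pooling assumes the per-level totals can simply be summed.

```python
# Annotated (improved, total) fractions per problem level, transcribed from the chart.
GPT4 = {1: (1, 11), 2: (1, 22), 3: (4, 15), 4: (3, 21), 5: (5, 21)}
GEMINI = {1: (3, 11), 2: (2, 22), 3: (1, 15), 4: (1, 21), 5: (3, 21)}


def pooled(annotations):
    """Sum improved and total counts over all levels (a pooled, micro-averaged rate)."""
    improved = sum(x for x, _ in annotations.values())
    total = sum(y for _, y in annotations.values())
    return improved, total


def peak_level(annotations):
    """Level with the highest improvement rate X/Y."""
    return max(annotations, key=lambda level: annotations[level][0] / annotations[level][1])


def leader_by_level(a, b, name_a="GPT-4", name_b="Gemini"):
    """For each level, name the model with the higher improvement rate (or 'tie')."""
    leaders = {}
    for level in sorted(a):
        xa, ya = a[level]
        xb, yb = b[level]
        # Cross-multiply to compare xa/ya with xb/yb exactly, without floating point.
        diff = xa * yb - xb * ya
        leaders[level] = name_a if diff > 0 else name_b if diff < 0 else "tie"
    return leaders


if __name__ == "__main__":
    for name, data in (("GPT-4", GPT4), ("Gemini", GEMINI)):
        x, y = pooled(data)
        print(f"{name}: {x}/{y} pooled ({100 * x / y:.1f}%), peak at level {peak_level(data)}")
    print(leader_by_level(GPT4, GEMINI))
```

The per-level leaders reproduce the inversion in observation 1: Gemini leads at Levels 1 and 2, GPT-4 at Levels 3 to 5.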

### Interpretation

The data suggests that the benefit of Meta-Tuning is highly context-dependent, varying significantly by both the AI model and the difficulty of the task.

* **Model-Specific Efficacy:** Meta-Tuning appears particularly effective for Gemini on the simplest problems (Level 1), but its benefit for Gemini shrinks as problems get harder, with a partial rebound at the hardest level (Level 5). For GPT-4, the technique helps most on problems of intermediate (Level 3) and high (Level 5) difficulty; one possible reading, which the chart alone cannot confirm, is that it helps the model with more complex reasoning or knowledge-integration tasks.

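* **Low Gains at Level 2:** Neither model improves on more than 2 of the 22 Level 2 problems, the largest set at any level. GPT-4 records its lowest value here (~4.5%, 1/22) and Gemini reaches only ~9.1% (2/22), although for Gemini this fits its overall downward trend, since it does even worse at Levels 3 and 4. Possible explanations are that problems at this tier resist this tuning method or that the models' baseline performance was already high, leaving less room for improvement; the chart does not report baseline scores, so neither explanation can be confirmed from it.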

* **Practical Implication:** The results argue against a one-size-fits-all application of Meta-Tuning. To maximize performance gains, the tuning strategy may need to be tailored to the target model and to the expected difficulty distribution of the problems it will face. The sample sizes are also small: each level contains only 11 to 22 problems, so a single problem shifts a percentage by roughly 4.5 to 9.1 percentage points. The findings should therefore be considered indicative rather than definitive.
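To make the sample-size caveat concrete, this sketch computes, for each level, how many percentage points a single problem is worth and a 95% Wilson score interval for each model's improvement rate. The Wilson interval is a standard binomial confidence interval chosen here purely for illustration; the source reports no intervals or significance tests, and the function names are hypothetical. With these counts the two models' intervals overlap at every level, which is consistent with treating the per-level differences as indicative.

```python
import math

# Annotated (improved, total) fractions per problem level, transcribed from the chart.
GPT4 = {1: (1, 11), 2: (1, 22), 3: (4, 15), 4: (3, 21), 5: (5, 21)}
GEMINI = {1: (3, 11), 2: (2, 22), 3: (1, 15), 4: (1, 21), 5: (3, 21)}


def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95% coverage)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


def one_problem_step(n: int) -> float:
    """Percentage-point change caused by one more (or one fewer) improved problem."""
    return 100 / n


def intervals_overlap(a, b) -> bool:
    """True if two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    for level in sorted(GPT4):
        g = wilson_interval(*GPT4[level])
        m = wilson_interval(*GEMINI[level])
        print(
            f"Level {level}: one problem = {one_problem_step(GPT4[level][1]):.1f} pts; "
            f"GPT-4 [{100 * g[0]:.1f}, {100 * g[1]:.1f}]%, "
            f"Gemini [{100 * m[0]:.1f}, {100 * m[1]:.1f}]%, "
            f"overlap={intervals_overlap(g, m)}"
        )
```

For example, GPT-4's 1/11 at Level 1 corresponds to an interval of roughly 1.6% to 37.7%, far wider than the gaps between bars.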