## Line Graph: Model Accuracy vs. Operations

### Overview

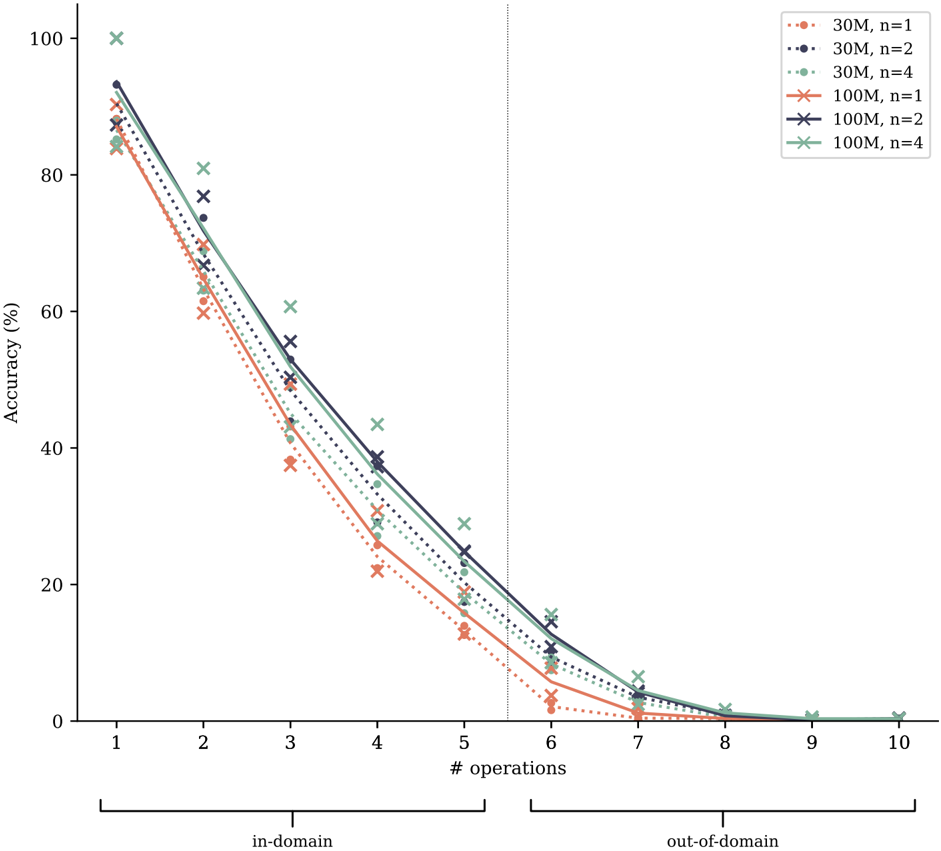

The graph compares the accuracy of two model sizes (30M and 100M) on tasks requiring 1 to 10 operations, distinguishing between in-domain (operations 1-6) and out-of-domain (operations 7-10) scenarios. Accuracy declines as the number of operations increases, with distinct trends for each model configuration.

### Components/Axes

- **Y-axis**: Accuracy (%) ranging from 0 to 100.

- **X-axis**: Number of operations (1-10), split into:

  - In-domain: operations 1-6 (left of the vertical dashed line).

  - Out-of-domain: operations 7-10 (right of the vertical dashed line).

- **Legend**: Located in the top-right corner, with entries:

  - **30M**: Red (solid), Blue (dashed), Green (dotted) for n=1, 2, 4.

  - **100M**: Red (solid), Blue (dashed), Green (dotted) for n=1, 2, 4.

### Detailed Analysis

1. **30M Model**:

   - **n=1** (Red solid): Starts at ~90% accuracy at 1 operation, dropping to ~10% by 10 operations.

   - **n=2** (Blue dashed): Begins at ~85%, declining to ~5% by 10 operations.

   - **n=4** (Green dotted): Starts at ~80%, falling to ~2% by 10 operations.

2. **100M Model**:

   - **n=1** (Red solid): Starts at ~95%, dropping to ~15% by 10 operations.

   - **n=2** (Blue dashed): Begins at ~90%, declining to ~10% by 10 operations.

   - **n=4** (Green dotted): Starts at ~85%, falling to ~5% by 10 operations.
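To make the endpoint readings easier to compare, the short Python sketch below tabulates each configuration's absolute drop (in percentage points) and its drop as a share of its starting accuracy. It uses only the approximate ~values listed in the Detailed Analysis; `READINGS` and `summarize` are hypothetical names introduced for illustration, and the intermediate points of each curve are not modeled.

```python
# Approximate accuracy readings (%) at 1 and 10 operations, copied from the
# "~" values in the Detailed Analysis; intermediate points are not available.
READINGS = {
    ("30M", 1): (90, 10),
    ("30M", 2): (85, 5),
    ("30M", 4): (80, 2),
    ("100M", 1): (95, 15),
    ("100M", 2): (90, 10),
    ("100M", 4): (85, 5),
}


def summarize(readings):
    """Map (model, n) -> (absolute drop in points, drop as a fraction of the start)."""
    summary = {}
    for key, (start, end) in readings.items():
        drop = start - end
        summary[key] = (drop, drop / start)
    return summary


if __name__ == "__main__":
    for (model, n), (drop, rel) in summarize(READINGS).items():
        print(f"{model:>4} n={n}: -{drop} pts ({rel:.1%} of starting accuracy)")
```

With these readings every configuration loses about 78-80 points, so the differences between n values show up mainly in the relative drop, which is largest for n=4 within each model size.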

**Trends**:

- All lines exhibit a steep decline in accuracy as operations increase.

- 100M models consistently outperform 30M models across all n values.

- Out-of-domain performance (operations 7-10) drops more sharply than in-domain performance (operations 1-6).
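The claim that 100M models outperform 30M models for every n can be spot-checked against the same approximate readings. This is a minimal sketch that compares only the two endpoints (1 and 10 operations), since those are the only values reported; `READINGS` and `size_advantage` are hypothetical names, and the check says nothing about the points in between.

```python
# Approximate accuracy readings (%) at 1 and 10 operations (from the Detailed Analysis).
READINGS = {
    ("30M", 1): (90, 10),
    ("30M", 2): (85, 5),
    ("30M", 4): (80, 2),
    ("100M", 1): (95, 15),
    ("100M", 2): (90, 10),
    ("100M", 4): (85, 5),
}


def size_advantage(readings, n):
    """Accuracy margin (points) of 100M over 30M at 1 and at 10 operations."""
    small_start, small_end = readings[("30M", n)]
    large_start, large_end = readings[("100M", n)]
    return large_start - small_start, large_end - small_end


if __name__ == "__main__":
    for n in (1, 2, 4):
        at_1, at_10 = size_advantage(READINGS, n)
        print(f"n={n}: 100M leads by {at_1} pts at 1 op, {at_10} pts at 10 ops")
```

With the listed readings, the 100M margin is 5 points at 1 operation for every n and 3-5 points at 10 operations, so both endpoints agree with the trend.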

### Key Observations

- **Performance Degradation**: Accuracy falls steeply as operations increase, with the sharpest drop beyond 6 operations (out-of-domain).

- **Model Robustness**: 100M models maintain higher accuracy than 30M models, suggesting that the larger model handles longer operation sequences better.

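- **n=4 Impact**: Higher n values (more operations per step) correlate with lower accuracy and a proportionally larger decline, particularly in out-of-domain tasks. For both model sizes, n=4 starts and ends lowest, losing roughly 94-98% of its starting accuracy by 10 operations, compared with roughly 84-89% for n=1.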
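Two endpoints cannot establish the shape of a curve, so the readings alone do not show whether the decline is exponential. As an illustration only, assuming a constant per-operation retention factor `r` (exponential decay, `a(k) = a(1) * r^(k - 1)`), `r` can be recovered from the readings at 1 and 10 operations as `r = (a(10) / a(1))^(1/9)`. The helper names below are hypothetical, and the model deliberately ignores any change in slope at the in-domain/out-of-domain boundary.

```python
# Approximate accuracy readings (%) at 1 and 10 operations (from the Detailed Analysis).
READINGS = {
    ("30M", 1): (90, 10),
    ("30M", 2): (85, 5),
    ("30M", 4): (80, 2),
    ("100M", 1): (95, 15),
    ("100M", 2): (90, 10),
    ("100M", 4): (85, 5),
}


def retention_factor(start, end, steps=9):
    """Per-operation factor r with start * r**steps == end.

    Assumes exponential decay; 9 steps separate operation 1 from operation 10.
    """
    return (end / start) ** (1 / steps)


def modeled_accuracy(start, r, k):
    """Accuracy the assumed model a(k) = start * r**(k - 1) predicts at k operations.

    This is a model output, not a value read from the graph.
    """
    return start * r ** (k - 1)


if __name__ == "__main__":
    for (model, n), (start, end) in READINGS.items():
        r = retention_factor(start, end)
        print(f"{model:>4} n={n}: r = {r:.3f}")
```

Under this assumption, `r` is smallest for n=4 within each model size (about 0.66 for 30M and 0.73 for 100M), consistent with the proportionally larger decline of the n=4 configurations. A real test of the exponential shape would need the intermediate points of each curve.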

### Interpretation

The data show that increasing operational complexity (more operations) reduces model accuracy, and that out-of-domain tasks exacerbate this effect. Larger models (100M) are more resilient, maintaining higher accuracy across operations. The steeper decline in out-of-domain performance highlights the difficulty of generalizing beyond the in-domain range of 1-6 operations. The n=4 configurations (most operations per step) underperform, suggesting that smaller, more frequent steps may be more effective for maintaining accuracy. Together, these results point to trade-offs between model size, operational granularity, and alignment with the task domain.