## Line Graph: Answer Accuracy Across Layers for Llama-3-8B and Llama-3-70B Models

### Overview

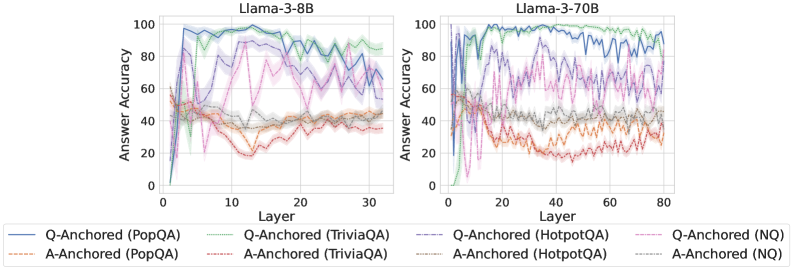

The figure contains two side-by-side line graphs comparing answer accuracy across layers for two Llama-3 models (8B and 70B parameters). The x-axis shows layer depth, spanning layers 0–30 for the 8B model and 0–80 for the 70B model. The y-axis shows answer accuracy (0–100%). Each graph plots six method/dataset combinations, with line color and style distinguishing the Q-Anchored and A-Anchored methods and the dataset each is applied to.

### Components/Axes

- **X-Axis (Layer Depth)**:

- Llama-3-8B: 0–30 layers (discrete increments)

- Llama-3-70B: 0–80 layers (discrete increments)

- **Y-Axis (Answer Accuracy)**: 0–100% (continuous scale)

- **Legends**:

- **Llama-3-8B** (left chart):

- Solid blue: Q-Anchored (PopQA)

- Dashed green: Q-Anchored (TriviaQA)

- Dotted orange: A-Anchored (PopQA)

- Dashed gray: A-Anchored (TriviaQA)

- Solid purple: Q-Anchored (HotpotQA)

- Dotted pink: Q-Anchored (NQ)

- **Llama-3-70B** (right chart):

- Same legend entries as above, with matching colors/styles
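The legend can be written down as a small lookup table, which makes it easy to see which datasets are plotted under both anchoring methods. The following is a minimal sketch: the names `LEGEND` and `datasets_with_both_methods` are illustrative, and the line-style strings assume matplotlib's shorthand (`"-"` solid, `"--"` dashed, `":"` dotted).

```python
# Legend entries as listed above. The keys are (method, dataset) pairs and the
# values are (color, line style). Names here are illustrative assumptions.
LEGEND = {
    ("Q-Anchored", "PopQA"): ("blue", "-"),
    ("Q-Anchored", "TriviaQA"): ("green", "--"),
    ("A-Anchored", "PopQA"): ("orange", ":"),
    ("A-Anchored", "TriviaQA"): ("gray", "--"),
    ("Q-Anchored", "HotpotQA"): ("purple", "-"),
    ("Q-Anchored", "NQ"): ("pink", ":"),
}


def datasets_with_both_methods(legend):
    """Return the datasets that appear under both anchoring methods."""
    methods_by_dataset = {}
    for method, dataset in legend:
        methods_by_dataset.setdefault(dataset, set()).add(method)
    return sorted(d for d, methods in methods_by_dataset.items() if len(methods) == 2)


print(datasets_with_both_methods(LEGEND))
```

Only PopQA and TriviaQA have both a Q-Anchored and an A-Anchored line, so any direct comparison between the two methods rests on those two datasets.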

### Detailed Analysis

#### Llama-3-8B (Left Chart)

1. **Q-Anchored (PopQA)** (solid blue):

- Starts at ~90% accuracy, dips to ~70% at layer 10, then stabilizes near 80%.

2. **Q-Anchored (TriviaQA)** (dashed green):

- Peaks at ~95% at layer 5, drops to ~60% by layer 20, then fluctuates between 50–70%.

3. **A-Anchored (PopQA)** (dotted orange):

- Begins at ~50%, rises to ~70% at layer 15, then declines to ~40% by layer 30.

4. **A-Anchored (TriviaQA)** (dashed gray):

- Starts at ~40%, peaks at ~60% at layer 10, then drops to ~30% by layer 30.

5. **Q-Anchored (HotpotQA)** (solid purple):

- Starts at ~80%, dips to ~60% at layer 10, then stabilizes near 70%.

6. **Q-Anchored (NQ)** (dotted pink):

- Highly volatile: starts at ~50%, peaks at ~90% at layer 5, crashes to ~20% at layer 15, then fluctuates between 10–50%.

#### Llama-3-70B (Right Chart)

1. **Q-Anchored (PopQA)** (solid blue):

- Starts at ~95%, dips to ~80% at layer 20, then stabilizes near 90%.

2. **Q-Anchored (TriviaQA)** (dashed green):

- Peaks at ~98% at layer 10, drops to ~70% by layer 40, then fluctuates between 60–80%.

3. **A-Anchored (PopQA)** (dotted orange):

- Begins at ~60%, rises to ~80% at layer 30, then declines to ~50% by layer 80.

4. **A-Anchored (TriviaQA)** (dashed gray):

- Starts at ~50%, peaks at ~70% at layer 20, then drops to ~40% by layer 80.

5. **Q-Anchored (HotpotQA)** (solid purple):

- Starts at ~85%, dips to ~70% at layer 40, then stabilizes near 80%.

6. **Q-Anchored (NQ)** (dotted pink):

- Less volatile than 8B: starts at ~60%, peaks at ~85% at layer 10, then fluctuates between 50–70%.
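One rough way to compare volatility across the series described above is the peak-to-trough swing of the quoted readings. The sketch below assumes the approximate values in this section are representative; they are eyeballed from the description rather than measured data, and `READINGS`, `swing`, and `swings` are illustrative names.

```python
# Approximate accuracy readings (percent) quoted in the analysis above.
# For series described as fluctuating within a band, both band edges are listed.
READINGS = {
    "Llama-3-8B": {
        "Q-Anchored (PopQA)": [90, 70, 80],
        "Q-Anchored (TriviaQA)": [95, 60, 50, 70],
        "A-Anchored (PopQA)": [50, 70, 40],
        "A-Anchored (TriviaQA)": [40, 60, 30],
        "Q-Anchored (HotpotQA)": [80, 60, 70],
        "Q-Anchored (NQ)": [50, 90, 20, 10, 50],
    },
    "Llama-3-70B": {
        "Q-Anchored (PopQA)": [95, 80, 90],
        "Q-Anchored (TriviaQA)": [98, 70, 60, 80],
        "A-Anchored (PopQA)": [60, 80, 50],
        "A-Anchored (TriviaQA)": [50, 70, 40],
        "Q-Anchored (HotpotQA)": [85, 70, 80],
        "Q-Anchored (NQ)": [60, 85, 50, 70],
    },
}


def swing(values):
    """Peak-to-trough difference in percentage points."""
    return max(values) - min(values)


def swings(model):
    """Map each series of a model to its swing."""
    return {series: swing(vals) for series, vals in READINGS[model].items()}


for model in READINGS:
    ranked = sorted(swings(model).items(), key=lambda kv: -kv[1])
    for series, points in ranked:
        print(f"{model:12s} {series:24s} swing ~{points} points")
```

On these readings, Q-Anchored (NQ) in the 8B model has the largest swing, and no series swings more in the 70B model than in the 8B model.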

### Key Observations

1. **Model Size Impact**:

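- Llama-3-70B outperforms Llama-3-8B on most method/dataset combinations; for example, Q-Anchored (PopQA) stabilizes near 90% in the 70B model versus near 80% in the 8B model.

- The larger model shows smoother accuracy curves with fewer extreme fluctuations.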

2. **Method Performance**:

- Q-Anchored methods generally outperform A-Anchored methods.

- NQ dataset shows the most instability, especially in the 8B model.

3. **Layer-Specific Trends**:

- Early layers (0–10) often show peak accuracy for Q-Anchored methods.

- Later layers (beyond roughly layer 20 in the 8B model and layer 40 in the 70B model) exhibit gradual declines or stabilization.

4. **Dataset Variability**:

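- Under Q-Anchoring, PopQA and HotpotQA are the most stable: both dip mid-depth and then level off.

- TriviaQA and NQ swing more widely, particularly in the 8B model, where NQ peaks at ~90% and later fluctuates between 10–50%.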
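The model-size and method observations can be checked with simple arithmetic on late-layer accuracy levels. This is a sketch under stated assumptions: each level is taken from the approximate readings in the Detailed Analysis, using the midpoint of the band for series described as fluctuating, and `LATE`, `scale_gain`, and `anchoring_gap` are illustrative names.

```python
# Approximate late-layer accuracy (percent) per series. Assumption: for series
# that fluctuate within a band, the midpoint of that band is used.
LATE = {
    "Llama-3-8B": {
        "Q-Anchored (PopQA)": 80,
        "Q-Anchored (TriviaQA)": 60,   # midpoint of 50-70
        "A-Anchored (PopQA)": 40,
        "A-Anchored (TriviaQA)": 30,
        "Q-Anchored (HotpotQA)": 70,
        "Q-Anchored (NQ)": 30,         # midpoint of 10-50
    },
    "Llama-3-70B": {
        "Q-Anchored (PopQA)": 90,
        "Q-Anchored (TriviaQA)": 70,   # midpoint of 60-80
        "A-Anchored (PopQA)": 50,
        "A-Anchored (TriviaQA)": 40,
        "Q-Anchored (HotpotQA)": 80,
        "Q-Anchored (NQ)": 60,         # midpoint of 50-70
    },
}


def scale_gain(series):
    """Late-layer accuracy of the 70B model minus that of the 8B model."""
    return LATE["Llama-3-70B"][series] - LATE["Llama-3-8B"][series]


def anchoring_gap(model, dataset):
    """Q-Anchored minus A-Anchored late-layer accuracy for one dataset."""
    levels = LATE[model]
    return levels[f"Q-Anchored ({dataset})"] - levels[f"A-Anchored ({dataset})"]


for series in LATE["Llama-3-8B"]:
    print(f"{series:24s} 70B - 8B: {scale_gain(series):+d} points")

for model in LATE:
    for dataset in ("PopQA", "TriviaQA"):
        print(f"{model:12s} {dataset:9s} Q - A: {anchoring_gap(model, dataset):+d} points")
```

Under these assumptions, the 70B model gains about 10 points on five of the six series and about 30 points on Q-Anchored (NQ), while the Q-Anchored advantage on PopQA and TriviaQA is similar in both models.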

### Interpretation

The data suggests that:

- **Model Scale Matters**: The 70B model achieves higher baseline accuracy and more stable performance across layers compared to the 8B model.

- **Q-Anchored Superiority**: On the two datasets where both methods are plotted (PopQA and TriviaQA), Q-Anchored curves generally sit above their A-Anchored counterparts, possibly due to better contextual grounding.

- **Dataset Sensitivity**: NQ (Natural Questions) introduces significant instability, possibly due to its open-ended nature or data complexity.

- **Layer Depth Dynamics**: Q-Anchored accuracy tends to peak in the early layers (0–10), while later layers show a plateau or degradation rather than further gains.

The graphs highlight trade-offs between model size, anchoring strategies, and dataset characteristics. The 70B model’s improved performance suggests that scaling enhances robustness, while Q-Anchored methods provide more reliable accuracy across diverse datasets.