## Line Graph: NDCG@10% Performance Across Dimensions

### Overview

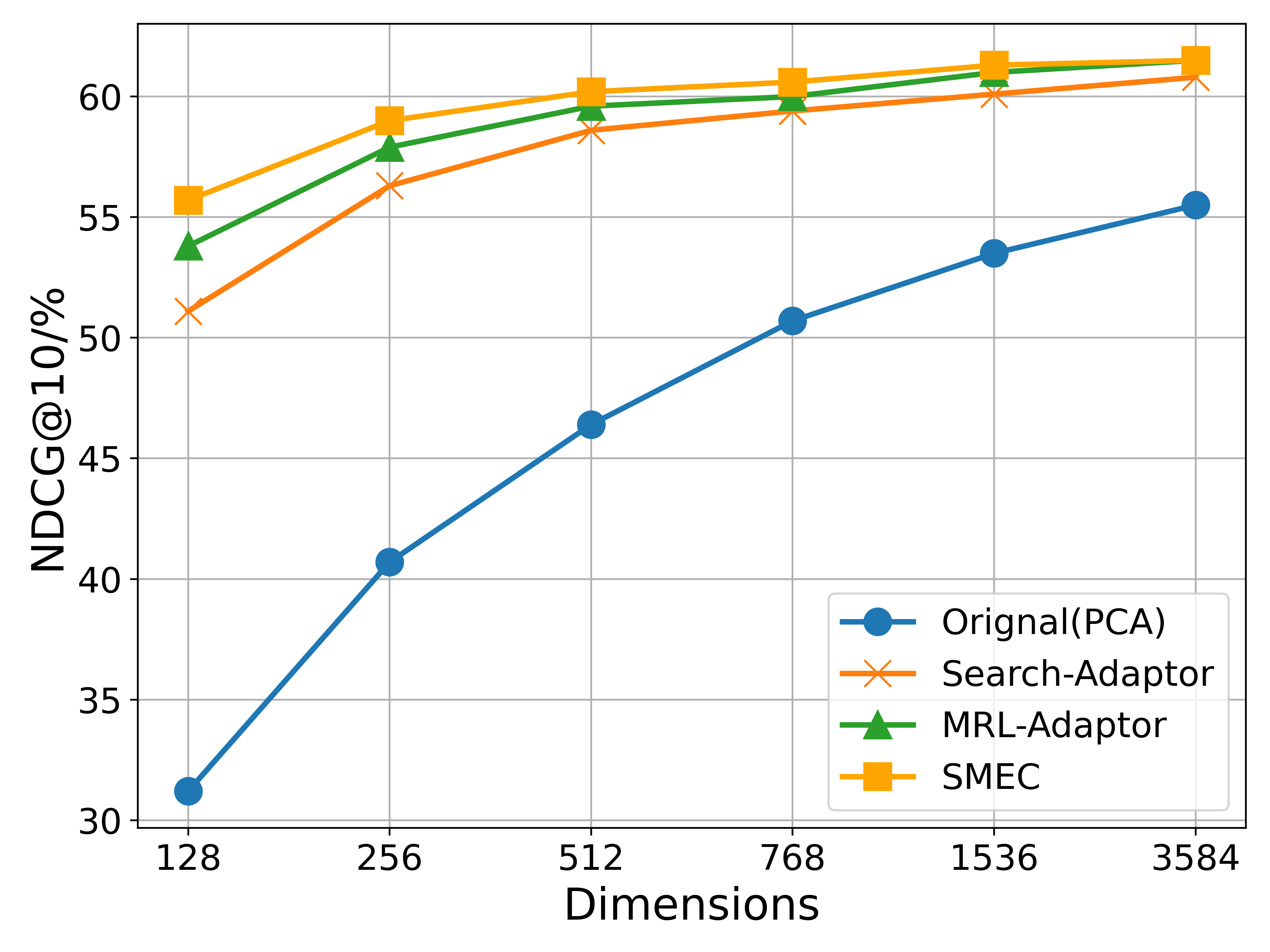

The image is a line graph comparing four methods (Original(PCA), Search-Adaptor, MRL-Adaptor, SMEC) by NDCG@10% across dimensions. The x-axis shows dimensions (128 to 3584), and the y-axis shows NDCG@10% (30% to 60%). All four curves trend upward, and SMEC is highest or tied for highest at every dimension.

### Components/Axes

- **X-axis (Dimensions)**: Labeled "Dimensions" with values 128, 256, 512, 768, 1536, 3584.

- **Y-axis (NDCG@10%)**: Labeled "NDCG@10%" with values 30% to 60%.

- **Legend**: Located in the bottom-right corner, associating:

  - Blue circles: Original(PCA)

  - Orange crosses: Search-Adaptor

  - Green triangles: MRL-Adaptor

  - Yellow squares: SMEC

### Detailed Analysis

1. **Original(PCA)** (Blue circles):

   - Starts at ~31% at 128 dimensions.

   - Rises steeply to ~41% at 256 dimensions.

   - Continues increasing to ~55% at 3584 dimensions.

   - Values: 31% (128), 41% (256), 46% (512), 51% (768), 53% (1536), 55% (3584).

2. **Search-Adaptor** (Orange crosses):

   - Begins at ~51% at 128 dimensions.

   - Increases gradually to ~56% at 256 dimensions.

   - Reaches ~59–61% at 3584 dimensions.

   - Values: 51% (128), 56% (256), 58% (512), 59% (768), 60% (1536), 61% (3584).

3. **MRL-Adaptor** (Green triangles):

   - Starts at ~54% at 128 dimensions.

   - Rises to ~58% at 256 dimensions.

   - Peaks at ~60–61% at 3584 dimensions.

   - Values: 54% (128), 58% (256), 59% (512), 60% (768), 61% (1536), 61% (3584).

4. **SMEC** (Yellow squares):

   - Begins at ~56% at 128 dimensions.

   - Increases steadily to ~61% at 3584 dimensions.

   - Values: 56% (128), 59% (256), 60% (512), 61% (768), 61% (1536), 61% (3584).
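The per-point values listed above can be collected into a small table and checked programmatically. The following is a minimal sketch, assuming the listed numbers are approximate readings from the plot rather than exact data; the names `READINGS`, `is_non_decreasing`, and `leaders_by_dimension` are hypothetical and introduced only for illustration.

```python
# Approximate NDCG@10% readings (in percent) as listed in the text above.
# These are estimates read off the plot, not exact experimental data.
DIMENSIONS = [128, 256, 512, 768, 1536, 3584]
READINGS = {
    "Original(PCA)": [31, 41, 46, 51, 53, 55],
    "Search-Adaptor": [51, 56, 58, 59, 60, 61],
    "MRL-Adaptor": [54, 58, 59, 60, 61, 61],
    "SMEC": [56, 59, 60, 61, 61, 61],
}


def is_non_decreasing(values):
    """Return True if each value is at least as large as the previous one."""
    return all(a <= b for a, b in zip(values, values[1:]))


def leaders_by_dimension(readings, dimensions):
    """Map each dimension to the list of methods sharing the top reading."""
    leaders = {}
    for i, dim in enumerate(dimensions):
        best = max(series[i] for series in readings.values())
        leaders[dim] = [
            name for name, series in readings.items() if series[i] == best
        ]
    return leaders


if __name__ == "__main__":
    for name, series in READINGS.items():
        print(f"{name}: non-decreasing = {is_non_decreasing(series)}")
    for dim, names in leaders_by_dimension(READINGS, DIMENSIONS).items():
        print(f"{dim}: {', '.join(names)}")
```

With these readings, every series is non-decreasing, SMEC is the sole leader up to 768 dimensions, and the top reading is shared from 1536 dimensions onward.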

### Key Observations

- **Performance Trends**: All methods improve as dimensions increase. SMEC has the highest NDCG@10% at low dimensions and remains highest or tied for highest at higher dimensions.

- **Original(PCA) Gap**: Original(PCA) starts far lower (~31% at 128 dimensions) and rises to ~55% by 3584 dimensions, narrowing its gap to SMEC from about 25 points to about 6 points without closing it.

- **Convergence**: Search-Adaptor, MRL-Adaptor, and SMEC converge to within about 2 points of each other at higher dimensions (roughly 59–61% at 3584 dimensions).
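The gap and convergence observations can be made concrete by subtracting each method's readings from SMEC's. This is a rough sketch that assumes the approximate per-point values listed under Detailed Analysis; `gap_to_reference` is a hypothetical helper name, not something taken from the figure.

```python
# Approximate NDCG@10% readings (in percent) from the Detailed Analysis lists.
# These are estimates read off the plot, so the gaps below are approximate too.
DIMENSIONS = [128, 256, 512, 768, 1536, 3584]
SMEC = [56, 59, 60, 61, 61, 61]
OTHERS = {
    "Original(PCA)": [31, 41, 46, 51, 53, 55],
    "Search-Adaptor": [51, 56, 58, 59, 60, 61],
    "MRL-Adaptor": [54, 58, 59, 60, 61, 61],
}


def gap_to_reference(reference, series):
    """Return reference minus series at each dimension, in percentage points."""
    return [r - s for r, s in zip(reference, series)]


if __name__ == "__main__":
    for name, series in OTHERS.items():
        gaps = gap_to_reference(SMEC, series)
        pairs = ", ".join(f"{d}: {g}" for d, g in zip(DIMENSIONS, gaps))
        print(f"SMEC - {name} -> {pairs}")
```

Under these readings the gap to Original(PCA) shrinks at every step but stays positive, while the gaps to Search-Adaptor and MRL-Adaptor fall to within a point or two at the highest dimensions.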

### Interpretation

The data suggests that increasing dimensions improves performance for all methods, with SMEC the most robust at low dimensions: it already reaches ~56% at 128 dimensions, against ~61% at 3584. Original(PCA) underperforms at low dimensions but improves substantially as dimensions grow. The convergence of Search-Adaptor, MRL-Adaptor, and SMEC at higher dimensions implies diminishing returns beyond 768 dimensions for these three methods. The steep rise of Original(PCA) indicates that it benefits more from additional dimensions than the other methods, which could reflect differences in how the methods handle dimensionality.
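The diminishing-returns reading can be checked by comparing each method's gain up to 768 dimensions with its gain beyond 768. The sketch below assumes the approximate per-point values listed under Detailed Analysis; `gain_between` is a hypothetical helper used only for this illustration.

```python
# Approximate NDCG@10% readings (in percent) from the Detailed Analysis lists.
# These are estimates read off the plot, not exact experimental data.
DIMENSIONS = [128, 256, 512, 768, 1536, 3584]
READINGS = {
    "Original(PCA)": [31, 41, 46, 51, 53, 55],
    "Search-Adaptor": [51, 56, 58, 59, 60, 61],
    "MRL-Adaptor": [54, 58, 59, 60, 61, 61],
    "SMEC": [56, 59, 60, 61, 61, 61],
}


def gain_between(series, dimensions, start_dim, end_dim):
    """Return the change in percentage points from start_dim to end_dim."""
    return series[dimensions.index(end_dim)] - series[dimensions.index(start_dim)]


if __name__ == "__main__":
    for name, series in READINGS.items():
        early = gain_between(series, DIMENSIONS, 128, 768)
        late = gain_between(series, DIMENSIONS, 768, 3584)
        print(f"{name}: 128->768 = {early:+d} pts, 768->3584 = {late:+d} pts")
```

With these readings, every method gains less from 768 to 3584 dimensions than from 128 to 768, and Original(PCA) shows the largest gain in both ranges.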