## Bar Chart: Unfaithfulness Retention After Oversampling by AI Model

### Overview

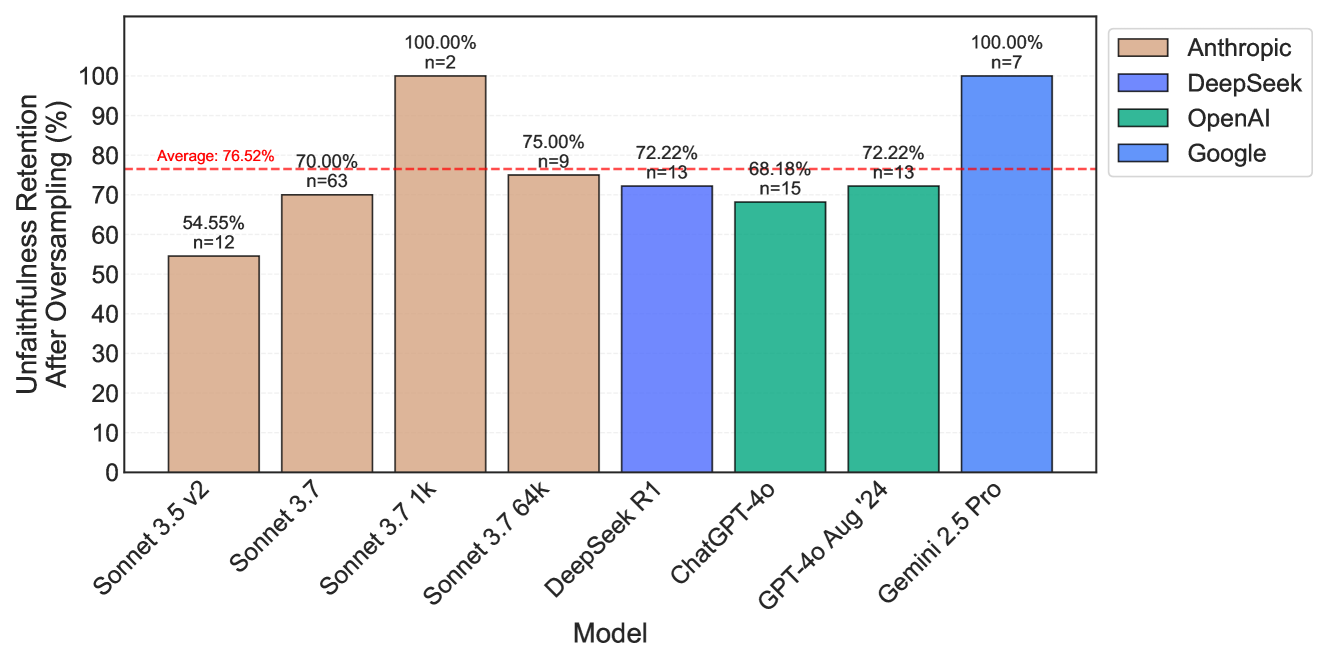

This is a vertical bar chart comparing eight large language model (LLM) configurations from four companies on a metric called "Unfaithfulness Retention After Oversampling." The chart displays the percentage of unfaithfulness retained by each model after an oversampling process, along with the sample size (n) for each measurement. A red dashed line marks the average retention across all eight bars.

### Components/Axes

* **Chart Type:** Vertical bar chart.
* **Y-Axis (Vertical):**
  * **Label:** "Unfaithfulness Retention After Oversampling (%)"
  * **Scale:** Linear scale from 0 to 100, with major tick marks every 10 units (0, 10, 20, ..., 100).
* **X-Axis (Horizontal):**
  * **Label:** "Model"
  * **Categories (from left to right):** Sonnet 3.5 V2, Sonnet 3.7, Sonnet 3.7 1k, Sonnet 3.7 64k, DeepSeek R1, ChatGPT-4o, GPT-4o Aug '24, Gemini 2.5 Pro.
* **Legend:** Located in the top-right corner, outside the plot area. It maps bar colors to the model's originating company:
  * **Tan/Light Brown:** Anthropic
  * **Medium Blue:** DeepSeek
  * **Teal/Green:** OpenAI
  * **Bright Blue:** Google
* **Reference Line:** A horizontal red dashed line spanning the chart at approximately 76.52% on the y-axis, labeled "Average: 76.52%".
* **Data Labels:** Each bar has two text annotations above it:
  1. The exact percentage value (e.g., "54.55%").
  2. The sample size in the format "n=[number]" (e.g., "n=12").

### Detailed Analysis

The following table reconstructs the data presented in the chart, ordered from left to right as they appear on the x-axis.

| Model (X-Axis) | Company (Legend Color) | Unfaithfulness Retention (%) | Sample Size (n) |
| :--- | :--- | ---: | ---: |
| Sonnet 3.5 V2 | Anthropic (Tan) | 54.55 | 12 |
| Sonnet 3.7 | Anthropic (Tan) | 70.00 | 63 |
| Sonnet 3.7 1k | Anthropic (Tan) | 100.00 | 2 |
| Sonnet 3.7 64k | Anthropic (Tan) | 75.00 | 9 |
| DeepSeek R1 | DeepSeek (Medium Blue) | 72.22 | 13 |
| ChatGPT-4o | OpenAI (Teal) | 68.18 | 15 |
| GPT-4o Aug '24 | OpenAI (Teal) | 72.22 | 13 |
| Gemini 2.5 Pro | Google (Bright Blue) | 100.00 | 7 |
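The table also supports a quick arithmetic sanity check. If n were the total number of cases behind each bar, every percentage would have to equal k/n, rounded to two decimals, for some whole number k. The Python sketch below tests that reading and, as an alternative, looks for the smallest whole-number total d for which n/d gives the displayed percentage. The helper names `reachable` and `smallest_total` are hypothetical, and the values are copied by hand from the table.

```python
from typing import Optional

# (model, retention %, n) as transcribed in the table above
ROWS = [
    ("Sonnet 3.5 V2", 54.55, 12),
    ("Sonnet 3.7", 70.00, 63),
    ("Sonnet 3.7 1k", 100.00, 2),
    ("Sonnet 3.7 64k", 75.00, 9),
    ("DeepSeek R1", 72.22, 13),
    ("ChatGPT-4o", 68.18, 15),
    ("GPT-4o Aug '24", 72.22, 13),
    ("Gemini 2.5 Pro", 100.00, 7),
]


def reachable(pct: float, n: int) -> bool:
    """True if k/n rounds to pct (2 decimals) for some whole number k in 0..n."""
    return any(round(100 * k / n, 2) == pct for k in range(n + 1))


def smallest_total(pct: float, k: int, limit: int = 1000) -> Optional[int]:
    """Smallest whole-number total d >= k such that k/d rounds to pct, or None."""
    for d in range(max(k, 1), limit + 1):
        if round(100 * k / d, 2) == pct:
            return d
    return None


for model, pct, n in ROWS:
    as_total = reachable(pct, n)          # reading n as the denominator
    as_count = smallest_total(pct, n)     # reading n as the numerator
    print(f"{model:15s} {pct:6.2f}%  n={n:<3d} n as total: {as_total!s:5s}  n as count: {n}/{as_count}")
```

Run on the transcribed values, only the two 100% rows pass the first test (for example, no k/12 rounds to 54.55%), while every row fits the second reading (12/22 = 54.55%, 63/90 = 70.00%, 9/12 = 75.00%). If this holds on the original figure, the "n=" labels may count retained cases rather than total cases, or some labels may have been misread; the chart alone does not settle which.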

**Trend Verification:**

* The Anthropic models (first four bars) show no monotonic trend: starting at 54.55%, rising to 70%, peaking at 100%, then dropping to 75%.

* The DeepSeek and OpenAI models (middle three bars) cluster in a narrow band from 68.18% to 72.22%, roughly 4 to 8 percentage points below the average.

* The Google model (Gemini 2.5 Pro, last bar) jumps sharply to 100%.

### Key Observations

1. **Maximum Retention:** Two models, **Sonnet 3.7 1k** (Anthropic) and **Gemini 2.5 Pro** (Google), exhibit 100.00% unfaithfulness retention. However, their sample sizes are very small (n=2 and n=7, respectively), which limits the statistical reliability of these perfect scores.

2. **Minimum Retention:** **Sonnet 3.5 V2** (Anthropic) has the lowest retention at 54.55%.

3. **Average Performance:** The overall average is 76.52%, the unweighted mean of the eight bar values. Only the two 100% models (Sonnet 3.7 1k and Gemini 2.5 Pro) lie above it. The other six (Sonnet 3.5 V2, Sonnet 3.7, Sonnet 3.7 64k, DeepSeek R1, ChatGPT-4o, and GPT-4o Aug '24) all fall below it, with Sonnet 3.7 64k (75.00%) the closest.

4. **Sample Size Variance:** The sample sizes (n) vary widely, from a low of 2 to a high of 63. The model with the largest sample, Sonnet 3.7 (n=63), has a retention of 70.00%, below the overall average.

5. **Company Grouping:** Anthropic's models show the widest performance spread (54.55% to 100%). OpenAI's two listed models (ChatGPT-4o and GPT-4o Aug '24) have very similar performance (68.18% vs. 72.22%).
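The arithmetic behind observations 3 and 5 can be reproduced from the table. This minimal Python sketch, with the bar values copied in by hand (the variable names are illustrative), computes the unweighted mean of the eight bars, splits them into above- and below-average groups, and reports each company's range:

```python
from statistics import mean

# model -> (company from legend colour, retention %), copied from the table
BARS = {
    "Sonnet 3.5 V2": ("Anthropic", 54.55),
    "Sonnet 3.7": ("Anthropic", 70.00),
    "Sonnet 3.7 1k": ("Anthropic", 100.00),
    "Sonnet 3.7 64k": ("Anthropic", 75.00),
    "DeepSeek R1": ("DeepSeek", 72.22),
    "ChatGPT-4o": ("OpenAI", 68.18),
    "GPT-4o Aug '24": ("OpenAI", 72.22),
    "Gemini 2.5 Pro": ("Google", 100.00),
}

# Unweighted mean: each bar counts once, regardless of its n
average = mean(pct for _, pct in BARS.values())
above = [m for m, (_, pct) in BARS.items() if pct > average]
below = [m for m, (_, pct) in BARS.items() if pct < average]
# Mean of the six bars that are not at 100%
rest = mean(pct for _, pct in BARS.values() if pct < 100)

# Group retention values by company to get each company's range
by_company = {}
for company, pct in BARS.values():
    by_company.setdefault(company, []).append(pct)

print(f"unweighted mean: {average:.2f}%")
print(f"above average ({len(above)}): {above}")
print(f"below average ({len(below)}): {below}")
print(f"mean of the six bars below 100%: {rest:.2f}%")
for company, pcts in by_company.items():
    print(f"{company}: {min(pcts):.2f}% to {max(pcts):.2f}%")
```

The six bars below 100% average about 68.7%, so most of the distance up to the 76.52% line comes from the two 100% bars.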

### Interpretation

This chart measures a specific failure mode of AI models: their tendency to retain "unfaithful" outputs (likely meaning incorrect, fabricated, or non-grounded information) even after an "oversampling" technique is applied, which is presumably a method intended to improve reliability or correct errors.

* **What the data suggests:** A high retention percentage indicates that the model's unfaithful behavior is robust and resistant to correction via oversampling. A lower percentage suggests the oversampling technique is more effective at reducing unfaithfulness for that model.

* **Relationship between elements:** The chart directly compares the effectiveness of a mitigation strategy (oversampling) across different model architectures and versions. The average line provides a benchmark for "typical" performance.

* **Notable anomalies and implications:**

  * The 100% retention scores for Sonnet 3.7 1k and Gemini 2.5 Pro are striking. They suggest that, for these specific model configurations, the oversampling process had no measurable effect on reducing unfaithfulness in the tested samples. The very small sample sizes (n=2 for Sonnet 3.7 1k and n=7 for Gemini 2.5 Pro) warrant caution in interpreting these results.

  * The drop from Sonnet 3.7 1k (100%) to Sonnet 3.7 64k (75%) within the same model family suggests that the "1k" vs. "64k" setting (possibly a context window or a related token budget; the chart does not specify) may influence how oversampling affects unfaithfulness retention. Because the 1k bar rests on only two cases, this difference is weak evidence on its own.

  * The model with the most data (Sonnet 3.7, n=63) falls below the average. Since the 76.52% line is the unweighted mean of the eight bars, the two 100% values from the smallest samples (n=2 and n=7) pull it upward, so the large-sample Sonnet 3.7 estimate may be a more stable reference point than the average line.
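The caution about the two 100% scores can be quantified with a confidence interval for a proportion. The sketch below uses the Wilson score interval, a standard method that behaves sensibly for small n and for proportions at 0% or 100%. It is an illustrative choice, not something the chart reports, and it assumes each "n=" value counts independent cases that were all retained. The function name `wilson_interval` is hypothetical.

```python
from math import sqrt
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95% coverage)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to absorb floating-point overshoot at the boundaries
    return max(0.0, centre - half), min(1.0, centre + half)


# Assumption: 100% retention means every one of the n cases was retained
for model, n in [("Sonnet 3.7 1k", 2), ("Gemini 2.5 Pro", 7)]:
    lo, hi = wilson_interval(n, n)
    print(f"{model}: {n}/{n} retained, 95% Wilson interval {lo:.1%} to {hi:.1%}")
```

Under these assumptions, 2/2 is compatible with true retention as low as about 34% and 7/7 with about 65% at the 95% level. Neither result rules out retention below the 76.52% average, and the 1k interval comfortably includes the 64k value of 75%.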

In summary, the chart suggests that the efficacy of oversampling as a technique to combat AI unfaithfulness is highly variable and model-dependent. It does not appear to be a universally reliable fix: two configurations retained all of their unfaithful cases after oversampling, although both of those results rest on small samples.