TECHNICAL ASSET FINGERPRINT

4840bb274928c4fb17aafe4e



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Bar Chart: Tool Call Ratio Comparison

### Overview

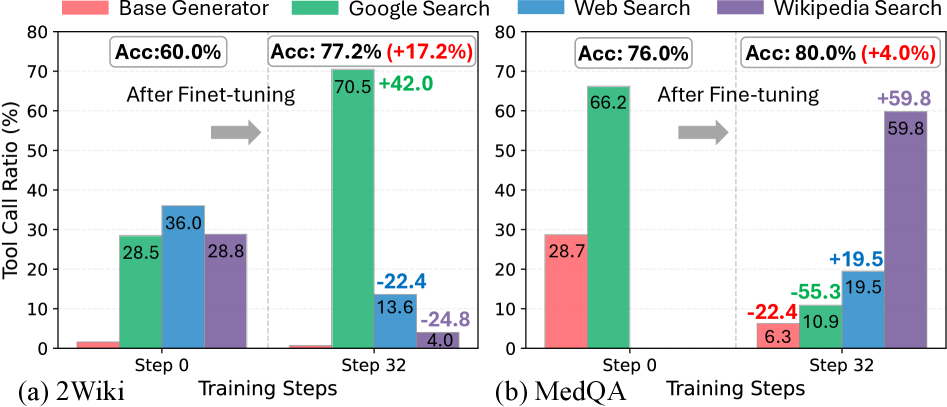

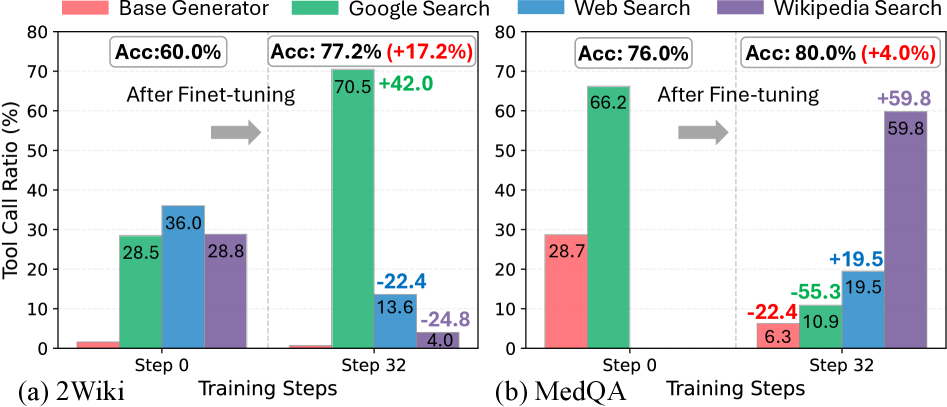

The image presents two bar charts comparing the tool call ratio (%) for four tools (Base Generator, Google Search, Web Search, Wikipedia Search) at two training steps: Step 0 (before fine-tuning) and Step 32 (after fine-tuning). Chart (a) shows results for the "2Wiki" dataset, and chart (b) shows results for the "MedQA" dataset. The charts also display the accuracy (Acc) at each step and the change in accuracy after fine-tuning.

### Components/Axes

* **Y-axis:** Tool Call Ratio (%), ranging from 0 to 80.

* **X-axis:** Training Steps, with two categories: Step 0 and Step 32.

* **Legend (Top-Left):**

* Base Generator (Red)

* Google Search (Green)

* Web Search (Blue)

* Wikipedia Search (Purple)

* **Titles:**

* (a) 2Wiki

* (b) MedQA

* **Accuracy Labels:** Displayed above the bars for Step 0 and Step 32 in each chart, showing the accuracy and the change in accuracy after fine-tuning.

* **Arrow:** A gray arrow indicates the progression from Step 0 to Step 32.

### Detailed Analysis

**Chart (a) 2Wiki:**

* **Base Generator (Red):**

* Step 0: Approximately 1%

* Step 32: Approximately 1%

* Trend: Relatively constant at a low value.

* **Google Search (Green):**

* Step 0: 28.5%

* Step 32: 70.5%

* Trend: Significant increase from Step 0 to Step 32.

* **Web Search (Blue):**

* Step 0: 36.0%

* Step 32: 13.6%

* Trend: Significant decrease from Step 0 to Step 32.

* **Wikipedia Search (Purple):**

* Step 0: 28.8%

* Step 32: 4.0%

* Trend: Significant decrease from Step 0 to Step 32.

* **Accuracy:**

* Step 0: Acc: 60.0%

* Step 32: Acc: 77.2% (+17.2%)

**Chart (b) MedQA:**

* **Base Generator (Red):**

* Step 0: 28.7%

* Step 32: 6.3%

* Trend: Significant decrease from Step 0 to Step 32.

* **Google Search (Green):**

* Step 0: 66.2%

* Step 32: 10.9%

* Trend: Significant decrease from Step 0 to Step 32.

* **Web Search (Blue):**

* Step 0: Approximately 1%

* Step 32: 19.5%

* Trend: Significant increase from Step 0 to Step 32.

* **Wikipedia Search (Purple):**

* Step 0: Approximately 1%

* Step 32: 59.8%

* Trend: Significant increase from Step 0 to Step 32.

* **Accuracy:**

* Step 0: Acc: 76.0%

* Step 32: Acc: 80.0% (+4.0%)
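The step-to-step change for each tool can be recomputed from the values listed above. The sketch below is illustrative only: the dictionary layout and the `delta` helper are assumptions made for this example, and the bars read only as "approximately 1%" are left out because no exact value is given for them.

```python
# Tool call ratios (%) as (Step 0, Step 32), taken from the list above.
# Bars read only as "approximately 1%" are omitted: no exact value is given.
ratios = {
    "2Wiki": {
        "Google Search": (28.5, 70.5),
        "Web Search": (36.0, 13.6),
        "Wikipedia Search": (28.8, 4.0),
    },
    "MedQA": {
        "Base Generator": (28.7, 6.3),
        "Google Search": (66.2, 10.9),
    },
}


def delta(step0: float, step32: float) -> float:
    """Change from Step 0 to Step 32, in percentage points."""
    return round(step32 - step0, 1)


changes = {
    dataset: {tool: delta(*pair) for tool, pair in tools.items()}
    for dataset, tools in ratios.items()
}

for dataset, tools in changes.items():
    for tool, change in tools.items():
        print(f"{dataset:6s} {tool:17s} {change:+.1f}")
```

The results are differences between two ratios, so they are percentage points rather than relative percentages.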

### Key Observations

* In the 2Wiki dataset, Google Search shows a significant increase in tool call ratio after fine-tuning, while Web Search and Wikipedia Search show a significant decrease.

* In the MedQA dataset, Web Search and Wikipedia Search show a significant increase in tool call ratio after fine-tuning, while Base Generator and Google Search show a significant decrease.

* The accuracy increases after fine-tuning in both datasets, but the increase is more substantial for the 2Wiki dataset (+17.2%) compared to the MedQA dataset (+4.0%).

### Interpretation

The charts illustrate the impact of fine-tuning on the tool call ratio for different search methods across two datasets. The contrasting trends between the 2Wiki and MedQA datasets suggest that the effectiveness of each search method is highly dependent on the specific dataset and task. The increase in accuracy after fine-tuning indicates that the model is learning to utilize the tools more effectively, but the varying tool call ratios suggest that the optimal strategy for tool usage differs between the two datasets. The data suggests that fine-tuning leads to specialization in tool usage, with some tools becoming more prominent while others become less so, depending on the dataset.


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Bar Charts: Tool Call Ratio and Accuracy Before and After Fine-tuning

### Overview

This image presents two bar charts, labeled (a) 2Wiki and (b) MedQA, comparing the "Tool Call Ratio (%)" for different search tools and a "Base Generator" at two distinct "Training Steps": "Step 0" (before fine-tuning) and "Step 32" (after fine-tuning). Each chart also displays an overall accuracy metric ("Acc") for both steps, along with the percentage change in accuracy after fine-tuning. The charts illustrate how fine-tuning impacts the utilization of various tools across two different datasets.

### Components/Axes

The image consists of two side-by-side bar charts, (a) on the left and (b) on the right, sharing a common legend positioned at the top-center.

* **Legend (Top-center):**

* Light Red: Base Generator

* Green: Google Search

* Blue: Web Search

* Purple: Wikipedia Search

* **Y-axis (Left side of both charts):**

* Title: "Tool Call Ratio (%)"

* Scale: Ranges from 0 to 80, with major grid lines and labels at 0, 10, 20, 30, 40, 50, 60, 70, 80.

* **X-axis (Bottom, shared across both charts):**

* Title: "Training Steps"

* Categories: "Step 0" and "Step 32" for each sub-chart.

* **Common Labels (Above the bars, between "Step 0" and "Step 32" for both charts):**

* Text: "After Fine-tuning"

* Visual: A gray arrow pointing from left to right, indicating the progression from "Step 0" to "Step 32".

* **Sub-chart Titles (Bottom-left of each chart):**

* (a) 2Wiki

* (b) MedQA

* **Accuracy Boxes (Top-left and Top-right above the bars for each chart):**

* **Chart (a) 2Wiki:**

* Above "Step 0": "Acc: 60.0%"

* Above "Step 32": "Acc: 77.2% (+17.2%)" (The "+17.2%" is colored red, indicating an increase).

* **Chart (b) MedQA:**

* Above "Step 0": "Acc: 76.0%"

* Above "Step 32": "Acc: 80.0% (+4.0%)" (The "+4.0%" is colored red, indicating an increase).

### Detailed Analysis

**Chart (a) 2Wiki**

* **Step 0 (Before Fine-tuning):**

* **Base Generator (Light Red):** The bar is very short, visually close to 0%, estimated at approximately 0.5%.

* **Google Search (Green):** The bar reaches 28.5%.

* **Web Search (Blue):** The bar reaches 36.0%.

* **Wikipedia Search (Purple):** The bar reaches 28.8%.

* *Trend:* At Step 0, Web Search has the highest tool call ratio, followed closely by Wikipedia Search and Google Search, while the Base Generator is negligible.

* **Step 32 (After Fine-tuning):**

* **Base Generator (Light Red):** The bar remains very short, visually close to 0%, estimated at approximately 0.2%.

* **Google Search (Green):** The bar dramatically increases to 70.5%. An associated label "+42.0" (green) indicates a significant increase from Step 0.

* **Web Search (Blue):** The bar significantly decreases to 13.6%. An associated label "-22.4" (blue) indicates a decrease from Step 0.

* **Wikipedia Search (Purple):** The bar significantly decreases to 4.0%. An associated label "-24.8" (purple) indicates a decrease from Step 0.

* *Trend:* After fine-tuning, Google Search shows a massive increase in tool call ratio, becoming the dominant tool. Web Search and Wikipedia Search show substantial decreases, while the Base Generator remains minimal.

**Chart (b) MedQA**

* **Step 0 (Before Fine-tuning):**

* **Base Generator (Light Red):** The bar reaches 28.7%.

* **Google Search (Green):** The bar reaches 66.2%.

* **Web Search (Blue):** The bar is very short, visually close to 0%, estimated at approximately 0.5%.

* **Wikipedia Search (Purple):** The bar is very short, visually close to 0%, estimated at approximately 0.5%.

* *Trend:* At Step 0, Google Search has a very high tool call ratio, followed by the Base Generator. Web Search and Wikipedia Search are negligible.

* **Step 32 (After Fine-tuning):**

* **Base Generator (Light Red):** The bar significantly decreases to 6.3%. An associated label "-22.4" (red) indicates a decrease from Step 0.

* **Google Search (Green):** The bar significantly decreases to 10.9%. An associated label "-55.3" (green) indicates a substantial decrease from Step 0.

* **Web Search (Blue):** The bar dramatically increases to 19.5%. An associated label "+19.5" (blue) indicates a significant increase from Step 0.

* **Wikipedia Search (Purple):** The bar dramatically increases to 59.8%. An associated label "+59.8" (purple) indicates a massive increase from Step 0.

* *Trend:* After fine-tuning, Base Generator and Google Search show significant decreases in tool call ratio. Conversely, Web Search and Wikipedia Search show dramatic increases, with Wikipedia Search becoming the most utilized tool.
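As a quick consistency check on the readings above, the four ratios at each step can be summed. This sketch assumes that the ratios at a given step are shares of the same pool of calls and so should not total more than 100%; the chart itself does not state this. The values for the barely visible bars (0.5% and 0.2%) are the visual estimates given above, not labeled values.

```python
# Ratios (%) in legend order: Base Generator, Google Search, Web Search,
# Wikipedia Search. The 0.5 and 0.2 entries are visual estimates, not labels.
readings = {
    ("2Wiki", "Step 0"): [0.5, 28.5, 36.0, 28.8],
    ("2Wiki", "Step 32"): [0.2, 70.5, 13.6, 4.0],
    ("MedQA", "Step 0"): [28.7, 66.2, 0.5, 0.5],
    ("MedQA", "Step 32"): [6.3, 10.9, 19.5, 59.8],
}

# Total displayed ratio per (dataset, step), rounded to one decimal place.
totals = {key: round(sum(values), 1) for key, values in readings.items()}

# Assumption: ratios at one step are shares of one whole, so a total above
# 100% would point to a misread bar.
all_plausible = all(total <= 100.0 for total in totals.values())

for (dataset, step), total in totals.items():
    print(f"{dataset:6s} {step:8s} total = {total:.1f}%")
print("all totals <= 100%:", all_plausible)
```

Under these readings every total stays below 100%. The descriptions above do not say what accounts for the remainder.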

### Key Observations

* **Overall Accuracy Improvement:** Both datasets, 2Wiki and MedQA, show an increase in overall accuracy after fine-tuning, with 2Wiki showing the larger gain (+17.2 percentage points, versus +4.0 percentage points for MedQA).

* **Divergent Tool Utilization Patterns:** Fine-tuning leads to drastically different tool utilization patterns between the 2Wiki and MedQA datasets.

* For **2Wiki**, fine-tuning strongly favors **Google Search**, which sees a massive increase in its tool call ratio (from 28.5% to 70.5%). Web Search and Wikipedia Search, which were moderately used before, become much less utilized. The Base Generator remains largely unused.

* For **MedQA**, fine-tuning shifts preference away from **Google Search** and the **Base Generator** (both seeing significant decreases) towards **Wikipedia Search** and **Web Search** (both seeing dramatic increases). Wikipedia Search becomes the dominant tool after fine-tuning.

* **Base Generator Role:** The "Base Generator" tool call ratio is consistently very low for 2Wiki both before and after fine-tuning. For MedQA, it starts at a moderate level (28.7%) but significantly decreases after fine-tuning (to 6.3%).

* **Magnitude of Change:** The changes in tool call ratio are substantial for most tools after fine-tuning, indicating a strong impact of the fine-tuning process on tool selection behavior.
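The accuracy changes shown in the chart (+17.2% and +4.0%) are differences between two accuracy values, that is, percentage points. The sketch below contrasts that with the gain relative to the Step 0 accuracy. The function names are illustrative and do not come from the source.

```python
def point_gain(before: float, after: float) -> float:
    """Absolute change in accuracy, in percentage points."""
    return round(after - before, 1)


def relative_gain(before: float, after: float) -> float:
    """Change in accuracy as a percentage of the starting accuracy."""
    return round((after - before) / before * 100, 1)


# Accuracy (%) at (Step 0, Step 32), as shown in the accuracy boxes.
accuracy = {"2Wiki": (60.0, 77.2), "MedQA": (76.0, 80.0)}

gains = {
    name: {"points": point_gain(*acc), "relative": relative_gain(*acc)}
    for name, acc in accuracy.items()
}

for name, gain in gains.items():
    print(f"{name}: {gain['points']:+.1f} points, {gain['relative']:+.1f}% relative")
```

On either measure 2Wiki improves more than MedQA, but the chart's annotations correspond to the percentage-point figure.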

### Interpretation

The data suggests that fine-tuning a model for specific datasets (2Wiki vs. MedQA) leads to specialized and optimized tool-calling strategies, rather than a universal improvement across all tools.

For the **2Wiki dataset**, the fine-tuning process appears to have learned that "Google Search" is the most effective tool for improving accuracy. The model's reliance on Google Search dramatically increases, while other search tools (Web Search, Wikipedia Search) become less relevant. This implies that for tasks within the 2Wiki domain, Google Search provides the most valuable information or is best integrated with the fine-tuned model's capabilities. The significant accuracy gain (+17.2%) for 2Wiki coincides with this increased reliance on Google Search, although two training steps are too few to establish a correlation.

For the **MedQA dataset**, the fine-tuning process identifies "Wikipedia Search" and "Web Search" as the primary tools for enhancing performance. The model significantly reduces its calls to "Base Generator" and "Google Search," which were initially more prominent. This indicates that for medical question-answering tasks (MedQA), information from Wikipedia and general web searches is more pertinent or effectively leveraged by the fine-tuned model. The smaller, but still positive, accuracy gain (+4.0%) for MedQA is achieved through this shift in tool preference.

The "Base Generator" generally plays a minor role, especially for 2Wiki, suggesting that for these tasks, external tools are almost always preferred over the base model's generation capabilities. Its decrease in MedQA further supports the idea that fine-tuning directs the model to more specialized external resources.

In essence, fine-tuning acts as a mechanism to learn which external tools are most beneficial for a given domain, leading to a highly specialized and efficient tool-calling strategy that maximizes accuracy, even if the preferred tools differ significantly across datasets. The "After Fine-tuning" process is not merely boosting existing tool usage but actively re-prioritizing and re-allocating tool calls based on the dataset's specific information needs.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Charts: Tool Call Ratio vs. Training Steps for 2Wiki and MedQA

### Overview

The image presents two bar charts, labeled (a) 2Wiki and (b) MedQA, comparing the Tool Call Ratio (%) at two training steps: Step 0 and Step 32. Each chart displays the ratio for four tools: Base Generator, Google Search, Web Search, and Wikipedia Search. The charts also show the accuracy (Acc) at each step, with the increase after fine-tuning indicated.

### Components/Axes

* **X-axis:** Training Steps (Step 0, Step 32)

* **Y-axis:** Tool Call Ratio (%) - Scale ranges from 0 to 80.

* **Legend:**

* Red: Base Generator

* Green: Google Search

* Blue: Web Search

* Purple: Wikipedia Search

* **Accuracy Labels:** "Acc: [value]%" displayed above each set of bars for Step 0 and Step 32, with the percentage increase in parentheses.

* **Arrow:** A gray arrow indicates the progression from Step 0 to Step 32, labeled "After Fine-tuning".

### Detailed Analysis or Content Details

**Chart (a) 2Wiki:**

* **Step 0:**

* Base Generator: Near 0% (bar barely visible)

* Google Search: 28.5%

* Web Search: 36.0%

* Wikipedia Search: 28.8%

* Accuracy: 60.0%

* **Step 32:**

* Base Generator: Near 0% (bar barely visible)

* Google Search: 70.5% (+42.0)

* Web Search: 13.6% (-22.4)

* Wikipedia Search: 4.0% (-24.8)

* Accuracy: 77.2% (+17.2%)

**Chart (b) MedQA:**

* **Step 0:**

* Base Generator: 28.7%

* Google Search: 66.2%

* Web Search: Near 0% (bar barely visible)

* Wikipedia Search: Near 0% (bar barely visible)

* Accuracy: 76.0%

* **Step 32:**

* Base Generator: 6.3% (-22.4)

* Google Search: 10.9% (-55.3)

* Web Search: 19.5% (+19.5)

* Wikipedia Search: 59.8% (+59.8)

* Accuracy: 80.0% (+4.0%)

### Key Observations

* Google Search rises sharply after fine-tuning for 2Wiki (28.5% to 70.5%) but falls sharply for MedQA (66.2% to 10.9%).

* The Base Generator stays near 0% for 2Wiki and drops from 28.7% to 6.3% for MedQA.

* Wikipedia Search rises from near 0% to 59.8% for MedQA, but falls from 28.8% to 4.0% for 2Wiki.

* The accuracy increases in both datasets after fine-tuning.

* In MedQA the large decreases are in Base Generator and Google Search; in 2Wiki they are in Web Search and Wikipedia Search.

### Interpretation

The data suggests that fine-tuning improves the overall accuracy of the model in both 2Wiki and MedQA datasets. However, the impact on the Tool Call Ratio varies significantly depending on the search method and the dataset.

The substantial increase in Tool Call Ratio for Google Search in 2Wiki suggests that fine-tuning learned to rely on the information retrieved through Google Search for that dataset. In MedQA the pattern is reversed: calls shift away from Google Search and the Base Generator toward Wikipedia Search and Web Search. The decrease for the Base Generator in MedQA suggests that fine-tuning reduces the model's reliance on its internal knowledge in favor of external retrieval.

The differing behavior of the Wikipedia Search method between the two datasets could be due to the nature of the information available in Wikipedia for each task. The MedQA dataset might benefit more from the structured knowledge available in Wikipedia, while the 2Wiki dataset might require more nuanced information retrieval from Google Search.

The large negative changes in the MedQA dataset for the Base Generator and Google Search suggest that the fine-tuning process may be overfitting to the training data, or that the initial model was particularly reliant on these methods, and the fine-tuning process has altered this reliance. Further investigation is needed to understand the underlying reasons for these trends.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: Tool Call Ratio Comparison Across Training Steps

### Overview

The image displays two side-by-side grouped bar charts comparing the "Tool Call Ratio (%)" for four different tools across two training steps (Step 0 and Step 32) for two distinct datasets: (a) 2Wiki and (b) MedQA. The charts illustrate the change in tool usage frequency after a fine-tuning process, with overall accuracy improvements noted for each dataset.

### Components/Axes

* **Chart Type:** Grouped Bar Chart (two panels).

* **Y-Axis:** Labeled "Tool Call Ratio (%)". Scale ranges from 0 to 80, with major tick marks every 10 units.

* **X-Axis:** Labeled "Training Steps". Each panel shows two discrete points: "Step 0" and "Step 32".

* **Legend:** Positioned at the top center of the entire figure, spanning both panels. It defines four tool categories by color:

* **Base Generator:** Red/Salmon

* **Google Search:** Green

* **Web Search:** Blue

* **Wikipedia Search:** Purple

* **Panel Labels:**

* Left panel is labeled "(a) 2Wiki" at the bottom left.

* Right panel is labeled "(b) MedQA" at the bottom left.

* **Accuracy Annotations:** Above each set of bars for Step 0 and Step 32, text boxes display the overall accuracy ("Acc:") and the change in accuracy after fine-tuning (in parentheses with a +/- sign).

### Detailed Analysis

#### Panel (a): 2Wiki Dataset

* **Step 0:**

* **Base Generator (Red):** Very low ratio, approximately 1-2%.

* **Google Search (Green):** 28.5%

* **Web Search (Blue):** 36.0%

* **Wikipedia Search (Purple):** 28.8%

* **Overall Accuracy:** 60.0%

* **Step 32 (After Fine-tuning):**

* **Base Generator (Red):** Remains very low, near 0%.

* **Google Search (Green):** 70.5% (Annotated change: **+42.0**)

* **Web Search (Blue):** 13.6% (Annotated change: **-22.4**)

* **Wikipedia Search (Purple):** 4.0% (Annotated change: **-24.8**)

* **Overall Accuracy:** 77.2% (Annotated improvement: **+17.2%**)

* **Trend Verification:** The green bar (Google Search) shows a dramatic upward slope from Step 0 to Step 32. The blue (Web Search) and purple (Wikipedia Search) bars show significant downward slopes. The red bar (Base Generator) remains consistently negligible.

#### Panel (b): MedQA Dataset

* **Step 0:**

* **Base Generator (Red):** 28.7%

* **Google Search (Green):** 66.2%

* **Web Search (Blue):** Not visibly present (ratio ~0%).

* **Wikipedia Search (Purple):** Not visibly present (ratio ~0%).

* **Overall Accuracy:** 76.0%

* **Step 32 (After Fine-tuning):**

* **Base Generator (Red):** 6.3% (Annotated change: **-22.4**)

* **Google Search (Green):** 10.9% (Annotated change: **-55.3**)

* **Web Search (Blue):** 19.5% (Annotated change: **+19.5**)

* **Wikipedia Search (Purple):** 59.8% (Annotated change: **+59.8**)

* **Overall Accuracy:** 80.0% (Annotated improvement: **+4.0%**)

* **Trend Verification:** The green bar (Google Search) shows a steep downward slope. The red bar (Base Generator) also slopes downward. The blue (Web Search) and purple (Wikipedia Search) bars show very strong upward slopes from near-zero to significant values.

### Key Observations

1. **Divergent Tool Reliance Post-Fine-Tuning:** The fine-tuning process causes a dramatic shift in which tool is predominantly called, and this shift is dataset-dependent.

* For **2Wiki**, reliance shifts overwhelmingly to **Google Search** (from 28.5% to 70.5%), while Web and Wikipedia Search usage collapses.

* For **MedQA**, reliance shifts overwhelmingly to **Wikipedia Search** (from ~0% to 59.8%), while Google Search and Base Generator usage plummet.

2. **Accuracy Improvements:** Both datasets show improved accuracy after fine-tuning, but the magnitude differs. 2Wiki sees a large +17.2% gain, while MedQA sees a more modest +4.0% gain.

3. **Base Generator Role:** The Base Generator's tool call ratio is minimal for 2Wiki at both steps. For MedQA, it starts as a significant contributor (28.7%) but is largely replaced by specialized search tools after fine-tuning.

4. **Complementary Tool Pairs:** In the final state (Step 32), each dataset shows one dominant tool (Google Search for 2Wiki at 70.5%, Wikipedia Search for MedQA at 59.8%) and one secondary tool (Web Search in both cases, at 13.6% for 2Wiki and 19.5% for MedQA), with the others minimized.
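The dominant and secondary tools at Step 32 can be read off by sorting the ratios listed in the Detailed Analysis. In this sketch the near-zero Base Generator bar for 2Wiki is entered as 0.0, which is an assumption, since the chart gives no label for it. The `top_tools` helper is hypothetical.

```python
# Step 32 tool call ratios (%) from the Detailed Analysis.
# Assumption: the unlabeled near-zero 2Wiki Base Generator bar is set to 0.0.
step32 = {
    "2Wiki": {
        "Base Generator": 0.0,
        "Google Search": 70.5,
        "Web Search": 13.6,
        "Wikipedia Search": 4.0,
    },
    "MedQA": {
        "Base Generator": 6.3,
        "Google Search": 10.9,
        "Web Search": 19.5,
        "Wikipedia Search": 59.8,
    },
}


def top_tools(ratios: dict[str, float], k: int = 2) -> list[str]:
    """Return the k tools with the highest call ratio, highest first."""
    return sorted(ratios, key=ratios.get, reverse=True)[:k]


ranking = {dataset: top_tools(ratios) for dataset, ratios in step32.items()}

for dataset, tools in ranking.items():
    print(f"{dataset}: dominant = {tools[0]}, secondary = {tools[1]}")
```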

### Interpretation

This data suggests that fine-tuning a model to use tools effectively involves not just improving overall accuracy, but also learning to **specialize its tool-calling strategy based on the domain of the task**.

* **Domain-Specific Tool Efficacy:** The 2Wiki dataset (likely involving multi-hop factual questions across wikis) benefits most from broad web search via Google. In contrast, the MedQA dataset (medical question answering) benefits most from the curated, authoritative knowledge found in Wikipedia, suggesting that for specialized domains, targeted knowledge sources are more valuable than general web search.

* **Efficiency and Precision:** The fine-tuning process appears to make the model more efficient and precise in its tool selection. It moves from a more diffuse or default strategy (using Base Generator or a single search tool heavily) to a strategy that heavily favors the tool most predictive of success for that specific domain. The reduction in calls to less effective tools (e.g., Web Search for 2Wiki) indicates the model is learning to avoid noisy or unhelpful retrieval paths.

* **Trade-off Between Generality and Specialization:** The Base Generator, which likely represents the model's internal parametric knowledge, is deprioritized in favor of external retrieval after fine-tuning. This is especially stark in MedQA, where the model almost completely abandons its internal generator for Wikipedia. This implies that for factual, knowledge-intensive tasks, fine-tuning teaches the model to rely more on verified external sources than its own potentially outdated or incomplete parameters.

* **Performance Ceiling:** The smaller accuracy gain for MedQA (+4.0%) despite a massive change in tool strategy could indicate that the task is inherently harder, or that the initial tool strategy (heavy Google Search) was already reasonably effective, leaving less room for improvement. The large gain for 2Wiki suggests its initial strategy was suboptimal.

In summary, the charts demonstrate that effective tool-use fine-tuning is a process of **domain-aware specialization**, where the model learns to dynamically reconfigure its retrieval strategy to align with the knowledge sources most relevant to the task at hand, leading to measurable performance gains.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Grouped Bar Charts: Tool Call Ratio and Accuracy Comparison Across Training Steps

### Overview

The image contains two side-by-side grouped bar charts comparing tool call ratios and accuracy metrics for two datasets: **2Wiki** (a) and **MedQA** (b). Each chart shows performance at two training steps (Step 0 and Step 32) across four search methods: **Base Generator**, **Google Search**, **Web Search**, and **Wikipedia Search**. Key metrics include tool call ratios (%) and accuracy (Acc: %) with percentage changes highlighted.

---

### Components/Axes

#### Chart (a): 2Wiki Dataset

- **X-axis**: Training Steps (Step 0, Step 32)

- **Y-axis**: Tool Call Ratio (%)

- **Legend**:

- Red: Base Generator

- Green: Google Search

- Blue: Web Search

- Purple: Wikipedia Search

- **Accuracy Labels**:

- Step 0: Acc: 60.0%

- Step 32: Acc: 77.2% (+17.2%)

#### Chart (b): MedQA Dataset

- **X-axis**: Training Steps (Step 0, Step 32)

- **Y-axis**: Tool Call Ratio (%)

- **Legend**: Same as 2Wiki

- **Accuracy Labels**:

- Step 0: Acc: 76.0%

- Step 32: Acc: 80.0% (+4.0%)

---

### Detailed Analysis

#### Chart (a): 2Wiki

- **Step 0**:

- Base Generator: Not visible (likely negligible or 0%)

- Google Search: 28.5% (green)

- Web Search: 36.0% (blue)

- Wikipedia Search: 28.8% (purple)

- **Step 32**:

- Google Search: 70.5% (green, +42.0% from Step 0)

- Web Search: 13.6% (blue, -22.4% from Step 0)

- Wikipedia Search: 4.0% (purple, -24.8% from Step 0)

- Base Generator: Not visible (likely negligible or 0%)

#### Chart (b): MedQA

- **Step 0**:

- Base Generator: 28.7% (red)

- Google Search: 66.2% (green)

- Web Search: Not visible (likely negligible or 0%)

- Wikipedia Search: Not visible (likely negligible or 0%)

- **Step 32**:

- Base Generator: 6.3% (red, -22.4% from Step 0)

- Google Search: 10.9% (green, -55.3% from Step 0)

- Web Search: 19.5% (blue, +19.5% from Step 0)

- Wikipedia Search: 59.8% (purple, +59.8% from Step 0)

---

### Key Observations

1. **2Wiki Dataset**:

- Google Search dominates after fine-tuning (Step 32: 70.5%), coinciding with a **17.2-point accuracy increase**.

- Web Search and Wikipedia Search usage collapses post-finetuning.

- Base Generator usage stays near zero at both steps.

2. **MedQA Dataset**:

- Google Search usage plummets by 55.3 percentage points post-finetuning.

- Wikipedia Search usage surges by 59.8 percentage points, becoming the dominant method.

- Base Generator usage drops sharply (-22.4 percentage points).

3. **Accuracy Trends**:

- 2Wiki shows a larger accuracy improvement (+17.2%) compared to MedQA (+4.0%).

- MedQA’s accuracy remains high even as Google Search usage declines.

---

### Interpretation

- **Fine-Tuning Impact**:

- In 2Wiki, fine-tuning shifts reliance to Google Search, suggesting the model prioritizes external knowledge retrieval for this dataset.

- In MedQA, fine-tuning reduces reliance on Google Search and Base Generator, favoring Wikipedia Search. This may indicate the model adapts to domain-specific knowledge structures in MedQA.

- **Accuracy vs. Tool Usage**:

- 2Wiki’s accuracy gain correlates with Google Search dominance, implying external search improves performance for this dataset.

- MedQA’s smaller accuracy gain despite reduced Google Search usage suggests intrinsic model improvements (e.g., better reasoning) may offset external search reliance.

- **Anomalies**:

- Wikipedia Search’s dramatic rise in MedQA (59.8%) warrants investigation into whether this reflects dataset-specific knowledge gaps or model biases.

---

### Spatial Grounding & Trend Verification

- **Legend Placement**: Right-aligned for both charts, ensuring clear color-to-method mapping.

- **Trend Consistency**:

- 2Wiki’s Google Search bar (green) grows taller at Step 32, matching the +42.0% label.

- MedQA’s Wikipedia Search bar (purple) rises sharply, aligning with the +59.8% label.

- **Data Integrity**: Values match legend colors and positional trends (e.g., the red Base Generator bar declines in MedQA and stays negligible in 2Wiki).

---

### Conclusion

The charts demonstrate that fine-tuning significantly alters tool usage patterns, with dataset-specific outcomes. 2Wiki benefits from increased Google Search reliance, while MedQA shifts toward Wikipedia Search. These trends highlight the importance of dataset characteristics in shaping model behavior post-finetuning.
