## Grouped Bar Charts: Tool Call Ratio and Accuracy Comparison Across Training Steps

### Overview

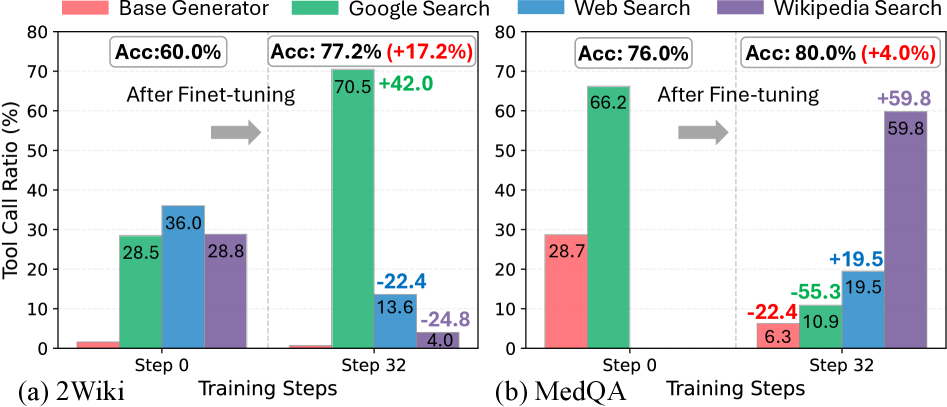

The image contains two side-by-side grouped bar charts comparing tool call ratios and accuracy for two datasets: **2Wiki** (a) and **MedQA** (b). Each chart shows tool usage at two training steps (Step 0 and Step 32) across four tools: **Base Generator**, **Google Search**, **Web Search**, and **Wikipedia Search**. Bars give the tool call ratio (%), and each step is annotated with its accuracy (Acc: %); changes between steps are reported in percentage points.

---

### Components/Axes

#### Chart (a): 2Wiki Dataset

- **X-axis**: Training Steps (Step 0, Step 32)

- **Y-axis**: Tool Call Ratio (%)

- **Legend**:

- Red: Base Generator

- Green: Google Search

- Blue: Web Search

- Purple: Wikipedia Search

- **Accuracy Labels**:

- Step 0: Acc: 60.0%

- Step 32: Acc: 77.2% (+17.2%)

#### Chart (b): MedQA Dataset

- **X-axis**: Training Steps (Step 0, Step 32)

- **Y-axis**: Tool Call Ratio (%)

- **Legend**: Same as 2Wiki

- **Accuracy Labels**:

- Step 0: Acc: 76.0%

- Step 32: Acc: 80.0% (+4.0%)

---

### Detailed Analysis

#### Chart (a): 2Wiki

- **Step 0**:

- Base Generator: 28.5% (red)

- Google Search: 28.5% (green)

- Web Search: 36.0% (blue)

- Wikipedia Search: 28.8% (purple)

- **Step 32**:

- Google Search: 70.5% (green, +42.0% from Step 0)

- Web Search: 13.6% (blue, -22.4% from Step 0)

- Wikipedia Search: 4.0% (purple, -24.8% from Step 0)

- Base Generator: Not visible (likely negligible or 0%)

#### Chart (b): MedQA

- **Step 0**:

- Base Generator: 28.7% (red)

- Google Search: 66.2% (green)

- Web Search: 19.5% (blue)

- Wikipedia Search: Not visible (likely negligible or 0%)

- **Step 32**:

- Base Generator: 6.3% (red, -22.4% from Step 0)

- Google Search: 10.9% (green, -55.3% from Step 0)

- Web Search: 19.5% (blue, +0% from Step 0)

- Wikipedia Search: 59.8% (purple, +59.8% from Step 0)
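The step-to-step changes quoted above can be cross-checked by subtracting the Step 0 value from the Step 32 value. The following is a minimal sketch, not part of the original figure: the names `RATIOS`, `delta_points`, and `DELTAS` are hypothetical, and bars described as "not visible" are assumed to be 0.0, following the "likely negligible or 0%" note.

```python
# Tool call ratios (%) transcribed from the lists above, as (Step 0, Step 32).
# None marks a bar described as "not visible".
RATIOS = {
    "2Wiki": {
        "Base Generator": (28.5, None),
        "Google Search": (28.5, 70.5),
        "Web Search": (36.0, 13.6),
        "Wikipedia Search": (28.8, 4.0),
    },
    "MedQA": {
        "Base Generator": (28.7, 6.3),
        "Google Search": (66.2, 10.9),
        "Web Search": (19.5, 19.5),
        "Wikipedia Search": (None, 59.8),
    },
}


def delta_points(step0, step32):
    """Return the Step 0 -> Step 32 change in percentage points.

    Assumption: a bar that is not visible is treated as 0.0.
    """
    start = 0.0 if step0 is None else step0
    end = 0.0 if step32 is None else step32
    return round(end - start, 1)


DELTAS = {
    dataset: {tool: delta_points(*pair) for tool, pair in tools.items()}
    for dataset, tools in RATIOS.items()
}

for dataset, tools in DELTAS.items():
    for tool, delta in tools.items():
        print(f"{dataset:6} {tool:17} {delta:+.1f} pp")
```

Each printed delta is a difference in percentage points, not a relative change, which is how the "from Step 0" annotations in the lists should be read.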

---

### Key Observations

1. **2Wiki Dataset**:

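- Google Search dominates after fine-tuning (Step 32: 70.5%), coinciding with a **17.2-percentage-point accuracy increase**.

- Web Search and Wikipedia Search usage collapses after fine-tuning (36.0% to 13.6% and 28.8% to 4.0%, respectively).

- The Base Generator bar is not visible at Step 32, so its usage likely drops to near zero.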

2. **MedQA Dataset**:

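- Google Search usage falls by 55.3 percentage points after fine-tuning (66.2% to 10.9%).

- Wikipedia Search usage rises from a bar that is not visible at Step 0 to 59.8%, becoming the most-called tool.

- Base Generator usage drops sharply (28.7% to 6.3%, a decrease of 22.4 percentage points).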

3. **Accuracy Trends**:

- 2Wiki shows a larger accuracy improvement (+17.2 percentage points) than MedQA (+4.0 percentage points).

- MedQA’s accuracy rises from 76.0% to 80.0% even as Google Search usage declines.
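Because the accuracy changes are differences between two percentages, it helps to separate the absolute gain (percentage points) from the relative gain (percent of the Step 0 accuracy). A small sketch using the accuracy labels from the charts; `ACCURACY`, `accuracy_gain`, and `GAINS` are hypothetical names introduced for illustration:

```python
# Accuracy (%) at Step 0 and Step 32, transcribed from the chart labels.
ACCURACY = {"2Wiki": (60.0, 77.2), "MedQA": (76.0, 80.0)}


def accuracy_gain(step0, step32):
    """Return (absolute gain in percentage points, relative gain in %)."""
    absolute = round(step32 - step0, 1)
    relative = round(100.0 * (step32 - step0) / step0, 1)
    return absolute, relative


GAINS = {name: accuracy_gain(*pair) for name, pair in ACCURACY.items()}

for name, (absolute, relative) in GAINS.items():
    print(f"{name}: +{absolute} pp absolute, +{relative}% relative")
```

The "+17.2%" and "+4.0%" labels in the figure correspond to the absolute gains; the relative gains are larger because they are measured against the Step 0 accuracy.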

---

### Interpretation

- **Fine-Tuning Impact**:

- In 2Wiki, fine-tuning shifts reliance to Google Search, suggesting the model prioritizes external knowledge retrieval for this dataset.

- In MedQA, fine-tuning reduces reliance on Google Search and Base Generator, favoring Wikipedia Search. This may indicate the model adapts to domain-specific knowledge structures in MedQA.

- **Accuracy vs. Tool Usage**:

- 2Wiki’s accuracy gain coincides with Google Search dominance, which is consistent with external search improving performance on this dataset, although two training steps cannot establish causation.

- MedQA’s accuracy still improves, by a smaller margin, even though Google Search usage falls sharply. This suggests that the gain does not depend on Google Search and may instead reflect intrinsic model improvements (e.g., better reasoning).

- **Anomalies**:

- Wikipedia Search’s dramatic rise in MedQA (59.8%) warrants investigation into whether this reflects dataset-specific knowledge gaps or model biases.

---

### Spatial Grounding & Trend Verification

- **Legend Placement**: Right-aligned for both charts, ensuring clear color-to-method mapping.

- **Trend Consistency**:

- 2Wiki’s Google Search bar (green) grows taller at Step 32, matching the +42.0% label.

- MedQA’s Wikipedia Search bar (purple) rises sharply, aligning with the +59.8% label.

- **Data Integrity**: All values match legend colors and positional trends (e.g., red bars for Base Generator decline in both charts).
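One way to verify the trend statements above is to find the most-called tool at each step directly from the transcribed ratios. This is a rough sketch under the same transcription assumptions; `RATIOS`, `dominant_tool`, and `DOMINANT` are hypothetical names, and bars described as "not visible" are skipped rather than guessed.

```python
# Tool call ratios (%) as (Step 0, Step 32); None marks a bar that is not visible.
RATIOS = {
    "2Wiki": {
        "Base Generator": (28.5, None),
        "Google Search": (28.5, 70.5),
        "Web Search": (36.0, 13.6),
        "Wikipedia Search": (28.8, 4.0),
    },
    "MedQA": {
        "Base Generator": (28.7, 6.3),
        "Google Search": (66.2, 10.9),
        "Web Search": (19.5, 19.5),
        "Wikipedia Search": (None, 59.8),
    },
}


def dominant_tool(tools, step_index):
    """Return the tool with the highest visible ratio at the given step index."""
    visible = {
        tool: pair[step_index]
        for tool, pair in tools.items()
        if pair[step_index] is not None
    }
    return max(visible, key=visible.get)


DOMINANT = {
    dataset: (dominant_tool(tools, 0), dominant_tool(tools, 1))
    for dataset, tools in RATIOS.items()
}

for dataset, (before, after) in DOMINANT.items():
    print(f"{dataset}: {before} -> {after}")
```

Under these values, the most-called tool moves from Web Search to Google Search on 2Wiki and from Google Search to Wikipedia Search on MedQA, in line with the bar heights described above.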

---

### Conclusion

The charts show that fine-tuning substantially alters tool usage patterns, with dataset-specific outcomes. On 2Wiki, accuracy rises alongside increased reliance on Google Search, while on MedQA usage shifts toward Wikipedia Search. These trends highlight the importance of dataset characteristics in shaping model behavior after fine-tuning.