## Bar Chart: Performance Comparison of Methods Across Language Models

### Overview

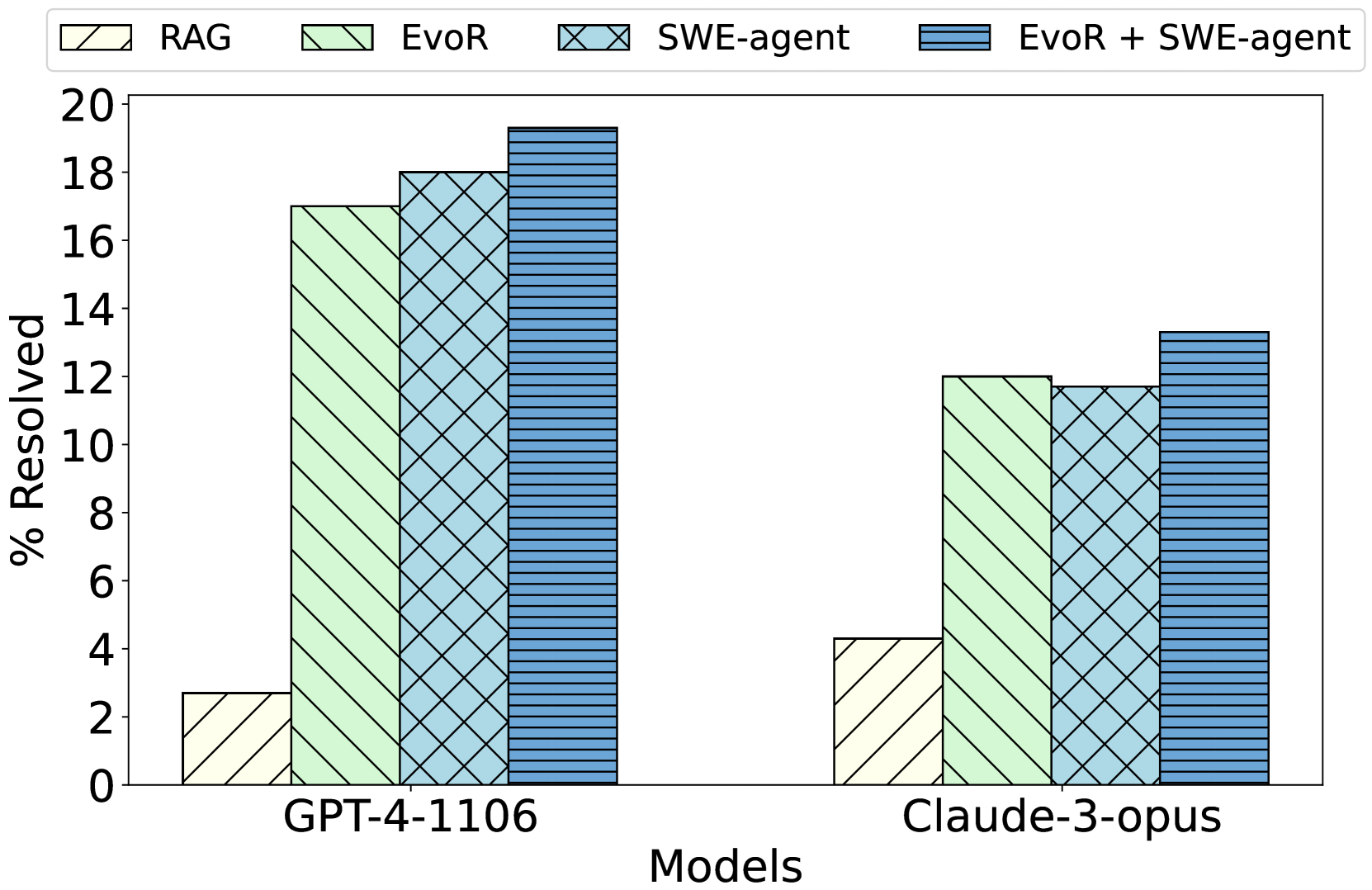

This is a grouped bar chart comparing the performance of four different methods (RAG, EvoR, SWE-agent, and EvoR + SWE-agent) on two large language models (GPT-4-1106 and Claude-3-opus). The performance metric is the percentage of issues resolved ("% Resolved").

### Components/Axes

* **Y-Axis:** Labeled "% Resolved". The scale runs from 0 to 20 with major tick marks every 2 units (0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20).

* **X-Axis:** Labeled "Models". It contains two categorical groups: "GPT-4-1106" (left group) and "Claude-3-opus" (right group).

* **Legend:** Positioned at the top of the chart, centered horizontally. It defines four data series:

    * **RAG:** Light yellow bar with a single diagonal hatch pattern (\\).

    * **EvoR:** Light green bar with a single diagonal hatch pattern (\\).

    * **SWE-agent:** Light blue bar with a cross-hatch pattern (X).

    * **EvoR + SWE-agent:** Darker blue bar with a horizontal line pattern (-).

### Detailed Analysis

**Data Series & Approximate Values:**

**1. Model: GPT-4-1106 (Left Group)**

* **Trend:** Performance increases sequentially from RAG to EvoR + SWE-agent.

* **RAG (Yellow, Diagonal Hatch):** ~2.7% resolved.

* **EvoR (Green, Diagonal Hatch):** ~17.0% resolved.

* **SWE-agent (Light Blue, Cross-hatch):** ~18.0% resolved.

* **EvoR + SWE-agent (Dark Blue, Horizontal Lines):** ~19.3% resolved.

**2. Model: Claude-3-opus (Right Group)**

* **Trend:** Performance increases from RAG to EvoR, dips slightly for SWE-agent, then rises to the highest for EvoR + SWE-agent.

* **RAG (Yellow, Diagonal Hatch):** ~4.3% resolved.

* **EvoR (Green, Diagonal Hatch):** ~12.0% resolved.

* **SWE-agent (Light Blue, Cross-hatch):** ~11.7% resolved.

* **EvoR + SWE-agent (Dark Blue, Horizontal Lines):** ~13.3% resolved.
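The two trend statements above can be checked mechanically. The following is a minimal sketch, assuming the approximate values listed above are taken at face value; the names `RESOLVED` and `rank_methods` are illustrative and do not come from the chart.

```python
# Approximate "% Resolved" values read off the chart; not exact source data.
RESOLVED = {
    "GPT-4-1106": {
        "RAG": 2.7, "EvoR": 17.0, "SWE-agent": 18.0, "EvoR + SWE-agent": 19.3,
    },
    "Claude-3-opus": {
        "RAG": 4.3, "EvoR": 12.0, "SWE-agent": 11.7, "EvoR + SWE-agent": 13.3,
    },
}


def rank_methods(scores):
    """Return method names sorted from lowest to highest % Resolved."""
    return sorted(scores, key=scores.get)


if __name__ == "__main__":
    for model, scores in RESOLVED.items():
        print(f"{model}: " + " < ".join(rank_methods(scores)))
```

The ordering differs between the two groups only in the middle two positions: SWE-agent sits above EvoR for GPT-4-1106 and below it for Claude-3-opus.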

### Key Observations

1. **Method Hierarchy:** For both models, the combined "EvoR + SWE-agent" method achieves the highest resolution percentage. The standalone "RAG" method performs the worst by a wide margin, trailing the next-lowest method by roughly 14.3 points on GPT-4-1106 and 7.4 points on Claude-3-opus.

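2. **Model Comparison:** GPT-4-1106 outperforms Claude-3-opus on three of the four methods (EvoR, SWE-agent, and EvoR + SWE-agent). RAG is the exception: Claude-3-opus (~4.3%) scores higher than GPT-4-1106 (~2.7%). The gap in favor of GPT-4-1106 is largest for SWE-agent (~6.3 points) and EvoR + SWE-agent (~6.0 points), followed by EvoR (~5.0 points).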

3. **Synergy Effect:** The combination of EvoR and SWE-agent improves on the better standalone method by about 1.3 points for both models, suggesting a complementary relationship.

4. **Anomaly:** For Claude-3-opus, the SWE-agent method (~11.7%) performs slightly worse than the EvoR method (~12.0%), which is the opposite of the trend seen with GPT-4-1106.
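The arithmetic behind these observations can be sketched as follows. This is an illustration built on the approximate values read from the chart, so the results inherit that reading error; the helper names (`model_gap`, `synergy_gain`, `fold_over_rag`) are hypothetical and chosen only for this example.

```python
# Approximate "% Resolved" values read off the chart; not exact source data.
RESOLVED = {
    "GPT-4-1106": {
        "RAG": 2.7, "EvoR": 17.0, "SWE-agent": 18.0, "EvoR + SWE-agent": 19.3,
    },
    "Claude-3-opus": {
        "RAG": 4.3, "EvoR": 12.0, "SWE-agent": 11.7, "EvoR + SWE-agent": 13.3,
    },
}
METHODS = ["RAG", "EvoR", "SWE-agent", "EvoR + SWE-agent"]


def model_gap(method):
    """GPT-4-1106 minus Claude-3-opus, in percentage points."""
    gap = RESOLVED["GPT-4-1106"][method] - RESOLVED["Claude-3-opus"][method]
    return round(gap, 1)


def synergy_gain(model):
    """Combined method minus the better standalone method, in points."""
    s = RESOLVED[model]
    return round(s["EvoR + SWE-agent"] - max(s["EvoR"], s["SWE-agent"]), 1)


def fold_over_rag(model, method):
    """How many times higher a method scores than RAG on the same model."""
    s = RESOLVED[model]
    return round(s[method] / s["RAG"], 1)


if __name__ == "__main__":
    for method in METHODS:
        print(f"gap ({method}): {model_gap(method):+.1f} points")
    for model in RESOLVED:
        print(f"synergy gain ({model}): {synergy_gain(model):+.1f} points")
        print(f"combined vs. RAG ({model}): "
              f"{fold_over_rag(model, 'EvoR + SWE-agent')}x")
```

A negative gap means Claude-3-opus scores higher for that method, which is the case only for RAG.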

### Interpretation

The data suggests that EvoR and SWE-agent substantially outperform a basic RAG approach on the task measured (likely software engineering issue resolution, given the "SWE-agent" name): each resolves roughly 6 to 7 times as many issues as RAG on GPT-4-1106 and about 3 times as many on Claude-3-opus. The combined "EvoR + SWE-agent" method scores highest on both models, which suggests that pairing EvoR with a software engineering agent is more effective than using either component in isolation, although the chart alone cannot establish why.

The 5 to 6 point gap between GPT-4-1106 and Claude-3-opus on the three stronger methods implies that the capabilities of the base model remain a critical factor even when it is augmented with these specialized methods. The gap does not hold for plain RAG, where Claude-3-opus scores slightly higher, so the advantage appears tied to how each model works with these methods rather than being uniform. The chart offers no evidence about the cause, and attributing it to specific properties of either model would be speculation. The slight underperformance of SWE-agent relative to EvoR on Claude-3-opus (about 0.3 points) is small enough to fall within the precision of reading values off the chart; if real, it could indicate a mismatch between the agent's design and this model's response patterns. Overall, the chart shows the value of combining methods while also highlighting the persistent influence of the underlying model's quality.