TECHNICAL ASSET FINGERPRINT

48956a9dd6c34f863bfb77b7


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

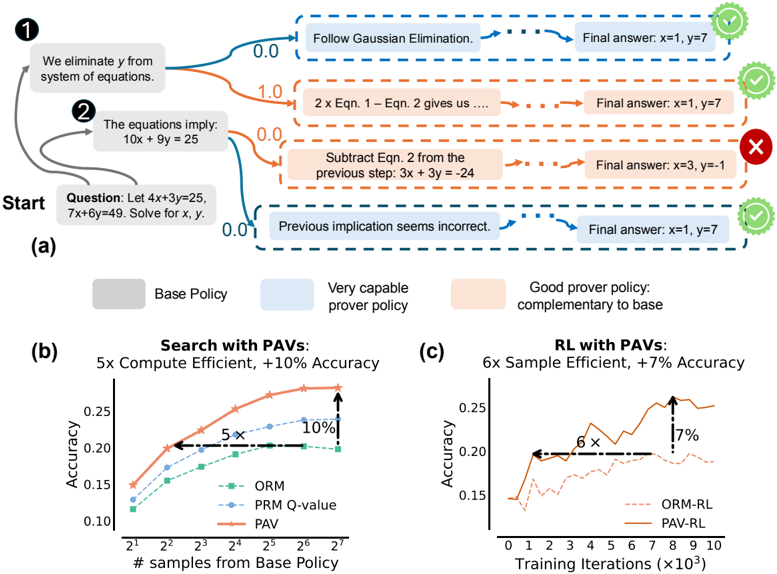

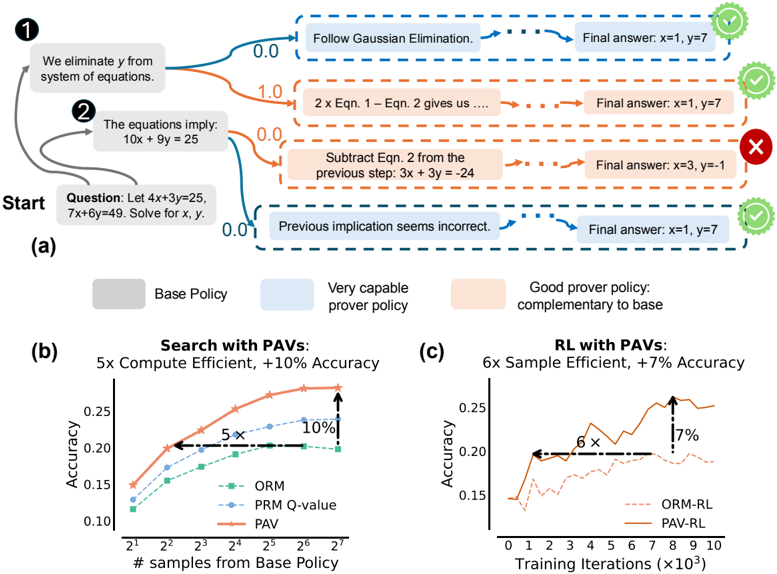

## Decision Tree and Performance Charts: PAVs

### Overview

The image presents a decision tree diagram illustrating a problem-solving process, alongside two line charts comparing the performance of different algorithms with and without PAVs (process advantage verifiers). The decision tree shows candidate steps for solving a system of two linear equations; the charts plot accuracy against the number of samples drawn from the base policy (b) and against training iterations (c).

### Components/Axes

**Decision Tree (a):**

* **Nodes:** Represented by rounded rectangles containing text describing the current state or action.

* **Edges:** Arrows indicating the flow of the decision-making process.

* **Start Node:** Labeled "Start" with the initial question: "Let 4x+3y=25, 7x+6y=49. Solve for x, y."

* **Intermediate Nodes:** Describe steps like "We eliminate y from system of equations" and "The equations imply: 10x + 9y = 25".

* **Leaf Nodes:** Contain "Final answer: x=..., y=..." and are marked with either a green checkmark (correct) or a red "X" (incorrect).

* **Edge Labels:** Numerical values (0.0, 1.0) associated with certain edges.

* **Node Styles:** Nodes are filled with different colors: gray ("Base Policy"), light blue ("Very capable prover policy"), and light orange ("Good prover policy: complementary to base").

**Chart (b): Search with PAVs**

* **Title:** "Search with PAVs: 5x Compute Efficient, +10% Accuracy"

* **X-axis:** "# samples from Base Policy" with a logarithmic scale (2<sup>1</sup> to 2<sup>7</sup>).

* **Y-axis:** "Accuracy" ranging from 0.10 to 0.25.

* **Data Series:**

    * ORM (green dashed line with square markers)

    * PRM Q-value (blue dashed line with triangle markers)

    * PAV (orange solid line with star markers)

* **Annotations:** "5x" and "+10%" indicating performance improvements.

**Chart (c): RL with PAVs**

* **Title:** "RL with PAVs: 6x Sample Efficient, +7% Accuracy"

* **X-axis:** "Training Iterations (×10<sup>3</sup>)" ranging from 0 to 10.

* **Y-axis:** "Accuracy" ranging from 0.15 to 0.25.

* **Data Series:**

    * ORM-RL (brown dashed line)

    * PAV-RL (dark orange solid line)

* **Annotations:** "6x" and "+7%" indicating performance improvements.

### Detailed Analysis

**Decision Tree (a):**

* The tree starts with the question and branches into two initial steps.

* The first branch ("We eliminate y from system of equations") leads to "Follow Gaussian Elimination" (blue node) and a correct final answer (x=1, y=7).

* The second branch ("The equations imply: 10x + 9y = 25") splits into two paths.

    * One path ("2 x Eqn. 1 - Eqn. 2 gives us...") leads to a correct final answer (x=1, y=7).

    * The other path ("Subtract Eqn. 2 from the previous step: 3x + 3y = -24") leads to an incorrect final answer (x=3, y=-1).

* A final branch from the initial question ("Previous implication seems incorrect.") leads to a correct final answer (x=1, y=7).
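The answers quoted in the tree can be checked directly. The following is a minimal sketch, not part of the figure: the helper names `solve_2x2` and `satisfies` are illustrative assumptions, and Cramer's rule is used here only as a convenient way to solve the 2x2 system exactly.

```python
from fractions import Fraction


def solve_2x2(a1, b1, c1, a2, b2, c2):
    """Solve a1*x + b1*y = c1 and a2*x + b2*y = c2 exactly (Cramer's rule)."""
    det = a1 * b2 - a2 * b1
    if det == 0:
        raise ValueError("system has no unique solution")
    x = Fraction(c1 * b2 - c2 * b1, det)
    y = Fraction(a1 * c2 - a2 * c1, det)
    return x, y


def satisfies(x, y):
    """Check a candidate answer against both equations from the start node."""
    return 4 * x + 3 * y == 25 and 7 * x + 6 * y == 49


x, y = solve_2x2(4, 3, 25, 7, 6, 49)
print("solution:", x, y)
print("x=1, y=7 satisfies the system:", satisfies(1, 7))
print("x=3, y=-1 satisfies the system:", satisfies(3, -1))
# The node "The equations imply: 10x + 9y = 25" can be tested at the true solution.
print("10x + 9y at the solution:", 10 * x + 9 * y)
```

The step "3x + 3y = -24" is arithmetically consistent with the incorrect implication, since (10x + 9y) - (7x + 6y) = 25 - 49. The error therefore originates in the implication itself, which the true solution does not satisfy (10·1 + 9·7 = 73).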

**Chart (b): Search with PAVs**

* **ORM:** Starts at approximately 0.12 accuracy and increases to approximately 0.20 accuracy.

* **PRM Q-value:** Starts at approximately 0.15 accuracy and increases to approximately 0.22 accuracy.

* **PAV:** Starts at approximately 0.14 accuracy and increases to approximately 0.27 accuracy.

* Apart from the smallest sample count, where PRM Q-value is read slightly higher, the PAV line stays above the other two.

* The "5x Compute Efficient" annotation indicates that PAV achieves a certain accuracy level with 5 times fewer samples than another method (likely ORM or PRM Q-value).

* The "+10% Accuracy" annotation indicates that PAV achieves 10% higher accuracy than another method at a certain sample size.
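One way to read a "k× compute efficient" annotation is as the ratio of sample counts at which two curves first reach the same target accuracy. The sketch below illustrates that reading only. The curve values are hypothetical placeholders, not numbers taken from the figure, and the function name `samples_to_reach` and the log-linear interpolation are assumptions made for this example.

```python
import math


def samples_to_reach(samples, accuracy, target):
    """Sample count at which a curve first reaches `target`.

    Interpolates linearly in log2(samples) between neighbouring points.
    Returns None if the curve never reaches the target.
    """
    for i, acc in enumerate(accuracy):
        if acc >= target:
            if i == 0:
                return float(samples[0])
            x0, x1 = math.log2(samples[i - 1]), math.log2(samples[i])
            y0, y1 = accuracy[i - 1], acc
            t = (target - y0) / (y1 - y0)  # y0 < target <= y1, so y1 > y0
            return 2 ** (x0 + t * (x1 - x0))
    return None


# HYPOTHETICAL curves on the 2^1 .. 2^7 grid, for illustration only.
samples = [2, 4, 8, 16, 32, 64, 128]
baseline = [0.12, 0.14, 0.16, 0.17, 0.18, 0.20, 0.20]
pav = [0.14, 0.16, 0.18, 0.20, 0.22, 0.24, 0.25]

target = 0.20
n_base = samples_to_reach(samples, baseline, target)
n_pav = samples_to_reach(samples, pav, target)
print(f"baseline needs ~{n_base:.0f} samples, PAV needs ~{n_pav:.0f}")
print(f"efficiency ratio at accuracy {target}: {n_base / n_pav:.1f}x")
```

The ratio depends on the chosen target accuracy, so a single headline factor such as "5x" presumably refers to one particular accuracy level on the chart.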

**Chart (c): RL with PAVs**

* **ORM-RL:** Starts at approximately 0.15 accuracy and fluctuates, ending around 0.20 accuracy.

* **PAV-RL:** Starts at approximately 0.20 accuracy and increases to approximately 0.26 accuracy.

* The PAV-RL line consistently outperforms the ORM-RL line.

* The "6x Sample Efficient" annotation indicates that PAV-RL achieves a certain accuracy level with 6 times fewer training iterations than ORM-RL.

* The "+7% Accuracy" annotation indicates that PAV-RL achieves 7% higher accuracy than ORM-RL at a certain number of training iterations.

### Key Observations

* The decision tree illustrates different approaches to solving a system of equations, with varying degrees of success.

* The charts demonstrate that using PAVs improves both computational efficiency and accuracy in both search and reinforcement learning contexts.

* In both charts, the PAV-based methods (PAV and PAV-RL) consistently outperform their counterparts (ORM and ORM-RL).

### Interpretation

The data suggest that incorporating PAVs (process advantage verifiers) can improve both search and reinforcement learning on this task. The decision tree highlights the importance of choosing correct steps in problem-solving, as some paths lead to incorrect solutions. The charts quantify the benefit: the "5x" and "6x" annotations mark gains in compute and sample efficiency, and the "+10%" and "+7%" annotations mark gains in accuracy over the baselines.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Prover Policy and Performance Analysis

### Overview

The image presents a diagram illustrating a system for solving equations, comparing a "Base Policy" to a "Very capable prover policy" and evaluating their performance using PAVs (process advantage verifiers). The diagram shows a sequence of steps in solving a system of equations, with feedback on the correctness of each final answer. Below this, two charts (b and c) compare the performance of different policies using metrics like accuracy and efficiency.

### Components/Axes

The diagram consists of three main sections: (a) a flow diagram of the equation-solving process, (b) a chart comparing "Search with PAVs" performance, and (c) a chart comparing "RL with PAVs" performance.

**Section (a):**

* **Start:** Indicates the beginning of the problem.

* **Question:** "Let 4x+3y=25, 7x+6y=49. Solve for x, y."

* **Step 1:** "We eliminate y from system of equations." (Associated with a blue curved line)

* **Step 2:** "The equations imply: 10x + 9y = 25" (Associated with an orange curved line)

* **Feedback:** Green checkmarks indicate correct steps, red crosses indicate incorrect steps.

* **Final Answer:** Displayed alongside each step, showing the system's solution attempt.

* **Policy Blocks:** "Base Policy" (grey), "Very capable prover policy" (light blue), "Good prover policy: complementary to base" (dark blue).

**Section (b): Search with PAVs**

* **X-axis:** "# samples from Base Policy" (Scale: 2<sup>1</sup> to 2<sup>7</sup>)

* **Y-axis:** "Accuracy" (Scale: 0.10 to 0.25)

* **Legend:**

    * "ORM" (Solid Red Line)

    * "PRM Q-value" (Dashed Orange Line)

    * "PAV" (Dashed Teal Line)

* **Annotations:** "5x Compute Efficient", "10% Accuracy"

**Section (c): RL with PAVs**

* **X-axis:** "Training Iterations (x10<sup>3</sup>)" (Scale: 0 to 10)

* **Y-axis:** "Accuracy" (Scale: 0.10 to 0.25)

* **Legend:**

    * "ORM-RL" (Solid Red Line)

    * "PAV-RL" (Dashed Teal Line)

* **Annotations:** "6x Sample Efficient", "7% Accuracy"

### Detailed Analysis or Content Details

**Section (a):**

The flow diagram shows the system attempting to solve the given equations.

* Step 1: The system attempts to eliminate 'y', resulting in a final answer of x=1, y=7 (Correct).

* Step 2: The system attempts to derive the next equation, resulting in a final answer of x=1, y=7 (Correct).

* A third attempt (not numbered) leads to an incorrect implication and a final answer of x=-1, y=3 (Incorrect).

* A fourth attempt (not numbered) leads to a final answer of x=1, y=7 (Correct).
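For reference, the correct answer follows from a single elimination step. The derivation below is a worked sketch of standard elimination applied to the equations in the question; it is not a transcription of text in the figure.

```latex
\begin{aligned}
2\,(4x + 3y) - (7x + 6y) &= 2 \cdot 25 - 49 \\
8x + 6y - 7x - 6y &= 1 \\
x &= 1 \\
4 \cdot 1 + 3y = 25 \;&\Rightarrow\; y = 7
\end{aligned}
```

Substituting back confirms the result: 7·1 + 6·7 = 49.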

**Section (b): Search with PAVs**

* **ORM:** Starts at approximately 0.12 accuracy at 2<sup>1</sup> samples, rises to approximately 0.22 accuracy at 2<sup>7</sup> samples. The line slopes upward, with increasing steepness.

* **PRM Q-value:** Starts at approximately 0.11 accuracy at 2<sup>1</sup> samples, rises to approximately 0.18 accuracy at 2<sup>7</sup> samples. The line slopes upward, but less steeply than ORM.

* **PAV:** Starts at approximately 0.10 accuracy at 2<sup>1</sup> samples, rises to approximately 0.20 accuracy at 2<sup>7</sup> samples. The line slopes upward, with a moderate steepness.

**Section (c): RL with PAVs**

* **ORM-RL:** Starts at approximately 0.12 accuracy at 0 training iterations, rises to approximately 0.23 accuracy at 9x10<sup>3</sup> training iterations. The line initially rises steeply, then plateaus.

* **PAV-RL:** Starts at approximately 0.11 accuracy at 0 training iterations, rises to approximately 0.24 accuracy at 9x10<sup>3</sup> training iterations. The line rises more consistently than ORM-RL, with a slight peak around 8x10<sup>3</sup> iterations.

### Key Observations

* In Section (b), PAV consistently outperforms PRM Q-value, and achieves comparable accuracy to ORM with fewer samples.

* In Section (c), PAV-RL consistently outperforms ORM-RL in terms of accuracy.

* The annotations highlight that using PAVs results in 5x compute efficiency and 10% accuracy improvement in the search process (Section b), and 6x sample efficiency and 7% accuracy improvement in the RL process (Section c).

* The system demonstrates an ability to recover from incorrect implications (as seen in the red 'X' step in Section a).

### Interpretation

The diagram illustrates the use of PAVs (process advantage verifiers) to improve the performance of both search and reinforcement learning algorithms in the context of equation solving. According to the annotations, PAVs improve both accuracy and efficiency. The flow diagram in Section (a) illustrates the step-by-step nature of the problem-solving process and the system's ability to recover from an incorrect step. The comparison between the "Base Policy" and the "Very capable prover policy" suggests that the choice of prover policy affects how robustly an error is corrected. The annotations quantify the improvements (5x and 6x efficiency, +10% and +7% accuracy). The outperformance of PAV-based methods in both charts suggests the advantage is not specific to one algorithmic setting. The slight peak in the PAV-RL curve around 8x10<sup>3</sup> iterations might indicate a point beyond which further training iterations yield diminishing returns.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram and Charts: Prover Policy Analysis and Performance Comparison

### Overview

The image is a composite technical figure containing three distinct parts: (a) a flowchart illustrating a problem-solving process with different policies, and two line charts (b and c) comparing the performance of different methods ("ORM", "PRM Q-value", "PAV", "ORM-RL", "PAV-RL") on accuracy metrics. The figure appears to be from a research paper on automated reasoning or reinforcement learning, demonstrating the effectiveness of a proposed method called "PAV".

### Components/Axes

**Part (a): Flowchart**

* **Title/Label:** "(a)" at the bottom left.

* **Start Node:** Labeled "Start" on the left.

* **Process Flow:** A multi-step flowchart showing the process of solving a system of linear equations.

* **Equations Presented:**

    * "Question: Let 4x+3y=25, 7x+6y=49. Solve for x, y."

    * "The equations imply: 10x + 9y = 25"

    * "We eliminate y from system of equations."

* **Decision Paths:** Three main paths branch from the "eliminate y" step, each associated with a numerical value (0.0, 1.0, 0.0) and a policy type (indicated by color).

* **Final Answers:** Each path concludes with a "Final answer:" statement.

* **Legend (Bottom of Part a):**

    * **Gray Box:** "Base Policy"

    * **Light Blue Box:** "Very capable prover policy"

    * **Light Orange Box:** "Good prover policy: complementary to base"

    * **Outcome Icons:** Green checkmarks (✓) indicate correct final answers (x=1, y=7). A red cross (X) indicates an incorrect final answer (x=3, y=-1).

**Part (b): Line Chart - "Search with PAVs:"**

* **Title:** "Search with PAVs: 5x Compute Efficient, +10% Accuracy"

* **X-axis:** Label: "# samples from Base Policy". Scale: Logarithmic base 2, with markers at 2¹, 2², 2³, 2⁴, 2⁵, 2⁶, 2⁷.

* **Y-axis:** Label: "Accuracy". Scale: Linear, from 0.10 to 0.25, with increments of 0.05.

* **Legend (Within Chart):**

    * **Green dashed line with square markers:** "ORM"

    * **Blue dashed line with circle markers:** "PRM Q-value"

    * **Orange solid line with triangle markers:** "PAV"

* **Annotations:**

    * A black dashed arrow labeled "5x" pointing from the ORM/PRM curves to the PAV curve at approximately 2² samples.

    * A black dashed arrow labeled "10%" pointing vertically from the ORM/PRM plateau to the PAV curve at 2⁷ samples.

**Part (c): Line Chart - "RL with PAVs:"**

* **Title:** "RL with PAVs: 6x Sample Efficient, +7% Accuracy"

* **X-axis:** Label: "Training Iterations (×10³)". Scale: Linear, from 0 to 10, with increments of 1.

* **Y-axis:** Label: "Accuracy". Scale: Linear, from 0.15 to 0.25, with increments of 0.05.

* **Legend (Within Chart):**

    * **Brown dashed line:** "ORM-RL"

    * **Orange solid line:** "PAV-RL"

* **Annotations:**

    * A black dashed arrow labeled "6x" pointing horizontally from the ORM-RL curve to the PAV-RL curve at approximately 0.20 accuracy.

    * A black dashed arrow labeled "7%" pointing vertically from the ORM-RL plateau to the PAV-RL curve at 10k iterations.

### Detailed Analysis

**Part (a) - Flowchart Analysis:**

The flowchart traces the solution of the equation system `4x+3y=25, 7x+6y=49`.

1. **Path 1 (Top, Blue - "Very capable prover policy"):** Follows "Gaussian Elimination." Leads to the correct answer `x=1, y=7`. Associated value: 0.0.

2. **Path 2 (Middle, Orange - "Good prover policy"):** Performs the operation "2 x Eqn. 1 - Eqn. 2 gives us ....". Leads to the correct answer `x=1, y=7`. Associated value: 1.0.

3. **Path 3 (Bottom, Orange - "Good prover policy"):** Performs the operation "Subtract Eqn. 2 from the previous step: 3x + 3y = -24". Leads to an incorrect answer `x=3, y=-1`. Associated value: 0.0.

4. **Path 4 (Bottom, Blue - "Very capable prover policy"):** Notes "Previous implication seems incorrect." and loops back to correct the error, leading to the correct answer `x=1, y=7`. Associated value: 0.01.

**Part (b) - Search Performance:**

* **Trend Verification:**

    * **ORM (Green):** Slopes upward from ~0.12 at 2¹ samples, plateaus around 0.20 from 2⁴ samples onward.

    * **PRM Q-value (Blue):** Follows a very similar trend to ORM, slightly above it, also plateauing near 0.20.

    * **PAV (Orange):** Slopes upward more steeply from ~0.14 at 2¹ samples, surpassing the other methods by 2² samples, and continues to rise, reaching ~0.25 at 2⁷ samples.

* **Key Data Points (Approximate):**

    * At 2¹ samples: ORM ~0.12, PRM ~0.13, PAV ~0.14.

    * At 2⁷ samples: ORM/PRM ~0.20, PAV ~0.25.

* **Claimed Improvements:** The chart claims PAV is "5x Compute Efficient" (reaching a given accuracy level with fewer samples) and achieves "+10% Accuracy" (higher final accuracy) compared to the baselines.

**Part (c) - Reinforcement Learning Performance:**

* **Trend Verification:**

    * **ORM-RL (Brown):** Starts near 0.15, rises with high variance, and plateaus around 0.19-0.20 after 4k iterations.

    * **PAV-RL (Orange):** Starts near 0.15, rises more smoothly and consistently, surpasses ORM-RL by 2k iterations, and plateaus around 0.26-0.27 after 6k iterations.

* **Key Data Points (Approximate):**

    * At 0 iterations: Both ~0.15.

    * At 10k iterations: ORM-RL ~0.20, PAV-RL ~0.27.

* **Claimed Improvements:** The chart claims PAV-RL is "6x Sample Efficient" (reaches a given accuracy level in fewer training iterations) and achieves "+7% Accuracy" (higher final accuracy) compared to ORM-RL.
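An annotation such as "+7%" can be read as an absolute gain in percentage points or as a relative gain. The short sketch below computes both from the approximate end-point values read off the chart above (ORM-RL ~0.20, PAV-RL ~0.27); the function name `accuracy_gain` is an assumption for this example.

```python
def accuracy_gain(baseline, improved):
    """Return (absolute gain in accuracy, relative gain as a fraction)."""
    absolute = improved - baseline
    relative = absolute / baseline
    return absolute, relative


# Approximate values at 10k iterations, as read off the chart above.
abs_gain, rel_gain = accuracy_gain(0.20, 0.27)
print(f"absolute gain: {abs_gain * 100:.0f} percentage points")
print(f"relative gain: {rel_gain * 100:.0f}%")
```

With these read-off values the absolute gain is about 7 percentage points, which is consistent with reading "+7%" as percentage points. Because the values are approximate, this is a plausibility check rather than a confirmation.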

### Key Observations

1. **Policy Differentiation in (a):** The "Very capable prover policy" (blue) is shown to both follow a standard method (Gaussian Elimination) and perform error detection/correction. The "Good prover policy" (orange) shows both a successful alternative algebraic step and a failing step that introduces an error.

2. **Consistent Superiority of PAV:** In both charts (b) and (c), the PAV-based method (solid orange line) consistently outperforms the baseline methods (dashed lines) in both final accuracy and efficiency (compute or sample).

3. **Efficiency vs. Accuracy:** The annotations highlight two types of improvement: efficiency (5x/6x) meaning faster convergence, and absolute performance gain (+10%/+7%) meaning a higher ceiling.

4. **Plateau Behavior:** The baseline methods (ORM, PRM, ORM-RL) tend to plateau earlier and at a lower accuracy level than the PAV methods, which continue to improve for longer.

### Interpretation

This figure presents a compelling case for the effectiveness of the "PAV" method in the context of automated reasoning tasks.

* **Part (a)** serves as a conceptual illustration, showing that different "prover policies" can generate diverse solution paths with varying correctness. It highlights the importance of not just generating steps, but also evaluating and correcting them, which is a capability associated with the "Very capable" policy.

* **Parts (b) and (c)** provide empirical, quantitative evidence. They indicate that integrating PAVs (process advantage verifiers) leads to gains in two dimensions:

    1. **Efficiency:** Achieving target performance levels with substantially fewer computational resources (5x fewer samples in search) or less training (6x fewer iterations in RL).

    2. **Effectiveness:** Reaching a higher final accuracy (+7-10%), suggesting PAVs help the system overcome limitations or local optima that constrain the baseline methods.

The overall narrative is that PAVs enhance both the *process* of reasoning (by providing better verification and correction, as hinted in (a)) and the *outcome* of learning (as shown in (b) and (c)), making them a valuable component for building more capable and efficient AI reasoning systems. The separation into "Search" and "RL" contexts suggests the benefit holds across different algorithmic paradigms.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart and Graphs: Problem-Solving Strategies and Performance Metrics

### Overview

The image contains three components:

1. A flowchart (a) illustrating a problem-solving process for solving a system of equations.

2. Two line graphs (b and c) comparing the performance of different methods (ORM, PRM Q-value, PAV, ORM-RL, PAV-RL) in terms of accuracy and efficiency.

---

### Components/Axes

#### (a) Flowchart

- **Start Node**: "Start" with the question: "Let 4x + 3y = 25, 7x + 6y = 49. Solve for x, y."

- **Step 1**: "We eliminate y from the system of equations."

    - **Blue Arrow (0.0)**: Leads to "Follow Gaussian Elimination" → Final answer: x=1, y=7 (✅).

    - **Orange Arrow (1.0)**: Leads to "2 × Eqn. 1 – Eqn. 2 gives us..." → Final answer: x=1, y=7 (✅).

- **Step 2**: "The equations imply: 10x + 9y = 25."

    - **Blue Arrow (0.0)**: Leads to "Previous implication seems incorrect" → Final answer: x=1, y=7 (✅).

    - **Orange Arrow (0.0)**: Leads to "Subtract Eqn. 2 from the previous step: 3x + 3y = -24" → Final answer: x=3, y=-1 (❌).

- **Final Nodes**:

    - ✅ Correct answers (x=1, y=7) via Gaussian elimination or 2×Eqn.1–Eqn.2.

    - ❌ Incorrect answer (x=3, y=-1) via subtraction.
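The branches listed above can be encoded as plain records, with each final answer checked by substitution into the original equations. This is an illustrative sketch only: the `Branch` dataclass and its field names are assumptions made for this example, and the edge values are copied from the list above without interpreting what they measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Branch:
    step: str            # first step taken from the start node
    arrow: str           # arrow colour in the flowchart
    edge_value: float    # number printed on the arrow
    continuation: str    # text of the follow-up node
    answer: tuple        # (x, y) reported as the final answer


def is_correct(answer):
    """Substitute (x, y) into both equations from the start node."""
    x, y = answer
    return 4 * x + 3 * y == 25 and 7 * x + 6 * y == 49


BRANCHES = [
    Branch("eliminate y", "blue", 0.0, "Follow Gaussian Elimination", (1, 7)),
    Branch("eliminate y", "orange", 1.0, "2 x Eqn. 1 - Eqn. 2 gives us...", (1, 7)),
    Branch("10x + 9y = 25", "blue", 0.0, "Previous implication seems incorrect", (1, 7)),
    Branch("10x + 9y = 25", "orange", 0.0, "Subtract Eqn. 2 from the previous step", (3, -1)),
]

for b in BRANCHES:
    mark = "correct" if is_correct(b.answer) else "incorrect"
    print(f"{b.step:>14} | {b.arrow:<6} | {b.edge_value:.1f} | {mark}")
```

Checking answers by substitution keeps the correctness marks independent of how the figure was transcribed: only the branch ending in x=3, y=-1 fails both equations.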

#### (b) Graph: "Search with PAVs"

- **X-axis**: "# samples from Base Policy" (log scale: 2¹ to 2⁷).

- **Y-axis**: "Accuracy" (0.10 to 0.25).

- **Legend**:

    - **ORM** (green dashed line): Starts at ~0.12, peaks at ~0.20.

    - **PRM Q-value** (blue dashed line): Starts at ~0.13, peaks at ~0.22.

    - **PAV** (orange solid line): Starts at ~0.15, peaks at ~0.25.

- **Annotations**:

    - "5× Compute Efficient, +10% Accuracy" (PAV vs. base).

#### (c) Graph: "RL with PAVs"

- **X-axis**: "Training Iterations (×10³)" (0 to 10).

- **Y-axis**: "Accuracy" (0.15 to 0.25).

- **Legend**:

    - **ORM-RL** (red dashed line): Starts at ~0.15, peaks at ~0.20.

    - **PAV-RL** (orange solid line): Starts at ~0.15, peaks at ~0.25.

- **Annotations**:

    - "6× Sample Efficient, +7% Accuracy" (PAV-RL vs. base).

---

### Detailed Analysis

#### (a) Flowchart

- **Flow**:

    1. Start → Step 1 (eliminate y) or Step 2 (the implication 10x + 9y = 25).

    2. Step 1 branches into two paths:

        - Blue (correct): Follows Gaussian elimination → x=1, y=7.

        - Orange (correct): 2 × Eqn. 1 – Eqn. 2 → x=1, y=7.

    3. Step 2 branches into two paths:

        - Blue (correct): Rejects flawed implication → x=1, y=7.

        - Orange (incorrect): Subtracts Eqn. 2 from the flawed implication → x=3, y=-1.

#### (b) Graph: "Search with PAVs"

- **Trends**:

    - All methods improve accuracy with more samples.

    - **PAV** consistently outperforms ORM and PRM Q-value.

    - At 2⁷ samples, PAV reaches ~0.25 accuracy (vs. ~0.22 for PRM Q-value and ~0.20 for ORM).

#### (c) Graph: "RL with PAVs"

- **Trends**:

    - **PAV-RL** achieves higher accuracy (~0.25) than ORM-RL (~0.20) after 10 × 10³ iterations.

    - PAV-RL shows sharper improvement (6× sample efficiency).

---

### Key Observations

1. **Flowchart**:

    - Gaussian elimination and 2×Eqn.1–Eqn.2 yield correct results.

    - Subtracting Eqn. 2 from the incorrect implication 10x + 9y = 25 leads to the wrong answer (x=3, y=-1).

2. **Graphs**:

    - **PAV** methods (search and RL) outperform ORM and PRM Q-value in both accuracy and efficiency.

    - PAV-RL achieves 7% higher accuracy than ORM-RL and reaches a given accuracy with fewer training iterations.

---

### Interpretation

- The flowchart demonstrates that **Gaussian elimination** is a reliable method for solving this system of equations, while building on a flawed step (here, subtracting Eqn. 2 from the incorrect implication 10x + 9y = 25) leads to a wrong answer.

- The graphs highlight the **superiority of PAV-based algorithms** in both search and reinforcement learning contexts. PAV methods achieve higher accuracy with fewer computational resources, suggesting they are more efficient and robust for this task.

- The 5× and 6× annotations indicate that PAV reduces the number of samples or training iterations needed to reach a given accuracy, while the +10% and +7% annotations indicate higher accuracy than the baselines, making it a promising approach.

- The incorrect answer (x=3, y=-1) in the flowchart underscores the importance of method selection in problem-solving, as minor errors in algebraic steps can propagate to wrong conclusions.
