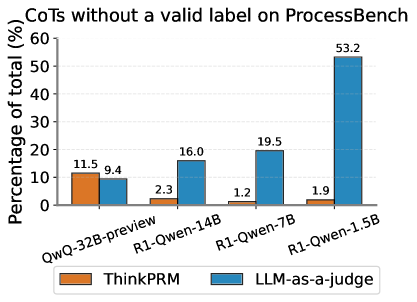

## Bar Chart: CoTs without a valid label on ProcessBench

### Overview

This grouped bar chart compares two evaluation methods, "ThinkPRM" and "LLM-as-a-judge", across four language models. For each model and method, it shows the percentage of chains of thought (CoTs) that end without a valid label on ProcessBench, a benchmark for identifying errors in step-by-step math solutions. The data therefore appears to track labeling failures: outputs from which no usable label could be obtained.

### Components/Axes

* **Chart Title:** "CoTs without a valid label on ProcessBench"

* **Y-Axis:**
  * **Label:** "Percentage of total (%)"
  * **Scale:** Linear, from 0 to 60, with major tick marks at intervals of 10 (0, 10, 20, 30, 40, 50, 60).

* **X-Axis:**
  * **Label:** None explicit. The axis categories are the names of four language models.
  * **Categories (from left to right):**
    1. QwQ-32B-preview
    2. R1-Qwen-14B
    3. R1-Qwen-7B
    4. R1-Qwen-1.5B

* **Legend:**
  * **Position:** Centered at the bottom of the chart.
  * **Items:**
    * **Orange Square:** "ThinkPRM"
    * **Blue Square:** "LLM-as-a-judge"

* **Data Series:** Two series of bars, one for each legend item, grouped by model category.
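The Python sketch below makes the grouped layout concrete by redrawing the chart as text, turned on its side. Each model forms one group with one bar per legend item. Bar length is scaled so that the y-axis maximum of 60% spans 30 characters. The values are the ones listed under Detailed Analysis. The names `render` and `bar` and the 30-character width are illustrative choices, not part of the source.

```python
# Redraws the grouped bar chart as text, turned on its side.
# Values are the percentages read off the chart (CoTs without a valid label).
MODELS = ["QwQ-32B-preview", "R1-Qwen-14B", "R1-Qwen-7B", "R1-Qwen-1.5B"]  # x-axis order
SERIES = {  # legend order: ThinkPRM (orange), LLM-as-a-judge (blue)
    "ThinkPRM": [11.5, 2.3, 1.2, 1.9],
    "LLM-as-a-judge": [9.4, 16.0, 19.5, 53.2],
}
Y_MAX = 60.0  # top of the chart's y-axis
WIDTH = 30    # characters that represent Y_MAX (illustrative choice)


def bar(value: float) -> str:
    """Text bar whose length is proportional to value on the 0-60 scale."""
    return "#" * round(value / Y_MAX * WIDTH)


def render() -> str:
    """One group per model, one labeled bar per series, mirroring the chart's layout."""
    lines = []
    for i, model in enumerate(MODELS):
        lines.append(model)
        for name, values in SERIES.items():
            lines.append(f"  {name:<15}{bar(values[i]):<{WIDTH}} {values[i]:.1f}%")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render())
```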

### Detailed Analysis

The chart presents the following specific data points for each model and evaluation method:

**1. QwQ-32B-preview:**

* **ThinkPRM (Orange Bar):** 11.5%

* **LLM-as-a-judge (Blue Bar):** 9.4%

* **Trend:** For this model, ThinkPRM leaves slightly more CoTs without a valid label than LLM-as-a-judge (11.5% vs. 9.4%).

**2. R1-Qwen-14B:**

* **ThinkPRM (Orange Bar):** 2.3%

* **LLM-as-a-judge (Blue Bar):** 16.0%

* **Trend:** The ordering reverses. ThinkPRM drops sharply to 2.3%, while LLM-as-a-judge rises to 16.0%. The LLM-as-a-judge value is now nearly 7 times the ThinkPRM value (16.0 / 2.3 ≈ 7.0).

**3. R1-Qwen-7B:**

* **ThinkPRM (Orange Bar):** 1.2%

* **LLM-as-a-judge (Blue Bar):** 19.5%

* **Trend:** ThinkPRM reaches its lowest value on the chart, while LLM-as-a-judge rises further. The gap between the two methods widens from 13.7 to 18.3 percentage points.

**4. R1-Qwen-1.5B:**

* **ThinkPRM (Orange Bar):** 1.9%

* **LLM-as-a-judge (Blue Bar):** 53.2%

* **Trend:** This model shows the largest disparity. ThinkPRM stays very low, rising only slightly from 1.2% to 1.9%. LLM-as-a-judge, by contrast, jumps to 53.2%, the highest value on the chart by a wide margin.
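The per-model comparisons above reduce to two numbers: the gap in percentage points and the ratio between the methods. The minimal Python sketch below recomputes both from the values read off the chart. For the four models, in x-axis order, the gaps come out to -2.1, 13.7, 18.3, and 51.3 percentage points. The `Bars` type and `compare` helper are illustrative names, not taken from the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bars:
    model: str
    think_prm: float  # % of CoTs without a valid label, ThinkPRM
    llm_judge: float  # % of CoTs without a valid label, LLM-as-a-judge


# Values as read from the chart, in x-axis order.
DATA = [
    Bars("QwQ-32B-preview", 11.5, 9.4),
    Bars("R1-Qwen-14B", 2.3, 16.0),
    Bars("R1-Qwen-7B", 1.2, 19.5),
    Bars("R1-Qwen-1.5B", 1.9, 53.2),
]


def compare(row: Bars) -> tuple[float, float]:
    """Gap in percentage points (judge minus ThinkPRM) and the judge/ThinkPRM ratio."""
    return row.llm_judge - row.think_prm, row.llm_judge / row.think_prm


if __name__ == "__main__":
    for row in DATA:
        gap, ratio = compare(row)
        print(f"{row.model:<16} gap {gap:+6.1f} pp   ratio {ratio:5.1f}x")
```

A negative gap (QwQ-32B-preview) means ThinkPRM has the higher rate for that model.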

### Key Observations

1. **Divergent Trends:** Across the x-axis, the two methods move in opposite directions. ThinkPRM generally decreases (11.5% → 2.3% → 1.2%), with a small uptick to 1.9% for the smallest model. LLM-as-a-judge increases at every step (9.4% → 16.0% → 19.5% → 53.2%), and by far the largest jump comes at R1-Qwen-1.5B.

2. **Model Size Correlation:** Under LLM-as-a-judge, the share of CoTs without a valid label rises as model size falls (sizes implied by the names: 32B, 14B, 7B, 1.5B), and it exceeds half (53.2%) for R1-Qwen-1.5B. QwQ-32B-preview belongs to a different model family from the three R1-Qwen models, so size is not the only variable that changes along the x-axis.

3. **ThinkPRM Stability:** ThinkPRM stays low for every model. It peaks at 11.5% (QwQ-32B-preview) and stays below 2.5% for all three R1-Qwen models. Its highest value belongs to the largest model, so it shows none of the inverse size dependence seen with LLM-as-a-judge.

4. **Extreme Outlier:** The value for R1-Qwen-1.5B under LLM-as-a-judge (53.2%) is a clear outlier. It is about 2.7 times the next-highest value (19.5% for R1-Qwen-7B; 53.2 / 19.5 ≈ 2.73).
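The observations above can be checked directly from the chart values. The Python sketch below does this with a Spearman rank correlation against the parameter counts implied by the model names, plus the ThinkPRM range and the outlier ratio. With only four points and two model families, these numbers are descriptive, not statistical evidence. The helper names are illustrative, and the closed-form Spearman formula used here is valid only because the data has no ties.

```python
# Parameter counts implied by the model names (billions), in x-axis order.
SIZES_B = {"QwQ-32B-preview": 32, "R1-Qwen-14B": 14, "R1-Qwen-7B": 7, "R1-Qwen-1.5B": 1.5}
THINK_PRM = {"QwQ-32B-preview": 11.5, "R1-Qwen-14B": 2.3, "R1-Qwen-7B": 1.2, "R1-Qwen-1.5B": 1.9}
LLM_JUDGE = {"QwQ-32B-preview": 9.4, "R1-Qwen-14B": 16.0, "R1-Qwen-7B": 19.5, "R1-Qwen-1.5B": 53.2}


def ranks(values):
    """Rank of each value (0 = smallest); assumes no ties, which holds here."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0] * len(values)
    for rank, i in enumerate(order):
        r[i] = rank
    return r


def spearman(xs, ys):
    """Spearman rank correlation, 1 - 6*sum(d^2) / (n(n^2 - 1)), valid without ties."""
    n = len(xs)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(xs), ranks(ys)))
    return 1 - 6 * d2 / (n * (n * n - 1))


models = list(SIZES_B)
sizes = [SIZES_B[m] for m in models]
judge = [LLM_JUDGE[m] for m in models]
prm = [THINK_PRM[m] for m in models]

if __name__ == "__main__":
    print("LLM-as-a-judge vs size:", spearman(sizes, judge))   # -1.0: strictly inverse
    print("ThinkPRM vs size:", spearman(sizes, prm))           # positive: no inverse trend
    print("ThinkPRM range:", min(prm), "to", max(prm))
    print("Outlier ratio:", LLM_JUDGE["R1-Qwen-1.5B"] / sorted(judge)[-2])
```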

### Interpretation

This chart likely illustrates a key finding about using language models as step-level verifiers. ProcessBench asks a model to locate the earliest erroneous step in a math solution, so the four models are most likely the verifiers here, not the authors of the solutions being graded. Under this reading, "LLM-as-a-judge" is the model prompted directly to judge each solution, and "ThinkPRM" is presumably a process reward model (PRM) variant of the same model. A "CoT without a valid label" is then a verification chain of thought that ends without a verdict that can be parsed. Possible causes include truncated output, reasoning that never concludes, or a reply that ignores the required answer format.

* **What the data suggests:** Under LLM-as-a-judge, the failure rate grows sharply as model size shrinks and reaches 53.2% for R1-Qwen-1.5B. Smaller models prompted to act as judges therefore seem much less reliable at finishing the verification and stating a clear verdict.

* **Contrasting Methods:** ThinkPRM keeps the failure rate low for every model. Whatever distinguishes it from plain prompting (plausibly training on verification CoTs that end in an explicit label) appears to largely remove this failure mode, especially for the three R1-Qwen models.

* **Why it matters:** A CoT without a valid label gives no usable verification signal for that solution. A high failure rate therefore limits a verifier's usefulness no matter how accurate its valid labels are. On this measure, relying on LLM-as-a-judge with small models is risky: R1-Qwen-1.5B yields no valid label for more than half of its CoTs. ThinkPRM's consistently low rate suggests it is the more dependable choice across model sizes.
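The chart does not define what counts as a "valid label". One way to put the metric into practice is to parse each CoT for a final verdict and count the ones that fail. The minimal Python sketch below does this under a hypothetical output convention: the verifier must end its CoT with `\boxed{k}`, where `k` is the index of the earliest incorrect step, or `-1` if every step is judged correct. This convention, along with the helper names `extract_label` and `percent_without_valid_label`, is an assumption for illustration and is not taken from the chart.

```python
import re

# Hypothetical convention (assumption): the CoT ends with \boxed{k}, where k is the
# index of the earliest incorrect step, or -1 if every step is judged correct.
BOXED = re.compile(r"\\boxed\{\s*(-?\d+)\s*\}")


def extract_label(cot: str, num_steps: int):
    """Return the step index from the last \\boxed{...} in cot, or None if invalid."""
    matches = BOXED.findall(cot)
    if not matches:
        return None          # no verdict at all (e.g. truncated or looping output)
    k = int(matches[-1])     # only the final verdict counts
    if k == -1 or 0 <= k < num_steps:
        return k
    return None              # verdict points outside the solution's steps


def percent_without_valid_label(cots, num_steps):
    """Percentage of CoTs whose verdict cannot be parsed into a valid label."""
    invalid = sum(extract_label(c, n) is None for c, n in zip(cots, num_steps))
    return 100.0 * invalid / len(cots)


examples = [
    "Step 0 is fine, step 1 miscomputes 3*4. \\boxed{1}",
    "All steps check out. \\boxed{-1}",
    "Let me re-check step 2... let me re-check step 2...",  # never concludes
    "The error is in step 9. \\boxed{9}",                   # only 4 steps exist
]

if __name__ == "__main__":
    print(percent_without_valid_label(examples, [4, 4, 4, 4]))  # 50.0
```

Taking only the last `\boxed{}` means a verifier that changes its mind mid-CoT is judged by its final answer. Any stricter or looser parsing rule would change the measured rate.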