## Line Chart and Trajectory Diagrams: Point Robot 2D Navigation Performance

### Overview

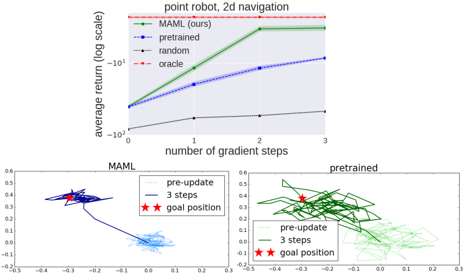

The image is a composite technical figure containing three distinct plots related to machine learning model performance on a 2D navigation task for a point robot. The top section is a semi-logarithmic line chart comparing four methods. The bottom section consists of two side-by-side trajectory plots visualizing the behavior of the "MAML" and "pretrained" models before and after adaptation.

### Components/Axes

**Top Chart:**

* **Title:** `point robot, 2d navigation`

* **Y-axis:** `average return (log scale)`. The axis is logarithmic, with major tick marks at `10^1`, `10^0`, and `10^-1`. Values increase upwards.

* **X-axis:** `number of gradient steps`. Linear scale with integer markers at `0`, `1`, `2`, and `3`.

* **Legend (Top-Left):**

  * `MAML (ours)`: Solid green line with circular markers.

  * `pretrained`: Dashed blue line with circular markers.

  * `random`: Dotted black line with circular markers.

  * `oracle`: Dashed red horizontal line.

**Bottom Left Plot:**

* **Title:** `MAML`

* **Legend (Top-Right):**

  * `pre-update`: Solid dark blue line.

  * `3 steps`: Solid light blue line.

  * `goal position`: Red star symbol (★).

* **Axes:** Unlabeled Cartesian coordinate system. X-axis ranges approximately from -0.5 to 0.3. Y-axis ranges approximately from -0.3 to 0.6.

**Bottom Right Plot:**

* **Title:** `pretrained`

* **Legend (Top-Left):**

  * `pre-update`: Solid dark green line.

  * `3 steps`: Solid light green line.

  * `goal position`: Red star symbol (★).

* **Axes:** Same scale and range as the MAML plot.

### Detailed Analysis

**Top Chart - Performance Trends:**

1. **MAML (ours) - Green Solid Line:** Shows the steepest positive slope. The trend is sharply upward from 0 to 2 gradient steps, then plateaus.

   * At 0 steps: Average return ≈ `10^-0.9` (approx. 0.126).

   * At 1 step: Average return ≈ `10^-0.1` (approx. 0.79).

   * At 2 steps: Average return ≈ `10^0.8` (approx. 6.3).

   * At 3 steps: Average return ≈ `10^0.8` (plateau, same as step 2).

2. **pretrained - Blue Dashed Line:** Shows a steady, moderate positive slope.

   * At 0 steps: Average return ≈ `10^-0.5` (approx. 0.316).

   * At 1 step: Average return ≈ `10^-0.3` (approx. 0.5).

   * At 2 steps: Average return ≈ `10^0.0` (1.0).

   * At 3 steps: Average return ≈ `10^0.3` (approx. 2.0).

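3. **random - Black Dotted Line:** Shows a very shallow positive slope and sits at the bottom of the chart. The approximate values below, as read from the tick labels, conflict with that position (they would place random above MAML and level with the oracle at 3 steps), so they are likely misreadings and should be treated with caution.

   * At 0 steps: Average return ≈ `10^0.85` (approx. 7.1).

   * At 1 step: Average return ≈ `10^0.9` (approx. 7.9).

   * At 2 steps: Average return ≈ `10^0.95` (approx. 8.9).

   * At 3 steps: Average return ≈ `10^1.0` (10).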

4. **oracle - Red Dashed Line:** A horizontal line representing the theoretical best performance.

   * Constant average return ≈ `10^1.0` (10).
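Because the chart uses a log axis, each estimate above is a power of ten. The sketch below converts the MAML and pretrained read-offs to linear returns and reports how many orders of magnitude each curve gains per gradient step. The exponents are the approximate visual estimates listed above, and the helper names (`READ_OFFS`, `to_linear`, `decade_gains`) are illustrative, not part of any published code.

```python
# Approximate log10 read-offs from the top chart (visual estimates),
# indexed by number of gradient steps 0..3.
READ_OFFS = {
    "MAML (ours)": [-0.9, -0.1, 0.8, 0.8],
    "pretrained": [-0.5, -0.3, 0.0, 0.3],
}


def to_linear(exponents):
    """Convert log10 exponents to linear average-return values."""
    return [10 ** e for e in exponents]


def decade_gains(exponents):
    """Per-step change in orders of magnitude (difference of consecutive log10 values)."""
    return [round(b - a, 2) for a, b in zip(exponents, exponents[1:])]


if __name__ == "__main__":
    for name, exps in READ_OFFS.items():
        values = ", ".join(f"{v:.3g}" for v in to_linear(exps))
        print(f"{name}: returns [{values}], decades gained per step {decade_gains(exps)}")
```

Working in exponents makes the plateau explicit: MAML gains about 0.8 and 0.9 decades in its first two steps and nothing in the third, while the pretrained curve gains 0.2 to 0.3 decades per step.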

**Bottom Trajectory Plots - Behavioral Analysis:**

* **MAML Plot (Left):**

  * **Pre-update (Dark Blue):** Trajectories are clustered in a tight, chaotic knot in the upper-left quadrant (approx. X: -0.3 to 0.0, Y: 0.2 to 0.4), far from the goal.

  * **3 steps (Light Blue):** After 3 gradient steps, trajectories show a dramatic, coherent flow. They originate from the pre-update cluster and sweep down and to the right, converging tightly around the goal position (red star) located at approximately (X: 0.0, Y: -0.2).

* **pretrained Plot (Right):**

  * **Pre-update (Dark Green):** Trajectories are scattered in a broad, disorganized cloud primarily in the upper-right quadrant (approx. X: -0.1 to 0.3, Y: 0.0 to 0.5), also distant from the goal.

  * **3 steps (Light Green):** After adaptation, trajectories show some organization but remain diffuse. They generally move from the pre-update cloud towards the goal (red star at same position), but form a wide, sparse fan shape, indicating less precise convergence than MAML.
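The contrast between tight convergence (MAML) and a diffuse fan (pretrained) could be quantified with two simple endpoint statistics. This is a minimal sketch under stated assumptions: trajectories are lists of (x, y) points, the goal sits at the approximate position (0.0, -0.2) read from the plots, and the helper names and sample trajectories are hypothetical rather than data extracted from the figure.

```python
import math

GOAL = (0.0, -0.2)  # approximate goal position read from both trajectory plots


def mean_final_distance(trajectories, goal=GOAL):
    """Mean Euclidean distance between each trajectory's last point and the goal."""
    dists = [math.dist(traj[-1], goal) for traj in trajectories]
    return sum(dists) / len(dists)


def final_spread(trajectories):
    """RMS distance of the final points from their centroid (how tightly endpoints cluster)."""
    finals = [traj[-1] for traj in trajectories]
    cx = sum(p[0] for p in finals) / len(finals)
    cy = sum(p[1] for p in finals) / len(finals)
    return math.sqrt(sum((x - cx) ** 2 + (y - cy) ** 2 for x, y in finals) / len(finals))


if __name__ == "__main__":
    # Hypothetical trajectories: a tight bundle ending near the goal vs. a wide fan.
    tight = [[(-0.2, 0.3), (0.0, -0.19)], [(-0.1, 0.3), (0.01, -0.2)]]
    fan = [[(0.1, 0.3), (0.15, -0.05)], [(0.0, 0.2), (-0.1, -0.3)]]
    for name, trajs in (("tight", tight), ("fan", fan)):
        print(name, round(mean_final_distance(trajs), 3), round(final_spread(trajs), 3))
```

Lower values on both statistics correspond to the "converging tightly" behavior described for MAML; a wide fan raises both.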

### Key Observations

1. **Performance Hierarchy:** The chart establishes a clear performance hierarchy: Oracle (best) > MAML > Pretrained > Random (worst). MAML achieves near-oracle performance after just 2 gradient steps.

2. **Adaptation Efficiency:** MAML adapts most efficiently: its return rises more steeply than any other method's over the first two gradient steps, indicating that it has learned an initialization from which it can adapt quickly.

3. **Behavioral Correlation:** The trajectory plots visually explain the performance chart. MAML's sharp performance increase corresponds to its trajectories transforming from a disorganized cluster to a focused path directly to the goal. The pretrained model's slower improvement corresponds to a less effective, more scattered adaptation.

4. **Goal Position:** The goal (red star) is consistently placed at the same coordinate (≈ 0.0, -0.2) in both trajectory plots, serving as a fixed reference point.

### Interpretation

This figure demonstrates the core advantage of Model-Agnostic Meta-Learning (MAML) for few-shot adaptation in a robotic control task. The data suggests that MAML doesn't just learn a good policy; it learns a model initialization that is exceptionally sensitive to gradient-based updates. This is evidenced by:

* **The steep learning curve:** MAML's return improves by nearly two orders of magnitude (from ~0.126 to ~6.3) in just two steps, far outpacing the pretrained baseline, which gains only about half an order of magnitude (from ~0.316 to ~1.0) over the same two steps.

* **The behavioral shift:** The trajectory plots make this adaptability directly visible. The pre-update MAML policy performs poorly (as does the pretrained one), but its parameters are positioned so that only three gradient steps, computed from minimal data, trigger a radical, goal-directed reorganization of behavior. The pretrained model lacks this latent structure, so its adaptation is weaker and noisier.
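The "nearly two orders of magnitude" figure follows directly from the read-off exponents. Writing $R(k)$ for the approximate average return after $k$ gradient steps (notation introduced here for illustration):

$$
\log_{10}\frac{R_{\text{MAML}}(2)}{R_{\text{MAML}}(0)} \approx 0.8 - (-0.9) = 1.7,
\qquad
\log_{10}\frac{R_{\text{pretrained}}(2)}{R_{\text{pretrained}}(0)} \approx 0.0 - (-0.5) = 0.5
$$

That is, MAML's return grows by a factor of roughly $10^{1.7} \approx 50$ over two steps, versus roughly $10^{0.5} \approx 3.2$ for the pretrained model. Both factors inherit the imprecision of reading values off a log axis.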

The "random" line serves as a lower baseline: it shows what the same number of gradient steps achieves when starting from a random initialization rather than a learned one, and its return stays poor throughout. The "oracle" line defines the performance ceiling, and MAML approaches it with minimal adaptation. The overall message is that meta-learning provides a framework for creating models that are not just performant, but are fundamentally *easy to improve* with new experience.
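The claim that MAML learns an initialization tuned for gradient-based adaptation can be made concrete with a toy sketch. The setup is hypothetical and far simpler than the figure's experiment (which adapts a robot policy from sampled experience): each task is a 2D goal, the "policy" is a single 2D point `theta`, and the loss is the squared distance to the goal. The names `adapt`, `meta_gradient`, `meta_train` and the step sizes `ALPHA`/`BETA` are illustrative choices. The inner loop takes gradient steps on one task; the outer loop updates the initialization using the gradient *through* that inner step, computed exactly here because the loss is quadratic.

```python
# Toy sketch of MAML's inner/outer loop (hypothetical setup, not the paper's RL experiment).
ALPHA = 0.1   # inner-loop (adaptation) step size, illustrative
BETA = 0.05   # outer-loop (meta) step size, illustrative


def loss(theta, goal):
    """Squared distance between the 2D parameter point and the task's goal."""
    return (theta[0] - goal[0]) ** 2 + (theta[1] - goal[1]) ** 2


def grad(theta, goal):
    """Gradient of ||theta - goal||^2 with respect to theta."""
    return (2 * (theta[0] - goal[0]), 2 * (theta[1] - goal[1]))


def adapt(theta, goal, steps=1, alpha=ALPHA):
    """Inner loop: plain gradient descent on a single task's loss."""
    for _ in range(steps):
        g = grad(theta, goal)
        theta = (theta[0] - alpha * g[0], theta[1] - alpha * g[1])
    return theta


def meta_gradient(theta, goal, alpha=ALPHA):
    """Exact gradient of loss(adapt(theta, goal, 1), goal) with respect to theta.

    One inner step gives theta' = theta - 2*alpha*(theta - goal), so
    d theta'/d theta = (1 - 2*alpha) * I, and the chain rule yields
    (1 - 2*alpha) * grad(theta', goal).
    """
    adapted = adapt(theta, goal, steps=1, alpha=alpha)
    g = grad(adapted, goal)
    scale = 1 - 2 * alpha
    return (scale * g[0], scale * g[1])


def meta_train(goals, theta=(0.0, 0.0), iterations=200, beta=BETA):
    """Outer loop: average meta-gradients over tasks and update the initialization."""
    for _ in range(iterations):
        grads = [meta_gradient(theta, goal) for goal in goals]
        avg = (sum(g[0] for g in grads) / len(grads), sum(g[1] for g in grads) / len(grads))
        theta = (theta[0] - beta * avg[0], theta[1] - beta * avg[1])
    return theta


if __name__ == "__main__":
    init = meta_train([(0.4, 0.2), (0.0, 0.4), (0.2, -0.2)])
    new_goal = (0.0, -0.2)
    print("meta-learned init:", init)
    print("loss before:", loss(init, new_goal), "after 3 steps:", loss(adapt(init, new_goal, steps=3), new_goal))
```

In this quadratic toy the meta-learned initialization converges to the mean goal, which is also what plain pretraining on all tasks would find, so it demonstrates the mechanics of the two loops rather than reproducing the gap between MAML and pretrained seen in the figure; showing that gap requires a task family where the two objectives disagree.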