## Diagram: Reinforcement Learning with Symbolic Grounding and Action Preconditions

### Overview

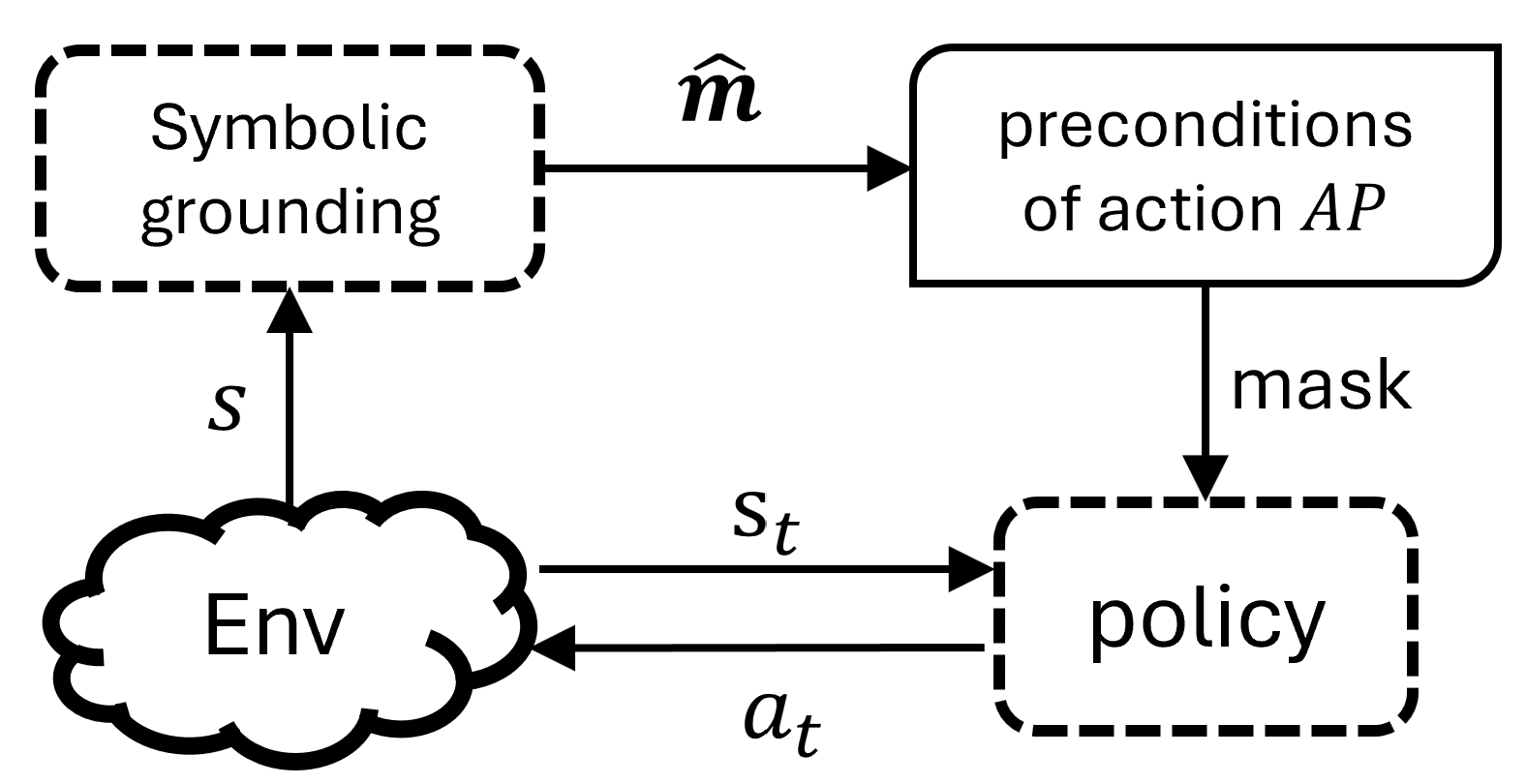

The image is a system architecture diagram of a reinforcement learning (RL) loop augmented with symbolic reasoning. It shows how information flows between an environment, a policy, a symbolic grounding module, and an action-preconditions module. All labels are in English.

### Components

The diagram consists of four main components connected by directed arrows representing data flow. The components are positioned as follows:

* **Top-Left:** A dashed-border rectangle labeled **"Symbolic grounding"**.

* **Top-Right:** A solid-border rectangle labeled **"preconditions of action AP"**.

* **Bottom-Left:** A cloud-shaped element labeled **"Env"** (representing the Environment).

* **Bottom-Right:** A dashed-border rectangle labeled **"policy"**.

The connecting arrows and their labels are:

1. An arrow from **"Env"** to **"Symbolic grounding"** labeled **"s"**.

2. An arrow from **"Symbolic grounding"** to **"preconditions of action AP"** labeled **"ŝ"** (s-hat).

3. An arrow from **"preconditions of action AP"** to **"policy"** labeled **"mask"**.

4. An arrow from **"Env"** to **"policy"** labeled **"s_t"**.

5. An arrow from **"policy"** to **"Env"** labeled **"a_t"**.
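The five arrows above can be written down as a small edge list, which makes it easy to check what each component consumes and produces. This is only a sketch of the diagram's topology; the helper names `inputs_of` and `outputs_of` are illustrative and do not appear in the figure.

```python
# Edges of the diagram as (source, target, label) triples,
# copied from the arrow list above.
EDGES = [
    ("Env", "Symbolic grounding", "s"),
    ("Symbolic grounding", "preconditions of action AP", "ŝ"),
    ("preconditions of action AP", "policy", "mask"),
    ("Env", "policy", "s_t"),
    ("policy", "Env", "a_t"),
]

def inputs_of(node):
    """Return the labels of all arrows that point into `node`."""
    return [label for _, dst, label in EDGES if dst == node]

def outputs_of(node):
    """Return the labels of all arrows that leave `node`."""
    return [label for src, _, label in EDGES if src == node]

print(inputs_of("policy"))  # ['mask', 's_t']
print(outputs_of("Env"))    # ['s', 's_t']
```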

### Detailed Analysis

The diagram defines a closed-loop interaction between an agent (comprising the policy and supporting modules) and its environment.

* **Component Flow & Relationships:**

    * The **Environment ("Env")** provides a state observation **"s"** to the **Symbolic grounding** module.

    * The **Symbolic grounding** module processes this state and outputs a symbolic or abstracted representation **"ŝ"** to the **preconditions of action AP** module.

    * The **preconditions of action AP** module uses this symbolic information to generate a **"mask"**. This mask is sent to the **policy** and likely serves to filter or constrain the set of available actions based on logical preconditions.

    * The **policy** receives two inputs: the direct state **"s_t"** from the environment and the **"mask"** from the preconditions module. It then selects and outputs an action **"a_t"** back to the environment.

    * This creates a cycle: Env -> (s) -> Symbolic Grounding -> (ŝ) -> Preconditions -> (mask) -> Policy -> (a_t) -> Env. Simultaneously, the policy receives a direct state signal (s_t).

* **Visual Semantics:**

    * The **dashed borders** around "Symbolic grounding" and "policy" may indicate they are learnable or neural network-based components.

    * The **solid border** around "preconditions of action AP" may indicate a more deterministic or rule-based module.

    * The **cloud shape** for "Env" is a standard representation for an external, often complex, system.
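One pass around the loop described above can be sketched in plain Python. Everything concrete here is an assumption made for illustration: the toy key-and-door state, the symbol names, the `PRECONDITIONS` table, and the fixed-score stand-in for the policy are hypothetical and are not taken from the diagram, which only names the modules and the signals between them.

```python
ACTIONS = ["move_left", "move_right", "pick_key", "open_door"]

# Hypothetical preconditions: symbols that must hold before each action.
PRECONDITIONS = {
    "move_left": set(),
    "move_right": set(),
    "pick_key": {"at_key"},
    "open_door": {"at_door", "has_key"},
}

def symbolic_grounding(s):
    """Map a raw state s to the set of symbols that are true (the diagram's ŝ)."""
    symbols = set()
    if s["agent_x"] == s["key_x"] and not s["has_key"]:
        symbols.add("at_key")
    if s["agent_x"] == s["door_x"]:
        symbols.add("at_door")
    if s["has_key"]:
        symbols.add("has_key")
    return symbols

def action_mask(s_hat):
    """One boolean per action: True if all of its preconditions hold in s_hat."""
    return [PRECONDITIONS[a] <= s_hat for a in ACTIONS]

def policy(s_t, mask):
    """Stand-in policy: fixed scores, highest-scoring valid action wins.

    A learned policy would compute the scores from s_t; here they are constants.
    """
    scores = [0.1, 0.2, 0.9, 1.0]
    valid = [i for i, ok in enumerate(mask) if ok]
    best = max(valid, key=lambda i: scores[i])
    return ACTIONS[best]

# One step: Env -> s -> grounding -> ŝ -> preconditions -> mask -> policy -> a_t
s = {"agent_x": 2, "key_x": 2, "door_x": 5, "has_key": False}
s_hat = symbolic_grounding(s)
mask = action_mask(s_hat)
a_t = policy(s, mask)
print(s_hat, mask, a_t)  # {'at_key'} [True, True, True, False] pick_key
```

Although `open_door` has the highest score, the mask removes it because the agent is neither at the door nor holding the key, so the policy falls back to `pick_key`.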

### Key Observations

1. **Hybrid Architecture:** The system combines a standard RL loop (Env -> s_t -> Policy -> a_t -> Env) with a parallel symbolic reasoning branch (Env -> s -> Symbolic Grounding -> ŝ -> Preconditions -> mask).

2. **Action Constraint Mechanism:** The "mask" signal is a critical intermediary. It suggests the policy's action selection is not free but is guided or restricted by logically derived preconditions from the symbolic representation of the state.

3. **Dual State Representation:** The environment sends state along two paths: **"s_t"** goes directly to the policy, and **"s"** goes to the symbolic grounding module. The diagram does not say whether "s" and "s_t" are the same observation or two different views of it.

4. **Symbolic Abstraction:** The use of **"ŝ"** (s-hat) strongly implies that the "Symbolic grounding" module performs an estimation, abstraction, or conversion of the environmental state into a symbolic form suitable for logical reasoning about action preconditions.
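The diagram does not specify how the mask is applied inside the policy. One common choice, shown here only as an assumed illustration, is to set the logits of invalid actions to negative infinity before the softmax so that those actions receive probability zero. The function name `masked_softmax` and the example logits are hypothetical.

```python
import math

def masked_softmax(logits, mask):
    """Softmax over logits, with masked-out actions forced to probability 0."""
    if not any(mask):
        raise ValueError("mask excludes every action")
    # Replace invalid logits with -inf so that exp(-inf) == 0.0.
    masked = [z if ok else -math.inf for z, ok in zip(logits, mask)]
    z_max = max(masked)  # subtract the max for numerical stability
    exps = [math.exp(z - z_max) for z in masked]
    total = sum(exps)
    return [e / total for e in exps]

# The third action has the largest logit but is masked out.
probs = masked_softmax([2.0, 1.0, 3.0], [True, True, False])
print([round(p, 3) for p in probs])  # [0.731, 0.269, 0.0]
```

The remaining probability mass is renormalized over the valid actions only, so sampling from `probs` can never return an action whose preconditions failed.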

### Interpretation

This diagram illustrates a **neuro-symbolic AI architecture** for decision-making. It addresses a key challenge in pure reinforcement learning: ensuring that an agent's actions are not only reward-driven but also adhere to logical rules or common-sense constraints.

* **What it demonstrates:** The system learns or uses a policy (likely a neural network) to select actions, but this policy is "masked" by a set of preconditions. These preconditions are derived from a symbolic understanding of the world, which is itself grounded in sensory data from the environment. This setup aims to combine the learning flexibility of neural networks with the reliability and interpretability of symbolic logic.

* **How elements relate:** The symbolic branch acts as a **constraint generator** for the policy. It translates the environment state (which may be continuous or noisy, though the diagram does not say) into symbols, evaluates the action preconditions against them, and thereby defines the set of valid actions for the policy at each step.

* **Notable implications:** An architecture like this plausibly targets **safety, sample efficiency, and generalization**, although the diagram itself makes no such claims. By masking invalid actions, the agent cannot select actions whose preconditions fail and spends no exploration on them. The symbolic layer could also allow human knowledge to be injected into the learning process as preconditions. The separation between the policy (which might be trained via RL) and the precondition module (which might be hand-written or learned separately) is a key design feature of the system.