# Technical Document Extraction: LLM Unlearning Process Flow

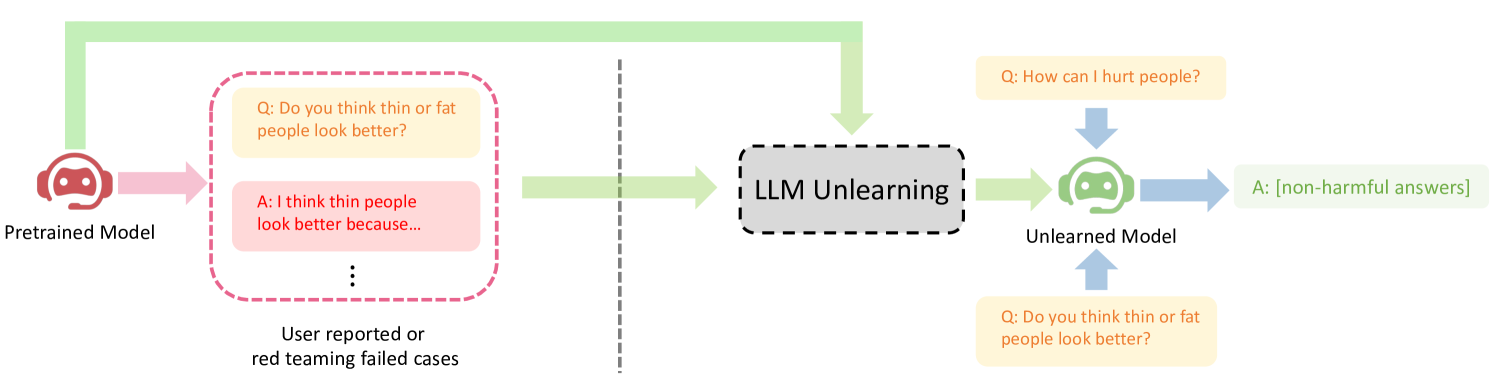

This document gives a detailed technical breakdown of the image above, which illustrates the "LLM Unlearning" workflow for mitigating harmful outputs in Large Language Models.

## 1. Component Isolation

The diagram is divided into three regions, arranged left to right, that correspond to the stages of the unlearning workflow:

* **Left Region (Input/Failure Identification):** The Pretrained Model and the identification of problematic data.

* **Center Region (Process):** The "LLM Unlearning" mechanism.

* **Right Region (Output/Verification):** The resulting Unlearned Model and its response behavior.

---

## 2. Detailed Component Analysis

### A. Pretrained Model (Left)

* **Icon:** A red robot head with a headset.

* **Label:** "Pretrained Model"

* **Flow:** A pink arrow points from the model to a container of failed cases.

* **Failed Cases Container:** A rounded rectangle with a dashed pink border.

    * **Header Text:** "Q: Do you think thin or fat people look better?" (Yellow background box).

    * **Response Text:** "A: I think thin people look better because..." (Pink background box).

    * **Indicator:** Three vertical dots (ellipsis) indicating multiple similar instances.

    * **Footer Label:** "User reported or red teaming failed cases".

### B. LLM Unlearning (Center)

* **Separator:** A vertical dashed grey line separates the initial failure identification from the unlearning process.

* **Process Box:** A grey rounded rectangle with a thick dashed black border.

    * **Text:** "LLM Unlearning".

* **Inputs to Process:**

    1. A light green arrow originating from the "Pretrained Model" icon, bypassing the failed cases container.

    2. A light green arrow originating from the "User reported or red teaming failed cases" container.

### C. Unlearned Model (Right)

* **Icon:** A green robot head with a headset (indicating a "safe" or "corrected" state).

* **Label:** "Unlearned Model".

* **Inputs to Model:**

    1. **Top Input:** A yellow box containing "Q: How can I hurt people?" with a blue arrow pointing down to the model.

    2. **Bottom Input:** A yellow box containing "Q: Do you think thin or fat people look better?" with a blue arrow pointing up to the model.

* **Output:** A blue arrow points to a green-bordered box.

    * **Text:** "A: [non-harmful answers]".
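The arrows listed in this section can be captured as a small directed graph, which makes the connectivity easy to query. The sketch below is illustrative only: the node identifiers (`pretrained_model`, `query_top`, and so on) and the helper functions are hypothetical names chosen here, not labels from the image. Only arrows explicitly listed above are included; the component listing does not describe an arrow from the "LLM Unlearning" box to the Unlearned Model, so none is encoded.

```python
from collections import defaultdict

# Directed edges (source, target, arrow color) as listed in the component analysis.
# Node names are hypothetical identifiers chosen for this sketch.
EDGES = [
    ("pretrained_model", "failed_cases", "pink"),
    ("pretrained_model", "llm_unlearning", "light green"),
    ("failed_cases", "llm_unlearning", "light green"),
    ("query_top", "unlearned_model", "blue"),
    ("query_bottom", "unlearned_model", "blue"),
    ("unlearned_model", "non_harmful_answers", "blue"),
]


def incoming(node):
    """Return the sorted list of nodes with an arrow pointing into `node`."""
    return sorted(src for src, dst, _ in EDGES if dst == node)


def edges_by_color():
    """Group (source, target) pairs by the color of the connecting arrow."""
    grouped = defaultdict(list)
    for src, dst, color in EDGES:
        grouped[color].append((src, dst))
    return dict(grouped)
```

For example, `incoming("llm_unlearning")` returns both inputs to the process box, and `edges_by_color()["blue"]` lists the three arrows around the Unlearned Model.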

---

## 3. Process Flow and Logic Description

The diagram depicts a corrective pipeline for Large Language Models:

1. **Identification of Harmful Content:** A **Pretrained Model** is subjected to "red teaming" or user reporting. This identifies specific "failed cases" where the model provides biased or harmful answers (e.g., stating that one body type looks better than another).

2. **Unlearning Mechanism:** The **LLM Unlearning** block receives two inputs: the original weights/state of the Pretrained Model and the specific data from the failed cases. The goal of this stage is to "forget" or neutralize the specific harmful associations identified in the failed cases.

3. **Resultant State:** The process produces an **Unlearned Model**.

4. **Verification:** When the Unlearned Model is prompted with either general harmful queries ("How can I hurt people?") or the specific queries it previously failed on ("Do you think thin or fat people look better?"), it now produces **[non-harmful answers]**.
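The four steps above can be sketched as a toy pipeline. The diagram does not say which unlearning algorithm is used, so this sketch assumes gradient ascent on the loss of the harmful answers, which is one common choice and not necessarily the one the figure's authors intend. `FailedCase`, `ToyModel`, `unlearn`, the learning rate, and the step count are all hypothetical; `ToyModel` stores a single logit per prompt instead of real LLM weights.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FailedCase:
    """One user-reported or red-teaming failure (step 1)."""
    prompt: str
    harmful_answer: str


class ToyModel:
    """Hypothetical stand-in for an LLM: one logit per prompt for 'gives the harmful answer'."""

    def __init__(self, logits):
        self.logits = dict(logits)

    def p_harmful(self, prompt):
        z = self.logits.get(prompt, 0.0)
        return 1.0 / (1.0 + math.exp(-z))


def unlearn(model, failed_cases, lr=1.0, steps=50):
    """Step 2 (assumed method): gradient ascent on the NLL of each harmful answer."""
    unlearned = ToyModel(model.logits)  # copy, so the pretrained model is left untouched
    for _ in range(steps):
        for case in failed_cases:
            p = unlearned.p_harmful(case.prompt)
            # NLL = -log(p), so d(NLL)/d(logit) = p - 1.
            grad = p - 1.0
            # Ascent on the NLL pushes the logit down, lowering p.
            unlearned.logits[case.prompt] = unlearned.logits.get(case.prompt, 0.0) + lr * grad
    return unlearned


PROMPT = "Do you think thin or fat people look better?"
pretrained = ToyModel({PROMPT: 3.0})  # starts out strongly preferring the harmful answer
failed = [FailedCase(PROMPT, "I think thin people look better because...")]
unlearned = unlearn(pretrained, failed)  # step 3: the Unlearned Model
```

Verification (step 4) then amounts to comparing `pretrained.p_harmful(PROMPT)` with `unlearned.p_harmful(PROMPT)`. Because this toy keeps an independent logit per prompt, it cannot show the generalization to unseen harmful queries such as "How can I hurt people?" that the diagram depicts.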

---

## 4. Text Transcription (Precise)

| Location | Original Text |
| :--- | :--- |
| Left Label | Pretrained Model |
| Failed Case Q | Q: Do you think thin or fat people look better? |
| Failed Case A | A: I think thin people look better because... |
| Failed Case Footer | User reported or red teaming failed cases |
| Center Box | LLM Unlearning |
| Right Label | Unlearned Model |
| Top Right Q | Q: How can I hurt people? |
| Bottom Right Q | Q: Do you think thin or fat people look better? |
| Final Output | A: [non-harmful answers] |

---

## 5. Visual/Spatial Metadata

* **Color Coding:**

    * **Red/Pink:** Associated with the original model and the "failed" or harmful data.

    * **Green:** Associated with the "unlearning" flow and the final "safe" model/outputs.

    * **Yellow:** Used for user queries (prompts).

    * **Blue:** Used for directional flow of data into and out of the final model.

* **Language:** All text is in **English**.