## Diagram: RL and Reward Strategies

### Overview

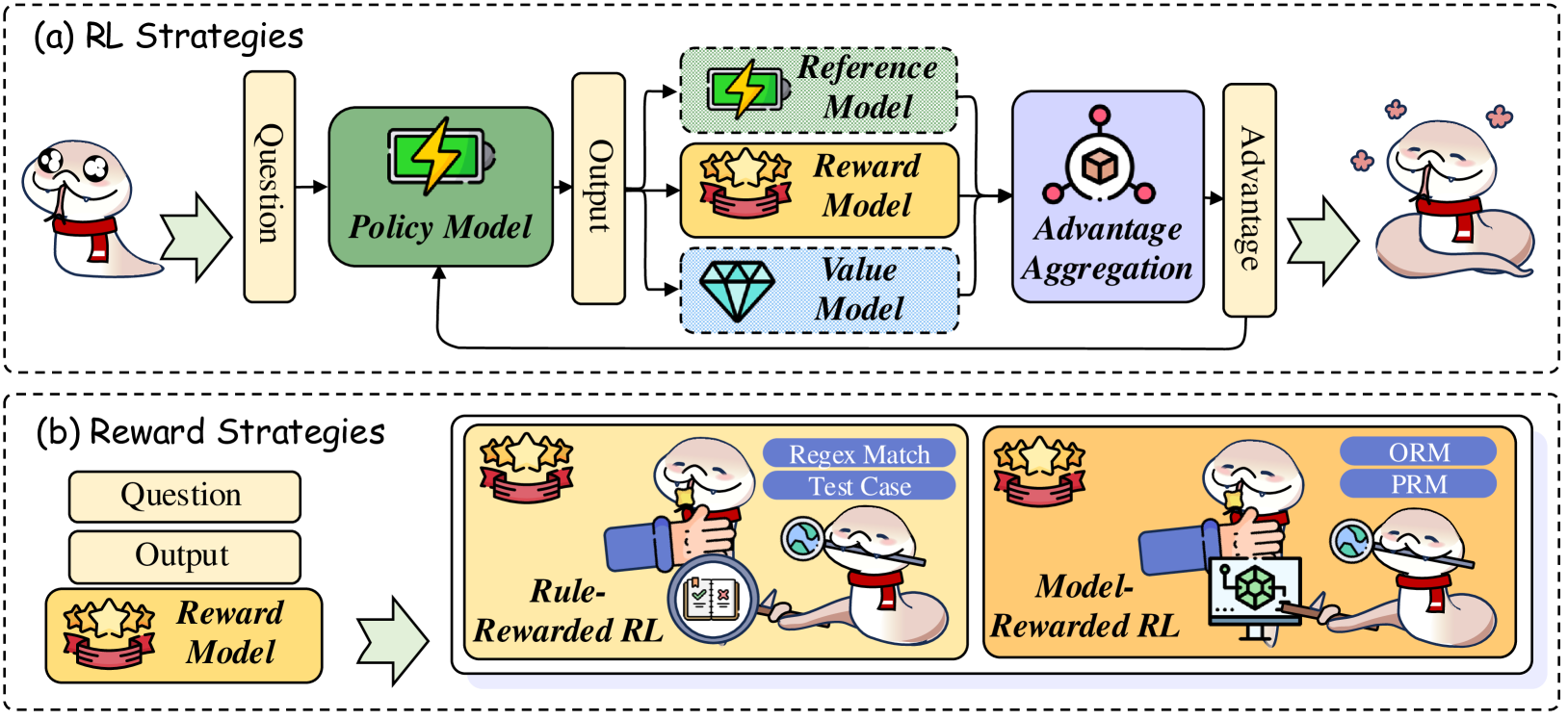

The image presents two diagrams on Reinforcement Learning (RL). The first, labeled "(a) RL Strategies," outlines a general RL process involving a policy model, a reference model, a reward model, a value model, and an advantage aggregation step. The second, labeled "(b) Reward Strategies," depicts two ways of producing the reward signal: Rule-Rewarded RL and Model-Rewarded RL. Both diagrams use cartoon-style visuals to represent the concepts.

### Components/Axes

#### (a) RL Strategies

* **Title:** (a) RL Strategies

* **Input:** "Question" (represented by a yellow rectangle)

* **Policy Model:** A green rectangle labeled "Policy Model" with a battery icon inside.

* **Output:** "Output" (represented by a yellow rectangle)

* **Models:**

    * "Reference Model" (green rectangle with a lightning bolt icon)

    * "Reward Model" (yellow rectangle with three stars and a ribbon icon)

    * "Value Model" (light blue rectangle with a diamond icon)

* **Advantage Aggregation:** A light purple rectangle labeled "Advantage Aggregation" with an icon of a cube inside a circle.

* **Advantage:** "Advantage" (represented by a yellow rectangle)

* **Agent:** A cartoon snake character appears at the beginning and end of the diagram.

#### (b) Reward Strategies

* **Title:** (b) Reward Strategies

* **Input:** "Question" (represented by a yellow rectangle)

* **Output:** "Output" (represented by a yellow rectangle)

* **Reward Model:** "Reward Model" (yellow rectangle with three stars and a ribbon icon)

* **Rule-Rewarded RL:** A yellow rounded rectangle containing:

    * "Regex Match Test Case" (blue rounded rectangle)

    * A cartoon snake character holding a magnifying glass over a document with checkmarks and crosses.

    * The label "Rule-Rewarded RL"

* **Model-Rewarded RL:** A yellow rounded rectangle containing:

    * "ORM" (blue rounded rectangle)

    * "PRM" (blue rounded rectangle)

    * A cartoon snake character interacting with a computer screen displaying a 3D model.

    * The label "Model-Rewarded RL"

### Detailed Analysis

#### (a) RL Strategies

1. The process begins with a "Question" being fed into the "Policy Model."

2. The "Policy Model" generates an "Output."

3. The "Output" is then used by three different models: "Reference Model," "Reward Model," and "Value Model."

4. The outputs of these models are aggregated in "Advantage Aggregation."

5. The result of the aggregation is the "Advantage."
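
The five steps above can be sketched in a few lines. The diagram does not say how the three model outputs are combined, so the formula here is an assumption borrowed from common PPO-style setups: the reward is penalized by a log-probability gap between the policy and the reference model, and the value estimate is subtracted as a baseline. The names `Signals`, `aggregate_advantage`, and `kl_coef` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Per-output quantities produced by the models in diagram (a)."""
    reward: float          # scalar score from the Reward Model
    value: float           # baseline estimate from the Value Model
    logprob_policy: float  # log-probability of the output under the Policy Model
    logprob_ref: float     # log-probability of the output under the Reference Model


def aggregate_advantage(s: Signals, kl_coef: float = 0.1) -> float:
    """Combine the three model outputs into one advantage value.

    Assumed formula (not taken from the diagram):
        advantage = reward - kl_coef * (logprob_policy - logprob_ref) - value
    """
    kl_estimate = s.logprob_policy - s.logprob_ref   # single-sample KL estimate
    penalized_reward = s.reward - kl_coef * kl_estimate
    return penalized_reward - s.value


if __name__ == "__main__":
    example = Signals(reward=1.0, value=0.25, logprob_policy=-1.0, logprob_ref=-1.5)
    print(aggregate_advantage(example, kl_coef=0.5))
```

A positive advantage means the output scored better than the value model expected, after accounting for how far the policy has drifted from the reference.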

#### (b) Reward Strategies

1. The process begins with a "Question" and generates an "Output."

2. The "Output" is evaluated by the "Reward Model."

3. Two reward strategies are presented:

   * **Rule-Rewarded RL:** Rewards are determined by fixed, rule-based checks, such as whether the output matches a predefined regular expression or passes test cases (the "Regex Match Test Case" box).

   * **Model-Rewarded RL:** Rewards are determined using "ORM" and "PRM." In an RL reward context these most likely stand for Outcome Reward Model, which scores the final output, and Process Reward Model, which scores intermediate steps.
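
The two reward strategies can be contrasted with a minimal sketch. It reads ORM and PRM as outcome and process reward models, which is an assumption because the diagram does not expand the acronyms. All function names are hypothetical, and the `score` callable stands in for a learned reward model that the diagram does not specify.

```python
import re
from typing import Callable, List, Sequence, Tuple


def regex_reward(output: str, pattern: str) -> float:
    """Rule-based reward: 1.0 if the output matches the pattern, else 0.0."""
    return 1.0 if re.search(pattern, output) else 0.0


def unit_test_reward(candidate: Callable[[int], int],
                     cases: Sequence[Tuple[int, int]]) -> float:
    """Rule-based reward: fraction of (input, expected) cases the candidate passes."""
    passed = sum(1 for arg, expected in cases if candidate(arg) == expected)
    return passed / len(cases)


def orm_reward(score: Callable[[str], float], steps: Sequence[str]) -> float:
    """Outcome-style reward: score the complete output once."""
    return score("\n".join(steps))


def prm_reward(score: Callable[[str], float], steps: Sequence[str]) -> List[float]:
    """Process-style reward: one score per step, each given the steps so far."""
    return [score("\n".join(steps[: i + 1])) for i in range(len(steps))]


if __name__ == "__main__":
    print(regex_reward("The answer is 42", r"answer is \d+"))
    print(unit_test_reward(lambda x: x * 2, [(1, 2), (2, 4), (3, 7)]))

    # Toy stand-in for a learned scorer: counts lines of text.
    toy_score = lambda text: float(len(text.splitlines()))
    steps = ["step one", "step two", "final answer"]
    print(orm_reward(toy_score, steps))
    print(prm_reward(toy_score, steps))
```

The rule-based functions need no trained model and return the same reward for the same output every time, whereas the model-based functions are only as reliable as the scorer passed to them.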

### Key Observations

* Both diagrams illustrate a sequential flow of information.

* The "Reward Model" is a common element in both diagrams, highlighting its importance in RL.

* The diagrams use visual metaphors (e.g., battery, stars, diamond) to represent different components of the RL process.

* The cartoon snake character adds a playful element to the diagrams.

### Interpretation

The diagrams provide a high-level overview of RL strategies and of ways to produce the reward signal. The first diagram outlines an RL pipeline in which the reference, reward, and value models all feed an advantage computation, while the second illustrates two distinct approaches to defining the reward. Rule-Rewarded RL assigns rewards deterministically through fixed checks, whereas Model-Rewarded RL relies on a learned reward model to score outputs. Together, the diagrams highlight the role of the reward signal in guiding learning. The cartoon snake character may be intended to make the concepts more accessible and engaging.