TECHNICAL ASSET FINGERPRINT

4aaf550b42d3e370eace19d7



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

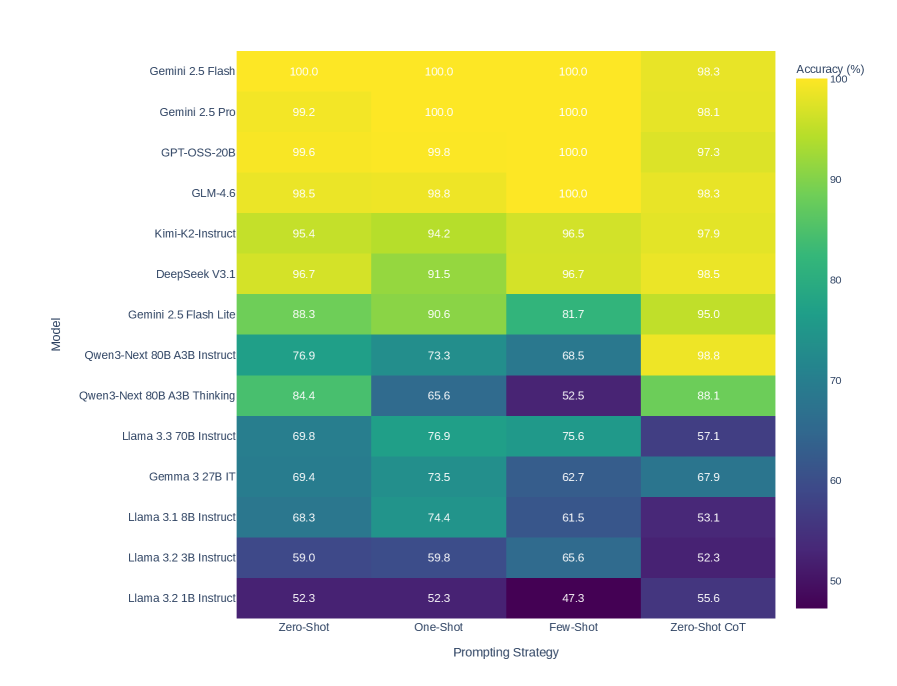

## Heatmap: Model Accuracy vs. Prompting Strategy

### Overview

The image is a heatmap showing the accuracy (%) of various language models across different prompting strategies. The models are listed on the vertical axis, and the prompting strategies are listed on the horizontal axis. The color of each cell represents the accuracy, with yellow indicating higher accuracy and dark purple indicating lower accuracy. A colorbar on the right provides a visual key for the accuracy values.

### Components/Axes

* **Vertical Axis (Model):** Lists the language models being evaluated.
    * Gemini 2.5 Flash
    * Gemini 2.5 Pro
    * GPT-OSS-20B
    * GLM-4.6
    * Kimi-K2-Instruct
    * DeepSeek V3.1
    * Gemini 2.5 Flash Lite
    * Qwen3-Next 80B A3B Instruct
    * Qwen3-Next 80B A3B Thinking
    * Llama 3.3 70B Instruct
    * Gemma 3 27B IT
    * Llama 3.1 8B Instruct
    * Llama 3.2 3B Instruct
    * Llama 3.2 1B Instruct

* **Horizontal Axis (Prompting Strategy):** Lists the prompting strategies used.
    * Zero-Shot
    * One-Shot
    * Few-Shot
    * Zero-Shot CoT (Chain-of-Thought)

* **Colorbar (Accuracy %):** A vertical colorbar on the right side of the heatmap indicates the accuracy percentage, ranging from 50% (dark purple) to 100% (yellow). The colorbar has tick marks at 50, 60, 70, 80, 90, and 100.
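As a small illustration of how a cell value relates to the colorbar range described above, the sketch below maps an accuracy percentage to a position between 0 (dark purple end) and 1 (yellow end). The function name `colorbar_position` is hypothetical, and clipping values outside the 50 to 100 range is an assumption about how the plot was drawn, not something the image confirms.

```python
def colorbar_position(accuracy, vmin=50.0, vmax=100.0):
    """Map an accuracy (%) to a 0..1 position on the colorbar.

    Values outside [vmin, vmax] are clipped (an assumption, see lead-in).
    """
    fraction = (accuracy - vmin) / (vmax - vmin)
    return min(1.0, max(0.0, fraction))


# A few sample values, including one below the colorbar's lower bound.
for value in (47.3, 50.0, 75.0, 100.0):
    print(f"{value:5.1f}% -> {colorbar_position(value):.2f}")
```

Under this assumption, any cell below 50% would be drawn in the same colour as a cell at exactly 50%.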

### Detailed Analysis

The heatmap displays the accuracy of different models under different prompting strategies. The values are as follows:

| Model | Zero-Shot | One-Shot | Few-Shot | Zero-Shot CoT |
| --------------------------- | --------- | -------- | -------- | ------------- |
| Gemini 2.5 Flash | 100.0 | 100.0 | 100.0 | 98.3 |
| Gemini 2.5 Pro | 99.2 | 100.0 | 100.0 | 98.1 |
| GPT-OSS-20B | 99.6 | 99.8 | 100.0 | 97.3 |
| GLM-4.6 | 98.5 | 98.8 | 100.0 | 98.3 |
| Kimi-K2-Instruct | 95.4 | 94.2 | 96.5 | 97.9 |
| DeepSeek V3.1 | 96.7 | 91.5 | 96.7 | 98.5 |
| Gemini 2.5 Flash Lite | 88.3 | 90.6 | 81.7 | 95.0 |
| Qwen3-Next 80B A3B Instruct | 76.9 | 73.3 | 68.5 | 98.8 |
| Qwen3-Next 80B A3B Thinking | 84.4 | 65.6 | 52.5 | 88.1 |
| Llama 3.3 70B Instruct | 69.8 | 76.9 | 75.6 | 57.1 |
| Gemma 3 27B IT | 69.4 | 73.5 | 62.7 | 67.9 |
| Llama 3.1 8B Instruct | 68.3 | 74.4 | 61.5 | 53.1 |
| Llama 3.2 3B Instruct | 59.0 | 59.8 | 65.6 | 52.3 |
| Llama 3.2 1B Instruct | 52.3 | 52.3 | 47.3 | 55.6 |

### Key Observations

* The top models (Gemini 2.5 Flash, Gemini 2.5 Pro, GPT-OSS-20B, GLM-4.6) generally achieve high accuracy (close to 100%) across all prompting strategies.

* The "Zero-Shot CoT" prompting strategy appears to be particularly effective for Qwen3-Next 80B A3B Instruct, resulting in an accuracy of 98.8%.

* The Llama models (3.3 70B Instruct, 3.1 8B Instruct, 3.2 3B Instruct, 3.2 1B Instruct) generally have lower accuracy compared to the other models, especially with the "Zero-Shot CoT" prompting strategy.

* The performance of Qwen3-Next 80B A3B Thinking varies significantly across prompting strategies, with the lowest accuracy (52.5%) observed for "Few-Shot" prompting.
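The variation noted in these observations can be quantified as a per-model spread (highest minus lowest accuracy across the four strategies). The following is a minimal sketch using the values from the table; the names `ACCURACY`, `spread`, and `most_variable` are illustrative and not part of the source.

```python
# Accuracy (%) per model, in column order:
# Zero-Shot, One-Shot, Few-Shot, Zero-Shot CoT (values copied from the table).
ACCURACY = {
    "Gemini 2.5 Flash": (100.0, 100.0, 100.0, 98.3),
    "Gemini 2.5 Pro": (99.2, 100.0, 100.0, 98.1),
    "GPT-OSS-20B": (99.6, 99.8, 100.0, 97.3),
    "GLM-4.6": (98.5, 98.8, 100.0, 98.3),
    "Kimi-K2-Instruct": (95.4, 94.2, 96.5, 97.9),
    "DeepSeek V3.1": (96.7, 91.5, 96.7, 98.5),
    "Gemini 2.5 Flash Lite": (88.3, 90.6, 81.7, 95.0),
    "Qwen3-Next 80B A3B Instruct": (76.9, 73.3, 68.5, 98.8),
    "Qwen3-Next 80B A3B Thinking": (84.4, 65.6, 52.5, 88.1),
    "Llama 3.3 70B Instruct": (69.8, 76.9, 75.6, 57.1),
    "Gemma 3 27B IT": (69.4, 73.5, 62.7, 67.9),
    "Llama 3.1 8B Instruct": (68.3, 74.4, 61.5, 53.1),
    "Llama 3.2 3B Instruct": (59.0, 59.8, 65.6, 52.3),
    "Llama 3.2 1B Instruct": (52.3, 52.3, 47.3, 55.6),
}


def spread(scores):
    """Return max minus min accuracy, rounded to one decimal place."""
    return round(max(scores) - min(scores), 1)


spreads = {model: spread(scores) for model, scores in ACCURACY.items()}
most_variable = max(spreads, key=spreads.get)

# Print models from most to least sensitive to the prompting strategy.
for model, value in sorted(spreads.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model:30s} {value:5.1f}")
```

A small spread indicates a model whose accuracy barely depends on the prompting strategy; a large one flags models for which the strategy choice matters most.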

### Interpretation

The heatmap provides a comparative analysis of language model performance under different prompting strategies. The data suggests that:

* Some models (e.g., Gemini 2.5 Flash, Gemini 2.5 Pro) are highly robust and achieve near-perfect accuracy regardless of the prompting strategy used.

* The choice of prompting strategy can significantly impact the performance of certain models. For example, the "Zero-Shot CoT" strategy seems to benefit Qwen3-Next 80B A3B Instruct but not the Llama models.

* Smaller models (e.g., Llama 3.2 1B Instruct) generally exhibit lower accuracy compared to larger models, indicating a correlation between model size and performance.

* The "Few-Shot" prompting strategy appears to be the least effective for Qwen3-Next 80B A3B Thinking, suggesting that this model may struggle with learning from a limited number of examples.

The heatmap highlights the importance of selecting an appropriate prompting strategy for a given language model to maximize its accuracy and effectiveness. It also demonstrates the performance differences between various models, providing valuable insights for model selection and optimization.
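Selecting a strategy per model, as suggested above, amounts to taking the best-scoring column in each row. The sketch below does this for a subset of rows copied from the table; `best_strategy` is a hypothetical helper, and ties are resolved in favour of the leftmost column, which is an arbitrary choice.

```python
STRATEGIES = ("Zero-Shot", "One-Shot", "Few-Shot", "Zero-Shot CoT")

# Subset of rows from the table (accuracy in %), in STRATEGIES order.
ACCURACY = {
    "Gemini 2.5 Flash": (100.0, 100.0, 100.0, 98.3),
    "DeepSeek V3.1": (96.7, 91.5, 96.7, 98.5),
    "Qwen3-Next 80B A3B Instruct": (76.9, 73.3, 68.5, 98.8),
    "Llama 3.3 70B Instruct": (69.8, 76.9, 75.6, 57.1),
    "Llama 3.2 1B Instruct": (52.3, 52.3, 47.3, 55.6),
}


def best_strategy(scores):
    """Return (strategy name, accuracy) for the highest-scoring column.

    On ties, the leftmost strategy wins.
    """
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return STRATEGIES[best_index], scores[best_index]


for model, scores in ACCURACY.items():
    name, value = best_strategy(scores)
    print(f"{model:30s} {name:14s} {value:5.1f}")
```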


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


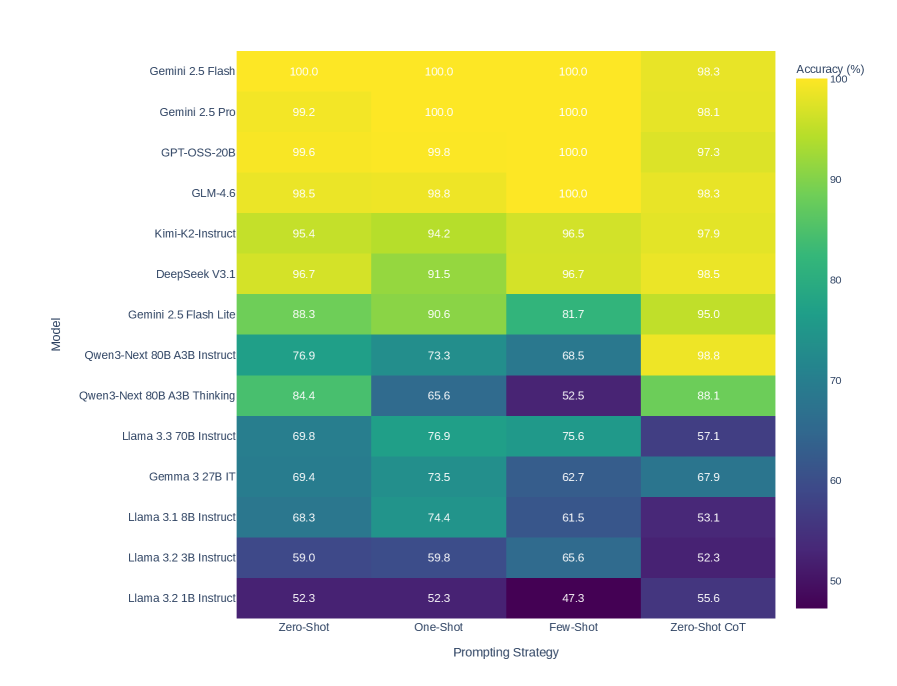

## Heatmap: Model Accuracy vs. Prompting Strategy

### Overview

This image presents a heatmap comparing the accuracy of various language models across four different prompting strategies. The heatmap uses a color gradient to represent accuracy, ranging from purple (lower accuracy) to yellow (higher accuracy). The Y-axis lists the models, and the X-axis represents the prompting strategies.

### Components/Axes

* **Y-axis (Vertical):** "Model" - Lists the following language models:
    * Gemini 2.5 Flash
    * Gemini 2.5 Pro
    * GPT-OSS-20B
    * GLM-4.6
    * Kimi-K2-Instruct
    * DeepSeek V3.1
    * Gemini 2.5 Flash Lite
    * Qwen3-Next 80B A3B Instruct
    * Qwen3-Next 80B A3B Thinking
    * Llama 3.3 70B Instruct
    * Gemma 3 27B IT
    * Llama 3.1 8B Instruct
    * Llama 3.2 3B Instruct
    * Llama 3.2 1B Instruct

* **X-axis (Horizontal):** "Prompting Strategy" - Lists the following strategies:
    * Zero-Shot
    * One-Shot
    * Few-Shot
    * Zero-Shot CoT

* **Color Scale (Right):** "Accuracy (%)" - A gradient from purple to yellow, indicating accuracy levels from approximately 50% to 100%.

* **Data Cells:** Each cell represents the accuracy of a specific model using a specific prompting strategy. The accuracy is displayed as a numerical value within each cell.

### Detailed Analysis

The heatmap displays accuracy percentages for each model and prompting strategy combination. Here's a breakdown of the data, reading left to right across the prompting strategies:

* **Zero-Shot:**

* Gemini 2.5 Flash: 100.0%

* Gemini 2.5 Pro: 99.2%

* GPT-OSS-20B: 99.6%

* GLM-4.6: 98.5%

* Kimi-K2-Instruct: 95.4%

* DeepSeek V3.1: 96.7%

* Gemini 2.5 Flash Lite: 88.3%

* Qwen3-Next 80B A3B Instruct: 76.9%

* Qwen3-Next 80B A3B Thinking: 84.4%

* Llama 3.3 70B Instruct: 69.8%

* Gemma 3 27B IT: 69.4%

* Llama 3.1 8B Instruct: 68.3%

* Llama 3.2 3B Instruct: 59.0%

* Llama 3.2 1B Instruct: 52.3%

* **One-Shot:**

* Gemini 2.5 Flash: 100.0%

* Gemini 2.5 Pro: 100.0%

* GPT-OSS-20B: 99.8%

* GLM-4.6: 98.8%

* Kimi-K2-Instruct: 94.2%

* DeepSeek V3.1: 91.5%

* Gemini 2.5 Flash Lite: 90.6%

* Qwen3-Next 80B A3B Instruct: 73.3%

* Qwen3-Next 80B A3B Thinking: 65.6%

* Llama 3.3 70B Instruct: 76.9%

* Gemma 3 27B IT: 73.5%

* Llama 3.1 8B Instruct: 74.4%

* Llama 3.2 3B Instruct: 59.8%

* Llama 3.2 1B Instruct: 52.3%

* **Few-Shot:**

* Gemini 2.5 Flash: 100.0%

* Gemini 2.5 Pro: 100.0%

* GPT-OSS-20B: 100.0%

* GLM-4.6: 100.0%

* Kimi-K2-Instruct: 96.5%

* DeepSeek V3.1: 96.7%

* Gemini 2.5 Flash Lite: 81.7%

* Qwen3-Next 80B A3B Instruct: 68.5%

* Qwen3-Next 80B A3B Thinking: 52.5%

* Llama 3.3 70B Instruct: 75.6%

* Gemma 3 27B IT: 62.7%

* Llama 3.1 8B Instruct: 61.5%

* Llama 3.2 3B Instruct: 65.6%

* Llama 3.2 1B Instruct: 47.3%

* **Zero-Shot CoT:**

* Gemini 2.5 Flash: 98.3%

* Gemini 2.5 Pro: 98.1%

* GPT-OSS-20B: 97.3%

* GLM-4.6: 98.3%

* Kimi-K2-Instruct: 97.9%

* DeepSeek V3.1: 98.5%

* Gemini 2.5 Flash Lite: 95.0%

* Qwen3-Next 80B A3B Instruct: 98.8%

* Qwen3-Next 80B A3B Thinking: 88.1%

* Llama 3.3 70B Instruct: 57.1%

* Gemma 3 27B IT: 67.9%

* Llama 3.1 8B Instruct: 53.1%

* Llama 3.2 3B Instruct: 52.3%

* Llama 3.2 1B Instruct: 55.6%

### Key Observations

* **High Performers:** Gemini 2.5 Flash, Gemini 2.5 Pro, and GPT-OSS-20B are at or near the top (often 100%) under Zero-Shot, One-Shot, and Few-Shot prompting, and stay above 97% under Zero-Shot CoT.

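* **Prompting Strategy Impact:** Accuracy does not rise uniformly as examples are added (Zero-Shot, One-Shot, Few-Shot). The top four models improve or stay flat, while several others, such as Gemini 2.5 Flash Lite and both Qwen3-Next variants, score lower with Few-Shot than with Zero-Shot. Zero-Shot CoT is within about three points of Few-Shot for the top models but diverges sharply for others.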

* **Model Size Matters:** Smaller models (Llama 3.2 1B Instruct, Llama 3.2 3B Instruct) consistently exhibit lower accuracy scores compared to larger models.

* **Qwen3-Next 80B A3B Thinking:** This model shows a significant drop in accuracy when moving from One-Shot to Few-Shot prompting.

* **Llama 3.3 70B Instruct:** This model shows a significant drop in accuracy when moving from One-Shot to Zero-Shot CoT prompting.
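Whether accuracy actually follows the Zero-Shot, One-Shot, Few-Shot ordering can be checked per model against the cell values listed above. The sketch below is one way to do that; the names `EXAMPLE_SERIES` and `is_non_decreasing` are illustrative, and treating equal values as "not decreasing" is a choice made here.

```python
# Accuracy (%) for Zero-Shot, One-Shot, Few-Shot, copied from the breakdown above.
EXAMPLE_SERIES = {
    "Gemini 2.5 Flash": (100.0, 100.0, 100.0),
    "Gemini 2.5 Pro": (99.2, 100.0, 100.0),
    "GPT-OSS-20B": (99.6, 99.8, 100.0),
    "GLM-4.6": (98.5, 98.8, 100.0),
    "Kimi-K2-Instruct": (95.4, 94.2, 96.5),
    "DeepSeek V3.1": (96.7, 91.5, 96.7),
    "Gemini 2.5 Flash Lite": (88.3, 90.6, 81.7),
    "Qwen3-Next 80B A3B Instruct": (76.9, 73.3, 68.5),
    "Qwen3-Next 80B A3B Thinking": (84.4, 65.6, 52.5),
    "Llama 3.3 70B Instruct": (69.8, 76.9, 75.6),
    "Gemma 3 27B IT": (69.4, 73.5, 62.7),
    "Llama 3.1 8B Instruct": (68.3, 74.4, 61.5),
    "Llama 3.2 3B Instruct": (59.0, 59.8, 65.6),
    "Llama 3.2 1B Instruct": (52.3, 52.3, 47.3),
}


def is_non_decreasing(series):
    """True if each value is at least as large as the one before it."""
    return all(a <= b for a, b in zip(series, series[1:]))


monotonic = [m for m, s in EXAMPLE_SERIES.items() if is_non_decreasing(s)]

print(f"{len(monotonic)} of {len(EXAMPLE_SERIES)} models never lose accuracy "
      "as examples are added:")
for model in monotonic:
    print(" -", model)
```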

### Interpretation

The heatmap shows that both model choice and prompting strategy affect accuracy. Larger models generally outperform smaller models, but more elaborate prompting does not reliably yield higher accuracy: several models score lower with Few-Shot than with Zero-Shot. The consistently high performance of Gemini 2.5 Flash, Gemini 2.5 Pro, and GPT-OSS-20B suggests these models are particularly robust to the choice of prompting technique.

The variations in performance across prompting strategies highlight the importance of prompt engineering. While simply providing more examples (Few-Shot) often improves accuracy, the effectiveness of Chain-of-Thought (CoT) prompting appears to be model-dependent. The drop in accuracy for Qwen3-Next 80B A3B Thinking with Few-Shot suggests that this model may not benefit as much from additional examples, or that the examples need to be carefully curated.

The heatmap provides valuable insights for selecting the appropriate model and prompting strategy for a given task. It also underscores the ongoing need for research into more effective prompting techniques and the development of larger, more capable language models. The data suggests that the models are not uniformly sensitive to prompting strategies, and a one-size-fits-all approach is unlikely to be optimal.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap: AI Model Accuracy Across Prompting Strategies

### Overview

This image is a heatmap chart comparing the performance of 14 different large language models (LLMs) across four distinct prompting strategies. The performance metric is accuracy, presented as a percentage. The chart uses a color gradient from dark purple (low accuracy, ~50%) to bright yellow (high accuracy, 100%) to visually represent the data.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Model". It lists 14 AI models from top to bottom.
    * Gemini 2.5 Flash
    * Gemini 2.5 Pro
    * GPT-OSS-20B
    * GLM-4.6
    * Kimi-K2-Instruct
    * DeepSeek V3.1
    * Gemini 2.5 Flash Lite
    * Qwen3-Next 80B A3B Instruct
    * Qwen3-Next 80B A3B Thinking
    * Llama 3.3 70B Instruct
    * Gemma 3 27B IT
    * Llama 3.1 8B Instruct
    * Llama 3.2 3B Instruct
    * Llama 3.2 1B Instruct

* **X-Axis (Horizontal):** Labeled "Prompting Strategy". It lists four strategies from left to right.
    * Zero-Shot
    * One-Shot
    * Few-Shot
    * Zero-Shot CoT (Chain-of-Thought)

* **Legend/Color Scale:** Located on the right side, labeled "Accuracy (%)". It is a vertical color bar with numerical markers at 50, 60, 70, 80, 90, and 100. The gradient runs from dark purple at 50% to bright yellow at 100%.

### Detailed Analysis

The following table reconstructs the accuracy data from the heatmap. Values are read directly from the cells.

| Model | Zero-Shot | One-Shot | Few-Shot | Zero-Shot CoT |
| :--- | :--- | :--- | :--- | :--- |
| **Gemini 2.5 Flash** | 100.0 | 100.0 | 100.0 | 98.3 |
| **Gemini 2.5 Pro** | 99.2 | 100.0 | 100.0 | 98.1 |
| **GPT-OSS-20B** | 99.6 | 99.8 | 100.0 | 97.3 |
| **GLM-4.6** | 98.5 | 98.8 | 100.0 | 98.3 |
| **Kimi-K2-Instruct** | 95.4 | 94.2 | 96.5 | 97.9 |
| **DeepSeek V3.1** | 96.7 | 91.5 | 96.7 | 98.5 |
| **Gemini 2.5 Flash Lite** | 88.3 | 90.6 | 81.7 | 95.0 |
| **Qwen3-Next 80B A3B Instruct** | 76.9 | 73.3 | 68.5 | 98.8 |
| **Qwen3-Next 80B A3B Thinking** | 84.4 | 65.6 | 52.5 | 88.1 |
| **Llama 3.3 70B Instruct** | 69.8 | 76.9 | 75.6 | 57.1 |
| **Gemma 3 27B IT** | 69.4 | 73.5 | 62.7 | 67.9 |
| **Llama 3.1 8B Instruct** | 68.3 | 74.4 | 61.5 | 53.1 |
| **Llama 3.2 3B Instruct** | 59.0 | 59.8 | 65.6 | 52.3 |
| **Llama 3.2 1B Instruct** | 52.3 | 52.3 | 47.3 | 55.6 |

**Trend Verification by Model (Visual Description):**

* **Top Tier (Gemini 2.5 Flash/Pro, GPT-OSS-20B, GLM-4.6):** These models show consistently high accuracy (bright yellow) across all strategies, with values near or at 100%. Their performance is stable, with only minor dips in the "Zero-Shot CoT" column for some.

* **Mid Tier (Kimi-K2, DeepSeek V3.1, Gemini 2.5 Flash Lite):** These models display a mix of yellow and green cells. DeepSeek V3.1 shows a notable dip in "One-Shot" (91.5) but recovers in other strategies. Gemini 2.5 Flash Lite has its lowest score in "Few-Shot" (81.7).

* **Variable Performance (Qwen3-Next models):** The "Instruct" variant shows a clear downward trend from left to right (76.9 -> 73.3 -> 68.5) before a dramatic spike to 98.8 in "Zero-Shot CoT". The "Thinking" variant shows a steep decline to a low of 52.5 in "Few-Shot" before recovering to 88.1.

* **Lower Tier (Llama & Gemma models):** These models are represented by green, blue, and purple cells, indicating lower accuracy. Most show a pattern of moderate performance in "One-Shot" or "Few-Shot" but a significant drop in "Zero-Shot CoT", with the exception of the smallest Llama 3.2 1B model.

### Key Observations

1. **Dominant Models:** Gemini 2.5 Flash and Gemini 2.5 Pro achieve perfect or near-perfect scores (100.0, 99.2) in multiple strategies.

2. **Strategy Impact:** The "Zero-Shot CoT" strategy has a polarizing effect. It boosts the accuracy of the Qwen3-Next 80B A3B Instruct model to 98.8 (from 68.5 in Few-Shot) but severely degrades the performance of several Llama models (e.g., Llama 3.3 70B drops to 57.1).

3. **Performance Floor:** The lowest accuracy recorded is 47.3% for Llama 3.2 1B Instruct using the "Few-Shot" strategy.

4. **Model Size Correlation:** There is a general, though not perfect, correlation between model size/name and performance. The larger, more advanced models (Gemini 2.5, GPT-OSS, GLM) occupy the top rows with yellow cells, while smaller models (Llama 3.2 1B/3B) are at the bottom with cooler colors.
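The performance floor and the Zero-Shot CoT swings cited in these observations can be recomputed from the reconstructed table. The sketch below is illustrative only; `ACCURACY`, `lowest`, and `cot_vs_few_shot` are names introduced here, not taken from the source.

```python
STRATEGIES = ("Zero-Shot", "One-Shot", "Few-Shot", "Zero-Shot CoT")

# Accuracy (%) per model in STRATEGIES order, copied from the table.
ACCURACY = {
    "Gemini 2.5 Flash": (100.0, 100.0, 100.0, 98.3),
    "Gemini 2.5 Pro": (99.2, 100.0, 100.0, 98.1),
    "GPT-OSS-20B": (99.6, 99.8, 100.0, 97.3),
    "GLM-4.6": (98.5, 98.8, 100.0, 98.3),
    "Kimi-K2-Instruct": (95.4, 94.2, 96.5, 97.9),
    "DeepSeek V3.1": (96.7, 91.5, 96.7, 98.5),
    "Gemini 2.5 Flash Lite": (88.3, 90.6, 81.7, 95.0),
    "Qwen3-Next 80B A3B Instruct": (76.9, 73.3, 68.5, 98.8),
    "Qwen3-Next 80B A3B Thinking": (84.4, 65.6, 52.5, 88.1),
    "Llama 3.3 70B Instruct": (69.8, 76.9, 75.6, 57.1),
    "Gemma 3 27B IT": (69.4, 73.5, 62.7, 67.9),
    "Llama 3.1 8B Instruct": (68.3, 74.4, 61.5, 53.1),
    "Llama 3.2 3B Instruct": (59.0, 59.8, 65.6, 52.3),
    "Llama 3.2 1B Instruct": (52.3, 52.3, 47.3, 55.6),
}

# Flatten to (score, model, strategy) tuples so min() finds the lowest cell.
cells = [
    (score, model, strategy)
    for model, scores in ACCURACY.items()
    for strategy, score in zip(STRATEGIES, scores)
]
lowest = min(cells)


def cot_vs_few_shot(model):
    """Zero-Shot CoT accuracy minus Few-Shot accuracy, in percentage points."""
    scores = ACCURACY[model]
    return round(scores[3] - scores[2], 1)


print("Lowest cell:", lowest)
for name in ("Qwen3-Next 80B A3B Instruct", "Llama 3.3 70B Instruct"):
    print(f"{name}: {cot_vs_few_shot(name):+.1f} points (CoT vs. Few-Shot)")
```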

### Interpretation

This heatmap provides a comparative snapshot of LLM capabilities on a specific (but unnamed) task or benchmark. The data suggests several key insights:

* **Model Superiority:** The Gemini 2.5 series (Flash and Pro) demonstrates exceptional and robust performance, maintaining near-perfect accuracy regardless of the prompting method. This indicates strong underlying capability and less sensitivity to prompt engineering for this task.

* **Prompting Strategy is Not One-Size-Fits-All:** The effectiveness of a prompting strategy is highly model-dependent. While "Zero-Shot CoT" is a powerful technique for unlocking the reasoning potential of the Qwen3-Next Instruct model, it appears to confuse or hinder the Llama 3.x Instruct models on this task. This highlights the critical need for model-specific evaluation.

* **The "Thinking" Variant's Volatility:** The Qwen3-Next 80B A3B Thinking model shows the most dramatic variance, with accuracy swinging from 84.4% to 52.5% and back to 88.1%. This suggests its "thinking" process may be highly specialized or unstable across different prompt contexts.

* **Task Complexity Inference:** The fact that even the smallest model (Llama 3.2 1B) achieves over 50% accuracy in most strategies suggests the underlying task may not be extremely difficult, or it may be a task where memorization plays a role. However, the perfect scores of the top models indicate a clear performance ceiling exists.

In summary, the chart is a tool for benchmarking, showing that raw model size and architecture interact in complex ways with prompting techniques to determine final accuracy. It argues for empirical testing when selecting a model and prompt strategy for a specific application.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: AI Model Performance Across Prompting Strategies

### Overview

The image is a heatmap comparing the accuracy of various large language models (LLMs) across four prompting strategies: Zero-Shot, One-Shot, Few-Shot, and Zero-Shot Chain-of-Thought (CoT). Accuracy is represented using a color gradient from purple (low accuracy) to yellow (high accuracy), with numerical values embedded in each cell.

---

### Components/Axes

- **X-axis (Horizontal)**: Prompting Strategies

Labels: `Zero-Shot`, `One-Shot`, `Few-Shot`, `Zero-Shot CoT`

- **Y-axis (Vertical)**: AI Models

Labels (top to bottom):

`Gemini 2.5 Flash`, `Gemini 2.5 Pro`, `GPT-OSS-20B`, `GLM-4.6`, `Kimi-K2-Instruct`, `DeepSeek V3.1`, `Gemini 2.5 Flash Lite`, `Qwen3-Next 80B A3B Instruct`, `Qwen3-Next 80B A3B Thinking`, `Llama 3.3 70B Instruct`, `Gemma 3 27B IT`, `Llama 3.1 8B Instruct`, `Llama 3.2 3B Instruct`, `Llama 3.2 1B Instruct`

- **Legend**:

Color bar labeled `Accuracy (%)` ranging from **50% (purple)** to **100% (yellow)**.

---

### Detailed Analysis

#### Model Performance by Strategy

1. **Gemini 2.5 Flash**

- Zero-Shot, One-Shot, Few-Shot: **100%**

- Zero-Shot CoT: **98.3%**

2. **Gemini 2.5 Pro**

- Zero-Shot: **99.2%**

- One-Shot, Few-Shot: **100%**

- Zero-Shot CoT: **98.1%**

3. **GPT-OSS-20B**

- Zero-Shot: **99.6%**

- One-Shot: **99.8%**

- Few-Shot: **100%**

- Zero-Shot CoT: **97.3%**

4. **GLM-4.6**

- Zero-Shot: **98.5%**

- One-Shot: **98.8%**

- Few-Shot: **100%**

- Zero-Shot CoT: **98.3%**

5. **Kimi-K2-Instruct**

- Zero-Shot: **95.4%**

- One-Shot: **94.2%**

- Few-Shot: **96.5%**

- Zero-Shot CoT: **97.9%**

6. **DeepSeek V3.1**

- Zero-Shot: **96.7%**

- One-Shot: **91.5%**

- Few-Shot: **96.7%**

- Zero-Shot CoT: **98.5%**

7. **Gemini 2.5 Flash Lite**

- Zero-Shot: **88.3%**

- One-Shot: **90.6%**

- Few-Shot: **81.7%**

- Zero-Shot CoT: **95.0%**

8. **Qwen3-Next 80B A3B Instruct**

- Zero-Shot: **76.9%**

- One-Shot: **73.3%**

- Few-Shot: **68.5%**

- Zero-Shot CoT: **98.8%**

9. **Qwen3-Next 80B A3B Thinking**

- Zero-Shot: **84.4%**

- One-Shot: **65.6%**

- Few-Shot: **52.5%**

- Zero-Shot CoT: **88.1%**

10. **Llama 3.3 70B Instruct**

- Zero-Shot: **69.8%**

- One-Shot: **76.9%**

- Few-Shot: **75.6%**

- Zero-Shot CoT: **57.1%**

11. **Gemma 3 27B IT**

- Zero-Shot: **69.4%**

- One-Shot: **73.5%**

- Few-Shot: **62.7%**

- Zero-Shot CoT: **67.9%**

12. **Llama 3.1 8B Instruct**

- Zero-Shot: **68.3%**

- One-Shot: **74.4%**

- Few-Shot: **61.5%**

- Zero-Shot CoT: **53.1%**

13. **Llama 3.2 3B Instruct**

- Zero-Shot: **59.0%**

- One-Shot: **59.8%**

- Few-Shot: **65.6%**

- Zero-Shot CoT: **52.3%**

14. **Llama 3.2 1B Instruct**

- Zero-Shot: **52.3%**

- One-Shot: **52.3%**

- Few-Shot: **47.3%**

- Zero-Shot CoT: **55.6%**

---

### Key Observations

1. **Top Performers**:

- Gemini 2.5 Flash and Pro dominate all strategies with near-perfect accuracy (98–100%).

- GPT-OSS-20B and GLM-4.6 also show consistently high performance (97–100%).

2. **Llama Models Struggle**:

- Llama 3.2 1B Instruct has the lowest accuracy under Zero-Shot, One-Shot, and Few-Shot (47.3–52.3%); under Zero-Shot CoT, Llama 3.2 3B Instruct is lowest (52.3%).

- Llama 3.3 70B Instruct performs poorly in Zero-Shot CoT (**57.1%**).

3. **Zero-Shot CoT Variability**:

- Some models (e.g., Qwen3-Next 80B A3B Instruct) show dramatic improvements in Zero-Shot CoT (**98.8%** vs. 76.9% in Zero-Shot).

- Others (e.g., Llama 3.2 1B Instruct) see minimal gains (**55.6%** vs. 52.3%).

4. **Color Consistency**:

- Yellow cells (high accuracy) align with Gemini, GPT-OSS, and GLM models.

- Purple cells (low accuracy) correspond to Llama 3.2 1B Instruct and Few-Shot strategies for smaller models.
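The Zero-Shot CoT variability described above can be expressed as a gain over plain Zero-Shot, in percentage points. The sketch below uses a subset of the listed values; `cot_gain` and the choice of Zero-Shot as the baseline are assumptions made for illustration.

```python
# (Zero-Shot, Zero-Shot CoT) accuracy in %, copied from the per-model lists above.
ZERO_SHOT_VS_COT = {
    "Gemini 2.5 Flash": (100.0, 98.3),
    "Gemini 2.5 Flash Lite": (88.3, 95.0),
    "Qwen3-Next 80B A3B Instruct": (76.9, 98.8),
    "Qwen3-Next 80B A3B Thinking": (84.4, 88.1),
    "Llama 3.3 70B Instruct": (69.8, 57.1),
    "Llama 3.1 8B Instruct": (68.3, 53.1),
    "Llama 3.2 1B Instruct": (52.3, 55.6),
}


def cot_gain(pair):
    """Zero-Shot CoT accuracy minus Zero-Shot accuracy, in percentage points."""
    zero_shot, zero_shot_cot = pair
    return round(zero_shot_cot - zero_shot, 1)


gains = {model: cot_gain(pair) for model, pair in ZERO_SHOT_VS_COT.items()}

# Largest gains first; negative values mean CoT hurt accuracy.
for model, gain in sorted(gains.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model:30s} {gain:+6.1f}")
```

Positive values indicate models that benefit from the added reasoning prompt; negative values indicate models whose accuracy drops under it.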

---

### Interpretation

The heatmap reveals that **larger, more advanced models** (e.g., Gemini, GPT-OSS, GLM) maintain high accuracy across all prompting strategies, suggesting robustness in handling diverse inputs. In contrast, **smaller Llama models** (Llama 3.2 1B and 3B) underperform significantly, particularly in Few-Shot and Zero-Shot CoT, highlighting limitations in contextual reasoning without extensive examples.

The **Zero-Shot CoT strategy** acts as a double-edged sword: it improves performance for some models (e.g., Qwen3-Next 80B A3B Instruct) but fails to mitigate weaknesses in others (e.g., Llama 3.2 1B Instruct). This suggests that CoT prompting may require model-specific optimizations.

The data underscores the importance of model architecture and size in determining prompting strategy effectiveness, with larger models generally offering more consistent and reliable performance.
