EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

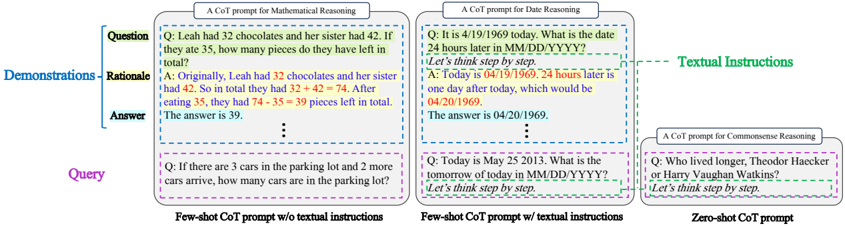

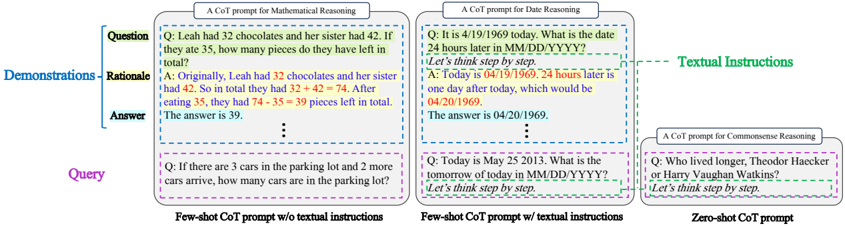

## Chain-of-Thought (CoT) Prompt Examples

### Overview

The image presents examples of Chain-of-Thought (CoT) prompts used for different reasoning tasks: Mathematical Reasoning, Date Reasoning, and Commonsense Reasoning. It illustrates few-shot and zero-shot approaches, with and without textual instructions.

### Components/Axes

* **Titles:**

* "A CoT prompt for Mathematical Reasoning" (top-left)

* "A CoT prompt for Date Reasoning" (top-center)

* "A CoT prompt for Commonsense Reasoning" (top-right)

* **Sections:**

* "Question" (blue vertical line pointing to the question)

* "Demonstrations" (blue vertical line pointing to the rationale and answer)

* "Rationale" (blue vertical line pointing to the rationale)

* "Answer" (blue vertical line pointing to the answer)

* "Query" (purple vertical line pointing to the query)

* **Textual Instructions:** Green dashed line pointing to the "Let's think step by step." prompt.

* **Prompt Types:**

* "Few-shot CoT prompt w/o textual instructions" (bottom-left)

* "Few-shot CoT prompt w/ textual instructions" (bottom-center)

* "Zero-shot CoT prompt" (bottom-right)

### Detailed Analysis

**1. A CoT prompt for Mathematical Reasoning (top-left)**

* **Question:** "Q: Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

* **Rationale:** "A: Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total."

* **Answer:** "The answer is 39."

* **Query:** "Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"
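The arithmetic in the demonstration's rationale can be checked mechanically. The following is a minimal sketch, assuming steps are written as simple `a + b = c` or `a - b = c` expressions over non-negative integers; the names `STEP` and `check_steps` are hypothetical helpers introduced here for illustration and are not part of the figure.

```python
import re
from typing import List, Tuple

# Rationale text as transcribed from the demonstration above.
RATIONALE = (
    "Originally, Leah had 32 chocolates and her sister had 42. "
    "So in total they had 32 + 42 = 74. "
    "After eating 35, they had 74 - 35 = 39 pieces left in total."
)

# Assumption: each step looks like "a + b = c" or "a - b = c".
STEP = re.compile(r"(\d+)\s*([+-])\s*(\d+)\s*=\s*(\d+)")


def check_steps(rationale: str) -> List[Tuple[str, bool]]:
    """Return each arithmetic step found in the text and whether it holds."""
    results = []
    for a, op, b, c in STEP.findall(rationale):
        value = int(a) + int(b) if op == "+" else int(a) - int(b)
        results.append((f"{a} {op} {b} = {c}", value == int(c)))
    return results


print(check_steps(RATIONALE))
# [('32 + 42 = 74', True), ('74 - 35 = 39', True)]
```

Both steps hold, so the stated answer of 39 follows from the rationale.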

**2. A CoT prompt for Date Reasoning (top-center)**

* **Question:** "Q: It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."

* **Rationale:** "A: Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969."

* **Answer:** "The answer is 04/20/1969."

* **Query:** "Q: Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."
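The date rationale amounts to adding one day to the given date. A minimal sketch using Python's standard `datetime` module reproduces the demonstration's answer; `date_after` is a hypothetical helper name, and treating "tomorrow" in the query as exactly 24 hours later is an assumption made here.

```python
from datetime import datetime, timedelta


def date_after(date_text: str, fmt: str, hours: int = 24) -> str:
    """Parse date_text with fmt, add the given hours, format as MM/DD/YYYY."""
    moment = datetime.strptime(date_text, fmt) + timedelta(hours=hours)
    return moment.strftime("%m/%d/%Y")


# Demonstration: matches the answer given in the prompt.
print(date_after("4/19/1969", "%m/%d/%Y"))    # 04/20/1969

# Query: computed here, not shown in the figure.
print(date_after("May 25 2013", "%B %d %Y"))  # 05/26/2013
```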

**3. A CoT prompt for Commonsense Reasoning (top-right)**

* **Question:** "Q: Who lived longer, Theodor Haecker or Harry Vaughan Watkins?"

* **Textual Instructions:** "Let's think step by step."

### Key Observations

* The examples demonstrate how CoT prompts can be structured to guide the model's reasoning process.

* Few-shot prompts provide examples of question-answer pairs, while zero-shot prompts rely on the model's pre-existing knowledge.

* Textual instructions, such as "Let's think step by step," can further enhance the model's reasoning abilities.

* The mathematical reasoning example includes a detailed step-by-step calculation.

* The date reasoning example involves calculating a date based on a given date and time interval.

* The commonsense reasoning example requires the model to apply its knowledge of historical figures.

### Interpretation

The image illustrates the application of Chain-of-Thought (CoT) prompting to various reasoning tasks. CoT prompting is a technique used to improve the performance of large language models (LLMs) by encouraging them to generate intermediate reasoning steps before providing a final answer. The examples show how CoT prompts can be tailored to different types of reasoning, such as mathematical, date-based, and commonsense reasoning. The inclusion of demonstrations (few-shot learning) and textual instructions further guides the model's reasoning process, leading to more accurate and coherent responses. The image highlights the flexibility and effectiveness of CoT prompting as a method for enhancing the reasoning capabilities of LLMs.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Chain-of-Thought (CoT) Prompt Examples

### Overview

The image presents a comparative diagram illustrating different Chain-of-Thought (CoT) prompting strategies for Large Language Models (LLMs). It showcases three examples: Few-shot CoT without textual instructions, Few-shot CoT with textual instructions, and Zero-shot CoT. Each example is structured into "Demonstrations" and "Query" sections, further divided into "Question", "Rationale", and "Answer". The diagram also includes a "Textual Instructions" label pointing to the textual guidance provided in the second example.

### Components/Axes

The diagram is organized into three main columns, each representing a different CoT prompting approach. Each column is further divided into two sections: "Demonstrations" (top) and "Query" (bottom). Within each section, there are three sub-sections: "Question", "Rationale", and "Answer". The diagram also includes a label "Textual Instructions" in green, pointing to the textual guidance in the "Few-shot CoT prompt w/ textual instructions" column.

### Detailed Analysis

**1. Few-shot CoT Prompt w/o textual instructions (Left Column - Blue Border)**

* **Question:** "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

* **Rationale:** "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total."

* **Answer:** "The answer is 39."

* **Query:** "If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"

**2. Few-shot CoT Prompt w/ textual instructions (Center Column - Light Blue Border)**

* **Question:** "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."

* **Rationale:** "Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969."

* **Answer:** "The answer is 04/20/1969."

* **Query:** "Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."

**3. Zero-shot CoT Prompt (Right Column - Pink Border)**

* **Question:** "Who lived longer, Theodor Haecker or Harry Vaughan Watkins?"

* **Textual Instructions:** "Let's think step by step."

**General Observations:**

* The "Demonstrations" section provides examples of how the LLM should reason through a problem.

* The "Query" section presents a new problem for the LLM to solve.

* The "Rationale" section shows the step-by-step reasoning process.

* The "Answer" section provides the final solution.

* The "Textual Instructions" ("Let's think step by step.") are included in the Few-shot CoT with textual instructions and Zero-shot CoT examples.
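Because each demonstration ends with the fixed phrase "The answer is ...", a completion that imitates the format can be parsed for its final answer. The sketch below assumes the model reproduces that phrase at the end of its output; `ANSWER` and `extract_answer` are hypothetical names used only for illustration.

```python
import re
from typing import Optional

# Assumption: the completion ends with "The answer is <value>."
ANSWER = re.compile(r"The answer is\s+(.+?)\.?\s*$")


def extract_answer(completion: str) -> Optional[str]:
    """Return the text after 'The answer is', or None if the phrase is absent."""
    match = ANSWER.search(completion.strip())
    return match.group(1) if match else None


print(extract_answer("After eating 35, they had 74 - 35 = 39 pieces left. The answer is 39."))
# 39
print(extract_answer("24 hours later is one day after today. The answer is 04/20/1969."))
# 04/20/1969
```

Returning `None` when the phrase is missing lets a caller distinguish a malformed completion from a wrong answer.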

### Key Observations

The diagram contrasts prompts with and without textual instructions. The phrase "Let's think step by step" is intended to steer the LLM toward a more structured, step-by-step response. The diagram also contrasts providing a few worked examples (Few-shot) with providing none (Zero-shot); it shows prompt structure only and reports no performance results.

### Interpretation

This diagram illustrates a key technique in prompting Large Language Models (LLMs): Chain-of-Thought (CoT) prompting. CoT prompting encourages the LLM to articulate its reasoning process, leading to more accurate and interpretable results. The comparison of the three approaches (Few-shot w/o instructions, Few-shot w/ instructions, and Zero-shot) shows how examples and explicit instructions can each be used to guide the LLM's reasoning. The "Let's think step by step" instruction acts as a trigger, prompting the LLM to break down the problem into smaller, more manageable steps. The diagram suggests that even simple textual instructions can improve the performance of LLMs on complex reasoning tasks, and it serves as a visual aid for understanding and experimenting with different CoT prompting strategies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparison of Chain-of-Thought (CoT) Prompt Structures

### Overview

The image is a technical diagram illustrating three different structures for Chain-of-Thought (CoT) prompting, used to guide AI models in reasoning tasks. It visually compares "Few-shot CoT prompt w/o textual instructions," "Few-shot CoT prompt w/ textual instructions," and "Zero-shot CoT prompt." The diagram uses color-coded boxes and labels to break down the components of each prompt type.

### Components/Axes

The diagram is organized into three main rectangular boxes arranged horizontally, each representing a prompt type.

**1. Left Box: "Few-shot CoT prompt w/o textual instructions"**

* **Title:** "A CoT prompt for Mathematical Reasoning"

* **Structure:** Divided into three labeled sections:

* **Question** (Blue label, top-left): Contains the user's query.

* **Rationale** (Orange label, middle-left): Contains the step-by-step reasoning.

* **Answer** (Green label, bottom-left): Contains the final answer.

* **Content:**

* **Question:** "Q: Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

* **Rationale:** "A: Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total."

* **Answer:** "The answer is 39."

* **Query Section:** Below the main box, a separate purple-bordered box labeled "Query" contains a new problem: "Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"

**2. Middle Box: "Few-shot CoT prompt w/ textual instructions"**

* **Title:** "A CoT prompt for Date Reasoning"

* **Structure:** Same three-section layout (Question, Rationale, Answer).

* **Key Difference:** A green dashed arrow labeled **"Textual Instructions"** points from the right side of this box to the rationale text, highlighting the inclusion of explicit reasoning instructions.

* **Content:**

* **Question:** "Q: It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?"

* **Rationale:** "A: Today is 04/19/1969. **24 hours later** is one day after today, which would be 04/20/1969. **Let's think step by step.**" (The bolded phrases are the "textual instructions").

* **Answer:** "The answer is 04/20/1969."

* **Query Section:** Below, a purple-bordered "Query" box contains: "Q: Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY? **Let's think step by step.**"

**3. Right Box: "Zero-shot CoT prompt"**

* **Title:** "A CoT prompt for Commonsense Reasoning"

* **Structure:** A single, simpler box containing only a question and the instruction "Let's think step by step." It lacks the separate Rationale and Answer sections shown in the few-shot examples.

* **Content:**

* **Question:** "Q: Who lived longer, Theodor Haecker or Harry Vaughan Watkins?"

* **Instruction:** "Let's think step by step."

### Detailed Analysis

* **Spatial Grounding:** The "Demonstrations" label (blue) is positioned to the far left, indicating the first two boxes serve as examples. The "Query" boxes are consistently placed below their respective demonstration boxes. The "Textual Instructions" label and arrow are in the upper-right quadrant, specifically referencing the middle box.

* **Trend Verification:** The diagram demonstrates a progression in prompt structure:

1. **Few-shot w/o instructions:** Provides complete example demonstrations (Q, Rationale, A) but no explicit directive on *how* to reason.

2. **Few-shot w/ instructions:** Provides the same complete demonstrations but embeds the key phrase "Let's think step by step" within the rationale, explicitly modeling the desired reasoning process.

3. **Zero-shot:** Provides no worked examples. It only gives the target question followed by the imperative instruction "Let's think step by step," relying on the model's inherent capability to generate a reasoning chain.

* **Component Isolation:**

* **Header Region:** Contains the titles for each prompt type.

* **Main Chart Region:** Contains the three core prompt structure boxes and their internal Q/R/A sections.

* **Footer Region:** Contains the "Query" boxes for the first two types, showing how the learned structure is applied to a new problem.
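The three prompt structures described above can be sketched with a single builder that varies only in whether demonstrations and an instruction are supplied. This is an illustrative sketch, not the figure's authors' code: `Demonstration` and `build_cot_prompt` are hypothetical names, and placing the instruction directly after `A:` is an assumption, since the figure's exact layout is not recoverable from the description.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Demonstration:
    question: str
    rationale: str
    answer: str


def build_cot_prompt(
    query: str,
    demonstrations: Sequence[Demonstration] = (),
    instruction: Optional[str] = None,
) -> str:
    """Assemble a CoT prompt; no demonstrations gives the zero-shot form."""
    # Assumption: the instruction, when present, opens every answer slot.
    lead = f"A: {instruction}" if instruction else "A:"
    blocks = [
        f"Q: {d.question}\n{lead} {d.rationale} The answer is {d.answer}."
        for d in demonstrations
    ]
    # The final block leaves the answer open for the model to complete.
    blocks.append(f"Q: {query}\n{lead}")
    return "\n\n".join(blocks)


math_demo = Demonstration(
    question="Leah had 32 chocolates and her sister had 42. If they ate 35, "
             "how many pieces do they have left in total?",
    rationale="Originally, Leah had 32 chocolates and her sister had 42. "
              "So in total they had 32 + 42 = 74. "
              "After eating 35, they had 74 - 35 = 39 pieces left in total.",
    answer="39",
)

# Few-shot without textual instructions.
print(build_cot_prompt(
    "If there are 3 cars in the parking lot and 2 more cars arrive, "
    "how many cars are in the parking lot?",
    demonstrations=[math_demo],
))

# Zero-shot: no demonstrations, instruction only.
print(build_cot_prompt(
    "Who lived longer, Theodor Haecker or Harry Vaughan Watkins?",
    instruction="Let's think step by step.",
))
```

Passing both `demonstrations` and `instruction` yields the few-shot variant with textual instructions.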

### Key Observations

1. **Structural Evolution:** The diagram clearly shows the architectural difference between few-shot learning (which provides examples) and zero-shot learning (which does not).

2. **Role of Textual Instructions:** The middle box highlights that the simple phrase "Let's think step by step," added to the demonstration, is what the diagram labels "Textual Instructions": an explicit statement of the desired reasoning behavior.

3. **Domain Variation:** The examples cover different reasoning domains: arithmetic (mathematical), calendar calculation (temporal), and biographical comparison (commonsense), suggesting the CoT method's broad applicability.

4. **Visual Coding:** Color is used functionally: blue for questions, orange for rationales, green for answers, and purple for new queries. Dashed lines group related components.

### Interpretation

This diagram serves as a technical schematic for designing effective prompts to elicit reasoning from AI models. It illustrates a core principle in prompt engineering: **explicitly demonstrating the desired intermediate reasoning steps ("Rationale") significantly structures the model's output.**

The progression from left to right shows increasing reliance on the model's internal capabilities versus external guidance. The "Few-shot w/o textual instructions" prompt relies on pattern matching from examples. The "Few-shot w/ textual instructions" prompt adds a crucial layer of explicit meta-instruction, making the desired process unambiguous. The "Zero-shot" prompt removes the safety net of examples entirely, testing if the instruction alone is sufficient to trigger the reasoning chain.

The inclusion of different domains (math, dates, commonsense) implies that the CoT prompting framework is a general-purpose technique for improving performance on tasks requiring multi-step logic, not just a solution for a single problem type. The "Query" sections demonstrate the practical application: after seeing the structured demonstrations, the model is expected to apply the same Q->Rationale->Answer format to novel problems.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Comparison of Chain-of-Thought (CoT) Prompts for Reasoning Tasks

### Overview

The image compares three types of CoT prompts across reasoning tasks:

1. **Mathematical Reasoning** (few-shot without textual instructions)

2. **Date Reasoning** (few-shot with textual instructions)

3. **Commonsense Reasoning** (zero-shot, with the textual instruction "Let's think step by step.")

Each section demonstrates how prompts are structured, with color-coded elements (e.g., questions, demonstrations, answers) and textual instructions highlighted in green.

---

### Components/Axes

#### Labels and Structure

- **Legend**:

- "Textual Instructions" (highlighted in green, positioned on the far right).

- **Sections**:

1. **Mathematical Reasoning** (leftmost):

- Title: "A CoT prompt for Mathematical Reasoning"

- Subsections:

- **Demonstrations**: Labeled "Demonstrations" (blue), containing:

- **Rationale** (orange): Step-by-step reasoning.

- **Answer** (blue): Final answer.

- **Query**: Purple box with a new problem.

2. **Date Reasoning** (middle):

- Title: "A CoT prompt for Date Reasoning"

- Subsections:

- **Demonstrations**: Same structure as Mathematical Reasoning.

- **Query**: Purple box with a date-related problem.

3. **Commonsense Reasoning** (rightmost):

- Title: "A CoT prompt for Commonsense Reasoning"

- Subsections:

- **Demonstrations**: Absent (zero-shot).

- **Query**: Purple box with a commonsense question.

#### Color Coding

- **Blue**: Questions, answers, and titles.

- **Green**: Textual instructions (e.g., "Let's think step by step").

- **Orange**: Rationale (step-by-step reasoning).

- **Purple**: Queries (new problems).

- **Yellow/Red**: Highlighted text in examples (e.g., numbers in math problems).

---

### Detailed Analysis

#### Mathematical Reasoning (Few-Shot)

- **Question**: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

- **Demonstration**:

- **Rationale**:

- "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total."

- **Answer**: "The answer is 39."

- **Query**: "If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"

#### Date Reasoning (Few-Shot)

- **Question**: "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?"

- **Demonstration**:

- **Rationale**:

- "Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969."

- **Answer**: "The answer is 04/20/1969."

- **Query**: "Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?"

#### Commonsense Reasoning (Zero-Shot)

- **Question**: "Who lived longer, Theodor Haecker or Harry Vaughan Watkins?"

- **Demonstration**: None (zero-shot).

- **Query**: Same as the question above.

---

### Key Observations

1. **Textual Instructions**:

- Green-highlighted phrases like "Let's think step by step" guide reasoning in few-shot examples.

2. **Color Consistency**:

- Blue consistently marks questions/answers, while orange denotes rationale.

3. **Zero-Shot Limitation**:

- The Commonsense Reasoning section lacks demonstrations, relying solely on the query.

4. **Numerical Examples**:

- The math example uses explicit arithmetic (32 + 42 = 74, 74 - 35 = 39), while the date example advances the given date by one day (04/19/1969 to 04/20/1969).

---

### Interpretation

- **Purpose**: The diagram illustrates how CoT prompts improve reasoning by providing structured examples (few-shot) versus relying on raw queries (zero-shot).

- **Effectiveness**:

- Few-shot prompts with textual instructions (green) enable step-by-step reasoning, as seen in math and date examples.

- Zero-shot prompts (Commonsense Reasoning) lack scaffolding, potentially reducing accuracy.

- **Design Choice**:

- Color coding enhances readability, separating questions (blue), reasoning (orange), and answers (blue).

- Textual instructions act as a "scaffold" for logical progression.

This visualization emphasizes the importance of guided examples in complex reasoning tasks, while highlighting the limitations of zero-shot approaches.
