## Diagram: Chain-of-Thought (CoT) Prompt Examples

### Overview

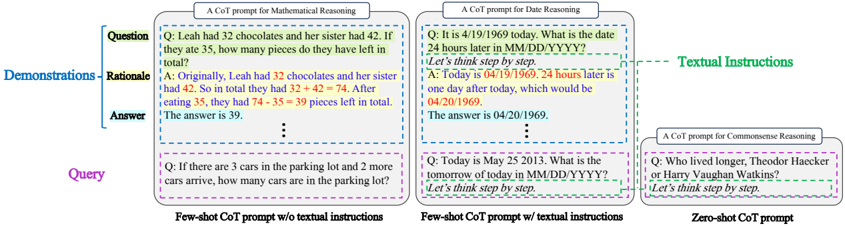

The image is a comparative diagram of three Chain-of-Thought (CoT) prompting strategies for Large Language Models (LLMs): few-shot CoT without textual instructions, few-shot CoT with textual instructions, and zero-shot CoT. The few-shot examples are split into "Demonstrations" and "Query" sections, and each demonstration is broken down into "Question", "Rationale", and "Answer". The zero-shot example, as transcribed below, consists only of a question followed by the textual instruction.

### Components

The diagram is organized into three columns, one per CoT prompting approach. The few-shot columns are divided into a "Demonstrations" section (top) and a "Query" section (bottom). A demonstration contains three sub-sections: "Question", "Rationale", and "Answer". The query contains only the new question, plus the textual instruction where one is used. A green "Textual Instructions" label points to the instruction text in the "Few-shot CoT prompt w/ textual instructions" column.

### Content Details

**1. Few-shot CoT Prompt w/o textual instructions (Left Column - Blue Border)**

* **Question:** "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

* **Rationale:** "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total."

* **Answer:** "The answer is 39."

* **Query:** "If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot?"
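The rationale in this demonstration is a two-step calculation. The short Python sketch below replays those two steps to confirm the arithmetic and the final answer; the variable names are illustrative and do not appear in the diagram.

```python
# Quantities taken from the demonstration question.
leah = 32
sister = 42
eaten = 35

# Step 1 of the rationale: combine both counts.
total = leah + sister
# Step 2 of the rationale: subtract what was eaten.
remaining = total - eaten

print(f"{leah} + {sister} = {total}")
print(f"{total} - {eaten} = {remaining}")
print(f"The answer is {remaining}.")
```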

**2. Few-shot CoT Prompt w/ textual instructions (Center Column - Light Blue Border)**

* **Question:** "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."

* **Rationale:** "Today is 04/19/1969. 24 hours later is one day after today, which would be 04/20/1969."

* **Answer:** "The answer is 04/20/1969."

* **Query:** "Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?"

* **Textual Instructions:** "Let's think step by step."
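The date rationale above can be checked mechanically. The sketch below uses Python's standard `datetime` module to add 24 hours to the demonstration date; `add_hours` is a hypothetical helper named here for illustration. The second call applies the same step to the query date, whose answer the diagram leaves for the model to produce.

```python
from datetime import datetime, timedelta

FMT = "%m/%d/%Y"  # the MM/DD/YYYY format requested in the question


def add_hours(date_text: str, hours: int) -> str:
    """Parse an MM/DD/YYYY date, add the given number of hours, and format it back."""
    start = datetime.strptime(date_text, FMT)
    return (start + timedelta(hours=hours)).strftime(FMT)


# Demonstration: "It is 4/19/1969 today. What is the date 24 hours later?"
demo_result = add_hours("4/19/1969", 24)
print(demo_result)

# Query: May 25 2013, restated numerically (not answered in the diagram).
query_result = add_hours("05/25/2013", 24)
print(query_result)
```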

**3. Zero-shot CoT Prompt (Right Column - Pink Border)**

* **Question:** "Who lived longer, Theodor Haecker or Harry Vaughan Watkins?"

* **Textual Instructions:** "Let's think step by step."

**General Observations:**

* The "Demonstrations" section provides examples of how the LLM should reason through a problem.

* The "Query" section presents a new problem for the LLM to solve.

* The "Rationale" section shows the step-by-step reasoning process.

* The "Answer" section provides the final solution.

* The "Textual Instructions" ("Let's think step by step.") are included in the Few-shot CoT with textual instructions and Zero-shot CoT examples.
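The three layouts described above differ only in whether demonstrations are present and whether the instruction is inserted. A minimal sketch of a prompt builder that covers all three follows. The `Demo` class, the `build_prompt` function, and the `Q:`/`A:` labels are assumptions made for illustration; the diagram does not specify how the sections are serialized into text.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

TRIGGER = "Let's think step by step."


@dataclass
class Demo:
    question: str
    rationale: str
    answer: str


def build_prompt(query: str,
                 demos: Sequence[Demo] = (),
                 instruction: Optional[str] = None) -> str:
    """Assemble a CoT prompt; the Q:/A: layout is an assumed serialization."""
    parts = []
    for demo in demos:
        reasoning = f"{demo.rationale} {demo.answer}"
        if instruction:
            # With instructions, the trigger precedes each demonstrated rationale.
            reasoning = f"{instruction} {reasoning}"
        parts.append(f"Q: {demo.question}\nA: {reasoning}")
    tail = f"Q: {query}\nA:"
    if instruction:
        # The trigger is repeated after the query so the model continues from it.
        tail += f" {instruction}"
    parts.append(tail)
    return "\n\n".join(parts)


chocolates = Demo(
    "Leah had 32 chocolates and her sister had 42. If they ate 35, "
    "how many pieces do they have left in total?",
    "Originally, Leah had 32 chocolates and her sister had 42. So in total "
    "they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces "
    "left in total.",
    "The answer is 39.",
)
date = Demo(
    "It is 4/19/1969 today. What is the date 24 hours later in MM/DD/YYYY?",
    "Today is 04/19/1969. 24 hours later is one day after today, "
    "which would be 04/20/1969.",
    "The answer is 04/20/1969.",
)

# 1. Few-shot CoT without textual instructions.
few_shot_plain = build_prompt(
    "If there are 3 cars in the parking lot and 2 more cars arrive, "
    "how many cars are in the parking lot?",
    demos=[chocolates],
)
# 2. Few-shot CoT with textual instructions.
few_shot_instructed = build_prompt(
    "Today is May 25 2013. What is the tomorrow of today in MM/DD/YYYY?",
    demos=[date],
    instruction=TRIGGER,
)
# 3. Zero-shot CoT: no demonstrations, instruction only.
zero_shot = build_prompt(
    "Who lived longer, Theodor Haecker or Harry Vaughan Watkins?",
    instruction=TRIGGER,
)

print(zero_shot)
```

Keeping the demonstrations and the instruction as independent arguments makes it straightforward to switch between the three variants while holding the query fixed.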

### Key Observations

The diagram contrasts where the reasoning cue comes from in each strategy. In the first column, the demonstration's worked rationale is the only cue. In the second, the demonstration is combined with the instruction "Let's think step by step." In the third, the instruction is the only cue and no demonstration is given. The diagram shows prompt formats only: it contains no model outputs or accuracy figures, so it does not by itself show that few-shot prompting outperforms zero-shot prompting.

### Interpretation

This diagram illustrates Chain-of-Thought (CoT) prompting, a technique for prompting Large Language Models (LLMs). CoT prompting encourages the LLM to articulate its reasoning before giving a final answer, with the aim of producing more accurate and more interpretable results. The three columns show the two levers available for guiding that reasoning: worked demonstrations (few-shot) and an explicit instruction. The "Let's think step by step" instruction acts as a trigger, prompting the LLM to break the problem into smaller, more manageable steps, and the zero-shot column suggests that this instruction alone can be used to elicit such reasoning. The diagram serves as a visual aid for understanding and experimenting with these prompting strategies.
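Both demonstrations in the diagram end with the fixed phrase "The answer is ...". One practical consequence, sketched below under the assumption that the model imitates this phrasing in its completion, is that the final answer can be separated from the rationale with a simple pattern. The `extract_answer` helper is hypothetical and is not part of the diagram.

```python
import re
from typing import Optional

# Matches the closing phrase used in the demonstrations, e.g. "The answer is 39."
ANSWER_PATTERN = re.compile(r"The answer is\s+(.+?)\.?\s*$", re.MULTILINE)


def extract_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'The answer is', or None if the phrase is absent."""
    matches = ANSWER_PATTERN.findall(completion)
    return matches[-1].strip() if matches else None


chocolate_completion = (
    "Originally, Leah had 32 chocolates and her sister had 42. "
    "So in total they had 32 + 42 = 74. After eating 35, they had "
    "74 - 35 = 39 pieces left in total. The answer is 39."
)
date_completion = (
    "Today is 04/19/1969. 24 hours later is one day after today, "
    "which would be 04/20/1969. The answer is 04/20/1969."
)

print(extract_answer(chocolate_completion))
print(extract_answer(date_completion))
print(extract_answer("No final phrase here"))
```

A completion that omits the phrase yields `None`, which a caller would need to handle, for example by treating the response as unparsed.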