## CDF Plot: CDF of Δ||h|| Norms (Token vs Step)

### Overview

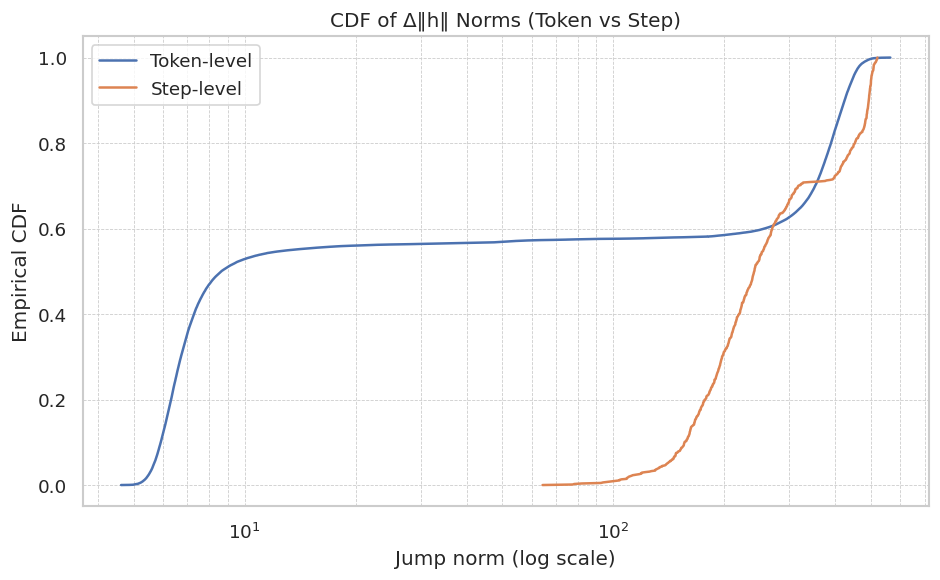

The image displays a Cumulative Distribution Function (CDF) plot comparing the distribution of "jump norms" (Δ||h||) at two different granularities: Token-level and Step-level. The plot uses a logarithmic scale for the x-axis. The title suggests this data relates to changes in hidden state norms (||h||) within a computational process, likely in the context of neural network training or analysis.

### Components/Axes

* **Title:** "CDF of Δ||h|| Norms (Token vs Step)"

* **Y-axis:** Label is "Empirical CDF". Scale ranges from 0.0 to 1.0 with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis:** Label is "Jump norm (log scale)". The axis is logarithmic, with major labeled tick marks at `10^1` (10) and `10^2` (100). The visible range extends from approximately `10^0` (1) to `10^2.5` (~316).

* **Legend:** Located in the top-left corner of the plot area.

    * **Token-level:** Represented by a solid blue line.

    * **Step-level:** Represented by a solid orange line.

* **Grid:** A light gray grid is present, aligned with the major ticks on both axes.
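
An empirical CDF like the one on these axes can be computed in a few lines. The following is a minimal sketch, not the code that produced the figure: the sample values and the helper names `empirical_cdf` and `cdf_at` are hypothetical, chosen only to illustrate the calculation and the log-scaled x positions.

```python
from bisect import bisect_right
import math


def empirical_cdf(samples):
    """Return (xs, ps): the sorted values and the fraction of samples <= each value."""
    if not samples:
        raise ValueError("need at least one sample")
    xs = sorted(samples)
    n = len(xs)
    ps = [(i + 1) / n for i in range(n)]
    return xs, ps


def cdf_at(sorted_xs, value):
    """Fraction of the sorted samples that are <= value."""
    return bisect_right(sorted_xs, value) / len(sorted_xs)


# Hypothetical jump norms (assumption: not read from the figure).
jump_norms = [2.0, 3.0, 5.0, 6.0, 150.0, 200.0, 250.0, 300.0]
xs, ps = empirical_cdf(jump_norms)

# Positions of the samples on a base-10 logarithmic x-axis.
log_xs = [math.log10(x) for x in xs]
```

With these made-up samples, `cdf_at(xs, 10.0)` is 0.5 because half of the values lie at or below 10.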

### Detailed Analysis

**1. Token-level (Blue Line) Trend & Data Points:**

* **Trend:** The curve rises in two phases, which indicates a bimodal distribution. It rises very steeply at low jump norms, plateaus over a wide range, and then rises steeply again at high jump norms.

* **Data Points (Approximate):**

    * The CDF begins to rise from 0 at a jump norm of approximately `10^0.3` (~2).

    * It reaches a CDF of ~0.5 at a jump norm of approximately `10^0.8` (~6.3).

    * The curve then flattens significantly, forming a long plateau. The CDF increases very slowly from ~0.55 to ~0.60 as the jump norm increases from `10^1` (10) to `10^2` (100).

    * After `10^2` (100), the line rises steeply again.

    * It reaches a CDF of ~0.9 at a jump norm of approximately `10^2.3` (~200).

    * It approaches and reaches a CDF of 1.0 at a jump norm of approximately `10^2.5` (~316).

**2. Step-level (Orange Line) Trend & Data Points:**

* **Trend:** The curve has a single, steep sigmoidal rise, indicating a unimodal distribution that is shifted significantly to the right (higher values) compared to the initial rise of the Token-level line.

* **Data Points (Approximate):**

    * The CDF begins to rise from 0 at a jump norm of approximately `10^1.8` (~63).

    * It reaches a CDF of ~0.2 at a jump norm of approximately `10^2.1` (~126).

    * It reaches a CDF of ~0.5 at a jump norm of approximately `10^2.2` (~158).

    * It reaches a CDF of ~0.8 at a jump norm of approximately `10^2.4` (~251).

    * It converges with the Token-level line, approaching and reaching a CDF of 1.0 at a jump norm of approximately `10^2.5` (~316).
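
The parenthetical values in the lists above are the exponent readings converted from the log axis to linear jump norms. A short sketch of that conversion follows; the dictionary keys are hypothetical labels introduced here for illustration, while the exponents are the approximate readings quoted above.

```python
# Exponents read off the log-scaled x-axis (approximate, from the lists above).
# The key names are illustrative labels, not terms used in the figure.
exponent_readings = {
    "token_onset": 0.3,
    "token_cdf_0.5": 0.8,
    "token_cdf_0.9": 2.3,
    "step_onset": 1.8,
    "step_cdf_0.2": 2.1,
    "step_cdf_0.5": 2.2,
    "step_cdf_0.8": 2.4,
    "common_maximum": 2.5,
}

# A position e on a base-10 log axis corresponds to the linear value 10**e.
linear_values = {name: 10 ** exp for name, exp in exponent_readings.items()}

for name, value in linear_values.items():
    print(f"{name}: 10^{exponent_readings[name]} = {value:.1f}")
```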

**3. Cross-Reference & Intersection:**

* The two lines intersect at a CDF value of approximately 0.62. This occurs at a jump norm of roughly `10^2.25` (~178).

* For jump norms below ~`10^2.25`, the Token-level CDF is higher than the Step-level CDF: at any such threshold, a larger fraction of token-level jumps than step-level jumps fall at or below it.

* For jump norms above ~`10^2.25`, the Step-level CDF is higher: a larger fraction of step-level jumps than token-level jumps fall at or below these large thresholds, although both CDFs are close to 1.0 by then.
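
If the raw samples were available, a crossing like this could be located numerically rather than read off the plot. The sketch below is one possible approach under stated assumptions: the function `first_crossing` and both sample lists are hypothetical, and the synthetic data only mimics the qualitative shapes (bimodal versus unimodal), not the values in the figure.

```python
from bisect import bisect_right


def cdf_at(sorted_xs, value):
    """Fraction of the sorted samples that are <= value."""
    return bisect_right(sorted_xs, value) / len(sorted_xs)


def first_crossing(samples_a, samples_b):
    """Return the first observed value at which the sign of F_a - F_b flips.

    Both empirical CDFs are evaluated at every observed value. Points where
    the two CDFs are exactly equal are skipped. Returns None if the sign of
    the difference never changes.
    """
    a, b = sorted(samples_a), sorted(samples_b)
    previous_sign = None
    for x in sorted(set(a) | set(b)):
        diff = cdf_at(a, x) - cdf_at(b, x)
        sign = (diff > 0) - (diff < 0)
        if sign == 0:
            continue
        if previous_sign is not None and sign != previous_sign:
            return x
        previous_sign = sign
    return None


# Synthetic samples (assumption: illustrative only, not data from the plot).
token_like = [2.0, 3.0, 4.0, 5.0, 6.0, 200.0, 250.0, 300.0, 310.0, 315.0]
step_like = [100.0, 120.0, 150.0, 160.0, 170.0, 180.0, 190.0, 210.0, 220.0, 230.0]

crossing = first_crossing(token_like, step_like)
```

For these synthetic samples the token-like CDF stays above the step-like CDF until the step-like curve overtakes it at 180.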

### Key Observations

1. **Distinct Distributions:** The Token-level and Step-level jump norms follow fundamentally different distributions. Token-level jumps are heavily concentrated at very small values (first steep rise) and very large values (second steep rise), with relatively few jumps of intermediate size (the plateau). Step-level jumps are concentrated in a single, higher range.

2. **Scale Difference:** Just over half (~55%) of token-level jumps have a norm less than ~10, while essentially all step-level jumps have a norm greater than ~63, the point where the Step-level CDF first rises above 0.

3. **Convergence at Extremes:** Both distributions converge to a CDF of 1.0 at approximately the same maximum jump norm (~316), suggesting a common upper bound or scaling factor in the system being measured.

4. **Plateau Significance:** The long plateau in the Token-level CDF between norms of 10 and 100 is a critical feature, indicating a "gap" or scarcity of token-level changes of this intermediate magnitude.
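
The size of that gap can be estimated directly from the approximate CDF readings, because the fraction of samples in an interval is the difference of the CDF at its endpoints. The sketch below uses only the Token-level values quoted earlier (~0.55 at 10 and ~0.60 at 100); the variable names are illustrative.

```python
# Approximate Token-level CDF readings taken from the description of the plot.
cdf_at_10 = 0.55
cdf_at_100 = 0.60

# Fraction of token-level jumps in each region: P(a < X <= b) = F(b) - F(a).
lower_mode_mass = cdf_at_10               # jumps with norm <= 10
plateau_mass = cdf_at_100 - cdf_at_10     # jumps with norm in (10, 100]
upper_mode_mass = 1.0 - cdf_at_100        # jumps with norm > 100

print(f"norm <= 10:       {lower_mode_mass:.2f}")
print(f"10 < norm <= 100: {plateau_mass:.2f}")
print(f"norm > 100:       {upper_mode_mass:.2f}")
```

Under these readings, a full decade of the x-axis holds only about 5% of the token-level jumps, with roughly 55% below it and 40% above it.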

### Interpretation

This plot compares the magnitude of changes (Δ||h||) in a hidden state vector `h` at two different temporal resolutions: per token processed and per step. The plot itself does not define "step"; the reading below takes it to be an optimization step.

* **Token-level Dynamics:** The bimodal distribution suggests two primary regimes of change at the token level. The first, very frequent small jumps likely correspond to routine, incremental updates as the model processes each token. The second, less frequent but large jumps could indicate significant state transitions, perhaps triggered by specific tokens or context shifts. The plateau implies that changes of intermediate size are rare, pointing to a potential "all-or-nothing" characteristic in the hidden state updates at this granularity.

* **Step-level Dynamics:** The unimodal, right-shifted distribution indicates that the cumulative change over an entire optimization step is typically much larger than most individual token-level changes. This is expected, as a step aggregates many token updates. The shape suggests a more consistent magnitude of update per step, perhaps log-normally distributed given the logarithmic x-axis.

* **Relationship:** The intersection point (~178) acts as a threshold. Below it, the Token-level CDF is higher, so a larger share of token-level changes than step-level changes fall under any given norm; above it, the Step-level CDF is higher. The convergence at the high end suggests that the largest single-token jumps can be as significant as the total change over a full step, which may highlight the impact of specific, critical tokens in the sequence.

* **Underlying System:** In the context of neural networks (e.g., Transformers), this could reflect the difference between the immediate, sometimes volatile, effect of a single forward/backward pass on a hidden state versus the smoothed, aggregated update applied to the model's parameters after a batch of data. The data could be used to diagnose training stability, understand the contribution of individual tokens, or calibrate update scaling.
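
The plot does not state how the jump norms were computed. As one possible reading, the sketch below assumes a jump norm is the Euclidean norm of the difference between consecutive hidden states, and that step-level jumps compare the states at step boundaries. The function names, the toy hidden states, and the boundary indices are all hypothetical.

```python
import math


def l2_norm(vec):
    """Euclidean norm of a vector given as a list of floats."""
    return math.sqrt(sum(v * v for v in vec))


def jump_norms(states):
    """Norm of the difference between each pair of consecutive states.

    Assumption: 'jump norm' means ||h_next - h_prev||. The source does not
    define it, and |(||h_next|| - ||h_prev||)| is another possible reading.
    """
    return [
        l2_norm([b - a for a, b in zip(prev, curr)])
        for prev, curr in zip(states, states[1:])
    ]


# Toy hidden states, one per token (assumption: illustrative values only).
hidden = [[0.0, 0.0], [3.0, 4.0], [3.0, 4.0], [6.0, 8.0], [6.0, 8.0]]

# Hypothetical token indices at which a step begins or ends.
step_boundaries = [0, 2, 4]

token_jumps = jump_norms(hidden)
step_jumps = jump_norms([hidden[i] for i in step_boundaries])
```

In this toy sequence the token-level jumps alternate between 5 and 0, while each step-level jump is 5, which shows how per-step aggregation can remove the small-jump mode seen at the token level.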