
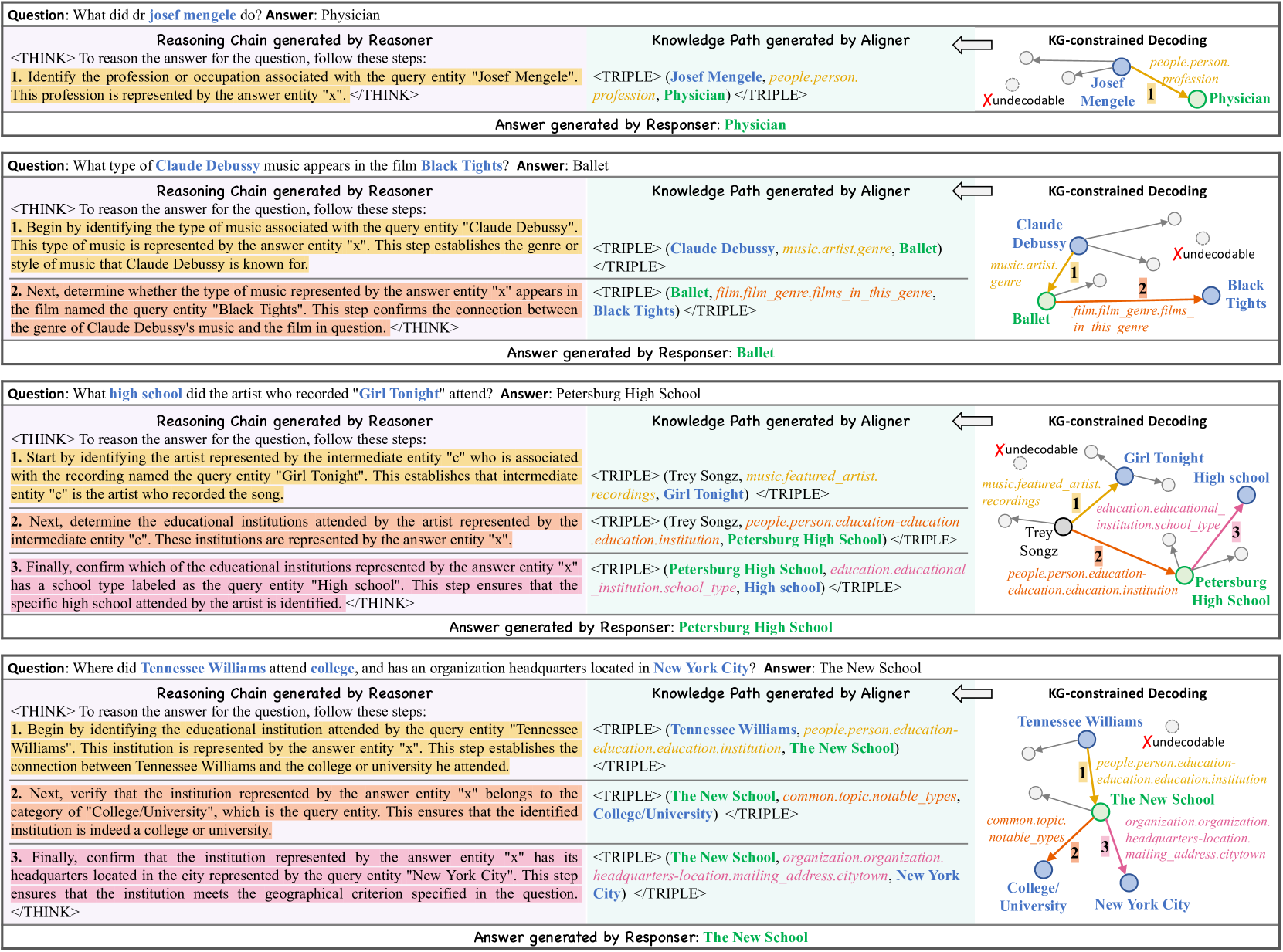

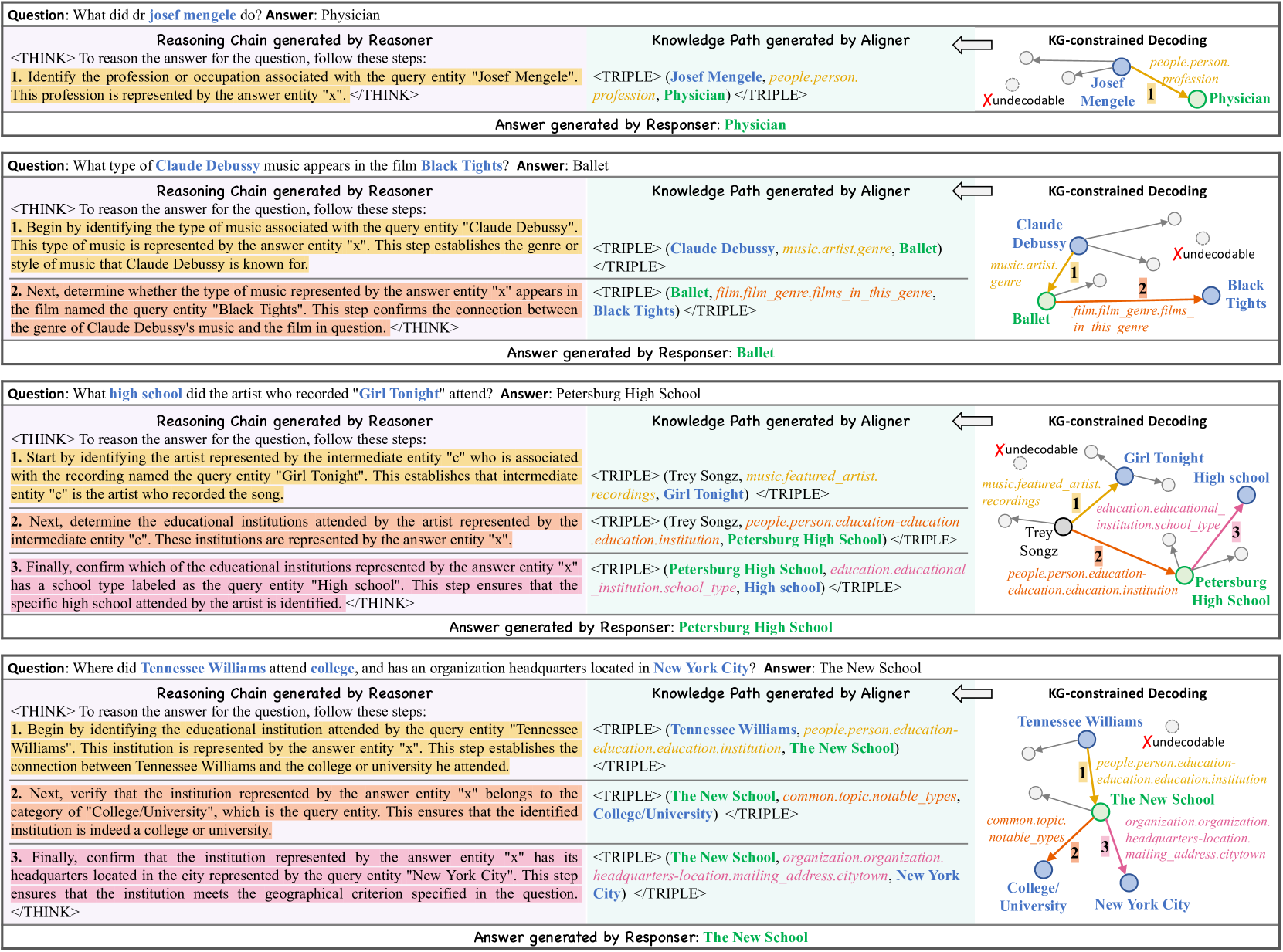

## [Diagram]: Knowledge Graph-Constrained Reasoning Process Examples

### Overview

The image displays four distinct examples of a question-answering system's internal process. Each example follows a consistent structure, demonstrating how a "Reasoner" generates a step-by-step reasoning chain, an "Aligner" maps this to a knowledge graph path (as triples), and a "KG-constrained Decoding" diagram visualizes the entity relationships. The final answer is generated by a "Responder." The language is English throughout.

### Components/Axes

The image is segmented into four horizontal blocks, each representing one question-answer example. Each block contains five primary components arranged from left to right:

1. **Question & Answer Header:** A line stating the question and the final answer.

2. **Reasoning Chain generated by Reasoner:** A `<THINK>` block containing numbered, step-by-step logical reasoning.

3. **Knowledge Path generated by Aligner:** A series of `<TRIPLE>` elements that formalize the reasoning steps into structured data (subject, predicate, object).

4. **KG-constrained Decoding Diagram:** A visual graph with nodes (circles) and directed edges (arrows) representing entities and their relationships. Nodes are color-coded (blue, green, orange) and some are labeled "undecodable." Numbered steps (1, 2, 3) correspond to the reasoning steps.

5. **Answer generated by Responder:** The final answer, highlighted in green.
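The `<TRIPLE>` elements described above can be read into structured records. The following is a minimal sketch, assuming the surface format seen in the figure, `<TRIPLE> (subject, predicate, object) </TRIPLE>`; the names `Triple` and `parse_triples` are illustrative and do not come from the system shown.

```python
import re
from typing import List, NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Assumed surface format, taken from the figure: <TRIPLE> (s, p, o) </TRIPLE>
TRIPLE_RE = re.compile(r"<TRIPLE>\s*\((.*?)\)\s*</TRIPLE>", re.DOTALL)


def parse_triples(text: str) -> List[Triple]:
    """Extract (subject, predicate, object) records from Aligner-style output."""
    triples = []
    for body in TRIPLE_RE.findall(text):
        parts = [part.strip() for part in body.split(",")]
        # Bodies that do not split into exactly three fields are skipped.
        if len(parts) == 3:
            triples.append(Triple(*parts))
    return triples


example = "<TRIPLE> (Josef Mengele, people.person.profession, Physician) </TRIPLE>"
print(parse_triples(example))
```

This simple comma split would drop entity names that themselves contain commas; a real parser would need a delimiter scheme that the figure does not specify.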

### Detailed Analysis

**Example 1:**

* **Question:** What did dr josef mengele do? **Answer:** Physician

* **Reasoning Chain:** 1. Identify the profession associated with "Josef Mengele". This profession is represented by the answer entity "x".

* **Knowledge Path:** `<TRIPLE> (Josef Mengele, people.person.profession, Physician) </TRIPLE>`

* **KG Diagram:** Shows a path from node "Josef Mengele" (blue) via edge "people.person.profession" (step 1) to node "Physician" (green). An "undecodable" node is present but not connected to the main path.

**Example 2:**

* **Question:** What type of Claude Debussy music appears in the film Black Tights? **Answer:** Ballet

* **Reasoning Chain:** 1. Identify the type of music associated with "Claude Debussy". 2. Determine if this type appears in the film "Black Tights".

* **Knowledge Path:**

  * `<TRIPLE> (Claude Debussy, music.artist.genre, Ballet) </TRIPLE>`

  * `<TRIPLE> (Ballet, film.film_genre.films_in_this_genre, Black Tights) </TRIPLE>`

* **KG Diagram:** A two-step path: "Claude Debussy" (blue) -> "Ballet" (green, step 1) -> "Black Tights" (blue, step 2). An "undecodable" node is present.

**Example 3:**

* **Question:** What high school did the artist who recorded "Girl Tonight" attend? **Answer:** Petersburg High School

* **Reasoning Chain:** 1. Identify the artist ("c") associated with "Girl Tonight". 2. Determine the educational institutions attended by artist "c". 3. Confirm which institution is a "High school".

* **Knowledge Path:**

  * `<TRIPLE> (Trey Songz, music.featured_artist.recordings, Girl Tonight) </TRIPLE>`

  * `<TRIPLE> (Trey Songz, people.person.education-education.education.institution, Petersburg High School) </TRIPLE>`

  * `<TRIPLE> (Petersburg High School, education.educational_institution.school_type, High school) </TRIPLE>`

* **KG Diagram:** A three-step path: "Girl Tonight" (blue) -> "Trey Songz" (orange, step 1) -> "Petersburg High School" (green, step 2) -> "High school" (blue, step 3). An "undecodable" node is present.

**Example 4:**

* **Question:** Where did Tennessee Williams attend college, and has an organization headquarters located in New York City? **Answer:** The New School

* **Reasoning Chain:** 1. Identify the educational institution attended by "Tennessee Williams". 2. Verify the institution is a "College/University". 3. Confirm its headquarters are in "New York City".

* **Knowledge Path:**

  * `<TRIPLE> (Tennessee Williams, people.person.education-education.education.institution, The New School) </TRIPLE>`

  * `<TRIPLE> (The New School, common.topic.notable_types, College/University) </TRIPLE>`

  * `<TRIPLE> (The New School, organization.organization.headquarters-location.mailing_address.citytown, New York City) </TRIPLE>`

* **KG Diagram:** A three-step path: "Tennessee Williams" (blue) -> "The New School" (green, step 1) -> "College/University" (blue, step 2) and "New York City" (blue, step 3). An "undecodable" node is present.
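One way to read "KG-constrained" is that a triple may only be emitted if it exists in the knowledge graph, with everything else treated as undecodable. The sketch below illustrates that reading on a toy graph holding only the Example 4 triples. It is an assumption about the mechanism, not the method used by the system in the figure, and `decodable_objects`, `is_decodable`, and `validate_path` are hypothetical helper names.

```python
from typing import List, Set, Tuple

Triple = Tuple[str, str, str]

EDU = "people.person.education-education.education.institution"
TYPE = "common.topic.notable_types"
CITY = "organization.organization.headquarters-location.mailing_address.citytown"

# Toy knowledge graph containing only the triples listed for Example 4.
KG: Set[Triple] = {
    ("Tennessee Williams", EDU, "The New School"),
    ("The New School", TYPE, "College/University"),
    ("The New School", CITY, "New York City"),
}


def decodable_objects(kg: Set[Triple], subject: str, predicate: str) -> Set[str]:
    """Objects the graph allows after a given (subject, predicate) prefix."""
    return {o for s, p, o in kg if s == subject and p == predicate}


def is_decodable(kg: Set[Triple], triple: Triple) -> bool:
    """A triple is decodable only if the graph contains it."""
    s, p, o = triple
    return o in decodable_objects(kg, s, p)


def validate_path(kg: Set[Triple], path: List[Triple]) -> List[bool]:
    """Check every triple of a knowledge path against the graph."""
    return [is_decodable(kg, t) for t in path]


PATH: List[Triple] = [
    ("Tennessee Williams", EDU, "The New School"),
    ("The New School", TYPE, "College/University"),
    ("The New School", CITY, "New York City"),
]
print(validate_path(KG, PATH))
```

A token-level decoder would apply the same idea at a finer grain, for example by restricting each next token to continuations of a valid triple, but the figure does not show that level of detail.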

### Key Observations

1. **Consistent Process:** All examples follow the same pipeline: Question -> Reasoning Chain (Reasoner) -> Knowledge Path (Aligner) -> KG-constrained Decoding diagram -> Answer (Responder).

2. **Graph Structure:** The KG diagrams consistently show a linear or branching path from the query entity to the answer entity, with intermediate nodes. The "undecodable" node appears in every diagram but is never part of the correct reasoning path, suggesting it represents irrelevant or inaccessible knowledge.

3. **Color Coding:** In the diagrams, the final answer entity node is consistently colored green. The starting query entity and other intermediate entities are blue or orange. The edge labels in the diagrams match the predicates in the `<TRIPLE>` statements.

4. **Complexity Scaling:** The reasoning chain length and graph complexity increase with the question's complexity (from 1 step in Example 1 to 3 steps in Examples 3 & 4).
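The multi-hop paths noted above can be reproduced with a small traversal over the Example 3 triples. This is a sketch under stated assumptions: the `^` prefix for following an edge from object to subject is a convention invented here (the diagram starts at "Girl Tonight", while the first triple has "Trey Songz" as its subject), and `build_index` and `follow` are hypothetical names.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]

RECORDINGS = "music.featured_artist.recordings"
EDU = "people.person.education-education.education.institution"
SCHOOL_TYPE = "education.educational_institution.school_type"

# Triples copied from Example 3 of the figure.
TRIPLES: List[Triple] = [
    ("Trey Songz", RECORDINGS, "Girl Tonight"),
    ("Trey Songz", EDU, "Petersburg High School"),
    ("Petersburg High School", SCHOOL_TYPE, "High school"),
]


def build_index(triples: List[Triple]) -> Dict[Tuple[str, str], Set[str]]:
    """Index edges in both directions; inverse edges get a '^' prefix."""
    index: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for s, p, o in triples:
        index[(s, p)].add(o)
        index[(o, "^" + p)].add(s)
    return index


def follow(index: Dict[Tuple[str, str], Set[str]], start: str,
           predicates: List[str]) -> Set[str]:
    """Follow a sequence of predicates hop by hop from a start entity."""
    frontier = {start}
    for p in predicates:
        frontier = {nxt for node in frontier for nxt in index.get((node, p), set())}
    return frontier


index = build_index(TRIPLES)
# Hop 1: song -> artist (inverse edge); hop 2: artist -> institution.
print(follow(index, "Girl Tonight", ["^" + RECORDINGS, EDU]))
```

Adding `SCHOOL_TYPE` as a third hop reaches "High school", which matches the three-step path described for Example 3.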

### Interpretation

This image illustrates a **neuro-symbolic or hybrid AI system** for complex question answering. It demonstrates a clear separation of concerns:

* The **Reasoner** performs natural language understanding and logical decomposition of the question.

* The **Aligner** translates this logic into formal, structured queries against a knowledge graph (using the triple format).

* The **KG-constrained Decoding** visualizes the execution of these queries, showing the specific path through the knowledge graph that leads to the answer. The "undecodable" nodes lie off this path in every diagram, which may indicate that decoding is restricted to entities reachable in the graph, so irrelevant or noisy candidates are excluded.

* The **Responder** generates the final natural language answer.

The system's strength lies in its **explainability**. Each step of the reasoning is transparent and traceable, from the initial logical steps to the precise data relationships used. This contrasts with "black box" neural models. The examples show the system handling several relation types (profession, genre, education, location) and multi-hop reasoning (connecting a song to its artist, then to an educational institution, then to that institution's type). The four examples span different domains (history, music, film, education), but they are illustrative cases and do not by themselves establish how robust the underlying knowledge graph and reasoning engine are.
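The traceability described here can be made concrete by keeping every stage's output in one record and printing it as an audit trail. The following is a minimal sketch using the values from Example 1; the `Trace` dataclass and `render_trace` function are illustrative assumptions, not part of the system shown in the figure.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Trace:
    question: str
    reasoning_steps: List[str]                  # Reasoner output
    knowledge_path: List[Tuple[str, str, str]]  # Aligner output
    answer: str                                 # Responder output


def render_trace(trace: Trace) -> str:
    """Render each stage's output as a human-readable audit trail."""
    lines = [f"Question: {trace.question}"]
    for i, step in enumerate(trace.reasoning_steps, start=1):
        lines.append(f"Step {i}: {step}")
    for s, p, o in trace.knowledge_path:
        lines.append(f"Triple: ({s}, {p}, {o})")
    lines.append(f"Answer: {trace.answer}")
    return "\n".join(lines)


example_1 = Trace(
    question="What did dr josef mengele do?",
    reasoning_steps=['Identify the profession associated with "Josef Mengele".'],
    knowledge_path=[("Josef Mengele", "people.person.profession", "Physician")],
    answer="Physician",
)
print(render_trace(example_1))
```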
