## Textual Flowchart: QA Task Examples with Reasoning Chains and Knowledge Paths

### Overview

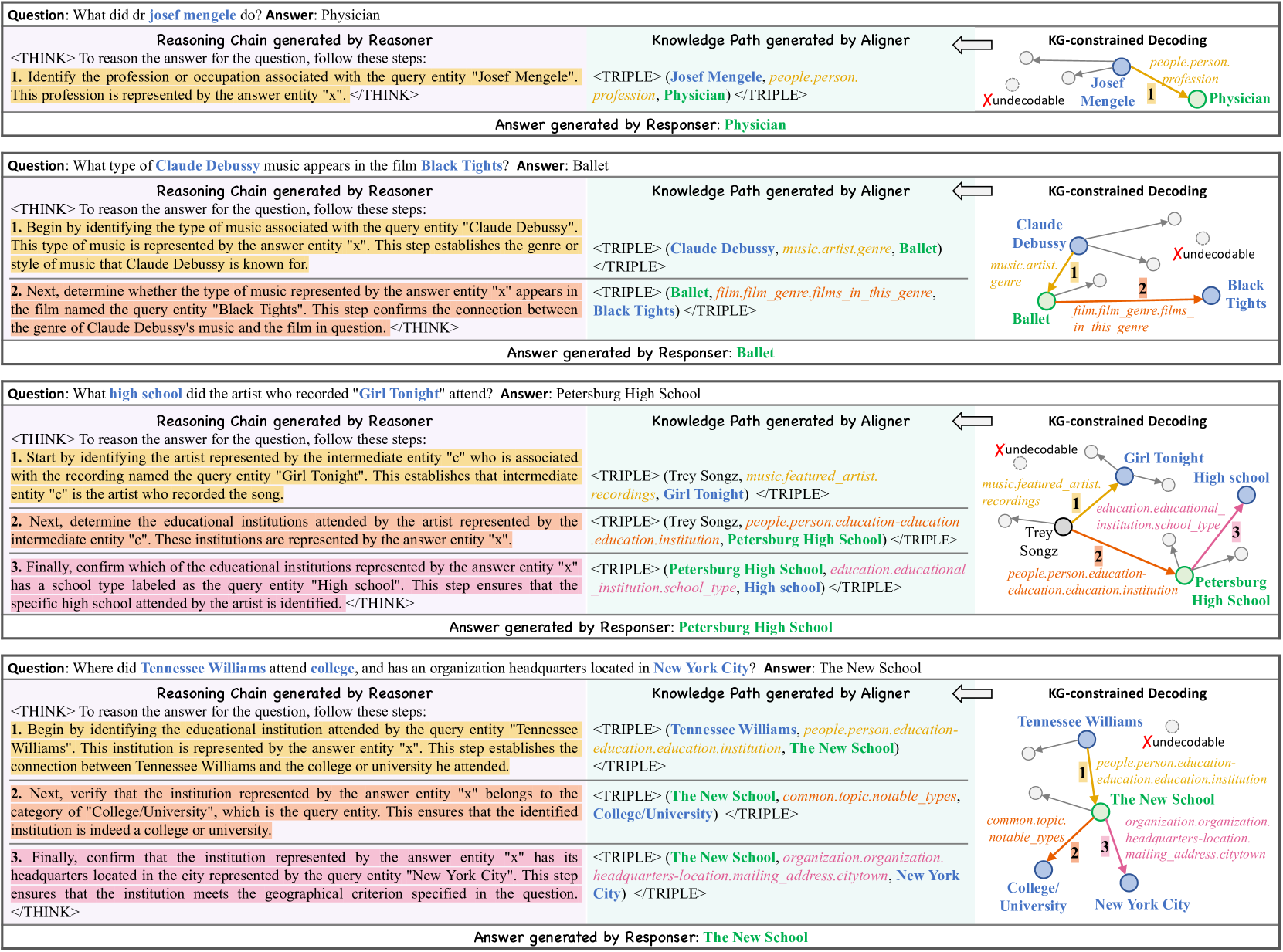

The image presents four QA task examples demonstrating a reasoning framework that combines natural language reasoning, knowledge path generation, and KG-constrained decoding. Each example includes:

1. A question with a highlighted query entity

2. A generated answer

3. A reasoning chain with step-by-step logic

4. A knowledge path visualization

5. KG-constrained decoding steps

### Components

**Key Elements:**

- **Question Section**: Contains the original question with a highlighted query entity (e.g., "Josef Mengele", "Claude Debussy")

- **Answer Section**: Final answer with color-coded entity labels

- **Reasoning Chain**: Step-by-step logical deductions in `<THINK>` blocks

- **Knowledge Path**: Triples (subject-predicate-object) with color-coded relationships

- **KG-Constrained Decoding**: Arrows and labels showing entity connections and validation steps
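
To make the subject-predicate-object structure concrete, here is a minimal sketch of how a knowledge path could be represented and checked for connectivity. The `Triple` type and the `is_connected` helper are illustrative names, not part of the figure; the path itself is copied from Example 4.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """One knowledge-graph edge (hypothetical container, not from the figure)."""
    subject: str
    predicate: str
    obj: str


# Knowledge path from Example 4, as transcribed in this description.
path = [
    Triple("Tennessee Williams",
           "people.person.education-education.institution",
           "The New School"),
    Triple("The New School",
           "common.topic.notable_types",
           "College/University"),
]


def is_connected(path):
    """True when each triple starts at the entity where the previous one ended."""
    return all(a.obj == b.subject for a, b in zip(path, path[1:]))
```

A path is only meaningful as a chain if the object of one triple is the subject of the next, which is what `is_connected` checks.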

**Color Coding Legend:**

- Yellow: Profession/occupation relationships

- Green: Genre/style relationships

- Blue: Educational institution connections

- Pink: Geographical location ties

- Orange: Notable types/categories

- Red: Undecodable/irrelevant connections

### Detailed Analysis

**Example 1: Josef Mengele**

- **Question**: What did dr Josef Mengele do?

- **Answer**: Physician

- **Reasoning**:

1. Identified profession associated with "Josef Mengele"

2. Established "profession" as the answer entity

- **Knowledge Path**:

`(Josef Mengele, people.person.profession, Physician)`

- **Decoding**:

`1 → Physician`, with irrelevant paths marked "✗ undecodable"
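
The figure does not spell out how "undecodable" paths are rejected. One plausible mechanism, sketched here purely as an assumption, is a prefix trie built over valid KG paths: at each step the decoder may only emit a token that continues some stored path. The function names, the whitespace tokenization, and the `END` marker are all hypothetical.

```python
END = "<end>"  # hypothetical marker for "a complete KG path ends here"


def build_trie(paths):
    """Build a nested-dict trie over whitespace-tokenized KG path strings."""
    root = {}
    for path in paths:
        node = root
        for token in path.split():
            node = node.setdefault(token, {})
        node[END] = {}
    return root


def allowed_next(trie, prefix):
    """Tokens that may follow `prefix`; an empty list means the prefix is undecodable."""
    node = trie
    for token in prefix.split():
        node = node.get(token)
        if node is None:
            return []
    return sorted(t for t in node if t != END)


def decodable(trie, text):
    """True only if `text` is a complete path stored in the trie."""
    node = trie
    for token in text.split():
        if token not in node:
            return False
        node = node[token]
    return END in node


# The single path from Example 1, flattened to a token string for this sketch.
kg_paths = ["Josef Mengele people.person.profession Physician"]
trie = build_trie(kg_paths)
```

With this toy trie, `allowed_next(trie, "Josef Mengele people.person.profession")` offers only `Physician`, and any continuation outside the graph is rejected.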

**Example 2: Claude Debussy**

- **Question**: What type of music appears in "Black Tights"?

- **Answer**: Ballet

- **Reasoning**:

1. Identified music genre associated with Debussy

2. Confirmed connection to "Black Tights" film

- **Knowledge Path**:

`(Claude Debussy, music.artist.genre, Ballet)` → `(Ballet, film.film_genre, films_in_this_genre)`

- **Decoding**:

`1 → Ballet` with validation of genre-film connection

**Example 3: "Girl Tonight" Artist**

- **Question**: What high school did the artist who recorded "Girl Tonight" attend?

- **Answer**: Petersburg High School

- **Reasoning**:

1. Identified artist via song recording

2. Mapped to educational institutions

3. Confirmed high school type

- **Knowledge Path**:

`(Trey Songz, music.featured_artist, Girl Tonight)` → `(Petersburg High School, education.institution)`

- **Decoding**:

`1 → Girl Tonight` → `2 → Trey Songz` → `3 → Petersburg High School`
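
The three decoding steps amount to following a chain of relations from the query entity. The sketch below walks a toy graph in that order; the relation names `recorded_by` and `attended` are placeholders chosen for readability and are not the predicates shown in the figure.

```python
# Toy adjacency map: (entity, relation) -> list of neighbouring entities.
# Relation names here are hypothetical stand-ins for the figure's predicates.
toy_kg = {
    ("Girl Tonight", "recorded_by"): ["Trey Songz"],
    ("Trey Songz", "attended"): ["Petersburg High School"],
}


def follow(kg, start, relations):
    """Return every entity chain reachable from `start` via `relations`, in order."""
    chains = [[start]]
    for rel in relations:
        chains = [
            chain + [nxt]
            for chain in chains
            for nxt in kg.get((chain[-1], rel), [])
        ]
    return chains


chains = follow(toy_kg, "Girl Tonight", ["recorded_by", "attended"])
```

Here `chains` holds one chain that mirrors the decoding order `Girl Tonight → Trey Songz → Petersburg High School`; a relation sequence with no matching edges yields an empty list.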

**Example 4: Tennessee Williams**

- **Question**: Where did Tennessee Williams attend college in New York City?

- **Answer**: The New School

- **Reasoning**:

1. Identified educational institution

2. Verified college/university category

3. Confirmed NYC headquarters

- **Knowledge Path**:

`(Tennessee Williams, people.person.education-education.institution, The New School)` → `(The New School, common.topic.notable_types, College/University)`

- **Decoding**:

`1 → The New School` with validation of NYC location
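
The validation steps in this example can be read as filtering candidate entities against constraints. The following sketch assumes a flat set of facts; the `located_in` relation and the "Example College" entry are invented for illustration, and only the `common.topic.notable_types` triple for The New School comes from the figure description.

```python
facts = {
    ("The New School", "common.topic.notable_types", "College/University"),
    ("The New School", "located_in", "New York City"),  # hypothetical relation name
    # Made-up distractor so the filter has something to reject:
    ("Example College", "common.topic.notable_types", "College/University"),
    ("Example College", "located_in", "Elsewhere"),
}


def satisfies(entity, constraints, facts):
    """True when every (relation, value) constraint holds for `entity`."""
    return all((entity, rel, val) in facts for rel, val in constraints)


candidates = ["The New School", "Example College"]
constraints = [
    ("common.topic.notable_types", "College/University"),
    ("located_in", "New York City"),
]
answers = [c for c in candidates if satisfies(c, constraints, facts)]
```

Only candidates that pass both the type check and the location check survive, which matches the "verified category, confirmed NYC" sequence in the reasoning chain.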

### Key Observations

1. **Entity Disambiguation**: The multi-hop examples use intermediate entities (e.g., a placeholder "c" for the artist in Example 3) to resolve indirect references

2. **KG Validation**: Red "✗ undecodable" markers explicitly reject invalid connections

3. **Step Numbering**: Decoding steps are numbered sequentially (1 through 3 in Example 3) to show logical progression

4. **Color Consistency**: Relationship types maintain consistent color coding across examples

### Interpretation

This framework demonstrates a structured approach to QA that:

1. **Decomposes complex questions** into verifiable knowledge triples

2. **Validates connections** through KG-constrained decoding steps

3. **Maintains traceability** via color-coded relationships and step numbering

4. **Handles ambiguity** through intermediate entity resolution

The examples use Freebase-style relations (e.g., `people.person.profession`) and cover general-knowledge QA about people, music, and education, with explicit handling of:

- Professional roles (Example 1)

- Artistic genres (Example 2)

- Music industry connections (Example 3)

- Educational geography (Example 4)

The KG-constrained decoding acts as a "sanity check" mechanism, ensuring answers align with both linguistic reasoning and knowledge graph constraints. The color-coded triples provide a visual representation of the knowledge graph structure underlying each answer.