## Scatter Plot: H@n1 vs. Latency on WebQSP

### Overview

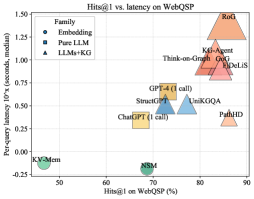

This image is a scatter plot comparing the performance of various AI models on the WebQSP benchmark. The chart plots two key metrics: accuracy (H@n1 percentage) on the x-axis and per-query latency (in seconds, on a logarithmic scale) on the y-axis. The data points are categorized into three methodological approaches, indicated by different marker shapes and colors. The plot reveals a general trade-off between higher accuracy and increased latency, with distinct clustering of model types.

### Components/Axes

* **Chart Title:** "H@n1 vs. latency on WebQSP"

* **X-Axis:**

    * **Label:** "H@n1 on WebQSP (%)"

    * **Scale:** Linear scale from 0 to 90, with major tick marks at 0, 10, 20, 30, 40, 50, 60, 70, 80, 90.

* **Y-Axis:**

    * **Label:** "Per-query latency (seconds, log scale)"

    * **Scale:** Logarithmic axis with labeled ticks at -0.25, 0.00, 0.25, 0.50, 0.75, 1.00, 1.25, 1.50, 1.75, 2.00, 2.25, 2.50. Since a latency in seconds cannot be negative, these labels are most likely log10 exponents rather than raw seconds (0.00 would correspond to 1 s, 2.00 to 100 s). The latency values quoted in this description are the tick readings as they appear on the axis.

* **Legend (Top-Left Corner):**

    * **Fine-tuning:** Represented by a green circle (●).

    * **Base LLM:** Represented by a blue square (■).

    * **LLMs+KG:** Represented by an orange triangle (▲).

* **Data Points (Models):** Each point is labeled with a model name. The approximate coordinates (H@n1%, Latency) are extracted below.
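The y-axis label says "seconds, log scale", yet the listed ticks include a negative value. One way to reconcile this is to treat the tick labels as log10 exponents. That reading is an assumption, not something the chart states, and the helper name `tick_to_seconds` is purely illustrative. Under that assumption, a tick reading converts back to seconds as follows:

```python
def tick_to_seconds(tick: float) -> float:
    """Convert a y-axis reading to seconds, ASSUMING ticks are log10(seconds)."""
    return 10.0 ** tick


# The twelve labeled ticks listed for the y-axis: -0.25 to 2.50 in steps of 0.25.
ticks = [-0.25 + 0.25 * i for i in range(12)]

for t in ticks:
    print(f"tick {t:5.2f} -> {tick_to_seconds(t):8.2f} s")
```

If the assumption is wrong and the labels are raw seconds, this conversion does not apply and the negative tick remains unexplained.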

### Detailed Analysis

**Data Point Extraction (Approximate Values):**

* **Fine-tuning (Green Circles):**

    * **KGSilicon:** Positioned at the far left, near (0%, ~0.00s). This is an outlier: among the lowest latencies on the plot, but near-zero accuracy.

    * **GPT-4 (1 call):** Positioned at (~42%, ~0.00s). Shows moderate accuracy with very low latency. *Note: The same label also appears among the Base LLM points.*

* **Base LLM (Blue Squares):**

    * **ChatGPT (1 call):** Positioned at (~38%, ~0.25s).

    * **GPT-4 (1 call):** Positioned at (~55%, ~0.50s). *Note: This appears to be a separate data point from the Fine-tuning GPT-4, possibly representing a different configuration.*

    * **StreamGPT:** Positioned at (~58%, ~0.50s).

    * **GPT-4 (5 calls):** Positioned at (~62%, ~0.75s). Compared with GPT-4 (1 call) in this category, the extra calls raise accuracy (~55% to ~62%) but also latency (~0.50s to ~0.75s).

    * **Think-on-Graph:** Positioned at (~72%, ~1.25s).

    * **KG-Agent:** Positioned at (~78%, ~1.50s).

* **LLMs+KG (Orange Triangles):**

    * **CoT-LLM:** Positioned at (~75%, ~1.00s).

    * **ToG:** Positioned at (~78%, ~1.25s). *Note: "ToG" is the usual abbreviation of Think-on-Graph, so this point and the Base LLM "Think-on-Graph" point may be two configurations of the same method.*

    * **PaL-HD:** Positioned at (~82%, ~0.25s). This is a notable outlier, achieving high accuracy with relatively low latency.

    * **KGSilicon:** Positioned at the top-right, near (~88%, ~2.25s). This is the highest-accuracy model and also the highest-latency one. *Note: The name "KGSilicon" appears twice, once as a Fine-tuning model with low performance and once as an LLMs+KG model with high performance. This likely represents two different systems or configurations with the same name.*

### Key Observations

1. **Performance Clusters:** The models form three loose clusters:

    * **Low Accuracy, Low Latency:** The Fine-tuning models (KGSilicon, GPT-4 1 call) and one Base LLM (ChatGPT 1 call) are in the bottom-left quadrant.

    * **Mid-Range:** A cluster of Base LLMs (GPT-4 1 call, StreamGPT, GPT-4 5 calls, Think-on-Graph) and one LLMs+KG model (CoT-LLM) occupy the center of the plot.

    * **High Accuracy, High Latency:** The top-right quadrant contains two LLMs+KG models (ToG, KGSilicon) and the highest-scoring Base LLM (KG-Agent).

2. **Significant Outliers:**

    * **PaL-HD (LLMs+KG):** Breaks the general trend by achieving high accuracy (~82%) with low latency (~0.25s), suggesting a highly efficient architecture.

    * **KGSilicon (Fine-tuning):** Combines near-zero accuracy with one of the lowest latencies on the plot, indicating a failed or baseline configuration.

3. **Latency-Accuracy Trade-off:** The overall trend slopes upward from left to right: higher accuracy on the WebQSP benchmark generally comes with higher per-query latency. Because the y-axis is logarithmic, equal vertical steps correspond to multiplicative increases in latency, so the cost grows quickly toward the top of the plot.

4. **Impact of Methodology:** The "LLMs+KG" (Large Language Models + Knowledge Graphs) approach generally populates the higher-accuracy region of the plot compared to "Base LLM" and "Fine-tuning" approaches, though with a wide latency spread.
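The trade-off and outlier observations above can be checked with a small Pareto-front computation over the extracted points. This is a minimal sketch: the coordinates are the approximate readings from the Detailed Analysis, and the names `Point`, `dominates`, and `pareto_front` are illustrative rather than part of any source material. A point is dominated when another point is at least as accurate and at least as fast, and strictly better on one of the two.

```python
from typing import NamedTuple


class Point(NamedTuple):
    name: str
    approach: str
    accuracy: float  # H@n1 in percent, approximate x-axis reading
    latency: float   # approximate y-axis reading, as quoted in the analysis


# Approximate values read off the plot; not exact measurements.
POINTS = [
    Point("KGSilicon", "Fine-tuning", 0, 0.00),
    Point("GPT-4 (1 call)", "Fine-tuning", 42, 0.00),
    Point("ChatGPT (1 call)", "Base LLM", 38, 0.25),
    Point("GPT-4 (1 call)", "Base LLM", 55, 0.50),
    Point("StreamGPT", "Base LLM", 58, 0.50),
    Point("GPT-4 (5 calls)", "Base LLM", 62, 0.75),
    Point("Think-on-Graph", "Base LLM", 72, 1.25),
    Point("KG-Agent", "Base LLM", 78, 1.50),
    Point("CoT-LLM", "LLMs+KG", 75, 1.00),
    Point("ToG", "LLMs+KG", 78, 1.25),
    Point("PaL-HD", "LLMs+KG", 82, 0.25),
    Point("KGSilicon", "LLMs+KG", 88, 2.25),
]


def dominates(a: Point, b: Point) -> bool:
    """True if a is no worse than b on both metrics and strictly better on one."""
    no_worse = a.accuracy >= b.accuracy and a.latency <= b.latency
    strictly_better = a.accuracy > b.accuracy or a.latency < b.latency
    return no_worse and strictly_better


def pareto_front(points: list[Point]) -> list[Point]:
    """Return the non-dominated points, sorted by increasing accuracy."""
    front = [p for p in points if not any(dominates(q, p) for q in points)]
    return sorted(front, key=lambda p: p.accuracy)


for p in pareto_front(POINTS):
    print(f"{p.name} ({p.approach}): {p.accuracy}% at {p.latency}")
```

With these approximate readings, only three points are non-dominated: GPT-4 (1 call, Fine-tuning), PaL-HD, and KGSilicon (LLMs+KG). The result depends only on the ordering of latencies, so it is the same whether the y-axis readings are raw seconds or log-scale values.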

### Interpretation

This scatter plot provides a technical comparison of AI question-answering systems, evaluating their effectiveness (accuracy) against their computational cost (latency). The data suggests a fundamental engineering trade-off: more sophisticated systems that integrate external knowledge graphs (LLMs+KG) or use more inference calls (GPT-4 5 calls) achieve better results but require more time per query.

The presence of **PaL-HD** is particularly significant. Its position indicates a potential breakthrough in efficiency, achieving top-tier accuracy without the severe latency penalty seen in other high-performing models like KGSilicon or KG-Agent. This could be due to a novel retrieval mechanism, a more optimized model architecture, or a different approach to knowledge integration.

The duplicate **KGSilicon** label highlights the importance of methodology. If both points refer to the same underlying system, the "Fine-tuning" variant yields near-zero accuracy while the "LLMs+KG" variant has the highest accuracy on the plot. This suggests that a system's design and integration strategy matter more than the model name alone.

In practical terms, this chart shows that selecting a model means balancing accuracy against response time. Applications requiring real-time answers might favor PaL-HD or, at lower accuracy, one of the GPT-4 (1 call) configurations. Applications where accuracy is paramount and latency is less critical could justify KGSilicon (LLMs+KG), the only model on the plot that exceeds PaL-HD's accuracy.
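The selection logic described here can be sketched as a lookup: given a latency budget, pick the most accurate model that fits. The tuples below reuse the approximate readings from the plot, and `best_under_budget` is a hypothetical helper name. The budget is expressed in the same units as the y-axis readings.

```python
from typing import Optional

# (name, approach, H@n1 %, latency reading): approximate values from the plot.
MODELS = [
    ("KGSilicon", "Fine-tuning", 0, 0.00),
    ("GPT-4 (1 call)", "Fine-tuning", 42, 0.00),
    ("ChatGPT (1 call)", "Base LLM", 38, 0.25),
    ("GPT-4 (1 call)", "Base LLM", 55, 0.50),
    ("StreamGPT", "Base LLM", 58, 0.50),
    ("GPT-4 (5 calls)", "Base LLM", 62, 0.75),
    ("Think-on-Graph", "Base LLM", 72, 1.25),
    ("KG-Agent", "Base LLM", 78, 1.50),
    ("CoT-LLM", "LLMs+KG", 75, 1.00),
    ("ToG", "LLMs+KG", 78, 1.25),
    ("PaL-HD", "LLMs+KG", 82, 0.25),
    ("KGSilicon", "LLMs+KG", 88, 2.25),
]


def best_under_budget(models: list[tuple], max_latency: float) -> Optional[tuple]:
    """Return the most accurate model whose latency reading fits the budget."""
    eligible = [m for m in models if m[3] <= max_latency]
    if not eligible:
        return None  # no model is fast enough
    return max(eligible, key=lambda m: m[2])


for budget in (0.0, 0.25, 2.25):
    print(budget, best_under_budget(MODELS, budget))
```

A tight budget of 0.25 already selects PaL-HD, and only a budget that admits the slowest point (2.25) changes the answer to KGSilicon (LLMs+KG), which matches the recommendation above.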

**Language Note:** All text in the chart is in English. The model name "KGSilicon" appears to be a proper noun/brand name.