## Line Chart: Loss Curves for Single-LLM vs. α-UMI Models

### Overview

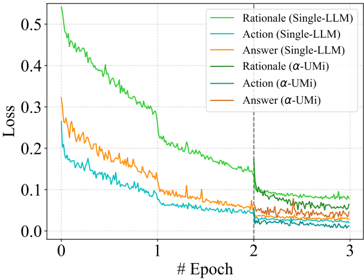

The image is a line chart comparing training loss over epochs for three tasks (Rationale, Action, Answer) under two modeling approaches: a baseline "Single-LLM" model and the proposed "α-UMI" model. The chart indicates that the α-UMI models reach lower loss values and converge faster than their Single-LLM counterparts.

### Components/Axes

* **X-Axis:** Labeled "# Epoch". Major tick marks and labels are present at integer values: 0, 1, 2, 3.

* **Y-Axis:** Labeled "Loss". Major tick marks are at 0.0, 0.1, 0.2, 0.3, 0.4, and 0.5. The plotted area extends slightly above the 0.5 tick, because the Rationale curves start near 0.55.

* **Legend:** Located in the top-right quadrant of the chart area. It contains six entries, each with a colored line sample and a text label:

  * Light Green Line: `Rationale (Single-LLM)`

  * Light Blue Line: `Action (Single-LLM)`

  * Orange Line: `Answer (Single-LLM)`

  * Dark Green Line: `Rationale (α-UMI)`

  * Dark Blue Line: `Action (α-UMI)`

  * Brown Line: `Answer (α-UMI)`

* **Additional Element:** A vertical dashed gray line is positioned at `x = 2` (Epoch 2), likely indicating a change in training phase, learning rate schedule, or evaluation point.

### Detailed Analysis

The chart plots six data series, each showing a generally decreasing trend in loss as the number of epochs increases.

1. **Rationale (Single-LLM) - Light Green:**

   * **Trend:** Starts at the highest loss value (~0.55 at Epoch 0, tied with the α-UMI Rationale curve) and shows a steep, noisy decline. The rate of decrease slows after Epoch 1.

   * **Key Points:** Approx. 0.55 (Epoch 0), 0.30 (Epoch 1), 0.15 (Epoch 2), 0.08 (Epoch 3).

2. **Action (Single-LLM) - Light Blue:**

   * **Trend:** Starts at a moderate loss (~0.25 at Epoch 0) and decreases steadily with less noise than the Rationale curve.

   * **Key Points:** Approx. 0.25 (Epoch 0), 0.08 (Epoch 1), 0.04 (Epoch 2), 0.03 (Epoch 3).

3. **Answer (Single-LLM) - Orange:**

   * **Trend:** Starts between the other two Single-LLM curves (~0.32 at Epoch 0) and follows a decreasing pattern similar to the Action curve.

   * **Key Points:** Approx. 0.32 (Epoch 0), 0.12 (Epoch 1), 0.06 (Epoch 2), 0.05 (Epoch 3).

4. **Rationale (α-UMI) - Dark Green:**

   * **Trend:** Starts at the same level as its Single-LLM counterpart and is lower from Epoch 1 onward. It shows a pronounced drop leading up to the dashed line, from ~0.28 at Epoch 1 to ~0.08 at Epoch 2, and then flattens.

   * **Key Points:** Approx. 0.55 (Epoch 0), 0.28 (Epoch 1), 0.08 (Epoch 2), 0.07 (Epoch 3).

5. **Action (α-UMI) - Dark Blue:**

   * **Trend:** The lowest loss curve throughout. It starts lower than the Single-LLM Action model and converges to near-zero loss.

   * **Key Points:** Approx. 0.20 (Epoch 0), 0.05 (Epoch 1), 0.02 (Epoch 2), 0.01 (Epoch 3).

6. **Answer (α-UMI) - Brown:**

   * **Trend:** Starts slightly lower than the Single-LLM Answer model and stays below it, with a clear drop from ~0.10 at Epoch 1 to ~0.04 at Epoch 2.

   * **Key Points:** Approx. 0.30 (Epoch 0), 0.10 (Epoch 1), 0.04 (Epoch 2), 0.03 (Epoch 3).
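The per-epoch comparisons in the observations below can be checked directly against these readings. The following minimal Python sketch tabulates the approximate key points (eyeballed from the chart, not logged losses) and computes the Single-LLM minus α-UMI gap for each task and epoch. The names `KEY_POINTS`, `gaps`, and `largest_gap` are illustrative only and are not part of the α-UMI work.

```python
# Approximate readings (Epochs 0-3) from the key points above; eyeballed
# from the chart, not taken from training logs.
KEY_POINTS = {
    "Rationale": {"Single-LLM": [0.55, 0.30, 0.15, 0.08], "α-UMI": [0.55, 0.28, 0.08, 0.07]},
    "Action": {"Single-LLM": [0.25, 0.08, 0.04, 0.03], "α-UMI": [0.20, 0.05, 0.02, 0.01]},
    "Answer": {"Single-LLM": [0.32, 0.12, 0.06, 0.05], "α-UMI": [0.30, 0.10, 0.04, 0.03]},
}


def gaps(points):
    """Per task, Single-LLM loss minus α-UMI loss at each epoch.

    Positive values mean the α-UMI curve is lower. Values are rounded to two
    decimals to match the precision of the readings.
    """
    return {
        task: [round(s - u, 2) for s, u in zip(series["Single-LLM"], series["α-UMI"])]
        for task, series in points.items()
    }


def largest_gap(table):
    """Return (task, epoch, gap) for the largest entry of a gaps() table."""
    return max(
        ((task, epoch, gap) for task, row in table.items() for epoch, gap in enumerate(row)),
        key=lambda item: item[2],
    )


if __name__ == "__main__":
    table = gaps(KEY_POINTS)
    for task, row in table.items():
        print(f"{task:<10} {row}")
    print("largest gap (task, epoch, gap):", largest_gap(table))
```

With these readings every gap is non-negative (no α-UMI curve sits above its Single-LLM counterpart), and `largest_gap` returns Rationale at Epoch 2 with a gap of about 0.07.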

### Key Observations

1. **Consistent Superiority of α-UMI:** For all three tasks (Rationale, Action, Answer), the α-UMI variant (darker lines) has a lower loss than the corresponding Single-LLM variant (lighter lines) at every listed epoch after Epoch 0. At Epoch 0 the two Rationale curves coincide (~0.55), while α-UMI already starts lower for Action and Answer.

2. **Task Difficulty Hierarchy:** The "Rationale" task (green lines) has the highest loss for both model types, suggesting it is the most complex task. The "Action" task (blue lines) has the lowest loss, suggesting it is the simplest to learn.

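3. **Impact at Epoch 2:** The vertical dashed line at Epoch 2 coincides with a noticeable, sharp decrease in loss for the α-UMI models, while the Single-LLM models change more gradually. In the approximate key points, the contrast is clearest for Rationale (from ~0.28 at Epoch 1 to ~0.08 at Epoch 2 for α-UMI, versus ~0.30 to ~0.15 for Single-LLM); for Answer, both variants fall by about 0.06 over the same interval. This may reflect a scheduled event (e.g., learning rate decay) that the α-UMI architecture exploits more effectively, at least on the Rationale task.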

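4. **Convergence:** By Epoch 3, all six curves have flattened below ~0.1, and each α-UMI curve ends slightly below its Single-LLM counterpart (approx. 0.07 vs. 0.08 for Rationale, 0.01 vs. 0.03 for Action, 0.03 vs. 0.05 for Answer). These final gaps (0.01 to 0.02) are smaller than the Rationale gap at Epoch 2 (~0.07), so the α-UMI advantage shows mostly as faster convergence, with a narrower margin in final loss.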
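The Epoch 2 and convergence observations can be checked against the approximate key points listed in the Detailed Analysis. This minimal Python sketch computes each curve's loss drop from Epoch 1 to Epoch 2, its Epoch 3 value, and the spread of the Epoch 3 values within each model family. The readings are eyeballed from the chart rather than taken from training logs, and `drop`, `summarize`, and `final_spread` are hypothetical helper names.

```python
# Approximate readings (Epochs 0-3) from the Detailed Analysis; eyeballed
# from the chart, not taken from training logs.
KEY_POINTS = {
    "Rationale": {"Single-LLM": [0.55, 0.30, 0.15, 0.08], "α-UMI": [0.55, 0.28, 0.08, 0.07]},
    "Action": {"Single-LLM": [0.25, 0.08, 0.04, 0.03], "α-UMI": [0.20, 0.05, 0.02, 0.01]},
    "Answer": {"Single-LLM": [0.32, 0.12, 0.06, 0.05], "α-UMI": [0.30, 0.10, 0.04, 0.03]},
}


def drop(series, start, end):
    """Loss decrease from epoch `start` to epoch `end` (positive = loss fell)."""
    return round(series[start] - series[end], 2)


def summarize(points, start=1, end=2, final=3):
    """Map (task, model) to (drop over [start, end], loss at epoch `final`)."""
    return {
        (task, model): (drop(series, start, end), series[final])
        for task, models in points.items()
        for model, series in models.items()
    }


def final_spread(points, model, final=3):
    """Max minus min loss at epoch `final` across tasks for one model family."""
    finals = [models[model][final] for models in points.values()]
    return round(max(finals) - min(finals), 2)


if __name__ == "__main__":
    for (task, model), (d, last) in summarize(KEY_POINTS).items():
        print(f"{task:<10} {model:<11} drop 1->2 = {d:.2f}  loss at 3 = {last:.2f}")
    for model in ("Single-LLM", "α-UMI"):
        print(f"Epoch 3 spread ({model}): {final_spread(KEY_POINTS, model):.2f}")
```

With these readings, the Epoch 1 to Epoch 2 drop is larger for α-UMI only on Rationale (0.20 vs. 0.15); it is equal on Answer (0.06) and slightly smaller on Action (0.03 vs. 0.04). The Epoch 3 spread is 0.06 for α-UMI versus 0.05 for Single-LLM, so the α-UMI curves are not more tightly clustered at the end.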

### Interpretation

This chart provides empirical evidence for the effectiveness of the proposed α-UMI model architecture over a standard Single-LLM baseline. The data suggests that α-UMI leads to more efficient and effective learning across different types of tasks (rationale generation, action prediction, answer formulation).

The consistent gap between the paired curves (e.g., Dark Green vs. Light Green) points to a systematic improvement rather than a random fluctuation, although a single chart does not show run-to-run variance. The sharp response of the α-UMI Rationale curve around Epoch 2 may indicate that α-UMI makes better use of training signals or schedule changes to escape plateaus and reduce loss further. The "Rationale" task also shows the largest absolute gap (about 0.07 at Epoch 2), which suggests α-UMI may be particularly beneficial for the more complex, reasoning-heavy components of a model's objective. Overall, the chart argues that α-UMI models learn faster and reach a somewhat lower final training loss on the measured tasks.