## Diagram: Recurrent Neural Network with Attention Mechanism

### Overview

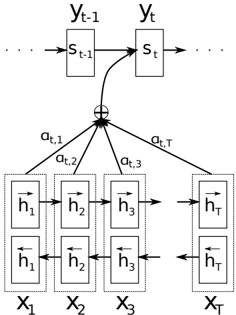

The image depicts a recurrent neural network (RNN) architecture incorporating an attention mechanism. It illustrates the flow of information between input sequences, hidden states, and output predictions.

### Components

* **Top Layer:** Represents the recurrent neural network's outputs and hidden states at time steps t-1 and t.
  * `y_{t-1}`: Output at time t-1, labeled above the left-hand box.
  * `y_t`: Output at time t, labeled above the right-hand box.
  * `s_{t-1}`: State at time t-1, inside the left-hand box.
  * `s_t`: State at time t, inside the right-hand box.
  * Arrows indicate the flow of information from `s_{t-1}` to `s_t`.

* **Middle Layer:** Represents the attention mechanism.
  * A summation symbol (⊕) indicates the weighted sum of the annotations.
  * `a_{t,1}`, `a_{t,2}`, `a_{t,3}`, …, `a_{t,T}`: Attention weights connecting the annotations to the summation.

* **Bottom Layer:** Represents the input sequence.
  * `X_1`, `X_2`, `X_3`, …, `X_T`: Input sequence elements.
  * Each `X_i` is paired with two vectors, both labeled `h_i`: one drawn with a right-pointing arrow and one with a left-pointing arrow.

### Detailed Analysis

* **RNN Structure:** The top layer shows the recurrent nature of the network, where the hidden state at time `t` (`s_t`) depends on the hidden state at the previous time step (`s_{t-1}`).

* **Attention Mechanism:** The attention mechanism computes weights (`a_{t,i}`) that determine the importance of each input element (`X_i`) when predicting the output at time `t`. These weights are used to compute a weighted sum of the annotations (`h_i` vectors), which is then fed into the RNN.

* **Input Sequence:** The bottom layer represents the input sequence, where each element `X_i` is associated with a pair of vectors `h_i`. These vectors likely represent the forward and backward hidden states of a bidirectional RNN applied to the input sequence.
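
The weighted sum described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation behind the diagram: the function names, the toy vectors, and the raw alignment scores are all invented here, and the sketch assumes each annotation is the concatenation of the forward and backward `h_i` vectors and that the weights `a_{t,i}` come from a softmax over the scores.

```python
import math

def softmax(scores):
    """Turn raw alignment scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def annotate(forward_states, backward_states):
    """Concatenate forward and backward states into one annotation per position."""
    return [f + b for f, b in zip(forward_states, backward_states)]

def context_vector(weights, annotations):
    """Weighted sum of the annotations: the quantity at the summation node."""
    dim = len(annotations[0])
    return [sum(w * h[k] for w, h in zip(weights, annotations)) for k in range(dim)]

# Toy values, invented for illustration (T = 3 input positions).
forward = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # right-arrow h_i vectors
backward = [[0.5], [0.5], [0.0]]                 # left-arrow h_i vectors
scores = [2.0, 0.0, 0.0]  # hypothetical alignment scores for one output step t

annotations = annotate(forward, backward)
weights = softmax(scores)                        # plays the role of a_{t,1..T}
context = context_vector(weights, annotations)   # the input passed up to s_t
```

Because the first score is the largest, the first annotation receives the largest weight and dominates the resulting context vector.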

### Key Observations

* The diagram highlights the key components of an RNN with an attention mechanism: the recurrent hidden states, the attention weights, and the input sequence.

* The attention mechanism allows the network to focus on the most relevant parts of the input sequence when making predictions.

### Interpretation

The diagram illustrates a common architecture used in sequence-to-sequence tasks, such as machine translation and text summarization. The attention mechanism is especially useful for long sequences, because it lets the network selectively attend to different parts of the input at each output step instead of relying on a single summary of the whole sequence. The diagram shows how the attention weights combine the input annotations into a weighted sum, which is then fed into the RNN to produce the output.
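
For reference, the quantities labeled in the diagram can be related by the standard additive-attention equations. This is a sketch under the assumption that the diagram follows that formulation. The symbols `e_{t,i}` (alignment score) and `c_t` (context vector), the scoring function `score`, and the state-update function `f` do not appear in the image and are introduced here only for illustration; `h_i` denotes the concatenation of the forward and backward vectors at position `i`.

$$
\begin{aligned}
e_{t,i} &= \mathrm{score}(s_{t-1}, h_i) \\
a_{t,i} &= \frac{\exp(e_{t,i})}{\sum_{k=1}^{T} \exp(e_{t,k})} \\
c_t &= \sum_{i=1}^{T} a_{t,i}\, h_i \\
s_t &= f(s_{t-1},\, y_{t-1},\, c_t)
\end{aligned}
$$

The second line guarantees that the weights for each output step are positive and sum to 1, so `c_t` is a convex combination of the annotations.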