## Diagram: BFS Reasoning Flow

### Overview

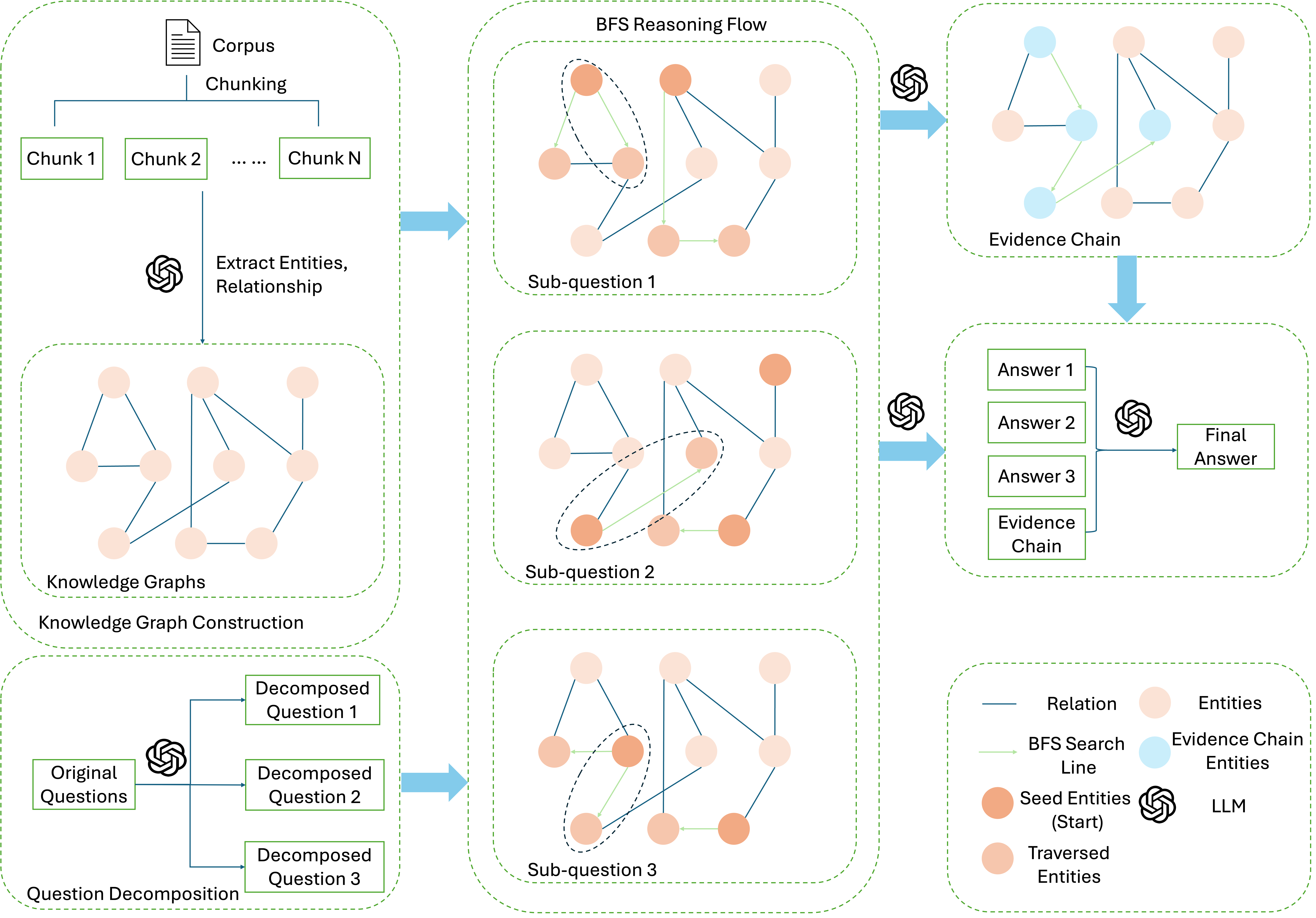

The image illustrates a Breadth-First Search (BFS) reasoning flow, most likely from a question-answering system built around a Large Language Model (LLM). The corpus is first split into chunks, and entities and relationships are extracted from them to construct knowledge graphs. The original question is decomposed into sub-questions, and each sub-question is processed with BFS over the knowledge graphs to find an evidence chain. Finally, the LLM combines the sub-question answers and the evidence chain to generate the final answer.

### Components/Axes

* **Corpus:** Represented by a document icon, indicating the initial source of information.

* **Chunking:** The process of dividing the corpus into smaller segments (Chunk 1, Chunk 2, ..., Chunk N).

* **Extract Entities, Relationship:** The step where entities and their relationships are identified within the chunks.

* **Knowledge Graphs:** Graphs constructed from the extracted entities and relationships.

* **Knowledge Graph Construction:** The process of building the knowledge graphs.

* **Original Questions:** The initial question posed to the system.

* **Question Decomposition:** The process of breaking down the original question into smaller, more manageable sub-questions (Decomposed Question 1, Decomposed Question 2, Decomposed Question 3).

* **BFS Reasoning Flow:** The core reasoning process using Breadth-First Search.

* **Sub-question 1, Sub-question 2, Sub-question 3:** The individual sub-questions derived from the original question.

* **Evidence Chain:** A chain of evidence found through BFS, connecting entities and supporting the answer.

* **Answer 1, Answer 2, Answer 3:** The answers to the sub-questions.

* **Final Answer:** The final answer generated by the LLM, based on the sub-question answers and the evidence chain.

* **LLM:** Large Language Model.

* **Legend (bottom-right):**

  * Blue Line: Relation

  * Light Green Line: BFS Search Line

  * Orange Circle: Seed Entities (Start)

  * Light Orange Circle: Traversed Entities

  * Light Blue Circle: Evidence Chain Entities

  * LLM symbol: Large Language Model

### Detailed Analysis

1. **Corpus Chunking:** The corpus is divided into chunks labeled "Chunk 1", "Chunk 2", and "Chunk N".

2. **Entity Extraction:** Entities and relationships are extracted from these chunks.

3. **Knowledge Graph Construction:** The extracted entities and relationships are used to build knowledge graphs. These graphs consist of light orange nodes (Traversed Entities) connected by blue lines (Relations).

4. **Question Decomposition:** The original question is decomposed into three sub-questions: "Decomposed Question 1", "Decomposed Question 2", and "Decomposed Question 3".

5. **BFS Reasoning Flow:**

   * Each sub-question is processed with BFS. Each sub-question's graph contains light orange nodes (Traversed Entities) and at least one orange node (Seed Entity, where the search starts), connected by blue lines (Relations).

   * Light green lines (BFS Search Line) highlight the paths traversed during the search.

   * A dashed black line encircles a subset of nodes in each sub-question graph, possibly indicating a focus area or the scope of the search.

6. **Evidence Chain Generation:** The BFS reasoning flow leads to the generation of an evidence chain, which consists of light orange nodes (Traversed Entities) and light blue nodes (Evidence Chain Entities) connected by blue lines (Relations) and light green lines (BFS Search Line).

7. **Final Answer Generation:** The answers to the sub-questions ("Answer 1", "Answer 2", "Answer 3") and the evidence chain are fed into the LLM to generate the final answer.
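Step 1 (corpus chunking) does not say how the corpus is split. The following is a minimal sketch assuming fixed-size, word-based chunks with a small overlap; the function name `chunk_corpus` and the `chunk_size` and `overlap` parameters are hypothetical, not taken from the diagram.

```python
def chunk_corpus(text: str, chunk_size: int = 200, overlap: int = 20) -> list[str]:
    """Split text into word-based chunks of at most `chunk_size` words.

    Consecutive chunks share `overlap` words so that an entity mentioned
    near a boundary appears in both chunks. This strategy is an assumption;
    the diagram only shows "Chunk 1", "Chunk 2", ..., "Chunk N".
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

The list index plus one corresponds to the diagram's chunk labels, so `chunks[0]` plays the role of "Chunk 1" and `chunks[-1]` the role of "Chunk N".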
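Steps 2 and 3 turn extracted entities and relationships into knowledge graphs. Assuming extraction (typically an LLM call, not shown here) yields `(head, relation, tail)` triples, a sketch of graph construction is an adjacency map that keeps each relation label on its edge. The function name `build_graph` is hypothetical, and storing every edge in both directions, so that a later search can follow a relation from either end, is an assumption.

```python
from collections import defaultdict

# (head entity, relation, tail entity); a hypothetical output format for extraction
Triple = tuple[str, str, str]


def build_graph(triples: list[Triple]) -> dict[str, list[tuple[str, str]]]:
    """Build an adjacency map: entity -> list of (relation, neighbour) pairs.

    Each triple is stored in both directions (an assumption) so that a
    traversal can reach the head from the tail as well as the tail from the head.
    """
    graph: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
        graph[tail].append((relation, head))
    return dict(graph)
```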
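Step 4 decomposes the original question into three sub-questions, but the diagram does not show the mechanism. The following sketch assumes an LLM is prompted to return a numbered list. `decompose_question`, the prompt wording, and the numbered output format are all hypothetical. `llm` is any function from a prompt string to a completion string, so no specific model API is implied.

```python
import re
from typing import Callable


def decompose_question(question: str, llm: Callable[[str], str], max_parts: int = 3) -> list[str]:
    """Ask an LLM to split a question into at most `max_parts` sub-questions.

    The prompt text and the "1. ..." output format are assumptions; any
    line of the reply that does not start with a number is ignored.
    """
    prompt = (
        f"Break the following question into at most {max_parts} simpler "
        "sub-questions, one per line, numbered 1., 2., ...\n"
        f"Question: {question}"
    )
    reply = llm(prompt)
    subs = []
    for line in reply.splitlines():
        match = re.match(r"\s*\d+[.)]\s*(.+)", line)  # e.g. "1. ..." or "2) ..."
        if match:
            subs.append(match.group(1).strip())
    return subs[:max_parts]
```

Passing the model in as a plain callable keeps the sketch testable with a stub and avoids tying it to any particular LLM client.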
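Step 5 describes BFS starting from orange seed entities and expanding to light orange traversed entities. The following is a minimal sketch of a multi-source BFS over an adjacency map of the form entity -> list of (relation, neighbour) pairs. It records, for each reached entity, the entity and relation it was reached from. The name `bfs_from_seeds` is hypothetical. The `max_depth` bound is an assumption, one possible reading of the dashed search boundary.

```python
from collections import deque
from typing import Optional


def bfs_from_seeds(
    graph: dict[str, list[tuple[str, str]]],
    seeds: list[str],
    max_depth: int = 2,
) -> tuple[list[str], dict[str, Optional[tuple[str, str]]]]:
    """Breadth-first traversal starting from all seed entities at once.

    Returns (order, parent): `order` lists entities in the order they were
    reached (seeds first, then traversed entities), and `parent` maps each
    reached entity to (previous entity, relation), or None for a seed.
    Seeds that are absent from the graph are skipped.
    """
    parent: dict[str, Optional[tuple[str, str]]] = {s: None for s in seeds if s in graph}
    depth = {s: 0 for s in parent}
    order = list(parent)
    queue = deque(parent)
    while queue:
        node = queue.popleft()
        if depth[node] >= max_depth:
            continue  # do not expand beyond the depth limit
        for relation, neighbour in graph.get(node, []):
            if neighbour not in parent:  # first visit is the shortest hop count
                parent[neighbour] = (node, relation)
                depth[neighbour] = depth[node] + 1
                order.append(neighbour)
                queue.append(neighbour)
    return order, parent
```

Because BFS expands level by level, the first time an entity is reached is along a path with the fewest hops from some seed. This is why the parent pointers are enough to recover a shortest evidence path.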
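Step 6 turns the search into an evidence chain (the light blue entities). Assuming the search recorded a parent map of the form entity -> (previous entity, relation), or None for a seed, the chain to a chosen target entity can be rebuilt by walking those pointers backwards. The name `evidence_chain`, and the idea that the target is the entity answering the sub-question, are assumptions.

```python
from typing import Optional


def evidence_chain(
    parent: dict[str, Optional[tuple[str, str]]],
    target: str,
) -> list[tuple[str, str, str]]:
    """Reconstruct the seed -> ... -> target path as (entity, relation, entity) triples.

    Triples are oriented in traversal order, which may be the reverse of
    the original extracted triple if edges were stored in both directions.
    Raises KeyError if `target` was never reached by the search.
    """
    if target not in parent:
        raise KeyError(f"{target!r} was not reached by the search")
    chain = []
    node = target
    while parent[node] is not None:  # stop when we reach a seed entity
        prev, relation = parent[node]
        chain.append((prev, relation, node))
        node = prev
    chain.reverse()  # collected target-first; present seed-first
    return chain
```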
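Step 7 feeds the sub-question answers and the evidence chain to the LLM. The following sketch shows one way to assemble that input into a single prompt. `final_answer`, the prompt layout, and the textual rendering of the chain are hypothetical, and `llm` is again any prompt-to-text function.

```python
from typing import Callable


def final_answer(
    question: str,
    sub_questions: list[str],
    answers: list[str],
    chain: list[tuple[str, str, str]],
    llm: Callable[[str], str],
) -> str:
    """Combine sub-question answers and the evidence chain into one LLM prompt."""
    if len(sub_questions) != len(answers):
        raise ValueError("need exactly one answer per sub-question")
    lines = [f"Original question: {question}", "", "Sub-questions and answers:"]
    for i, (sub, ans) in enumerate(zip(sub_questions, answers), start=1):
        lines.append(f"{i}. {sub} -> {ans}")
    lines += ["", "Evidence chain:"]
    for head, relation, tail in chain:
        lines.append(f"({head}) -[{relation}]-> ({tail})")  # one graph edge per line
    lines += ["", "Answer the original question using only the information above."]
    return llm("\n".join(lines))
```

Listing the chain edge by edge makes the graph facts explicit in the prompt, so the model can connect the sub-answers through stated relations rather than having to infer the links on its own.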

### Key Observations

* The diagram illustrates a multi-step process involving corpus processing, knowledge graph construction, question decomposition, BFS reasoning, and final answer generation.

* The use of BFS suggests a systematic exploration of the knowledge graph to find relevant evidence.

* The LLM plays a crucial role in both decomposing the original question and generating the final answer.

* The evidence chain is passed to the LLM together with the sub-question answers, linking those answers through explicit graph relations when the final answer is generated.

### Interpretation

The diagram presents a BFS-based question-answering system that grounds its reasoning in knowledge graphs built from the corpus. Decomposing the original question into sub-questions lets each BFS focus on the entities relevant to a single sub-question, which likely keeps each search smaller than one search for the whole question. The LLM appears at both ends of the pipeline, generating the final answer and, according to the observations above, decomposing the question, while BFS over the knowledge graphs supplies the intermediate evidence. The design thus combines knowledge representation (the graphs), structured search (BFS), and language understanding (the LLM). It resembles how a person might tackle a complex question: break it into simpler parts, answer each from the available facts, and then synthesize the results into a final conclusion.