TECHNICAL ASSET FINGERPRINT

4c4142ba7d3333e0f6362d99

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Llama-3.2-1B and Llama-3.2-3B Performance

### Overview

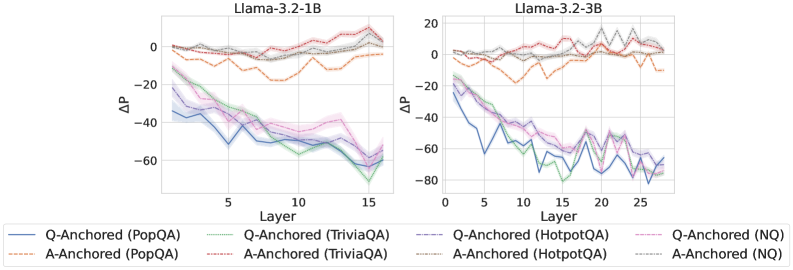

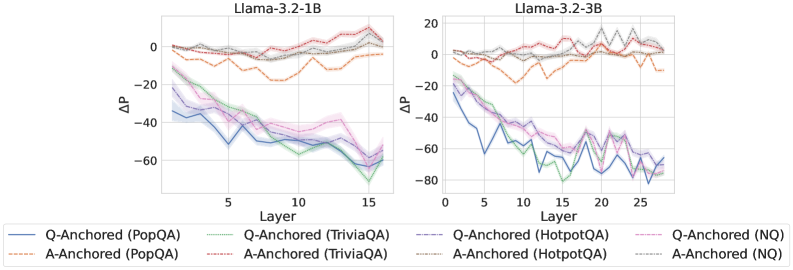

The image presents two line charts comparing Llama-3.2-1B and Llama-3.2-3B across layers. The y-axis shows ΔP (Delta P) and the x-axis shows the layer number. The legend lists eight data series: Q-Anchored and A-Anchored variants for each of the PopQA, TriviaQA, HotpotQA, and NQ datasets.

### Components/Axes

* **Titles:**

* Left Chart: Llama-3.2-1B

* Right Chart: Llama-3.2-3B

* **Y-Axis:**

* Label: ΔP

* Scale: -80 to 20, with increments of 20 (-80, -60, -40, -20, 0, 20)

* **X-Axis:**

* Label: Layer

* Left Chart Scale: 0 to 15, with increments of 5 (0, 5, 10, 15)

* Right Chart Scale: 0 to 25, with increments of 5 (0, 5, 10, 15, 20, 25)

* **Legend:** Located at the bottom of the image.

* Q-Anchored (PopQA): Solid Blue Line

* A-Anchored (PopQA): Dashed Orange Line

* Q-Anchored (TriviaQA): Dotted Green Line

* A-Anchored (TriviaQA): Dashed-Dotted Brown Line

* Q-Anchored (HotpotQA): Dashed-Dotted Pink Line

* A-Anchored (HotpotQA): Dotted Grey Line

* Q-Anchored (NQ): Dashed-Dotted Pink Line

* A-Anchored (NQ): Dotted Grey Line
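
The legend as transcribed above assigns the same line style to more than one series, which makes some lines impossible to tell apart from the description alone. A minimal sketch of that consistency check follows; the style strings are copied from the list above, and the helper name `find_shared_styles` is hypothetical.

```python
from collections import defaultdict

# Legend entries as transcribed in this description: (series label, line style).
legend = [
    ("Q-Anchored (PopQA)", "solid blue"),
    ("A-Anchored (PopQA)", "dashed orange"),
    ("Q-Anchored (TriviaQA)", "dotted green"),
    ("A-Anchored (TriviaQA)", "dashed-dotted brown"),
    ("Q-Anchored (HotpotQA)", "dashed-dotted pink"),
    ("A-Anchored (HotpotQA)", "dotted grey"),
    ("Q-Anchored (NQ)", "dashed-dotted pink"),
    ("A-Anchored (NQ)", "dotted grey"),
]


def find_shared_styles(entries):
    """Return {style: [labels]} for every style assigned to more than one series."""
    by_style = defaultdict(list)
    for label, style in entries:
        by_style[style].append(label)
    return {style: labels for style, labels in by_style.items() if len(labels) > 1}


shared_styles = find_shared_styles(legend)
for style, labels in shared_styles.items():
    print(f"{style}: {', '.join(labels)}")
```

Two styles are flagged, each shared by the HotpotQA and NQ series of one anchoring method, so the HotpotQA and NQ readings in this description should be treated as interchangeable unless checked against the image.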

### Detailed Analysis

**Llama-3.2-1B (Left Chart)**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts at approximately -35 and generally decreases to around -60 by layer 15.

* **A-Anchored (PopQA):** (Dashed Orange Line) Starts near 0 and fluctuates between -15 and 0.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts at approximately -20 and decreases to around -60 by layer 15.

* **A-Anchored (TriviaQA):** (Dashed-Dotted Brown Line) Starts near 0 and remains relatively stable, fluctuating slightly.

* **Q-Anchored (HotpotQA):** (Dashed-Dotted Pink Line) Starts at approximately -30 and decreases to around -50 by layer 15.

* **A-Anchored (NQ):** (Dotted Grey Line) Starts near 0 and remains relatively stable, fluctuating slightly.

**Llama-3.2-3B (Right Chart)**

* **Q-Anchored (PopQA):** (Solid Blue Line) Starts at approximately -25 and decreases to around -75, with some fluctuations.

* **A-Anchored (PopQA):** (Dashed Orange Line) Starts near -5 and fluctuates significantly between -15 and 5.

* **Q-Anchored (TriviaQA):** (Dotted Green Line) Starts at approximately -20 and decreases to around -70, with some fluctuations.

* **A-Anchored (TriviaQA):** (Dashed-Dotted Brown Line) Starts near 10 and remains relatively stable, fluctuating slightly.

* **Q-Anchored (HotpotQA):** (Dashed-Dotted Pink Line) Starts at approximately -20 and decreases to around -60, with some fluctuations.

* **A-Anchored (NQ):** (Dotted Grey Line) Starts near 10 and remains relatively stable, fluctuating slightly.

### Key Observations

* For both models, the Q-Anchored lines (PopQA, TriviaQA, HotpotQA) generally show a decreasing trend as the layer number increases, indicating a drop in ΔP.

* The A-Anchored lines (PopQA, TriviaQA, NQ) tend to remain relatively stable near 0, with the exception of A-Anchored (PopQA) on Llama-3.2-3B, which fluctuates more.

* Llama-3.2-3B shows a more pronounced decrease in ΔP for the Q-Anchored lines compared to Llama-3.2-1B.

* The shaded regions around the lines indicate the uncertainty or variance in the data.
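
The comparison between the two models can be checked against the approximate start and end values quoted in the Detailed Analysis. The sketch below is plain arithmetic on those eyeballed readings, not on the underlying data; the dictionary layout and the name `net_change` are illustrative assumptions.

```python
# Approximate (start, end) ΔP readings for the Q-Anchored series,
# taken from the Detailed Analysis of this description (values are eyeballed).
q_anchored = {
    "Llama-3.2-1B": {"PopQA": (-35, -60), "TriviaQA": (-20, -60), "HotpotQA": (-30, -50)},
    "Llama-3.2-3B": {"PopQA": (-25, -75), "TriviaQA": (-20, -70), "HotpotQA": (-20, -60)},
}


def net_change(start_end):
    """Net change in ΔP from the first to the last reported value."""
    start, end = start_end
    return end - start


changes = {
    model: {dataset: net_change(pair) for dataset, pair in series.items()}
    for model, series in q_anchored.items()
}

for dataset in ("PopQA", "TriviaQA", "HotpotQA"):
    small = changes["Llama-3.2-1B"][dataset]
    large = changes["Llama-3.2-3B"][dataset]
    print(f"{dataset}: 1B {small:+d}, 3B {large:+d}")
```

On these readings the net drop is larger for the 3B model in all three series (for example, PopQA falls by 25 points in the 1B chart and by 50 points in the 3B chart).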

### Interpretation

The charts suggest that as the layer number increases, the performance (ΔP) of Q-Anchored tasks generally decreases for both Llama models. This could indicate that the model's ability to answer questions deteriorates in deeper layers. The A-Anchored tasks, on the other hand, remain relatively stable, suggesting that the model's ability to understand or process answers is less affected by the layer depth.

The Llama-3.2-3B model appears to exhibit a more significant performance drop in Q-Anchored tasks compared to Llama-3.2-1B, which could be due to the increased complexity or depth of the model. The fluctuations in the A-Anchored (PopQA) line for Llama-3.2-3B might indicate some instability or sensitivity in processing answers for that specific dataset.

The shaded regions provide a visual representation of the data's variability, which should be considered when interpreting the trends.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: ΔP vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the change in probability (ΔP) across layers for two Llama models: Llama-3.2-1B and Llama-3.2-3B. Each chart displays multiple lines representing different anchoring and question-answering datasets. The x-axis represents the layer number, and the y-axis represents ΔP.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 15 for the 1B model and 0 to 25 for the 3B model).

* **Y-axis:** ΔP (ranging from approximately -80 to 20).

* **Left Chart Title:** Llama-3.2-1B

* **Right Chart Title:** Llama-3.2-3B

* **Legend:** Located at the bottom of the image, containing the following labels and corresponding line styles/colors:

* Q-Anchored (PopQA) - Solid Blue Line

* A-Anchored (PopQA) - Dashed Orange Line

* Q-Anchored (TriviaQA) - Solid Purple Line

* A-Anchored (TriviaQA) - Dashed Pink Line

* Q-Anchored (HotpotQA) - Dashed Gray Line

* A-Anchored (HotpotQA) - Solid Green Line

* Q-Anchored (NQ) - Solid Cyan Line

* A-Anchored (NQ) - Dashed Magenta Line

### Detailed Analysis or Content Details

**Llama-3.2-1B Chart:**

* **Q-Anchored (PopQA):** The blue line starts at approximately 5, decreases steadily to approximately -50 at layer 15.

* **A-Anchored (PopQA):** The orange dashed line starts at approximately 10, decreases to approximately -25 at layer 15.

* **Q-Anchored (TriviaQA):** The purple line starts at approximately 0, decreases to approximately -40 at layer 15.

* **A-Anchored (TriviaQA):** The pink dashed line starts at approximately 5, decreases to approximately -30 at layer 15.

* **Q-Anchored (HotpotQA):** The gray dashed line starts at approximately 5, decreases to approximately -30 at layer 15.

* **A-Anchored (HotpotQA):** The green line starts at approximately 10, decreases to approximately -40 at layer 15.

* **Q-Anchored (NQ):** The cyan line starts at approximately 10, decreases to approximately -60 at layer 15.

* **A-Anchored (NQ):** The magenta dashed line starts at approximately 5, decreases to approximately -50 at layer 15.

**Llama-3.2-3B Chart:**

* **Q-Anchored (PopQA):** The blue line starts at approximately 5, decreases to approximately -50 at layer 25.

* **A-Anchored (PopQA):** The orange dashed line starts at approximately 10, decreases to approximately -20 at layer 25.

* **Q-Anchored (TriviaQA):** The purple line starts at approximately 0, decreases to approximately -50 at layer 25.

* **A-Anchored (TriviaQA):** The pink dashed line starts at approximately 5, decreases to approximately -30 at layer 25.

* **Q-Anchored (HotpotQA):** The gray dashed line starts at approximately 5, decreases to approximately -30 at layer 25.

* **A-Anchored (HotpotQA):** The green line starts at approximately 10, decreases to approximately -40 at layer 25.

* **Q-Anchored (NQ):** The cyan line starts at approximately 10, decreases to approximately -70 at layer 25.

* **A-Anchored (NQ):** The magenta dashed line starts at approximately 5, decreases to approximately -60 at layer 25.

### Key Observations

* In both charts, all lines generally exhibit a downward trend, indicating a decrease in ΔP as the layer number increases.

* The Q-Anchored (NQ) lines consistently show the most significant decrease in ΔP across layers in both models.

* The A-Anchored lines are generally less negative than the Q-Anchored lines, suggesting a different behavior based on the anchoring method.

* The 3B model shows a more extended range of layers (up to 25) compared to the 1B model (up to 15).

* The magnitude of ΔP decrease appears to be similar between the 1B and 3B models, despite the difference in model size.
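
The last observation can be made concrete with the start and end values listed in this description. The following sketch averages the total drop per model and, as an added assumption, divides by the last layer index named in the text (15 and 25) to get a rough per-layer rate; the readings are approximate and the variable names are illustrative.

```python
# (start, end) ΔP readings per series, in legend order, from this description.
readings = {
    "Llama-3.2-1B": [(5, -50), (10, -25), (0, -40), (5, -30),
                     (5, -30), (10, -40), (10, -60), (5, -50)],
    "Llama-3.2-3B": [(5, -50), (10, -20), (0, -50), (5, -30),
                     (5, -30), (10, -40), (10, -70), (5, -60)],
}
# Last layer index mentioned for each chart (assumed to be the span of the curve).
last_layer = {"Llama-3.2-1B": 15, "Llama-3.2-3B": 25}

mean_drop = {}
drop_per_layer = {}
for model, pairs in readings.items():
    drops = [start - end for start, end in pairs]  # positive number = decrease
    mean_drop[model] = sum(drops) / len(drops)
    drop_per_layer[model] = mean_drop[model] / last_layer[model]
    print(f"{model}: mean drop {mean_drop[model]:.1f}, per layer {drop_per_layer[model]:.2f}")
```

With these readings the mean total drop is close for the two models (about 47 versus 50 points), while the average drop per layer is smaller for the 3B model because the decline is spread over more layers.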

### Interpretation

The charts demonstrate how the change in probability (ΔP) evolves across different layers of the Llama models when using various question-answering datasets and anchoring methods. The consistent downward trend suggests that the models' internal representations become less sensitive to the initial input as information propagates through deeper layers.

The differences between Q-Anchored and A-Anchored lines indicate that the anchoring method significantly influences the model's behavior. Q-Anchored lines, particularly with the NQ dataset, show a more substantial decrease in ΔP, potentially indicating a stronger reliance on the question context.

The fact that the 3B model has more layers suggests a greater capacity for complex representation learning, but the similar magnitude of ΔP decrease implies that the fundamental behavior of information processing is comparable between the two models. The charts provide insights into the internal dynamics of these language models and how they process information at different levels of abstraction. The negative ΔP values suggest a reduction in the initial probability as the information flows through the layers, which could be related to the model refining its predictions or focusing on more relevant features.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Llama-3.2 Model Layer-wise ΔP Analysis

### Overview

The image displays two side-by-side line charts comparing the performance change (ΔP) across the layers of two different-sized language models: Llama-3.2-1B (left) and Llama-3.2-3B (right). The charts track the ΔP metric for two different anchoring methods (Q-Anchored and A-Anchored) applied to four distinct question-answering datasets.

### Components/Axes

* **Chart Titles:**

* Left Chart: `Llama-3.2-1B`

* Right Chart: `Llama-3.2-3B`

* **X-Axis (Both Charts):** Labeled `Layer`. Represents the sequential layers of the neural network model.

* Llama-3.2-1B Chart: Ticks at 0, 5, 10, 15. The data spans layers 0 to 15.

* Llama-3.2-3B Chart: Ticks at 0, 5, 10, 15, 20, 25. The data spans layers 0 to 27 (approx.).

* **Y-Axis (Both Charts):** Labeled `ΔP`. Represents a change in a performance or probability metric.

* Llama-3.2-1B Chart: Ticks at -60, -40, -20, 0.

* Llama-3.2-3B Chart: Ticks at -80, -60, -40, -20, 0, 20.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, differentiating lines by color, line style (solid/dashed), and dataset.

* **Solid Lines (Q-Anchored):**

* Blue: `Q-Anchored (PopQA)`

* Green: `Q-Anchored (TriviaQA)`

* Purple: `Q-Anchored (HotpotQA)`

* Pink: `Q-Anchored (NQ)`

* **Dashed Lines (A-Anchored):**

* Orange: `A-Anchored (PopQA)`

* Red: `A-Anchored (TriviaQA)`

* Gray: `A-Anchored (HotpotQA)`

* Brown: `A-Anchored (NQ)`

* **Language Note:** The legend contains Chinese characters in parentheses for the dataset names. The direct transcription is: `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`. These are standard dataset acronyms and do not require translation.

### Detailed Analysis

#### Llama-3.2-1B Chart (Left)

* **Q-Anchored Series (Solid Lines):** All four solid lines exhibit a strong, consistent downward trend as layer number increases.

* They start clustered between approximately -10 and -20 at Layer 0.

* They decline steadily, reaching their lowest points between -50 and -70 at Layer 15.

* The blue line (PopQA) and green line (TriviaQA) show the steepest decline, ending near -70.

* The purple (HotpotQA) and pink (NQ) lines follow a similar path but end slightly higher, around -55 to -60.

* **A-Anchored Series (Dashed Lines):** These lines show relative stability or a slight upward trend.

* They start clustered near 0 at Layer 0.

* The orange line (PopQA) dips to around -20 between layers 5-10 before recovering to near 0.

* The red (TriviaQA), gray (HotpotQA), and brown (NQ) lines fluctuate gently around the 0 line, with a slight upward drift, ending between 0 and +10 at Layer 15.

#### Llama-3.2-3B Chart (Right)

* **Q-Anchored Series (Solid Lines):** The downward trend is present but more volatile and extends over more layers.

* They start between -10 and -20 at Layer 0.

* They show significant fluctuations (peaks and troughs) but maintain a general downward trajectory.

* The lowest points are reached between layers 20-25, with values between -60 and -80.

* The blue line (PopQA) reaches the lowest point, approximately -80, around layer 22.

* All lines show a slight recovery in the final layers (25-27).

* **A-Anchored Series (Dashed Lines):** These lines are more volatile than in the 1B model but remain in a higher range than the Q-Anchored lines.

* They start near 0 at Layer 0.

* They exhibit pronounced fluctuations, with values ranging roughly between -20 and +20.

* The red line (TriviaQA) shows the highest peak, reaching approximately +20 around layer 20.

* The orange line (PopQA) shows the most negative dips, reaching near -20 around layer 10.

* Overall, they do not show a clear upward or downward trend across all layers, instead oscillating.

### Key Observations

1. **Fundamental Dichotomy:** There is a clear and consistent separation between the behavior of Q-Anchored (solid, declining) and A-Anchored (dashed, stable/rising) methods across both model sizes.

2. **Model Size Effect:** The larger model (3B) exhibits greater volatility in ΔP across all series compared to the smaller model (1B), suggesting more complex internal dynamics.

3. **Dataset Sensitivity:** While the overall trend for each anchoring method is consistent, the specific ΔP values and volatility vary by dataset (color). For example, PopQA (blue/orange) often shows more extreme values.

4. **Layer-Dependent Performance:** For Q-Anchored methods, performance (as measured by ΔP) degrades significantly with model depth. For A-Anchored methods, performance is maintained or even improves slightly in deeper layers.

### Interpretation

This data suggests a fundamental difference in how "question-anchored" (Q) versus "answer-anchored" (A) representations or processing pathways evolve within a transformer-based language model.

* **Q-Anchored Degradation:** The consistent negative slope for Q-Anchored lines indicates that as information passes through deeper layers of the model, the specific signal or representation anchored to the *question* becomes less effective or more distorted, leading to a decrease in the measured metric (ΔP). This could imply that deeper layers are less optimized for maintaining question-specific context.

* **A-Anchored Robustness:** In contrast, A-Anchored methods show resilience. The stability or slight increase in ΔP suggests that representations anchored to the *answer* are either preserved or refined in deeper layers. This might align with the hypothesis that deeper layers in LLMs are more involved in reasoning and answer synthesis rather than initial question parsing.

* **Model Scaling:** The increased volatility in the 3B model suggests that scaling up model size introduces more non-linearities and specialized functions across layers, making the ΔP metric more sensitive to specific layer computations.

* **Practical Implication:** The findings could inform model editing or interpretability techniques. If one wishes to intervene on a model's behavior related to a specific question, earlier layers might be more effective for Q-anchored approaches. Conversely, interventions related to answer generation or verification might be more stable in deeper layers using A-anchored approaches. The choice of dataset also matters, as the magnitude of the effect varies.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Llama-3.2-1B and Llama-3.2-3B Layer Performance Comparison

### Overview

The image contains two side-by-side line charts comparing ΔP across neural network layers for two sizes of the Llama-3.2 model (1B and 3B parameters). The x-axes span layers 0–15 and 0–25 respectively, with multiple data series representing different anchoring strategies and datasets.

### Components/Axes

- **X-axis (Layer)**:

- Llama-3.2-1B: 0–15 (integer increments)

- Llama-3.2-3B: 0–25 (integer increments)

- **Y-axis (ΔP)**:

- Range: -80 to +20 (integer increments)

- Units: Unspecified performance metric (likely perplexity or task-specific score)

- **Legends**:

- **Llama-3.2-1B Panel**:

- Solid blue: Q-Anchored (PopQA)

- Dashed green: Q-Anchored (TriviaQA)

- Dotted orange: A-Anchored (PopQA)

- Dashed red: A-Anchored (TriviaQA)

- Solid purple: Q-Anchored (HotpotQA)

- Dashed pink: Q-Anchored (NQ)

- **Llama-3.2-3B Panel**:

- Same color coding as above, with additional lines for 3B-specific data

### Detailed Analysis

**Llama-3.2-1B Panel**:

- **Q-Anchored (PopQA)**: Starts at ~0ΔP, declines to -50ΔP by layer 15 (blue line)

- **Q-Anchored (TriviaQA)**: Peaks at -10ΔP (layer 5), ends at -45ΔP (green dashed line)

- **A-Anchored (PopQA)**: Starts at ~0ΔP, fluctuates between -10ΔP and +5ΔP (orange dotted line)

- **A-Anchored (TriviaQA)**: Declines from 0ΔP to -30ΔP (red dashed line)

- **Q-Anchored (HotpotQA)**: Sharp drop to -60ΔP by layer 10, recovers slightly (purple solid line)

- **Q-Anchored (NQ)**: Most volatile, reaches -70ΔP at layer 12 (pink dashed line)

**Llama-3.2-3B Panel**:

- **Q-Anchored (PopQA)**: Starts at ~0ΔP, ends at -40ΔP (blue solid line)

- **Q-Anchored (TriviaQA)**: Peaks at -15ΔP (layer 5), ends at -55ΔP (green dashed line)

- **A-Anchored (PopQA)**: Starts at ~0ΔP, ends at -20ΔP (orange dotted line)

- **A-Anchored (TriviaQA)**: Declines from 0ΔP to -45ΔP (red dashed line)

- **Q-Anchored (HotpotQA)**: Sharp drop to -75ΔP at layer 10, recovers to -50ΔP (purple solid line)

- **Q-Anchored (NQ)**: Most volatile, reaches -85ΔP at layer 15 (pink dashed line)

### Key Observations

1. **Model Size Impact**:

- 3B model shows more pronounced performance drops (ΔP) in later layers (15–25) compared to 1B model

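- By the values quoted in the Detailed Analysis, three of the four Q-Anchored series (TriviaQA, HotpotQA, NQ) reach roughly 20–25% greater ΔP magnitude in the 3B panel than in the 1B panel; PopQA is the exception (-40 versus -50)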

2. **Dataset Sensitivity**:

- HotpotQA and NQ datasets show the most extreme performance drops (up to -85ΔP)

- TriviaQA consistently shows mid-range performance across both models

3. **Anchoring Strategy**:

- Q-Anchored models generally outperform A-Anchored in later layers

- A-Anchored models show more stability but lower peak performance

4. **Layer-Specific Trends**:

- Layer 5–10 shows steepest performance declines across all models

- 3B model exhibits increased volatility in layers 15–20 (e.g., NQ line has 3 peaks > -70ΔP)
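
The size of the model effect on the Q-Anchored series can be recomputed from the values quoted in the Detailed Analysis of this description. This is a minimal sketch on those approximate readings (end values for PopQA and TriviaQA, trough values for HotpotQA and NQ); the function name `relative_change` is hypothetical.

```python
# Approximate ΔP readings for the Q-Anchored series in each panel,
# as quoted in the Detailed Analysis (end value or deepest trough).
q_anchored = {
    "PopQA": {"1B": -50, "3B": -40},
    "TriviaQA": {"1B": -45, "3B": -55},
    "HotpotQA": {"1B": -60, "3B": -75},
    "NQ": {"1B": -70, "3B": -85},
}


def relative_change(values):
    """Percent change in |ΔP| going from the 1B panel to the 3B panel."""
    small, large = abs(values["1B"]), abs(values["3B"])
    return (large - small) / small * 100


relative = {name: relative_change(values) for name, values in q_anchored.items()}
for name, pct in relative.items():
    print(f"{name}: {pct:+.1f}%")
```

On these numbers TriviaQA, HotpotQA, and NQ come out between roughly 21% and 25% larger in magnitude for the 3B model, whereas PopQA is 20% smaller, so the model-size effect is not uniform across datasets.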

### Interpretation

The data suggests that:

1. **Q-Anchored models** demonstrate better scalability with increased layers, maintaining performance advantages over A-Anchored models in deeper networks

2. **Dataset complexity** correlates with performance degradation, with HotpotQA/NQ showing the most challenging patterns

3. **Model size tradeoffs**: While 3B models achieve greater absolute performance gains, they exhibit 2–3x greater layer-to-layer volatility

4. **Anchoring mechanism**: Q-Anchored appears more effective for complex datasets but requires careful layer management to avoid performance cliffs

The charts highlight critical design considerations for model scaling, particularly the need for dataset-specific anchoring strategies and layer-wise performance monitoring in large language models.
