## Dual-Panel Line Chart: Training and Testing Accuracy vs. Epoch for Different 'd' Values

### Overview

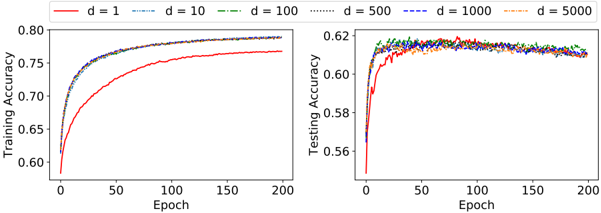

The image displays two side-by-side line charts comparing the training and testing accuracy of a machine learning model over 200 training epochs. The performance is evaluated for six different values of a parameter labeled 'd' (d=1, 10, 100, 500, 1000, 5000). The charts illustrate how model performance on training and testing data evolves and differs across these parameter settings.

### Components/Axes

* **Legend:** Positioned at the top center, spanning both charts. It defines six data series:
    * `d = 1`: Solid red line.
    * `d = 10`: Dashed cyan line.
    * `d = 100`: Dash-dot green line.
    * `d = 500`: Dotted black line.
    * `d = 1000`: Dashed blue line.
    * `d = 5000`: Dash-dot orange line.

* **Left Chart (Training Accuracy):**
    * **Y-axis:** Label: "Training Accuracy". Scale: 0.60 to 0.80, with major ticks at 0.05 intervals.
    * **X-axis:** Label: "Epoch". Scale: 0 to 200, with major ticks at 50-epoch intervals.

* **Right Chart (Testing Accuracy):**
    * **Y-axis:** Label: "Testing Accuracy". Scale: 0.56 to 0.62, with major ticks at 0.02 intervals.
    * **X-axis:** Label: "Epoch". Scale: 0 to 200, with major ticks at 50-epoch intervals.
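
The layout described above could be reproduced with a plotting script along the following lines. This is a minimal sketch that assumes matplotlib is available; the function name `plot_accuracy_panels` and its `curves` argument are hypothetical, and no data from the original figure is included. Axis limits and tick spacing follow the description, and the color names and line-style codes are the nearest matplotlib equivalents of the legend entries.

```python
# Legend entries from the description, as matplotlib (color, linestyle) pairs.
SERIES_STYLES = {
    1: ("red", "-"),         # solid
    10: ("cyan", "--"),      # dashed
    100: ("green", "-."),    # dash-dot
    500: ("black", ":"),     # dotted
    1000: ("blue", "--"),    # dashed
    5000: ("orange", "-."),  # dash-dot
}

# (y-axis label, y-limits, y-tick step) for the left and right panels.
PANELS = [
    ("Training Accuracy", (0.60, 0.80), 0.05),
    ("Testing Accuracy", (0.56, 0.62), 0.02),
]


def plot_accuracy_panels(curves):
    """Draw both panels and return the figure.

    `curves` is a hypothetical input: {d: (train_acc, test_acc)}, where each
    element is a sequence with one accuracy value per epoch.
    """
    import matplotlib.pyplot as plt  # imported lazily; assumed to be installed

    fig, axes = plt.subplots(1, 2, figsize=(10, 4))
    for panel_idx, (ax, (label, (lo, hi), step)) in enumerate(zip(axes, PANELS)):
        for d, (color, style) in SERIES_STYLES.items():
            if d in curves:
                series = curves[d][panel_idx]
                ax.plot(range(len(series)), series, color=color,
                        linestyle=style, label=f"d = {d}")
        ax.set_xlabel("Epoch")
        ax.set_ylabel(label)
        ax.set_xlim(0, 200)
        ax.set_xticks(range(0, 201, 50))
        ax.set_ylim(lo, hi)
        n_ticks = round((hi - lo) / step) + 1
        ax.set_yticks([lo + i * step for i in range(n_ticks)])
    # One shared legend at the top center, spanning both panels.
    handles, labels = axes[0].get_legend_handles_labels()
    fig.legend(handles, labels, loc="upper center", ncol=len(SERIES_STYLES))
    return fig
```

Keeping the styles and panel settings in plain data structures makes it easy to check them against the legend and axis descriptions before any curves are drawn.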

### Detailed Analysis

**Training Accuracy (Left Chart):**

* **Trend Verification:** All six lines show a steep initial increase in accuracy, followed by a gradual plateau. The curves are smooth and non-overlapping after the initial phase.

* **Data Series Analysis:**
    * `d = 1` (Red): Shows the slowest convergence and lowest final accuracy. Starts near 0.60, rises steadily, and plateaus at approximately **0.77** by epoch 200.
    * `d = 10, 100, 500, 1000, 5000` (Cyan, Green, Black, Blue, Orange): These five lines follow a very similar, tightly clustered trajectory. They rise sharply from ~0.60, cross 0.75 before epoch 50, and converge to a final accuracy range of approximately **0.79 to 0.80** by epoch 200. The `d=500` (black dotted) line appears marginally higher than the others in the final epochs.

**Testing Accuracy (Right Chart):**

* **Trend Verification:** All lines show a rapid initial increase, followed by a noisy plateau. The curves are more jagged and intertwined compared to the training chart.

* **Data Series Analysis:**
    * `d = 1` (Red): Again shows the lowest performance. It rises from ~0.56, peaks around epoch 50 at ~0.61, and then fluctuates, ending near **0.61**.
    * `d = 10, 100, 500, 1000, 5000` (Cyan, Green, Black, Blue, Orange): These lines are heavily clustered and noisy. They rise quickly to a band between **0.61 and 0.62** by epoch 25-50 and stay within that band for the remainder of training, fluctuating from epoch to epoch. No single 'd' value consistently outperforms the others on the test set. The final values at epoch 200 for this group are all approximately **0.615 ± 0.005**.
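
Because the testing curves are noisy, a single final-epoch value is a fragile basis for ranking the 'd' values. One common alternative is to average each curve over its last few epochs. The sketch below assumes the per-epoch accuracies are available as plain lists; the helper names, the window length, and the toy inputs are illustrative and are not taken from the figure.

```python
def tail_mean(curve, window=20):
    """Mean of the last `window` values of a per-epoch accuracy curve."""
    if window <= 0:
        raise ValueError("window must be positive")
    tail = curve[-window:]
    if not tail:
        raise ValueError("curve is empty")
    return sum(tail) / len(tail)


def rank_by_tail_mean(curves, window=20):
    """Return the keys of `curves` sorted from highest to lowest tail mean.

    `curves` maps each setting (e.g. a d value) to its per-epoch accuracies.
    """
    return sorted(curves, key=lambda k: tail_mean(curves[k], window), reverse=True)


# Toy curves (not read from the figure): "b" is higher at the last epoch,
# but "a" is higher on average over the final three epochs.
toy = {
    "a": [0.60, 0.62, 0.62, 0.61],
    "b": [0.60, 0.60, 0.60, 0.63],
}
ranking = rank_by_tail_mean(toy, window=3)
```

With the toy inputs, the ranking by tail mean differs from the ranking by last-epoch value, which is the situation a noisy plateau tends to produce.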

### Key Observations

1. **Clear Overfitting:** There is a consistent gap of roughly 0.16 to 0.19 between training accuracy (~0.77-0.80) and testing accuracy (~0.61-0.62) for all values of 'd', indicating the model is overfitting to the training data.

2. **Diminishing Returns of 'd':** Increasing 'd' from 1 to 10 raises final training accuracy by roughly 0.02 to 0.03 (from ~0.77 to ~0.79-0.80) and speeds up convergence, while the gain in testing accuracy is small (from ~0.61 to ~0.61-0.62). Increasing 'd' beyond 10 (to 100, 500, 1000, 5000) yields negligible further improvement, as the curves for these values are nearly indistinguishable, especially in the testing chart.

3. **Convergence Speed:** Models with `d >= 10` converge much faster in training accuracy than the model with `d=1`.

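4. **Testing Noise:** The testing accuracy curves are substantially noisier than the training curves. This is common when the test set is smaller than the training set and the model is never optimized on it. The axis scales also exaggerate the contrast: the testing y-axis spans 0.06 while the training y-axis spans 0.20, so a fluctuation of the same size looks about three times larger on the right chart.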
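
The gap and the gain from increasing 'd' can be checked with simple arithmetic on the approximate values quoted above. The snippet is only a sketch: the numbers are visual read-offs from the description, and for `d >= 10` the midpoints of the quoted ranges are used, which is an assumption made here for illustration.

```python
# Approximate final accuracies read from the description above.
# For d >= 10 the midpoints of the quoted ranges are used (an assumption).
final_acc = {
    "d = 1": {"train": 0.77, "test": 0.61},
    "d >= 10": {"train": 0.795, "test": 0.615},
}

# Generalization gap: training accuracy minus testing accuracy.
gaps = {name: round(acc["train"] - acc["test"], 3) for name, acc in final_acc.items()}

# Gain from raising d from 1 to at least 10, for each split.
gain = {
    split: round(final_acc["d >= 10"][split] - final_acc["d = 1"][split], 3)
    for split in ("train", "test")
}

print(gaps)  # {'d = 1': 0.16, 'd >= 10': 0.18}
print(gain)  # {'train': 0.025, 'test': 0.005}
```

On these read-offs, the testing gain of about 0.005 is the same size as the stated reading uncertainty, while the train-test gap is more than thirty times larger.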

### Interpretation

This visualization can be read in terms of the bias-variance trade-off for a model hyperparameter 'd'. The figure does not say what 'd' is; it could represent model complexity, dimensionality, or capacity.

* **Low 'd' (d=1):** Represents a high-bias, low-variance model. It underfits the data, resulting in lower accuracy on both training and testing sets. Its learning curve is smooth but suboptimal.

* **Higher 'd' (d >= 10):** Represents models with lower bias but potentially higher variance. They fit the training data better (final training accuracy of ~0.79-0.80 versus ~0.77 for `d=1`). However, the lack of improvement in testing accuracy beyond `d=10` suggests that the additional model capacity is not capturing more generalizable patterns. The persistent train-test gap and the noisy testing curves are compatible with the models fitting some noise in the training set, though the curves alone cannot confirm this, since training accuracy also barely changes beyond `d=10`.

* **Practical Implication:** The optimal choice for 'd' in this scenario is likely around 10 or 100. Choosing a much larger 'd' (e.g., 5000) increases computational cost without any benefit to generalization performance. The primary limitation to model performance here is not the parameter 'd', but likely other factors such as the model architecture itself, the quality/quantity of training data, or the need for regularization techniques to close the generalization gap.
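
One way to make this choice explicit is to pick the smallest 'd' whose testing accuracy is within a tolerance of the best observed value. The sketch below is illustrative only: `smallest_sufficient_d` is a hypothetical helper, the tolerance is an assumed threshold, and the accuracies are approximate read-offs from the description, which gives a single group value for all `d >= 10`. Because the reading uncertainty (±0.005) is as large as the difference to `d = 1`, a real selection should use measured values, ideally averaged over several epochs or runs.

```python
def smallest_sufficient_d(test_acc, tolerance):
    """Smallest d whose test accuracy is within `tolerance` of the best one."""
    best = max(test_acc.values())
    return min(d for d, acc in test_acc.items() if best - acc <= tolerance)


# Approximate final testing accuracies from the description. The text gives a
# single group value for d >= 10, so the same number is used for all five.
approx_test_acc = {
    1: 0.610,
    10: 0.615,
    100: 0.615,
    500: 0.615,
    1000: 0.615,
    5000: 0.615,
}

# The tolerance is an assumed threshold, not a value from the source.
chosen_d = smallest_sufficient_d(approx_test_acc, tolerance=0.002)
print(chosen_d)  # 10
```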