## Diagram: Neural Network and Spin Glass Model Comparison

### Overview

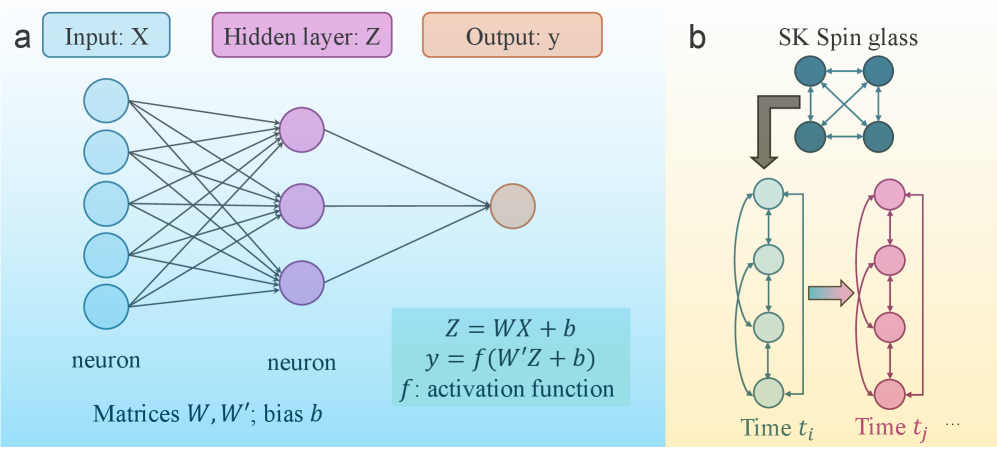

The image is a two-panel technical diagram (labeled **a** and **b**) illustrating the structural analogy between a feedforward neural network and a Sherrington-Kirkpatrick (SK) spin glass model. Panel **a** details the architecture and mathematics of a simple neural network. Panel **b** depicts the SK spin glass model and its conceptual mapping to a time-evolving system.

### Components/Axes

**Panel a (Left): Neural Network**

* **Labels & Structure:**
    * Top: Three colored boxes label the layers: `Input: X` (light blue), `Hidden layer: Z` (purple), `Output: y` (orange).
    * Diagram: A network graph with:
        * An input layer of 5 light blue circles (neurons).
        * A hidden layer of 3 purple circles (neurons).
        * An output layer of 1 orange circle (neuron).
        * Directed lines (edges) connect all input neurons to all hidden neurons, and all hidden neurons to the output neuron.
    * Bottom Text: `neuron` is written below the input and hidden layers. `Matrices W, W'; bias b` is written at the bottom.
* **Mathematical Equations (Bottom Right Box):**
    * `Z = WX + b`
    * `y = f(W'Z + b)`
    * `f : activation function`

**Panel b (Right): SK Spin Glass**

* **Labels & Structure:**
    * Top: Title `SK Spin glass`.
    * Diagram: A network of 4 dark teal circles (spins) fully interconnected with double-headed arrows.
    * A large brown arrow points from this network down to a sequence of states.
    * The sequence shows two vertical columns of circles connected by curved arrows, representing time evolution:
        * Left column: 4 light green circles, labeled `Time t_i` at the bottom.
        * Right column: 4 pink circles, labeled `Time t_j` at the bottom, followed by `...`.
        * A horizontal arrow points from the green column to the pink column.
        * Within each column, curved arrows connect the circles in a loop.

### Detailed Analysis

**Panel a: Neural Network Details**

* **Architecture:** A fully connected network with three layers of nodes (input, hidden, output) joined by two weight matrices. Input dimension = 5, hidden layer dimension = 3, output dimension = 1.

* **Data Flow:** Information flows left to right: Input `X` -> Hidden layer `Z` -> Output `y`.

* **Mathematical Model:** The hidden-layer vector `Z` is an affine transformation of the input `X`, with weight matrix `W` and bias `b`; as written, no nonlinearity is applied at the hidden layer. The output `y` is the activation function `f` applied to a second affine transformation of `Z`, with weight matrix `W'`. The figure reuses the symbol `b` in both equations, but with 3 hidden units and 1 output the two bias terms must differ in dimension, so they are best read as separate parameters.
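
The two equations in panel **a** can be sketched as a short NumPy forward pass. This is only an illustration under stated assumptions: the figure does not specify `f`, so a sigmoid is assumed here, the weights are random placeholders, and the names `W_prime`, `b_out`, `sigmoid`, and `forward` are hypothetical. The output bias is kept separate from the hidden bias because the two must have different shapes.

```python
import numpy as np

rng = np.random.default_rng(0)

# Shapes follow the diagram: 5 inputs, 3 hidden units, 1 output.
W = rng.normal(size=(3, 5))        # input -> hidden weights
b = rng.normal(size=3)             # hidden-layer bias
W_prime = rng.normal(size=(1, 3))  # hidden -> output weights (W' in the figure)
b_out = rng.normal(size=1)         # assumed separate output bias (figure reuses `b`)


def sigmoid(a):
    """Assumed choice for f; the figure leaves the activation unspecified."""
    return 1.0 / (1.0 + np.exp(-a))


def forward(X):
    Z = W @ X + b                     # Z = WX + b
    y = sigmoid(W_prime @ Z + b_out)  # y = f(W'Z + b)
    return Z, y


X = rng.normal(size=5)
Z, y = forward(X)
print(Z.shape, y.shape)  # (3,) (1,)
```

Calling `forward` on any length-5 vector returns the 3-dimensional hidden vector and the single output value, matching the layer sizes drawn in the figure.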

**Panel b: Spin Glass & Time Evolution Details**

* **SK Spin Glass:** Depicted as a fully connected graph of 4 nodes (spins), representing a system where each spin interacts with every other spin.

* **Temporal Mapping:** The diagram suggests a mapping from the static spin glass configuration to a dynamic, time-indexed process. The state of the system at `Time t_i` (green circles) evolves to a state at `Time t_j` (pink circles). The internal looping arrows within each time-slice column imply recurrent or iterative dynamics within that time step.
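
To make the fully connected spin picture concrete, the sketch below builds a 4-spin SK instance and advances it by one update sweep. The energy function is the standard SK form, with symmetric Gaussian couplings of variance 1/N between every pair of spins. The update rule is an assumption: the figure does not say what dynamics connect `t_i` to `t_j`, so a simple zero-temperature rule (each spin aligns with its local field) is used purely for illustration. The names `energy`, `sweep`, `s_ti`, and `s_tj` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
N = 4  # four spins, as drawn in panel b

# Symmetric Gaussian couplings with zero diagonal and variance 1/N (standard SK scaling).
A = rng.normal(scale=1.0 / np.sqrt(N), size=(N, N))
J = np.triu(A, k=1)
J = J + J.T


def energy(s):
    """SK energy H(s) = -sum_{i<j} J_ij s_i s_j."""
    return -0.5 * s @ J @ s


def sweep(s):
    """One asynchronous sweep: align each spin with its local field (assumed rule)."""
    s = s.copy()
    for i in rng.permutation(N):
        h = J[i] @ s  # local field on spin i from all other spins
        if h != 0:
            s[i] = np.sign(h)
    return s


s_ti = rng.choice([-1.0, 1.0], size=N)  # configuration at time t_i
s_tj = sweep(s_ti)                      # configuration at a later time t_j
print(energy(s_ti), energy(s_tj))
```

Under this assumed rule each single-spin update can only lower the energy or leave it unchanged, so the configuration at `t_j` never has higher energy than the one at `t_i`.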

### Key Observations

1. **Structural Analogy:** The diagram visually proposes a correspondence between the layers of a neural network (a) and the time-evolved states of a spin glass system (b). The hidden layer `Z` in the network may be analogous to the state of the spin system at a given time.

2. **Connectivity:** Both systems are characterized by dense connectivity. The neural network is fully connected between layers. The SK spin glass is fully connected among all its components.

3. **Directionality vs. Recurrence:** Panel **a** shows a strict feedforward (acyclic) flow. Panel **b** introduces recurrence (the loops within each time column) and a temporal progression from `t_i` to `t_j`.

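4. **Color Coding:** Color distinguishes roles within each panel rather than linking the two panels. In panel **a**, light blue, purple, and orange mark the input, hidden, and output layers. In panel **b**, dark teal marks the spins of the static model, and the shift from light green to pink marks the progression from `t_i` to `t_j`.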

### Interpretation

This diagram is likely from a technical paper exploring the intersection of **machine learning** (specifically, neural networks) and **statistical physics** (specifically, spin glass theory). The core message is that the mathematical framework used to describe disordered magnetic systems like spin glasses can provide insights into the behavior and training dynamics of neural networks.

* **What it Suggests:** The training process of a neural network, which navigates a complex "loss landscape," may be formally similar to the energy minimization or dynamics of a spin glass. The "hidden layer" state `Z` is a key intermediate representation whose evolution (perhaps during training epochs) is being compared to the time evolution of spin configurations.

* **Relationships:** The mapping implies that concepts from spin glass theory (such as replica symmetry breaking, metastable states, and complex energy landscapes) could be used to analyze issues in neural networks like generalization, convergence, and the presence of local minima.

* **Notable Anomaly/Point of Interest:** The explicit inclusion of time (`t_i`, `t_j`) in the spin glass panel, but not in the neural network panel, is significant. It suggests the author is focusing on the *dynamics* of the system (how it changes over time/iterations), not just its static architecture. The `...` after `t_j` indicates this is an ongoing process.