## Neural Network and Spin Glass Model Diagram

### Overview

The image presents two interconnected technical diagrams:

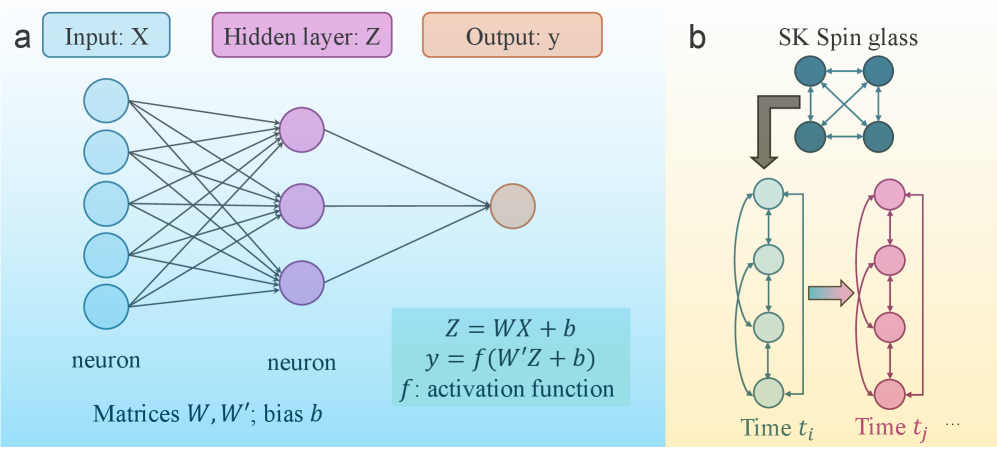

1. **Neural Network Architecture** (Left): A feedforward network with input, hidden, and output layers.

2. **Spin Glass Dynamics** (Right): A temporal evolution of a spin glass network.

---

### Components/Axes

#### Neural Network (a)

- **Input Layer**:
  - Labeled "Input: X" with 5 blue nodes (neurons).
  - Connected to the hidden layer via weight matrix **W** and bias **b**.
- **Hidden Layer**:
  - Labeled "Hidden layer: Z" with 3 purple nodes.
  - Equations:
    - **Z = WX + b** (linear transformation).
    - **y = f(W'Z + b)** (output with activation function **f**).
- **Output Layer**:
  - Single orange node labeled "Output: y".
- **Matrices**:
  - **W** (input→hidden weights), **W'** (hidden→output weights).
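The two equations above can be traced with a minimal forward-pass sketch for the drawn sizes (5 inputs, 3 hidden units, 1 output). It assumes a sigmoid for **f** (the figure does not name one) and keeps the hidden layer purely linear, as drawn. The figure writes **b** for both biases, but the hidden bias has 3 entries and the output has 1, so the sketch uses a separate, hypothetically named `b_prime` for the output bias. Plain lists are used instead of a linear-algebra library so the example runs with the standard library only; the weights are random placeholders, not values from the figure.

```python
import math
import random

# Layer sizes as drawn in panel (a): 5 inputs, 3 hidden units, 1 output.
N_IN, N_HIDDEN, N_OUT = 5, 3, 1


def affine(matrix, vector, bias):
    """Return matrix @ vector + bias, with matrix given as a list of rows."""
    return [sum(w * x for w, x in zip(row, vector)) + b for row, b in zip(matrix, bias)]


def sigmoid(values):
    """Elementwise logistic sigmoid (assumed choice for the activation f)."""
    return [1.0 / (1.0 + math.exp(-v)) for v in values]


def forward(x, W, b, W_prime, b_prime):
    """Z = WX + b (no hidden activation, as drawn); y = f(W'Z + b')."""
    z = affine(W, x, b)                          # hidden layer Z, purely linear
    y = sigmoid(affine(W_prime, z, b_prime))     # output y = f(W'Z + b')
    return z, y


# Random placeholder parameters for illustration only (seeded for reproducibility).
rng = random.Random(0)
W = [[rng.gauss(0.0, 1.0) for _ in range(N_IN)] for _ in range(N_HIDDEN)]        # 3 x 5
b = [0.0] * N_HIDDEN                                                              # 3 entries
W_prime = [[rng.gauss(0.0, 1.0) for _ in range(N_HIDDEN)] for _ in range(N_OUT)]  # 1 x 3
b_prime = [0.0] * N_OUT                                                           # hypothetical b', 1 entry

x = [1.0, 0.5, -0.5, 2.0, 0.0]
z, y = forward(x, W, b, W_prime, b_prime)
print(len(z), len(y))  # 3 1
```

Swapping `sigmoid` for a ReLU changes only the output activation; adding a nonlinearity to `z` would be needed to make the hidden layer nonlinear.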

#### Spin Glass (b)

- **Initial Network**:
  - 4 blue nodes (time **t_i**) connected in a fully linked "SK Spin glass" (Sherrington-Kirkpatrick) topology, i.e., every pair of spins is coupled.
- **Temporal Evolution**:
  - Arrows indicate a transformation into a vertical chain of 4 red nodes (time **t_j**).
  - Represents a reconfiguration of the network's connectivity over time.
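For reference, the standard Sherrington-Kirkpatrick model that the "SK Spin glass" label points to assigns an energy to a spin configuration through random couplings between every pair of spins. With the figure's 4 nodes, N = 4 and there are 4·3/2 = 6 couplings. The figure gives no coupling or field values, so this is only the generic form, assuming zero external field:

$$
H(\mathbf{s}) = -\sum_{1 \le i < j \le N} J_{ij}\, s_i s_j,
\qquad s_i \in \{-1, +1\},
\qquad J_{ij} \sim \mathcal{N}\!\left(0, \tfrac{J^2}{N}\right)
$$

Here $J$ sets the coupling scale; the $1/N$ variance keeps the energy per spin finite as $N$ grows.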

---

### Detailed Analysis

#### Neural Network

- **Flow**:
  - Input **X** → Hidden **Z** via **W** and **b** → Output **y** via **W'** and **b**.
  - Activation function **f** (e.g., ReLU, sigmoid) applied at the output.
- **Color Coding**:
  - Blue (input), purple (hidden), orange (output).

#### Spin Glass

- **Structure**:
  - The initial fully connected network (blue nodes) evolves into a linear chain (red nodes) over time.
  - The arrows indicate transitions between states; the figure does not specify whether these are stochastic or deterministic.
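One common way to give the t_i → t_j arrows a concrete meaning is single-spin-flip dynamics on an SK model, with energy H(s) = -Σ_{i<j} J_ij s_i s_j. The sketch below assumes Glauber (heat-bath) updates at a chosen inverse temperature `beta`; neither the update rule nor `beta` comes from the figure. It also keeps the couplings fixed, so it evolves the spin states only and does not reproduce the drawn change from a fully linked network to a chain. Function names are hypothetical.

```python
import math
import random


def sk_couplings(n, rng):
    """Symmetric SK couplings J_ij ~ N(0, 1/n) for i < j, mirrored, zero diagonal."""
    J = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            J[i][j] = J[j][i] = rng.gauss(0.0, 1.0 / math.sqrt(n))
    return J


def energy(spins, J):
    """SK energy H(s) = -sum_{i<j} J_ij s_i s_j."""
    n = len(spins)
    return -sum(J[i][j] * spins[i] * spins[j] for i in range(n) for j in range(i + 1, n))


def glauber_sweep(spins, J, beta, rng):
    """One sweep of n random single-spin heat-bath (Glauber) updates at inverse temperature beta."""
    n = len(spins)
    for _ in range(n):
        i = rng.randrange(n)
        h = sum(J[i][j] * spins[j] for j in range(n))  # local field on spin i; J[i][i] = 0
        p_up = 0.5 * (1.0 + math.tanh(beta * h))        # equals 1 / (1 + exp(-2 * beta * h))
        spins[i] = 1 if rng.random() < p_up else -1
    return spins


rng = random.Random(42)
n = 4                                            # four spins, as in panel (b)
J = sk_couplings(n, rng)                         # all 6 pairs coupled ("fully linked")
spins = [rng.choice([-1, 1]) for _ in range(n)]  # configuration at time t_i
for _ in range(10):
    glauber_sweep(spins, J, beta=2.0, rng=rng)
print(spins, energy(spins, J))                   # configuration at a later time t_j
```

The `tanh` form of the heat-bath probability is used because it cannot overflow for large `beta * h`, unlike a direct `exp`.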

---

### Key Observations

1. **Neural Network**:
   - Standard feedforward architecture with explicit weight matrices and biases.
   - No activation function is specified for the hidden layer (only for the output).
2. **Spin Glass**:
   - The temporal evolution implies time-dependent interactions or spin dynamics (e.g., as in Ising-type models).
   - The color shift (blue→red) may denote a change of state (e.g., spin configuration or polarization).
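Because the hidden layer in panel (a) is drawn without an activation, the two layers compose into a single affine map before **f**. Writing $b'$ for the output bias (the figure reuses the symbol **b**, but the hidden bias has 3 entries and the output has 1, so they cannot be the same vector), substituting $Z = WX + b$ into the output equation gives:

$$
y = f\big(W'(WX + b) + b'\big) = f\big(\tilde{W}X + \tilde{b}\big),
\qquad \tilde{W} = W'W \in \mathbb{R}^{1 \times 5},
\qquad \tilde{b} = W'b + b' \in \mathbb{R}
$$

So, as drawn, the hidden layer adds no expressive power over a single layer followed by **f**; a nonlinearity applied to **Z** would be needed for it to do so.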

---

### Interpretation

- **Neural Network**:
  - Illustrates forward propagation: data flows from input to output through weighted sums and a nonlinear activation.
  - Matrices **W**, **W'** and biases **b** define the learnable parameters.
- **Spin Glass**:
  - Represents a physical system (e.g., magnetic spins) evolving over time with complex interactions.
  - The transition from a fully connected network to a linear chain may model phase transitions or critical dynamics.
- **Connection**:
  - Both diagrams emphasize network topology and its transformations (layer-to-layer linear maps in (a), evolution over time in (b)).
  - The spin glass model could metaphorically represent the "hidden layer" dynamics in neural networks, where interactions evolve over training iterations.
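As a quick count of the learnable parameters for the sizes drawn in panel (a) (5 inputs, 3 hidden units, 1 output), treating the output bias as a separate 1-entry vector $b'$ since its size differs from the 3-entry hidden bias $b$:

$$
\underbrace{3 \times 5}_{W} + \underbrace{3}_{b} + \underbrace{1 \times 3}_{W'} + \underbrace{1}_{b'} = 15 + 3 + 3 + 1 = 22
$$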

---

**Note**: No numerical data or explicit values provided; focus is on structural and conceptual relationships.