
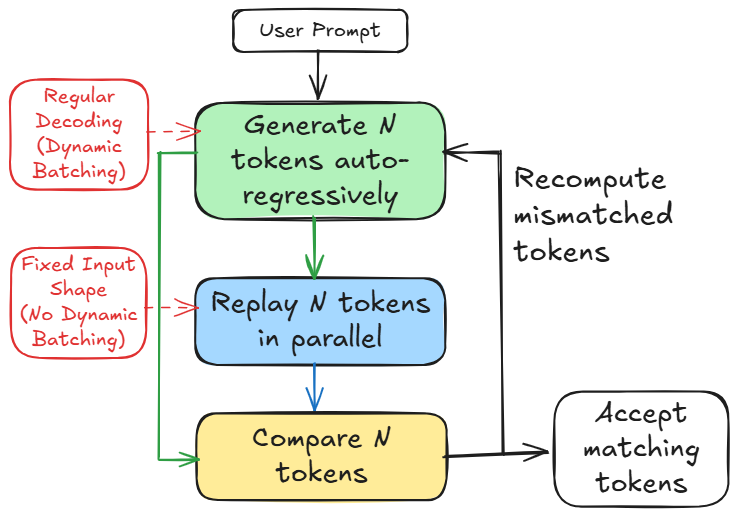

## Diagram: Parallel Decoding Process

### Overview

This diagram illustrates a parallel decoding process, likely within a large language model or a similar generative system. Tokens are generated auto-regressively, replayed in parallel, and then compared. The diagram labels two decoding methods, "Regular Decoding (Dynamic Batching)" and "Fixed Input Shape (No Dynamic Batching)", and shows how tokens flow through the comparison and recomputation steps.

### Components

The diagram consists of rectangular boxes representing processes, connected by arrows indicating the flow of data. The key components are:

* **User Prompt:** The initial input to the system.

* **Generate N tokens auto-regressively:** A process that generates a sequence of N tokens.

* **Replay N tokens in parallel:** A process that replays the generated tokens in parallel.

* **Compare N tokens:** A process that compares the tokens generated by the two methods.

* **Recompute mismatched tokens:** A process that recomputes tokens that do not match.

* **Accept matching tokens:** A process that accepts tokens that match.

* **Regular Decoding (Dynamic Batching):** The label for one decoding method, shown in red text.

* **Fixed Input Shape (No Dynamic Batching):** The label for the other decoding method, shown in purple text.

### Detailed Analysis

The diagram depicts a workflow starting with a "User Prompt". This prompt feeds into the "Generate N tokens auto-regressively" block (light blue). This block then splits the process into two parallel paths:

1. **Regular Decoding (Dynamic Batching):** The output of the "Generate N tokens" block feeds the "Replay N tokens in parallel" block (blue), whose output feeds the "Compare N tokens" block (yellow). Mismatched tokens return to the "Generate N tokens" block for recomputation. Matching tokens go on to the "Accept matching tokens" block.

2. **Fixed Input Shape (No Dynamic Batching):** As depicted, this path passes through the same blocks in the same order: "Generate N tokens", then "Replay N tokens in parallel" (blue), then "Compare N tokens" (yellow). Mismatched tokens again return to the "Generate N tokens" block for recomputation, and matching tokens go on to the "Accept matching tokens" block.

The arrows form a cycle: tokens are generated, compared, and recomputed until they match. The diagram gives no numerical values or data points.
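The generate, replay, compare, accept, and recompute steps described above can be sketched in Python. This is a minimal illustration, not the system the diagram depicts: the `NextToken` callable, the function names, and the toy integer tokens are all hypothetical. In particular, the diagram does not say how a mismatched token is recomputed, so the sketch assumes the replayed value replaces the first mismatch and generation resumes from there.

```python
from typing import Callable, List

Token = int
# Hypothetical model interface: maps a token context to the next token.
NextToken = Callable[[List[Token]], Token]


def generate(next_token: NextToken, context: List[Token], n: int) -> List[Token]:
    """Generate n tokens one at a time, feeding each back into the context."""
    ctx = list(context)
    out: List[Token] = []
    for _ in range(n):
        tok = next_token(ctx)
        out.append(tok)
        ctx.append(tok)
    return out


def replay(next_token: NextToken, context: List[Token], draft: List[Token]) -> List[Token]:
    """Recompute every draft position from its already-known prefix.

    The positions are independent once the draft exists, so a real system
    could evaluate them in one parallel pass. Here it is a plain loop.
    """
    return [next_token(context + draft[:i]) for i in range(len(draft))]


def matching_prefix_len(draft: List[Token], replayed: List[Token]) -> int:
    """Return how many leading tokens agree between draft and replay."""
    for i, (a, b) in enumerate(zip(draft, replayed)):
        if a != b:
            return i
    return min(len(draft), len(replayed))


def decode(gen_next: NextToken, replay_next: NextToken,
           prompt: List[Token], total: int, n: int) -> List[Token]:
    """Produce `total` tokens in rounds of at most n drafted tokens."""
    accepted: List[Token] = []
    while len(accepted) < total:
        context = prompt + accepted
        want = min(n, total - len(accepted))
        draft = generate(gen_next, context, want)        # generate N tokens
        replayed = replay(replay_next, context, draft)   # replay N tokens
        k = matching_prefix_len(draft, replayed)         # compare N tokens
        accepted.extend(draft[:k])                       # accept matching tokens
        if k < len(draft):
            # Assumption: the replayed value replaces the first mismatch.
            accepted.append(replayed[k])
    return accepted
```

Every round accepts at least one token, so the loop always terminates. Under the stated assumption, the accepted sequence equals what `replay_next` alone would have produced auto-regressively, which is one way to read the "accept matching tokens" step.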

### Key Observations

The two methods differ in batching: "Regular Decoding" uses dynamic batching, while "Fixed Input Shape" does not. Both follow the same steps: generate tokens, replay them in parallel, compare them, and recompute the mismatches. The loop back to generation makes the process iterative.

### Interpretation

The diagram appears to describe a way to check generated tokens for consistency. By comparing the output of two decoding methods and recomputing the tokens that differ, the system can potentially detect and correct discrepancies between them. Replaying tokens in parallel suggests an attempt to make this check cheaper than regenerating every token one at a time. Dynamic batching in the "Regular Decoding" method may allow more flexible and efficient processing of variable-length inputs, whereas the "Fixed Input Shape" method keeps input shapes constant. The feedback loop suggests that the system refines its output until the compared tokens agree. Loops of this kind, in which output is checked and regenerated until it passes, are a common pattern in machine learning systems.
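To make the contrast between the two labels concrete, the sketch below shows one possible reading of them. It is an assumption rather than something the diagram states: "fixed input shape" is taken to mean padding every batch to a constant `batch_size` by `seq_len`, and "dynamic batching" is taken to mean a batch whose shape depends on the requests present. The function names and the `PAD` token id are hypothetical.

```python
from typing import List

PAD = 0  # hypothetical padding token id


def pad_to_fixed_shape(seqs: List[List[int]], batch_size: int,
                       seq_len: int, pad: int = PAD) -> List[List[int]]:
    """Pad a batch to exactly batch_size rows of seq_len tokens each."""
    if len(seqs) > batch_size:
        raise ValueError("too many sequences for the fixed batch size")
    rows = []
    for s in seqs:
        if len(s) > seq_len:
            raise ValueError("sequence longer than the fixed length")
        rows.append(s + [pad] * (seq_len - len(s)))
    # Fill unused batch slots with all-padding rows so the shape never changes.
    rows.extend([pad] * seq_len for _ in range(batch_size - len(seqs)))
    return rows


def pad_to_longest(seqs: List[List[int]], pad: int = PAD) -> List[List[int]]:
    """Pad only to the longest sequence present, so the shape varies per batch."""
    if not seqs:
        return []
    longest = max(len(s) for s in seqs)
    return [s + [pad] * (longest - len(s)) for s in seqs]
```

With the same two requests, `pad_to_fixed_shape` always returns the same shape regardless of how many requests arrive, while `pad_to_longest` returns a shape that changes with the batch contents.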