## Flowchart: Token Generation and Processing Workflow

### Overview

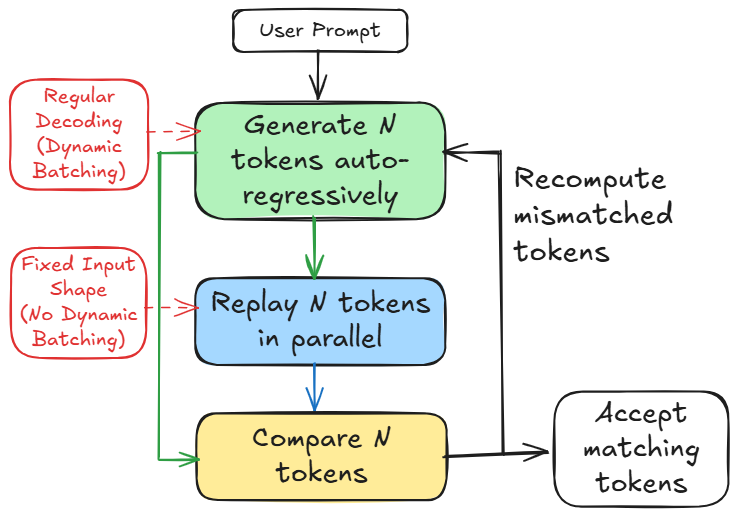

The diagram shows a workflow that takes a user prompt through four stages: auto-regressive token generation, parallel replay, comparison, and acceptance. It also shows two decoding modes (regular decoding with dynamic batching, and a fixed input shape without dynamic batching) and a feedback loop that recomputes mismatched tokens.

### Components/Axes

- **Nodes**:
  - **User Prompt** (topmost input)
  - **Generate N tokens auto-regressively** (green box)
  - **Replay N tokens in parallel** (blue box)
  - **Compare N tokens** (yellow box)
  - **Accept matching tokens** (final output)
  - **Regular Decoding (Dynamic Batching)** (red box, left)
  - **Fixed Input Shape (No Dynamic Batching)** (red box, right)
- **Arrows**:
  - Solid arrows indicate primary flow.
  - Dashed arrows represent alternative paths.
  - A feedback loop recomputes mismatched tokens.

### Detailed Analysis

1. **User Prompt** → **Generate N tokens auto-regressively** (green):
   - The initial step: N tokens are generated from the prompt, one after another.
2. **Branching Paths**:
   - **Regular Decoding (Dynamic Batching)** (red, left): tokens are processed with dynamic batching for efficiency.
   - **Fixed Input Shape (No Dynamic Batching)** (red, right): tokens are processed with a fixed input shape, without dynamic batching.
3. **Replay N tokens in parallel** (blue):
   - The N generated tokens are replayed together in parallel rather than one at a time.
4. **Compare N tokens** (yellow):
   - The replayed tokens are compared against the generated tokens to check that they agree.
5. **Accept matching tokens** (final step):
   - Only tokens that match in the comparison are accepted.
6. **Recompute mismatched tokens**:
   - A feedback loop sends mismatched tokens back to be recomputed.
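The steps above can be sketched as a small generate, replay, compare, accept loop. This is a minimal illustration, not the implementation behind the diagram. All names (`generate_verified`, `matching_prefix_len`, `toy_generate`, `toy_replay`) are hypothetical. Two behaviours are assumptions the diagram does not confirm: tokens are accepted up to the first mismatch, and the replayed token at the first mismatch is taken as the recomputed value before generation resumes.

```python
from typing import Callable, List, Sequence

Token = int
# Hypothetical signatures, chosen for this sketch only.
GenerateFn = Callable[[Sequence[Token], int], List[Token]]             # (context, n) -> n draft tokens
ReplayFn = Callable[[Sequence[Token], Sequence[Token]], List[Token]]   # (context, draft) -> replayed tokens


def matching_prefix_len(draft: Sequence[Token], replayed: Sequence[Token]) -> int:
    """Count the leading positions where draft and replayed tokens agree."""
    count = 0
    for a, b in zip(draft, replayed):
        if a != b:
            break
        count += 1
    return count


def generate_verified(prompt: Sequence[Token], generate: GenerateFn, replay: ReplayFn,
                      n: int, max_new_tokens: int) -> List[Token]:
    """Generate tokens in windows of up to n, keeping only tokens the replay confirms."""
    context = list(prompt)
    produced = 0
    while produced < max_new_tokens:
        step = min(n, max_new_tokens - produced)
        draft = generate(context, step)      # "Generate N tokens auto-regressively"
        replayed = replay(context, draft)    # "Replay N tokens in parallel"
        assert len(replayed) == len(draft), "replay must return one token per draft position"
        k = matching_prefix_len(draft, replayed)  # "Compare N tokens"
        accepted = list(draft[:k])                # "Accept matching tokens"
        if k < len(draft):
            # Assumption: the replayed token replaces the first mismatched one;
            # everything after it is regenerated on the next iteration.
            accepted.append(replayed[k])
        context.extend(accepted)
        produced += len(accepted)
    return context[len(prompt):]


def toy_generate(context: Sequence[Token], n: int) -> List[Token]:
    """Toy generator: emits the position index, but emits -1 when index % 5 == 3."""
    values = [len(context) + i for i in range(n)]
    return [-1 if v % 5 == 3 else v for v in values]


def toy_replay(context: Sequence[Token], draft: Sequence[Token]) -> List[Token]:
    """Toy replay: always emits the position index for every draft position."""
    return [len(context) + i for i in range(len(draft))]


if __name__ == "__main__":
    print(generate_verified([0], toy_generate, toy_replay, n=4, max_new_tokens=8))
```

Because every iteration accepts at least one token (either a confirmed draft token or the replayed token at the first mismatch), the loop always makes progress, even when the first draft token is rejected.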

### Key Observations

- **Dynamic vs. Fixed Batching**: The red boxes highlight two decoding strategies, suggesting trade-offs between flexibility (dynamic) and stability (fixed).

- **Parallel Replay**: The blue box emphasizes parallelism, likely to reduce latency or improve throughput.

- **Feedback Mechanism**: The recomputation step ensures robustness by addressing mismatches.

### Interpretation

The workflow pairs two decoding modes: dynamic batching, which the diagram associates with efficiency, and a fixed input shape, which it associates with reliability. The parallel replay and comparison steps act as a check on the auto-regressively generated tokens, and the recomputation loop handles mismatches iteratively so that only validated tokens are accepted. The diagram most likely depicts a language-model inference pipeline in which generated tokens are verified before being passed to downstream tasks such as text generation or translation.