
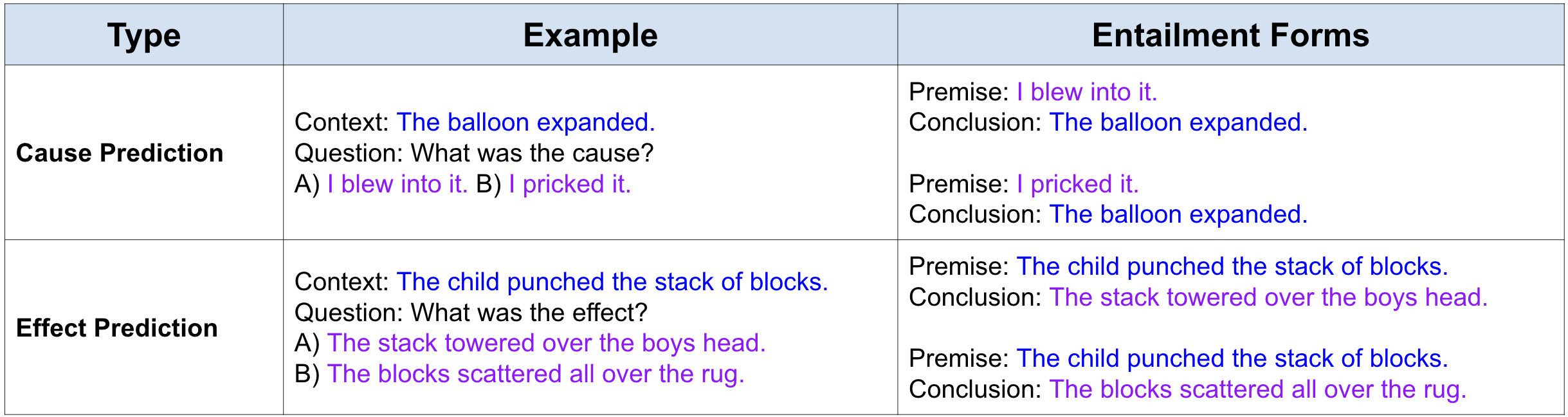

## Table: Cause and Effect Prediction with Entailment Forms

### Overview

The image displays a structured table with three columns and two main data rows. It appears to be an educational or technical illustration defining two types of prediction tasks ("Cause Prediction" and "Effect Prediction") and providing examples of how these tasks can be framed as logical entailment problems. The table uses color-coding (blue and purple text) to distinguish different components of the examples.

### Components/Axes

The table has the following structure:

* **Column Headers (Top Row):**
  * **Type** (Left column)
  * **Example** (Center column)
  * **Entailment Forms** (Right column)
* **Data Rows:** Two rows corresponding to the two "Type" entries.
* **Color Coding:**
  * **Blue Text:** Used for the "Context" sentences and the "Conclusion" sentences in the Entailment Forms.
  * **Purple Text:** Used for the answer options (A, B) in the Example column and the "Premise" sentences in the Entailment Forms.

### Detailed Analysis

The table content is presented below in a structured format:

| Type | Example | Entailment Forms |
|------|---------|------------------|
| Cause Prediction | Context: <span style="color:blue">The balloon expanded.</span><br>Question: What was the cause?<br>A) <span style="color:purple">I blew into it.</span> B) <span style="color:purple">I pricked it.</span> | Premise: <span style="color:purple">I blew into it.</span> Conclusion: <span style="color:blue">The balloon expanded.</span><br>Premise: <span style="color:purple">I pricked it.</span> Conclusion: <span style="color:blue">The balloon expanded.</span> |
| Effect Prediction | Context: <span style="color:blue">The child punched the stack of blocks.</span><br>Question: What was the effect?<br>A) <span style="color:purple">The stack towered over the boy's head.</span> B) <span style="color:purple">The blocks scattered all over the rug.</span> | Premise: <span style="color:purple">The child punched the stack of blocks.</span> Conclusion: <span style="color:blue">The stack towered over the boy's head.</span><br>Premise: <span style="color:purple">The child punched the stack of blocks.</span> Conclusion: <span style="color:blue">The blocks scattered all over the rug.</span> |
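The conversion shown in the table can be sketched in a few lines of Python. This is a minimal sketch, assuming each item is supplied as a context string, a question type (`"cause"` or `"effect"`), and a list of answer options; the function name `to_entailment_pairs` and these input conventions are hypothetical and not taken from the source. The only logic it encodes is the direction visible in the table: for cause questions the option becomes the premise, for effect questions the context does.

```python
from typing import List, Tuple


def to_entailment_pairs(
    context: str, question_type: str, options: List[str]
) -> List[Tuple[str, str]]:
    """Turn one multiple-choice item into (premise, conclusion) pairs, one per option.

    Hypothetical helper: the input format is an assumption for illustration.
    """
    if question_type == "cause":
        # Cause Prediction: each candidate cause is the premise, the observed context is the conclusion.
        return [(option, context) for option in options]
    if question_type == "effect":
        # Effect Prediction: the observed context is the premise, each candidate effect is the conclusion.
        return [(context, option) for option in options]
    raise ValueError(f"unknown question type: {question_type!r}")


cause_pairs = to_entailment_pairs(
    "The balloon expanded.", "cause", ["I blew into it.", "I pricked it."]
)
effect_pairs = to_entailment_pairs(
    "The child punched the stack of blocks.",
    "effect",
    ["The stack towered over the boy's head.", "The blocks scattered all over the rug."],
)

# Print in the same "Premise: ... Conclusion: ..." layout as the table.
for premise, conclusion in cause_pairs + effect_pairs:
    print(f"Premise: {premise} Conclusion: {conclusion}")
```

Returning plain tuples keeps the sketch independent of any particular entailment model or dataset library.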

### Key Observations

1. **Structural Pattern:** Each "Type" is illustrated with a single context, a question, and two possible answers (A and B). These are reformulated into two "Entailment Forms," one per answer option, and the direction depends on the type: for Cause Prediction, the answer option is the premise and the context is the conclusion; for Effect Prediction, the context is the premise and the answer option is the conclusion.

2. **Logical Consistency:** Each question has one plausible answer and one distractor, so in each row one entailment pair does not hold. For Cause Prediction, "I pricked it" does not entail "The balloon expanded" (pricking a balloon typically deflates or pops it); for Effect Prediction, "The child punched the stack of blocks" does not entail "The stack towered over the boy's head." This suggests the table illustrates the *structure* of the task rather than asserting that every pair is valid.

3. **Color Function:** The color coding (blue for context sentences and conclusions, purple for answer options and premises) helps visually map the components between the "Example" and "Entailment Forms" columns.

4. **Spatial Layout:** The "Entailment Forms" column is the widest, accommodating the paired premise-conclusion statements. The "Type" column is the narrowest.

### Interpretation

This table serves as a clear, structured reference for defining and exemplifying two specific natural language processing or reasoning tasks: **Cause Prediction** and **Effect Prediction**.

* **What it demonstrates:** It shows how a multiple-choice question format (Context + Question + Options) can be decomposed into a set of logical entailment pairs. This is a common technique in dataset creation for training or evaluating AI models on causal reasoning.

* **Relationship between elements:** The "Example" column presents the task in a human-readable QA format. The "Entailment Forms" column translates this into a formal, machine-friendly structure where a model must judge whether a premise (the cause) entails a conclusion (the effect).

* **Notable Anomaly:** The second Cause Prediction pair ("I pricked it" → "The balloon expanded") and the first Effect Prediction pair ("The child punched the stack of blocks" → "The stack towered over the boy's head") do not hold in the real world. Both come from the distractor options, so this is likely intentional: the table presents the *form* of the task, not a set of true statements, and the model's job is to distinguish the valid entailment pair from the invalid one.

* **Purpose:** The table is likely from a research paper, technical report, or educational material explaining how to frame causal reasoning problems for computational models. It provides a template for converting intuitive questions into a standardized format suitable for systematic analysis or model training.
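If the pairs are used as the Interpretation suggests, the original multiple-choice answer can be recovered by scoring each premise-conclusion pair and picking the highest-scoring one. The sketch below assumes a scoring function that returns a higher number for a more strongly entailed pair; `choose_option`, `toy_score`, and the score values are hypothetical stand-ins for a trained entailment model, not outputs of any real system.

```python
from typing import Callable, List, Tuple

Pair = Tuple[str, str]  # (premise, conclusion)


def choose_option(pairs: List[Pair], score: Callable[[str, str], float]) -> int:
    """Return the index of the pair the scorer rates as most strongly entailed."""
    scores = [score(premise, conclusion) for premise, conclusion in pairs]
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical toy scorer: illustrative values only, standing in for a model.
TOY_SCORES = {
    ("I blew into it.", "The balloon expanded."): 0.9,
    ("I pricked it.", "The balloon expanded."): 0.1,
}


def toy_score(premise: str, conclusion: str) -> float:
    # Unknown pairs get 0.0 in this toy lookup.
    return TOY_SCORES.get((premise, conclusion), 0.0)


pairs = [
    ("I blew into it.", "The balloon expanded."),
    ("I pricked it.", "The balloon expanded."),
]
best = choose_option(pairs, toy_score)
print(chr(ord("A") + best))  # prints A
```

Taking the argmax over options, rather than thresholding each pair independently, matches the two-option question format: exactly one answer is chosen even if a real scorer rates both pairs low.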