## Diagram: Data Partitioning and Flow for Collaborative Prediction

### Overview

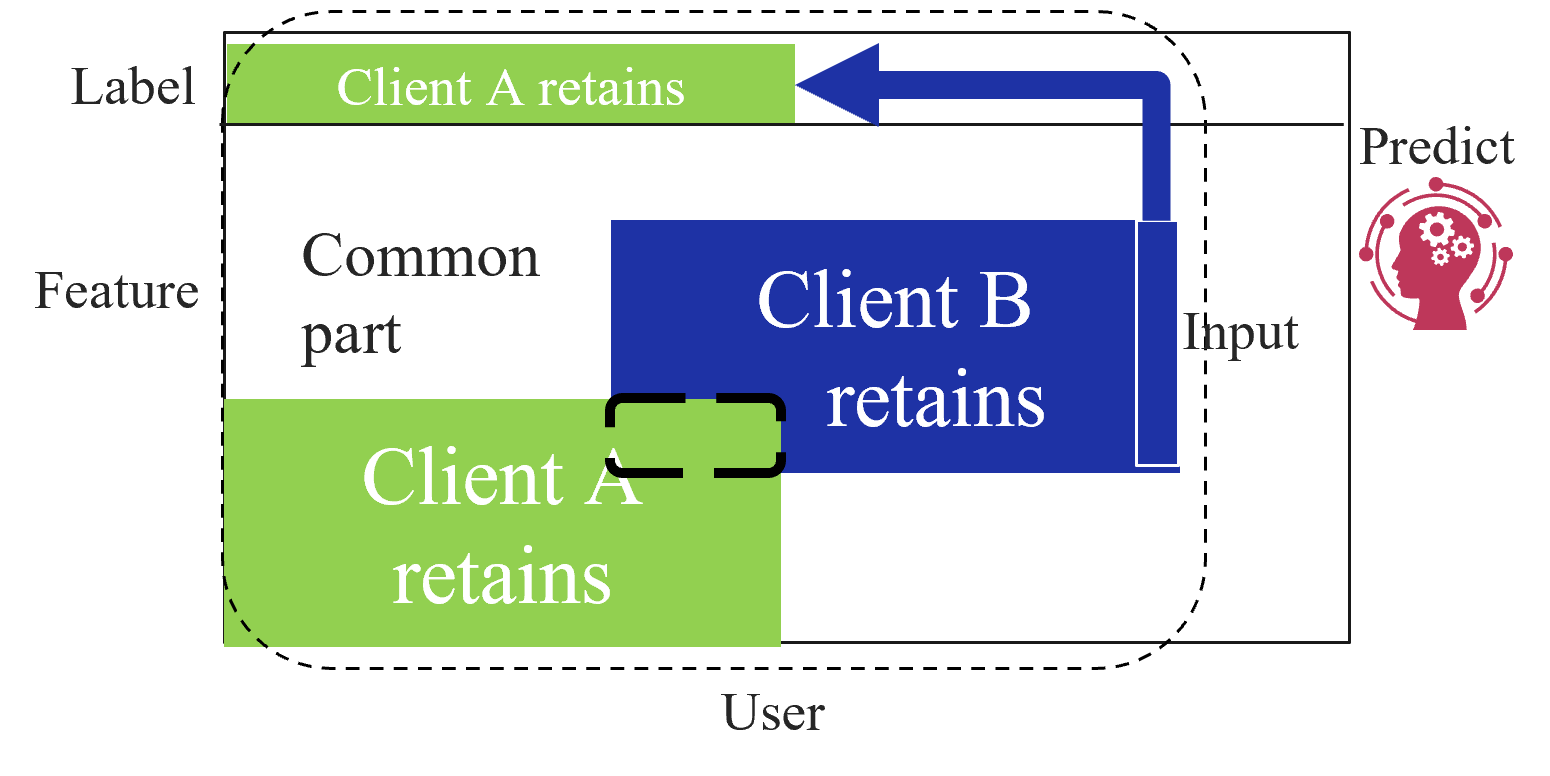

This diagram illustrates a data partitioning and processing scheme involving two clients (Client A and Client B) and a prediction model. It visually represents how a dataset, split into "Feature" and "Label" components, is divided between the two clients, with a "Common part" shared between them. The flow shows data from Client B being used to generate a prediction.

### Components/Axes

The diagram is structured within a rectangular boundary, with a dashed line indicating a "User" domain. The primary labeled regions and elements are:

* **Left Side (Data Domain):**
    * **Label:** A horizontal green bar at the top, labeled "Client A retains".
    * **Feature:** A large area below the Label bar. This area contains:
        * A light gray region labeled "Common part".
        * A large blue rectangle labeled "Client B retains".
        * A large green rectangle at the bottom labeled "Client A retains".
        * A black dashed rectangle highlights the overlap between the "Common part" and the "Client A retains" green rectangle.
* **Right Side (Prediction Domain):**
    * **Predict:** A label next to a red icon depicting a human head silhouette with gears inside, symbolizing a model or AI.
    * **Input:** A label next to a vertical blue bar that is part of the "Client B retains" block.
* **Flow Arrows:**
    * A thick blue arrow originates from the top of the "Client B retains" blue block (near the "Input" label), travels upward, then left, and points into the "Client A retains" green Label bar.
    * A second thick blue arrow originates from the same point on the "Client B retains" block and points directly right to the "Predict" icon.
* **Boundary:**
    * **User:** A label at the bottom center, associated with a dashed line that encloses the entire data partitioning area (Feature, Label, and both clients' retained data) but excludes the "Predict" icon.

### Detailed Analysis

The diagram defines a specific data ownership and flow architecture:

1. **Data Segmentation:** The complete dataset is conceptually divided into two axes:
    * **Feature Axis (Vertical):** The input variables.
    * **Label Axis (Horizontal):** The target variable or outcome.
2. **Client Data Retention:**
    * **Client A retains:** Two distinct green blocks.
        * One block holds the **Label** data (top).
        * Another block holds a portion of the **Feature** data (bottom left).
    * **Client B retains:** One blue block holding a different portion of the **Feature** data (center-right).
    * **Common part:** A region within the Feature space that is accessible to or shared between both clients. It overlaps with Client A's retained Feature block.
3. **Data Flow for Prediction:**
    * The "Input" for the prediction model is derived from the data retained by **Client B**.
    * This input data follows two paths:
        * **Path 1 (Direct Prediction):** The data flows directly to the **Predict** model (red icon).
        * **Path 2 (Label Association):** The data is also sent to the **Label** data retained by **Client A**. This suggests the model's prediction (using Client B's features) is being compared to or trained against the true labels held by Client A.
4. **Spatial Grounding:** The "User" boundary encapsulates the data held by the clients, implying this is the local or private data environment. The "Predict" model resides outside this boundary, indicating it may be a central server or an external service.
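The ownership layout described in this analysis can be sketched as a column-wise split of a toy table. This is only one reading of the diagram: the column names (`age`, `income`, `clicks`, `visits`, `churned`), the records, and the `partition` helper are all hypothetical, since the diagram names regions rather than columns.

```python
# Hypothetical column names; the diagram names only the regions, not the columns.
COMMON_FEATURES = ["age"]                 # gray "Common part"
CLIENT_A_FEATURES = ["income"]            # green Feature block
CLIENT_B_FEATURES = ["clicks", "visits"]  # blue Feature block
LABEL = "churned"                         # green Label bar (Client A only)


def partition(rows):
    """Return (client_a_view, client_b_view) for a list of full records."""
    a_cols = COMMON_FEATURES + CLIENT_A_FEATURES + [LABEL]
    b_cols = COMMON_FEATURES + CLIENT_B_FEATURES
    client_a = [{c: row[c] for c in a_cols} for row in rows]
    client_b = [{c: row[c] for c in b_cols} for row in rows]
    return client_a, client_b


# Made-up records, for illustration only.
rows = [
    {"age": 34, "income": 52000, "clicks": 12, "visits": 3, "churned": 0},
    {"age": 51, "income": 78000, "clicks": 2, "visits": 1, "churned": 1},
]
client_a, client_b = partition(rows)
shared = set(client_a[0]) & set(client_b[0])
print(sorted(shared))        # columns visible to both clients
print(LABEL in client_b[0])  # whether Client B ever sees the label
```

Under this reading, the only columns both views contain are the common ones, and the label column appears in Client A's view alone.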

### Key Observations

* **Asymmetric Data Holding:** Client A holds both label data and some feature data, while Client B holds only feature data. This is a common setup in vertical federated learning.

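* **Critical Data Pathway:** The blue arrow from Client B's data to Client A's label data is the key link in the diagram. It most plausibly represents the step that associates features held by one party with labels held by the other. The diagram does not show what is actually transmitted along this path, so whether raw data is shared cannot be determined from the figure alone.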

* **Common Feature Space:** The "Common part" indicates an overlap in the feature sets held by the two clients, which may be necessary for aligning their data samples.

* **Model Externalization:** The prediction model is positioned outside the user/client domain, highlighting a separation between data holders and the model executor.
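To illustrate how a common part could be used to align records, the following minimal sketch joins two clients' rows on a salted hash of their shared columns. The diagram does not say how alignment is performed, so everything here is an assumption: the `join_key` and `align` helpers, the column names, and the salt are hypothetical, and a salted hash is a stand-in, not a secure private set intersection protocol. Both row lists are passed to one function only because this simulates the exchange in a single process.

```python
import hashlib


def join_key(record, common_cols, salt):
    """Hash the shared column values so rows can be matched without comparing them in the clear."""
    raw = "|".join(str(record[c]) for c in common_cols)
    return hashlib.sha256((salt + raw).encode("utf-8")).hexdigest()


def align(client_a_rows, client_b_rows, common_cols, salt="shared-salt"):
    """Return (row_a, row_b) pairs whose shared columns hash to the same key."""
    index_b = {join_key(r, common_cols, salt): r for r in client_b_rows}
    pairs = []
    for row_a in client_a_rows:
        key = join_key(row_a, common_cols, salt)
        if key in index_b:
            pairs.append((row_a, index_b[key]))
    return pairs


# Made-up records; only the second row of Client A has a match at Client B.
client_a_rows = [
    {"age": 34, "zip": "94110", "churned": 0},
    {"age": 51, "zip": "10001", "churned": 1},
]
client_b_rows = [
    {"age": 51, "zip": "10001", "clicks": 2},
    {"age": 29, "zip": "60601", "clicks": 7},
]
pairs = align(client_a_rows, client_b_rows, common_cols=["age", "zip"])
for row_a, row_b in pairs:
    print(row_a["churned"], row_b["clicks"])
```

A real deployment would exchange only the keys, not the rows, and would likely need a stronger protocol, because low-entropy values such as ages can be recovered from hashes by brute force.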

### Interpretation

The diagram is consistent with a **Vertical Federated Learning** or **Split Learning** scenario, in which parties collaboratively train or use a machine learning model without sharing raw data.

* **What it demonstrates:** It shows how two organizations (Client A and Client B) can combine their respective data to make a prediction: Client A holds the labels and some features, and Client B holds complementary features. Client B's retained data supplies the "Input" sent to the model; whether that input is raw features or an intermediate representation is not shown. The second arrow, toward Client A's Label bar, suggests that the input or the resulting prediction is associated with Client A's labels for training or evaluation.

* **How elements relate:** The architecture appears designed to support privacy-preserving collaboration. The "Common part" likely serves to align the two clients' records. In the flow, Client B's features drive the prediction while Client A's labels provide the ground truth, so neither party needs to expose its complete dataset to the other or, necessarily, to the model server.

* **Notable Anomalies/Considerations:** The diagram is a high-level schematic. It abstracts away critical technical details such as the encryption methods used for the data transfer, the specific machine learning algorithm, how the "Common part" is established, and the exact nature of the "Input" (e.g., raw features, embeddings, or gradients). The security of the blue arrow pathway is paramount in a real-world implementation.
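One possible reading of the flow, in which Client B produces the input, an external model predicts, and Client A scores the prediction against its label, can be sketched as follows. This is an assumption-laden illustration, not the method behind the diagram: the three function names, the weighted-sum input, the logistic model, and the cross-entropy loss are all hypothetical choices, and no encryption is modeled.

```python
import math


def client_b_make_input(features, weights):
    """Client B: reduce its private features to the 'Input' it sends onward (here a weighted sum)."""
    return sum(w * x for w, x in zip(weights, features))


def server_predict(model_input, bias=0.0):
    """Model outside the 'User' boundary: map the input to a probability with a logistic function."""
    return 1.0 / (1.0 + math.exp(-(model_input + bias)))


def client_a_loss(prediction, label):
    """Client A: compare the prediction with the label it retains (binary cross-entropy)."""
    eps = 1e-12
    p = min(max(prediction, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


# Made-up values: Client B's features and weights never leave Client B in this sketch.
model_input = client_b_make_input(features=[1.0, 2.0], weights=[0.5, -0.25])
prediction = server_predict(model_input)
loss = client_a_loss(prediction, label=1)
print(model_input, prediction, loss)
```

Only the scalar `model_input` crosses from Client B to the model, and only the prediction reaches Client A, which is the kind of separation the diagram implies. A single scalar can still leak information about the underlying features, which is one reason the protection of that pathway matters.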