## Diagram: AI System Architectures Comparison

### Overview

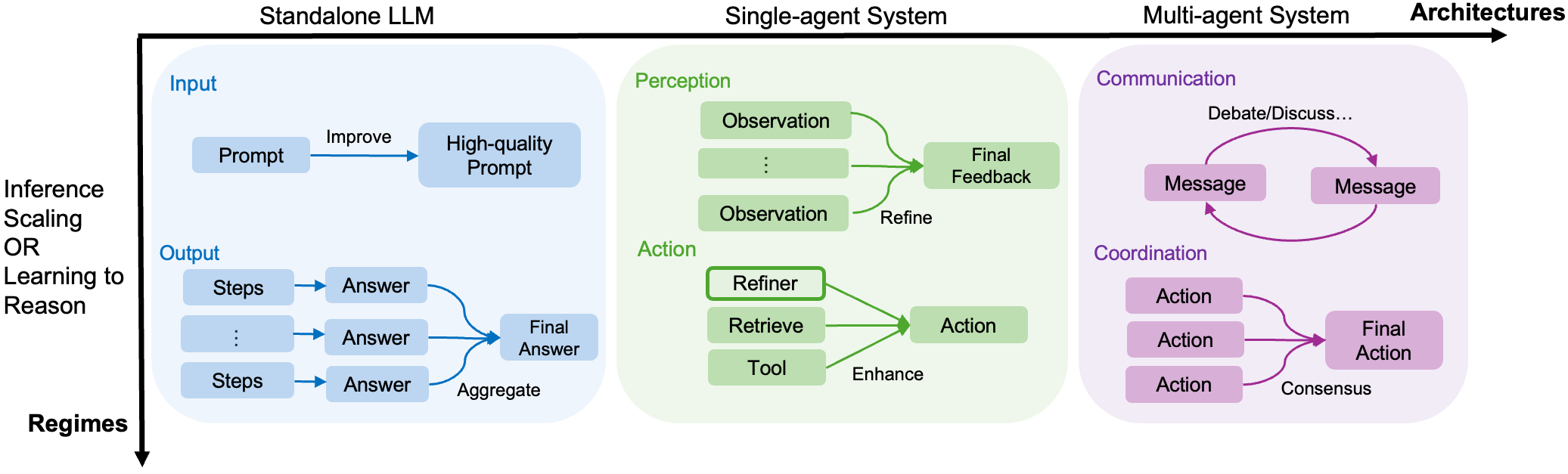

The diagram illustrates three progressive AI system architectures: **Standalone LLM**, **Single-agent System**, and **Multi-agent System**, arranged horizontally from left to right. Each architecture is represented by a colored box (blue, green, purple) with internal components and directional flows. The vertical axis is labeled **"Inference Scaling OR Learning to Reason"**, while the horizontal axis is labeled **"Architectures"**.

---

### Components/Axes

#### Vertical Axis (Y-axis):

- **Labels**:

  - "Inference Scaling OR Learning to Reason" (bottom to top).

- **Positioning**:

  - Spans the entire height of the diagram, with text aligned to the left.

#### Horizontal Axis (X-axis):

- **Labels**:

  - Category labels: "Standalone LLM" (leftmost), "Single-agent System" (middle), "Multi-agent System" (rightmost).

  - Axis title: "Architectures" (far right).

- **Positioning**:

  - Text aligned to the top, with arrows pointing rightward.

#### Key Sections:

1. **Standalone LLM (Blue Box)**:

   - **Input**: "Prompt" → "Improve" → "High-quality Prompt".

   - **Output**: Multiple "Steps" → "Answer" pairs aggregated into a "Final Answer".

   - **Flow**: Linear progression from input to output.

2. **Single-agent System (Green Box)**:

   - **Perception**:

     - "Observation" → "Final Feedback" (with refinement loops).

   - **Action**:

     - "Refiner" → "Retrieve" → "Tool" → "Enhance" → "Action".

   - **Flow**: Cyclical refinement in perception and tool-enhanced action.

3. **Multi-agent System (Purple Box)**:

   - **Communication**:

     - "Message" ↔ "Message" (debate/discussion loop).

   - **Coordination**:

     - Three "Action" nodes → "Final Action" via consensus.

   - **Flow**: Collaborative decision-making with iterative messaging.

---

### Detailed Analysis

#### Standalone LLM (Blue):

- **Input Processing**:

  - The prompt is improved into a "High-quality Prompt" before any output is generated.

- **Output Aggregation**:

  - Several reasoning chains each run from "Steps" to an "Answer"; the answers are then aggregated into a single "Final Answer".
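
The standalone flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram does not say how the prompt is improved or how answers are aggregated, so `improve_prompt`, the majority-vote rule in `aggregate`, and the stand-in model `fake_generate` are all hypothetical.

```python
from collections import Counter
from typing import Callable, List


def improve_prompt(prompt: str) -> str:
    """Hypothetical 'Improve' step: wrap the raw prompt with explicit instructions."""
    return f"Think step by step, then state the final answer.\nQuestion: {prompt.strip()}"


def aggregate(answers: List[str]) -> str:
    """Combine candidate answers by majority vote (an assumed aggregation rule)."""
    counts = Counter(answer.strip().lower() for answer in answers)
    return counts.most_common(1)[0][0]


def standalone_llm(prompt: str, generate: Callable[[str, int], str], n_samples: int = 5) -> str:
    """Prompt -> high-quality prompt -> several (steps, answer) runs -> final answer."""
    high_quality_prompt = improve_prompt(prompt)
    answers = [generate(high_quality_prompt, seed) for seed in range(n_samples)]
    return aggregate(answers)


def fake_generate(prompt: str, seed: int) -> str:
    """Stand-in for a model call: answers '4' except when seed is 3."""
    return "5" if seed == 3 else "4"


final_answer = standalone_llm("What is 2 + 2?", fake_generate)
print(final_answer)  # prints 4
```

The flow has no loop: every stage runs once, which matches the "linear progression" noted for this box. A real system would replace `fake_generate` with an actual model call.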

#### Single-agent System (Green):

- **Perception Loop**:

  - Observations are refined through feedback to improve decision-making.

- **Action Enhancement**:

  - Tools are retrieved and used to enhance actions, emphasizing adaptability.
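
A minimal sketch of one single-agent step follows, assuming a very simple reading of the two halves: perception folds feedback into the observation, and action looks up a tool by name and applies it. The function names, the name-matching retrieval rule, and the toy `calculator` tool are illustrative assumptions, not details taken from the diagram.

```python
import re
from typing import Callable, Dict, List


def refine_observation(observation: str, feedback: List[str]) -> str:
    """Perception: fold each feedback note into the observation (assumed rule)."""
    for note in feedback:
        observation = f"{observation} [{note}]"
    return observation


def retrieve_tool(observation: str, tools: Dict[str, Callable[[str], str]]) -> str:
    """Action: pick the first tool whose name appears in the observation, else 'none'."""
    for name in tools:
        if name in observation:
            return name
    return "none"


def single_agent_step(observation: str, feedback: List[str],
                      tools: Dict[str, Callable[[str], str]]) -> str:
    """Refine the observation, retrieve a tool, and return the tool-enhanced action."""
    refined = refine_observation(observation, feedback)
    tool_name = retrieve_tool(refined, tools)
    if tool_name == "none":
        return f"answer directly: {refined}"
    return f"{tool_name} -> {tools[tool_name](refined)}"


def calculator(text: str) -> str:
    """Toy tool: multiply the first 'a * b' pair found in the text."""
    match = re.search(r"(\d+)\s*\*\s*(\d+)", text)
    if match is None:
        return "no expression found"
    return str(int(match.group(1)) * int(match.group(2)))


tools = {"calculator": calculator}
action = single_agent_step("compute 6 * 7", ["use the calculator"], tools)
print(action)  # prints calculator -> 42
```

In this sketch the feedback is what makes the tool reachable: without the note "use the calculator", retrieval finds no match and the agent falls back to answering directly.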

#### Multi-agent System (Purple):

- **Communication**:

  - Agents exchange messages iteratively in a debate/discussion loop to resolve disagreements.

- **Coordination**:

  - Multiple agents propose actions, which are consolidated into a single "Final Action" via consensus.
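
The communication and coordination stages can be sketched as a round-based message exchange followed by a vote. The diagram does not specify a debate protocol or a consensus rule, so the synchronous rounds, the majority vote, and the `stubborn` and `follower` agent types below are assumptions made for illustration.

```python
from collections import Counter
from typing import Callable, List

# An agent maps its peers' latest messages to its own proposed action.
Agent = Callable[[List[str]], str]


def debate(agents: List[Agent], rounds: int) -> List[str]:
    """Run message rounds: each agent sees the others' latest messages and replies."""
    messages = [agent([]) for agent in agents]  # opening proposals, no peers seen yet
    for _ in range(rounds):
        messages = [
            agent(messages[:i] + messages[i + 1:])
            for i, agent in enumerate(agents)
        ]
    return messages


def consensus(actions: List[str]) -> str:
    """Consolidate proposed actions by majority vote (an assumed consensus rule)."""
    return Counter(actions).most_common(1)[0][0]


def stubborn(choice: str) -> Agent:
    """Hypothetical agent that always proposes the same action."""
    def agent(peer_messages: List[str]) -> str:
        return choice
    return agent


def follower(default: str) -> Agent:
    """Hypothetical agent that adopts the most common peer proposal."""
    def agent(peer_messages: List[str]) -> str:
        if not peer_messages:
            return default
        return Counter(peer_messages).most_common(1)[0][0]
    return agent


agents = [stubborn("search"), stubborn("search"), follower("wait")]
actions = debate(agents, rounds=2)
final_action = consensus(actions)
print(actions, final_action)  # prints ['search', 'search', 'search'] search
```

Three agents are used here to mirror the three "Action" nodes in the figure. The follower starts with "wait" and switches to "search" after the first round, so the vote is unanimous by the time consensus is taken.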

---

### Key Observations

1. **Complexity Progression**:

   - Standalone LLM is the simplest (linear input-output).

   - Single-agent introduces feedback loops and tool use.

   - Multi-agent adds collaboration and consensus mechanisms.

2. **Flow Direction**:

   - The two agent-based systems are labeled with iterative steps (e.g., "Refine," "Debate/...," "Consensus"), whereas the Standalone LLM flow runs once from prompt to final answer.

3. **Color Coding**:

   - Blue (Standalone), Green (Single-agent), Purple (Multi-agent) visually distinguish architectures.

---

### Interpretation

This diagram presents AI systems as a progression from a single model answering in isolation (Standalone LLM) to collaborative, adaptive frameworks (Multi-agent). The vertical axis label ("Inference Scaling OR Learning to Reason") names two routes to stronger reasoning that apply to each architecture: spending more computation at inference time, or training the model to reason. The diagram does not quantify either route.

- **Standalone LLM**: Suited to tasks a single model can solve from the prompt alone, without tools or external feedback.

- **Single-agent**: Balances autonomy with adaptability via tool use and feedback.

- **Multi-agent**: Prioritizes collective intelligence, ideal for complex, dynamic environments requiring coordination.

The progression moves from a single model's decision to a decision distributed across several agents and settled by consensus.